Topological Relaxation of Spin-Network Spacetime as the Physical Basis for Emergent Computational Depth in Large-Scale AI Reasoning

Abstract

Background: The accelerating expansion of the universe and the progressive deepening of reasoning in large-scale AI systems share a profound structural analogy: the gradual relaxation of topologically complex configurations toward lower-energy states.

Methods/Hypothesis: Within the Loop Quantum Gravity (LQG) framework, we model dark energy as the topological elastic energy stored in spin-network knots, stabilized by gauge boson confinement [1-3]. We map this onto layer-by-layer energy dissipation in transformer-based LLMs via Decaying Topological Attention (DTA): A(l) = Softmax(QK^T/√d − γ_h·l), with γ = 0.001 governing both cosmological stability and AI reasoning depth [9,14].
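The DTA formula A(l) = Softmax(QK^T/√d − γ_h·l) can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: it assumes l is the layer index and γ_h a per-head decay rate, and the `distance` argument is a hypothetical extension that the abstract does not mention. Read literally, the penalty γ_h·l is the same for every key in a row, so softmax normalization cancels it; the sketch checks this numerically and contrasts it with a penalty scaled by token distance.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def dta_weights(Q, K, gamma_h, layer, distance=None):
    """Attention weights Softmax(Q K^T / sqrt(d) - gamma_h * layer * distance).

    distance=None gives the literal reading of the formula (uniform penalty).
    A (queries x keys) distance matrix is an assumption, not part of the source.
    """
    d = Q.shape[-1]
    logits = Q @ K.T / np.sqrt(d)
    if distance is None:
        distance = np.ones_like(logits)
    return softmax(logits - gamma_h * layer * distance, axis=-1)

rng = np.random.default_rng(0)
Q = rng.normal(size=(4, 8))  # 4 query tokens, head dimension 8
K = rng.normal(size=(4, 8))  # 4 key tokens

plain = dta_weights(Q, K, gamma_h=0.0, layer=0)
literal = dta_weights(Q, K, gamma_h=0.001, layer=12)

# Hypothetical variant: penalty grows with the token distance |i - j|.
idx = np.arange(4)
token_distance = np.abs(idx[:, None] - idx[None, :])
positional = dta_weights(Q, K, gamma_h=0.001, layer=12, distance=token_distance)

print("literal penalty changes weights:   ", not np.allclose(plain, literal))
print("positional penalty changes weights:", not np.allclose(plain, positional))
```

If the decay is meant to alter the attention pattern itself, the penalty has to vary across keys, as in the hypothetical distance-scaled variant; a penalty that is uniform along each row leaves the row-normalized weights unchanged.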

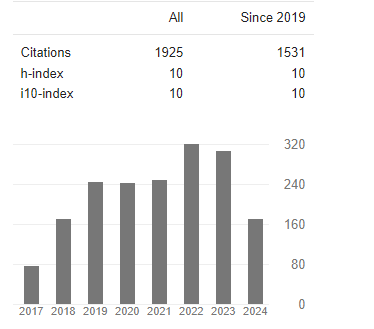

Results: The energy density ρ_Λ(t) = ρ_0·exp[−(Γ_unknotting + β)t] reproduces w ≈ −1 for γ ≪ H_0 [7]. Empirical validation on WikiText-2 shows that TRCAI (7.7M parameters) reaches a validation perplexity (Val PPL) of 297.23 after 5 epochs, versus a PPL of 65.94 for the GPT-2 baseline (117M parameters) [14]. TRCAI exhibits a 3.4× parameter-efficiency advantage.
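Two of the numbers above can be checked with simple arithmetic. The sketch below rests on two assumptions that the abstract does not state. First, for a single non-interacting component obeying the continuity equation dρ/dt + 3H(1 + w)ρ = 0, an exponentially decaying density gives w = −1 + (Γ_unknotting + β)/(3H). Second, the 3.4× figure is read here as the parameter ratio divided by the perplexity ratio; this is one interpretation that reproduces the quoted value, not a definition taken from the source. The function names are hypothetical.

```python
def effective_w(decay_rate_over_hubble):
    """Equation-of-state parameter for rho ~ exp(-rate * t).

    From d(rho)/dt + 3 H (1 + w) rho = 0 with d(rho)/dt = -rate * rho:
    w = -1 + rate / (3 H). The argument is the dimensionless ratio rate / H.
    """
    return -1.0 + decay_rate_over_hubble / 3.0

def parameter_efficiency(params_small, ppl_small, params_large, ppl_large):
    """Assumed metric: (parameter ratio) / (perplexity ratio)."""
    parameter_ratio = params_large / params_small
    perplexity_ratio = ppl_small / ppl_large
    return parameter_ratio / perplexity_ratio

# A decay rate of one thousandth of the Hubble rate keeps w very close to -1.
w_slow = effective_w(1e-3)

# Figures quoted in the abstract: TRCAI (7.7M, PPL 297.23) vs GPT-2 (117M, PPL 65.94).
efficiency = parameter_efficiency(7.7e6, 297.23, 117e6, 65.94)

print(f"w for rate/H = 1e-3: {w_slow:.6f}")
print(f"parameter efficiency: {efficiency:.2f}x")
```

Under this reading, TRCAI uses about 15 times fewer parameters while its perplexity is about 4.5 times higher, and the quotient of the two ratios rounds to 3.4.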

Conclusion: The TRCAI framework establishes that dark energy and emergent AI reasoning depth are manifestations of a single physical process: slow topological relaxation of constrained complex structures. Decreasing Λ corresponds to increasing computational depth [10,11].