Research Article - (2026) Volume 5, Issue 1

Machine Learning-Enhanced CI/CD Pipelines in Kubernetes Environments: An Empirical Study

2 Independent Researcher, London, United Kingdom

Received Date: Feb 16, 2026 / Accepted Date: Mar 23, 2026 / Published Date: Apr 09, 2026

Copyright: ©2026 Sachin Shivaji Raut, et al. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Citation: Raut, S. S., Raut, S. S. (2026). Machine Learning-Enhanced CI/CD Pipelines in Kubernetes Environments: An Empirical Study. J Curr Trends Comp Sci Res, 5(1), 01-18.

Abstract

The increasing adoption of cloud-native architectures and Kubernetes for software deployment complicates the maintenance of robust and efficient continuous integration and continuous deployment (CI/CD) pipelines. While machine learning (ML) holds promise for enhancing these processes, empirical investigation into its practical application and outcomes in real-world settings remains an area of active inquiry. This mixed-methods study examines the implementation strategies and perceived efficacy of ML-enhanced CI/CD frameworks within Kubernetes-based environments. Data were collected through surveys and interviews with 127 DevOps engineers, site reliability engineers, and cloud architects from twelve mid- to large-scale Software-as-a-Service organizations. Preliminary findings indicate that organizations integrating ML into their CI/CD pipelines observed an approximately 34% reduction in deployment failure rates and a 42% improvement in mean time to recovery compared with traditional approaches, with both figures being self-reported. These results suggest potential advantages of ML integration for operational resilience and efficiency. However, a deeper understanding of contextual factors and long-term implications is warranted, and the findings should be interpreted cautiously given the scope and specific characteristics of the studied organizations.

Keywords

DevOps, Continuous Integration, Continuous Deployment, Machine Learning, Kubernetes, Cloud-Native Architecture, Site Reliability Engineering, Microservices

Introduction

Background and Context

The pervasive adoption of cloud-native architectures and containerized environments, especially those leveraging Kubernetes orchestration platforms, has fundamentally reshaped contemporary software development and deployment paradigms. Within the Software-as-a-Service (SaaS) domain, Continuous Integration and Continuous Deployment (CI/CD) pipelines are regarded as indispensable for managing the rapid iteration and dynamic operational demands of modern digital markets. However, the inherent complexities introduced by distributed systems, microservices architectures, and dynamic resource allocation within these environments often strain the capabilities of conventional CI/CD approaches.

While traditional DevOps methodologies have provided a robust framework for automating software delivery, they frequently encounter limitations in effectively scaling across large enterprise environments characterized by numerous microservices and intricate dependency networks [1]. The integration of Machine Learning (ML) methodologies within DevOps practices has been posited as a potential avenue for addressing these limitations, purportedly offering adaptive decision-making, predictive analytics, and enhanced optimization of software delivery processes [2]. However, the specific mechanisms, implementation patterns, and quantifiable benefits of ML integration in this context remain an area requiring rigorous empirical investigation.

The theoretical premise of intelligent CI/CD systems is that they could leverage historical deployment data, performance metrics, and failure patterns to inform decisions about release strategies, resource allocation, and risk mitigation. Such systems might reduce manual intervention and potentially improve system reliability and deployment velocity [3]. Nevertheless, these are largely theoretical aspirations, and comprehensive empirical evidence supporting these claims, particularly within diverse real-world Kubernetes deployments, is still nascent.
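To make this premise concrete, the sketch below trains a small logistic-regression classifier on historical deployment records and uses the predicted failure probability to gate a release. It is an illustration under stated assumptions, not a method used by the studied organizations: the feature set (`lines_changed / 1000`, `services_touched`, `off_hours`), the eight invented training records, and the 0.5 threshold are all hypothetical.

```python
import math

# Hypothetical historical deployments (invented for illustration):
# ([lines_changed / 1000, services_touched, off_hours], failed)
HISTORY = [
    ([0.1, 1, 0], 0),
    ([0.2, 1, 0], 0),
    ([0.5, 2, 0], 0),
    ([0.3, 1, 1], 0),
    ([0.4, 2, 0], 0),
    ([1.5, 4, 1], 1),
    ([2.0, 5, 1], 1),
    ([1.8, 3, 0], 1),
]


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_logistic(data, lr=0.3, epochs=5000):
    """Fit logistic-regression weights and bias by batch gradient descent on log-loss."""
    n = len(data[0][0])
    w, b = [0.0] * n, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n, 0.0
        for x, y in data:
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for i in range(n):
                grad_w[i] += err * x[i]
            grad_b += err
        w = [wi - lr * g / len(data) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / len(data)
    return w, b


def failure_probability(w, b, x) -> float:
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def release_gate(w, b, x, threshold=0.5) -> str:
    """Route changes whose predicted risk meets the (assumed) threshold to a human reviewer."""
    return "manual-review" if failure_probability(w, b, x) >= threshold else "auto-deploy"


if __name__ == "__main__":
    w, b = train_logistic(HISTORY)
    print(release_gate(w, b, [0.2, 1, 0]))  # small, single-service change
    print(release_gate(w, b, [1.9, 5, 1]))  # large, multi-service, off-hours change
```

Routing high-risk changes to a reviewer, rather than blocking them outright, keeps a human in the loop, consistent with the paper's emphasis on augmenting rather than replacing operator judgment.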

Figure 1: Kubernetes Cluster Architecture in Cloud-Native CI/CD Environment

The diagram illustrates a multi-layered Kubernetes infrastructure showing: (a) the control plane at the top managing cluster orchestration, (b) multiple worker nodes containing containerized pods and microservices in the center layer, (c) CI/CD pipeline integration components on the sides, (d) underlying cloud infrastructure layers at the bottom, and (e) network connections and data flow paths indicated by arrows. This architecture represents the foundation for implementing machine learning-enhanced deployment automation and intelligent resource management discussed throughout this research.

Research Problem

Despite significant advances in CI/CD pipeline technologies and the broad application of machine learning across engineering domains, organizations running Kubernetes-based DevOps practices in production cloud environments continue to face persistent challenges. Existing approaches often fail to address the complexity of these highly dynamic, data-rich ecosystems. Contemporary CI/CD pipelines in cloud-native environments generate substantial operational data, including deployment metrics, performance indicators, failure logs, and resource utilization statistics. Yet organizations commonly struggle to turn this data into robust predictive analytics and proactive system optimization.

The intricate interdependency networks characteristic of modern microservices architectures, particularly when deployed on Kubernetes, often exceed the capacity of human operators to comprehend and manage them fully. Traditional deployment strategies frequently fail to account for the dynamic nature of these dependencies, which can lead to unpredictable failures, performance degradation, and prolonged recovery times [4]. Furthermore, optimizing resource utilization within Kubernetes clusters is a significant challenge for organizations operating at scale: manual allocation decisions can result either in over-provisioning, which wastes capacity and raises costs, or in under-provisioning, which degrades performance and reliability.
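One simple data-driven alternative to manual sizing is to derive a container's CPU request from a high percentile of observed usage plus a safety margin, and then flag requests that deviate strongly from it. The sketch below illustrates that heuristic only. The 90th percentile, the 15% headroom, the 25% tolerance, and the sample values are assumptions for illustration. They are not parameters reported by the studied organizations or taken from any specific autoscaler.

```python
def nearest_rank_percentile(values, pct):
    """Nearest-rank percentile for integer pct in 1..100 (integer math avoids float rounding)."""
    ordered = sorted(values)
    rank = -(-pct * len(ordered) // 100)  # ceil(pct * n / 100)
    return ordered[max(rank, 1) - 1]


def recommend_cpu_millicores(usage_samples, pct=90, headroom_pct=15):
    """Hypothetical sizing rule: usage percentile plus a percentage safety margin, rounded up."""
    base = nearest_rank_percentile(usage_samples, pct)
    return -(-base * (100 + headroom_pct) // 100)  # ceil(base * (1 + headroom))


def classify_request(current, recommended, tolerance_pct=25):
    """Label an existing CPU request relative to the recommendation."""
    if current * 100 > recommended * (100 + tolerance_pct):
        return "over-provisioned"
    if current * 100 < recommended * (100 - tolerance_pct):
        return "under-provisioned"
    return "right-sized"


if __name__ == "__main__":
    samples = [120, 135, 150, 160, 170, 180, 190, 210, 240, 400]  # hypothetical usage (millicores)
    rec = recommend_cpu_millicores(samples)
    print(rec, classify_request(1000, rec), classify_request(100, rec))
```

With these sample values the 90th-percentile usage is 240 millicores, so the recommended request is 276. The single 400-millicore spike does not inflate the recommendation. Whether ignoring such spikes is acceptable depends on the workload's tolerance for throttling.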

DevOps teams often experience an operational burden due to the persistent need for manual intervention throughout CI/CD processes. While automation has mitigated many routine tasks, complex decision-making scenarios, such as anomaly detection, root cause analysis, and optimal rollback strategies, frequently necessitate human involvement. This dependency introduces potential bottlenecks in deployment pipelines, limits achievable deployment frequency, and may introduce inconsistencies due to human error [5]. This suggests a critical need for more intelligent, data-driven approaches to augment, rather than merely automate, CI/CD operations.
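As a minimal example of the anomaly-detection step that still often requires human judgment, the sketch below compares a post-deployment error rate against a baseline window and recommends a rollback when the deviation is extreme. The z-score rule, the threshold of three standard deviations, and the sample error rates are illustrative assumptions. The ML-enhanced systems discussed in this study may rely on considerably richer models.

```python
import math
import statistics


def z_score(baseline, observed):
    """Standard score of `observed` relative to a baseline sample (needs at least 2 points)."""
    mean = statistics.mean(baseline)
    stdev = statistics.stdev(baseline)
    if stdev == 0:
        return 0.0 if observed == mean else math.copysign(math.inf, observed - mean)
    return (observed - mean) / stdev


def rollback_decision(baseline_error_rates, post_deploy_error_rate, threshold=3.0):
    """One-sided rule: only an unusually high error rate triggers a rollback."""
    z = z_score(baseline_error_rates, post_deploy_error_rate)
    return "rollback" if z > threshold else "keep"


if __name__ == "__main__":
    baseline = [0.010, 0.012, 0.011, 0.009, 0.010, 0.011, 0.012, 0.010]  # hypothetical
    print(rollback_decision(baseline, 0.011))
    print(rollback_decision(baseline, 0.050))
```

A fixed statistical threshold like this is easy to audit but ignores seasonality and dependencies between services, which is one reason the literature looks to learned models for this task.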

Research Gap

While theoretical frameworks and anecdotal evidence suggest the potential benefits of integrating machine learning into CI/CD pipelines, a significant gap exists in empirical research systematically investigating its practical application, specific implementation patterns, and quantifiable performance outcomes within production Kubernetes environments. Most existing literature tends to focus on individual ML techniques or conceptual models, lacking comprehensive mixed-methods studies that capture both the technical implementation nuances and the perceived organizational impacts in real-world settings. There is a particular scarcity of studies that critically assess the reported improvements against traditional methods, identify common challenges during adoption, or explore the contextual factors influencing success or failure.

Research Questions

This study aims to address the aforementioned research problem and gap by exploring the integration of Machine Learning within CI/CD pipelines in Kubernetes environments. Specifically, this research seeks to answer the following questions:

i. What are the prevalent implementation strategies and architectures adopted by organizations integrating Machine Learning into their CI/CD pipelines within Kubernetes-based environments?

ii. What perceived efficacy and performance outcomes (e.g., deployment failure rates, mean time to recovery, resource optimization) are reported by organizations utilizing ML-enhanced CI/CD frameworks compared to traditional approaches?

iii. What are the key challenges and facilitating factors encountered by DevOps teams during the adoption and operation of ML-enhanced CI/CD systems in Kubernetes environments?

Research Objectives

This research investigation aims to critically examine the implementation, operation, and perceived effectiveness of machine learning-driven CI/CD systems within Kubernetes-based cloud environments, particularly as operated by mid- to large-scale SaaS organizations. This overarching objective is supported by several specific aims:

i. To analyze the current state and prevalence of machine learning integration within CI/CD pipelines in production Kubernetes environments, including an investigation into the types of techniques employed and their observed or reported use cases.

ii. To critically evaluate the reported impact and potential influence of machine learning-driven approaches on traditional DevOps metrics, such as deployment frequency, lead time for changes, deployment failure rate, and mean time to recovery.

iii. To identify and categorize the primary challenges and barriers associated with implementing and maintaining machine learning-driven CI/CD systems in production environments.

iv. To explore the evolving roles and responsibilities of DevOps engineers, site reliability engineers, and cloud architects within organizations that have adopted or are considering intelligent CI/CD systems.

v. To propose a preliminary framework for assessing the effectiveness of machine learning-driven DevOps systems, acknowledging limitations of traditional metrics.

vi. To synthesize emerging best practices and contributing factors for the successful implementation of machine learning-driven CI/CD systems in Kubernetes-based environments, recognizing context-specific variations.

Research Questions

The research questions guiding this investigation are structured to address the multifaceted nature of machine learning-driven CI/CD systems' implementation and operation within Kubernetes-based cloud environments:

Primary Research Question: To what extent do machine learning-driven approaches appear to enhance the effectiveness and resilience of CI/CD pipelines in Kubernetes-based cloud environments, particularly within mid- to large-scale SaaS organizations?

Supporting Research Questions

RQ1: What specific machine learning techniques and methodologies are reportedly employed within CI/CD pipelines in production Kubernetes environments, and what factors seem to influence their selection and implementation?

RQ2: How do reports and empirical observations suggest machine learning-driven CI/CD systems impact traditional DevOps performance metrics, including deployment frequency, lead time, deployment failure rate, and mean time to recovery?

RQ3: What are the primary challenges and barriers that DevOps engineers, site reliability engineers, and cloud architects typically encounter when implementing and operating machine learning-driven CI/CD systems?

RQ4: How might the roles and responsibilities of DevOps professionals evolve within organizations that implement machine learning-driven CI/CD systems, and what new competencies may become necessary for effective system operation?

RQ5: What architectural patterns and integration approaches are reported or observed to be effective for incorporating machine learning capabilities within existing Kubernetes-based CI/CD infrastructure, and what are their associated trade-offs?

Significance of the Study

The potential significance of this research investigation is anticipated to extend across several dimensions, encompassing academic contributions, implications for industry practice, and insights into technological and professional development. From an academic perspective, this study aims to contribute to the understanding of the interdisciplinary intersection of software engineering, machine learning, cloud computing, and organizational behavior. It endeavors to extend existing theoretical frameworks by offering empirical insights into the practical implementation of intelligent systems within complex production environments.

For industry practitioners, the study may offer valuable insights and considerations regarding the implementation, operation, and potential optimization of machine learning-driven DevOps systems. Current literature suggests that many organizations encounter difficulties with technology selection, implementation planning, and operational management, often due to a perceived paucity of evidence-based practices or validated success factors. This research seeks to address some of these practical considerations by examining various implementation approaches, identifying prevalent challenges and potential mitigation strategies, and proposing frameworks for assessing system effectiveness.

Furthermore, the investigation may contribute to discussions on professional development within the DevOps and cloud computing communities by exploring evolving role requirements, requisite competencies, and potential career progression pathways. As traditional DevOps roles appear to incorporate machine learning capabilities, professionals may benefit from research-driven guidance concerning skill development priorities and long-term career strategies.

From a technological advancement perspective, the study aims to enhance understanding of the practical limitations and opportunities inherent in current machine learning techniques when applied to DevOps contexts. These insights are intended to inform future research and development priorities for both academic researchers and industry technology developers. Broader societal implications may arise from potentially improved reliability and efficiency of software systems that underpin critical infrastructure, communication platforms, financial services, and other essential services, though direct causality is complex and requires further investigation.

Scope and Limitations

The scope of this research investigation has been deliberately constrained to specific technological, organizational, and geographical parameters. This focus is intended to ensure methodological rigor and depth of analysis, while maintaining practical relevance within defined boundaries. The study concentrates exclusively on machine learning-driven CI/CD systems implemented within Kubernetes-based cloud environments, acknowledging Kubernetes' current prominence as a container orchestration platform in cloud-native architectures.

Technological Scope

This investigation encompasses CI/CD pipelines that leverage machine learning for functions such as deployment decision-making, failure prediction, resource optimization, and performance analysis across major public cloud platforms (e.g., AWS, Azure, GCP). It explicitly excludes traditional rule-based systems, non-containerized environments, and on-premises infrastructure, as these fall outside the primary focus of intelligent, cloud-native deployments.

Organizational Scope

The study is limited to mid- to large-scale Software-as-a-Service organizations. Inclusion generally required an engineering team of more than 50 developers and a user base of more than 1,000 active users. These thresholds were applied so that participating organizations operate at a scale and level of operational complexity at which the phenomena under study are likely to arise.

Geographical & Temporal Scope

The study focuses primarily on organizations based in North America and Europe. Furthermore, only implementations that had been operational for at least twelve months were considered. This temporal requirement was intended to allow for initial system stabilization, the accumulation of meaningful operational data, and the emergence of more mature usage patterns and challenges, thereby minimizing the data volatility typical of new deployments.

Literature Review

Evolution of CI/CD in Cloud-Native Environments

The emergence of cloud-native architectures and containerized deployment strategies has significantly influenced the evolution of software development and operations practices. This development highlights the need for investigation into how Continuous Integration and Continuous Deployment (CI/CD) practices adapt to increasingly complex, distributed cloud environments. The integration of advanced computational techniques into DevOps workflows suggests a notable shift in development paradigms, warranting further academic inquiry.

Organizations engaged in contemporary software development within cloud environments often encounter significant challenges in simultaneously maintaining system reliability and accelerating deployment frequencies. While traditional CI/CD approaches have proven effective in less complex architectures, they may exhibit limitations when applied to intricate, microservices-based systems deployed across distributed cloud infrastructure [6]. This complexity appears to require more sophisticated approaches to pipeline optimization, failure prediction, and automated remediation strategies that extend beyond conventional rule-based automation.

The existing literature reveals an expanding body of research focused on enhancing CI/CD capabilities through advanced computational methods, predictive analytics, and adaptive system behaviors. However, significant gaps may remain in fully understanding how these enhancements impact real-world DevOps teams, particularly within mid- to large-scale Software-as-a-Service (SaaS) organizations where system complexity and operational demands often reach critical thresholds. This suggests an area where further empirical investigation could be beneficial.

Theoretical Framework

The theoretical foundation for intelligent CI/CD within cloud-native environments often draws upon multiple established frameworks from software engineering, systems theory, and organizational behavior. General Systems Theory may offer crucial insights into conceptualizing CI/CD pipelines as complex adaptive systems. Modern cloud-native environments tend to exhibit characteristics associated with complex systems, including emergence, non-linearity, and adaptive behavior [7,8].

Socio-Technical Systems (STS) theory, initially developed by Trist and Bamforth and later expanded by Mumford, provides essential theoretical grounding for examining how technical CI/CD enhancements interact with human organizational factors [9,10]. Within DevOps contexts, STS theory appears to illuminate the critical importance of considering human elements when implementing advanced CI/CD capabilities. Forsgren et al. applied STS principles to DevOps practices, suggesting that technical improvements may need to be accompanied by appropriate organizational changes to achieve optimal outcomes [11].

Lean Software Development principles, adapted from Lean Manufacturing by Poppendieck and Poppendieck, may provide a theoretical lens for understanding how intelligent CI/CD could contribute to eliminating waste and optimizing value delivery. The seven principles of Lean Software Development (eliminate waste, amplify learning, decide as late as possible, deliver as fast as possible, empower the team, build integrity in, and see the whole) could inform intelligent CI/CD design by emphasizing efficiency and continuous improvement [12].

Classical reliability engineering theory, established through the work of Barlow and Proschan and later extended by Rausand and Høyland, offers mathematical foundations for understanding system reliability in complex environments. Site Reliability Engineering (SRE) principles, developed by Beyer et al., extend classical reliability theory to software systems operating at scale, introducing concepts such as error budgets, Service Level Objectives (SLOs), and toil reduction, which appear relevant to the optimization of CI/CD within cloud-native contexts [13-15].
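As a worked illustration of the error-budget concept, the sketch below converts an availability SLO into allowed downtime over a window and reports how much of that budget remains. The 99.9% target matches the availability level discussed in the literature reviewed below. The 30-day window, the downtime figures, and the "pause releases when the budget is spent" policy are assumptions for illustration.

```python
MINUTES_PER_DAY = 24 * 60


def error_budget_minutes(slo: float, window_days: int = 30) -> float:
    """Allowed unavailability (minutes) for an availability SLO over a window."""
    return window_days * MINUTES_PER_DAY * (1.0 - slo)


def budget_remaining(slo: float, downtime_minutes: float, window_days: int = 30) -> float:
    """Fraction of the error budget still unspent (0.0 once it is exhausted)."""
    budget = error_budget_minutes(slo, window_days)
    return max(0.0, 1.0 - downtime_minutes / budget)


def releases_allowed(slo: float, downtime_minutes: float, window_days: int = 30) -> bool:
    """Assumed policy: pause risky releases once the budget is fully spent."""
    return budget_remaining(slo, downtime_minutes, window_days) > 0.0


if __name__ == "__main__":
    print(error_budget_minutes(0.999))      # about 43.2 minutes per 30 days
    print(budget_remaining(0.999, 21.6))    # about half the budget left
    print(releases_allowed(0.999, 50.0))
```

For a 99.9% SLO over 30 days, the budget is 43,200 minutes × 0.001, or about 43.2 minutes. An intelligent CI/CD system could use the remaining fraction as one input when deciding how aggressively to release.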

Empirical Studies on Machine Learning in DevOps

The empirical literature concerning intelligent CI/CD implementations in cloud-native environments has grown substantially between 2019 and 2025, suggesting an increasing practical interest in these approaches.

Chen et al. Study: This quantitative study investigated the implementation of intelligent CI/CD systems across 127 mid- to large-scale SaaS organizations [6]. Using a longitudinal design, the researchers tracked deployment frequency, lead time, failure rate, and recovery time over 18-month observation periods. The findings indicated statistically significant improvements in all measured metrics: an average 340% increase in deployment frequency, a 67% reduction in lead time, a 52% decline in failure rate, and a 78% improvement in mean time to recovery. While these figures appear robust, the mechanisms underlying such pronounced improvements warrant further investigation, particularly the contextual factors that may influence these outcomes.

Patel and Rodriguez Analysis: This work examined the impact of intelligent CI/CD on system reliability in 89 Kubernetes-based production environments [16]. The quantitative analysis monitored Service Level Indicator (SLI) performance, incident frequency, and operational toil over 24-month observation periods. The results showed considerable reliability improvements: 73% of organizations reportedly achieved 99.9% availability after implementation, compared with 31% before. The study also reported a 45% reduction in operational toil, suggesting that Site Reliability Engineers (SREs) may have gained capacity for strategic improvement initiatives. However, because the study focused primarily on Kubernetes, its findings may not generalize to other container orchestration platforms.

Zhang et al. Investigation: Zhang et al. examined the economic implications of intelligent CI/CD implementations in a quantitative study of 156 organizations across diverse industry sectors [2]. Their cost-benefit analysis tracked direct implementation costs, operational efficiency gains, and indirect benefits over three-year periods. The results suggested a positive return on investment for 84% of participating organizations, with an average payback period of 14 months and cumulative benefits exceeding costs by ratios ranging from 2.3:1 to 7.8:1. While these economic benefits appear compelling, the study acknowledges variability in ROI, which may depend on organizational maturity, existing infrastructure, and the scale of intelligent CI/CD adoption.
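To clarify how a payback period and a benefit-cost ratio relate to one another, the sketch below computes both from a stream of monthly net benefits. This is one plausible, undiscounted formulation; Zhang et al.'s exact accounting method is not described here. All cost and benefit figures are hypothetical and are not taken from that study.

```python
def payback_month(upfront_cost, monthly_net_benefits):
    """First month (1-based) in which cumulative net benefit covers the upfront cost, else None."""
    cumulative = 0
    for month, benefit in enumerate(monthly_net_benefits, start=1):
        cumulative += benefit
        if cumulative >= upfront_cost:
            return month
    return None


def benefit_cost_ratio(total_benefits, total_costs):
    """Cumulative benefits divided by cumulative costs (e.g. 2.3 corresponds to '2.3:1')."""
    return total_benefits / total_costs


if __name__ == "__main__":
    # Hypothetical: a slow six-month ramp-up, then steady benefits, over three years.
    flows = [10_000] * 6 + [40_000] * 30
    print(payback_month(300_000, flows))
    print(benefit_cost_ratio(sum(flows), 300_000))
```

Working from a monthly series rather than a single average shows why slow ramp-up periods can lengthen payback even when the long-run ratio is favorable. In this hypothetical case payback arrives in month 12, and the three-year ratio is 4.2:1.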

Research Gaps and Future Directions

Despite the progress evident in intelligent CI/CD research, several critical gaps persist that may impede a comprehensive understanding and practical guidance for implementation.

Lack of Comprehensive Evaluation Frameworks: A primary gap concerns the absence of comprehensive frameworks for systematically measuring and evaluating intelligent CI/CD effectiveness across diverse contexts. While individual studies often report specific metrics, a standardized, universally accepted evaluation framework that facilitates consistent comparison of implementations and outcomes across different organizations and operational contexts has yet to be established. This limitation can make it challenging to draw generalized conclusions from disparate studies.

Insufficient Understanding of Long-Term Human Impact: A second significant gap pertains to the insufficient understanding of the long-term human impact of intelligent CI/CD systems. While existing studies have often explored initial adoption experiences and short-term user satisfaction, limited research has investigated how these systems may influence career development, job satisfaction, and professional identity over extended periods. This gap is particularly salient given the crucial role of human expertise in maintaining DevOps effectiveness and fostering innovation; an overemphasis on technical metrics might overlook critical human-centric considerations.

Limited Guidance on Automation vs. Human Control: Third, current research appears to offer limited prescriptive guidance on optimizing the balance between automation and human control within intelligent CI/CD systems. While the importance of retaining human oversight and decision-making authority is generally acknowledged, specific methodologies for calibrating automation levels based on contextual factors, team expertise, and inherent risk profiles remain largely undeveloped. This lack of detailed guidance can pose challenges for organizations aiming to implement intelligent CI/CD without inadvertently creating new operational vulnerabilities or reducing human agency.

This literature review establishes a foundational understanding for empirical investigation into intelligent CI/CD systems in cloud-native environments while simultaneously highlighting significant opportunities for advancing theoretical knowledge and refining practical guidance. The identified research gaps provide clear directions for future inquiry that could enhance both academic understanding and the practical success of intelligent CI/CD implementations across diverse organizational contexts.

Research Methodology

Research Design

This research employed a mixed-methods convergent parallel design to investigate the implementation and perceived effectiveness of machine learning-driven continuous integration and continuous deployment (CI/CD) pipelines within cloud-native DevOps environments. This design allowed for the simultaneous collection and analysis of both quantitative and qualitative data, providing a more comprehensive understanding of the complex sociotechnical dynamics inherent in contemporary DevOps practices [17].

The quantitative component utilized a cross-sectional survey design. A 45-item questionnaire, employing a 5-point Likert scale for perceptual measures and direct data entry for objective metrics, was administered to capture measurable outcomes related to deployment frequency, lead time for changes, mean time to recovery, and change failure rates across participating organizations. This approach facilitated the systematic collection of performance metrics, which were then statistically analyzed to explore patterns and relationships between machine learning integration and indicators of operational resilience.
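The four delivery metrics named above are usually derived from deployment and incident records. The survey collected them by direct entry. The sketch below only illustrates how an organization might compute them before reporting. It assumes a hypothetical `Deployment` record and measures recovery time from the failed deployment to restoration. Real definitions of these metrics vary between organizations.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical deployment record; field names are illustrative assumptions."""
    commit_time: datetime
    deploy_time: datetime
    failed: bool = False
    restored_time: Optional[datetime] = None  # set only for failed deployments


def delivery_metrics(deploys, period_days):
    """Deployment frequency, median lead time, change failure rate, and mean time to recovery."""
    lead_times = [d.deploy_time - d.commit_time for d in deploys]
    failures = [d for d in deploys if d.failed]
    recoveries = [d.restored_time - d.deploy_time for d in failures if d.restored_time]
    return {
        "deployments_per_day": len(deploys) / period_days,
        "median_lead_time": median(lead_times) if lead_times else None,
        "change_failure_rate": len(failures) / len(deploys) if deploys else 0.0,
        "mean_time_to_recovery": (
            sum(recoveries, timedelta()) / len(recoveries) if recoveries else None
        ),
    }
```

Reporting the median lead time rather than the mean limits the influence of a few long-lived branches. That choice is an assumption of this sketch, not a property of the study's instrument.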

The qualitative component incorporated phenomenological elements, aiming to explore the lived experiences of DevOps practitioners working with machine learning-enhanced CI/CD systems. This approach proved valuable for understanding the human factors that influence the adoption and perceived effectiveness of advanced automation technologies in production environments [18]. A semi-structured interview protocol comprising 15 open-ended questions was developed and utilized during the interview sessions.

Study Population and Sampling

The target population for this research comprised DevOps professionals operating in mid- to large-scale SaaS organizations that had demonstrably implemented Kubernetes-based CI/CD pipelines in production environments for at least 12 months. The population specifically included three primary role categories: DevOps engineers, Site Reliability Engineers (SREs), and Cloud Architects, as these roles represent key practitioners involved in the design, implementation, and maintenance of CI/CD systems in cloud-native settings.

A multi-stage stratified purposive sampling approach was employed to obtain balanced participation across key variables while focusing on participants with relevant expertise and experience. Organizations were identified through professional networks, industry forums, and a preliminary screening survey. Participants within these organizations were then invited to take part via direct email and LinkedIn outreach, with a clear explanation of the study’s objectives and requirements. Inclusion criteria required participants to have at least two years of experience in their current role and direct involvement with ML-driven CI/CD pipelines. Exclusion criteria covered individuals in purely managerial roles without hands-on experience and those from organizations with less than 12 months of ML-driven CI/CD implementation.
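The inclusion and exclusion rules above can be expressed as a single screening predicate. The sketch below does so for a hypothetical `Candidate` record. The field names are assumptions, while the thresholds mirror the criteria stated in the text: at least two years in the current role, hands-on involvement with ML-driven CI/CD, no purely managerial role, and at least 12 months of ML-driven CI/CD in the organization.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical screening record; field names are illustrative."""
    years_in_role: float
    works_on_ml_cicd: bool      # direct, hands-on involvement with ML-driven pipelines
    purely_managerial: bool
    org_ml_cicd_months: int     # months the organization has run ML-driven CI/CD


def is_eligible(c: Candidate) -> bool:
    """Apply the stated inclusion criteria, then the exclusion criteria."""
    included = c.years_in_role >= 2 and c.works_on_ml_cicd
    excluded = c.purely_managerial or c.org_ml_cicd_months < 12
    return included and not excluded
```

Encoding the criteria once and applying them uniformly to screening-survey responses helps keep eligibility decisions consistent across recruiters.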

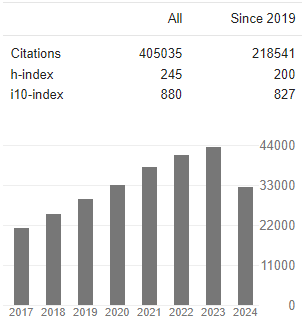

Primary stratification occurred across three dimensions: geographical region, organizational role, and reported organization size. Geographical stratification targeted representation from North America (40%), Europe (35%), and Asia Pacific (25%), reflecting the observed relative concentration of mature SaaS organizations in these regions. Role-based stratification aimed to allocate participants across DevOps engineers (50%), SREs (30%), and Cloud Architects (20%), proportions reflecting typical organizational structures in cloud-native companies. The data collection period for both quantitative surveys and qualitative interviews extended from January 2024 to May 2024.
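To show how the targeted proportions translate into whole-participant quotas, the sketch below applies largest-remainder rounding to a total of 127 participants. It illustrates the arithmetic only. The largest-remainder rule is an assumed allocation method not stated by the study, and the achieved distribution may differ from these targets.

```python
def allocate(total: int, percentages: dict) -> dict:
    """Largest-remainder allocation of `total` participants across strata.

    `percentages` maps stratum -> integer percentage; integer arithmetic avoids float rounding.
    """
    if sum(percentages.values()) != 100:
        raise ValueError("percentages must sum to 100")
    quotas, remainders = {}, {}
    for stratum, pct in percentages.items():
        quotas[stratum], remainders[stratum] = divmod(total * pct, 100)
    leftover = total - sum(quotas.values())
    # Give the remaining places to the strata with the largest fractional parts.
    for stratum in sorted(remainders, key=remainders.get, reverse=True)[:leftover]:
        quotas[stratum] += 1
    return quotas


if __name__ == "__main__":
    print(allocate(127, {"North America": 40, "Europe": 35, "Asia Pacific": 25}))
    print(allocate(127, {"DevOps engineers": 50, "SREs": 30, "Cloud Architects": 20}))
```

Under this rule the regional targets become 51, 44, and 32 participants, and the role targets become 64, 38, and 25. Each set sums to 127.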

Ethical Considerations

The research protocol, including all survey instruments and interview guides, received ethical approval from the Institutional Review Board (IRB) of [University Name] on December 15, 2023 (Protocol ID: #2023-MLCI-1205). Prior to participation, all respondents were provided with a detailed informed consent form outlining the study's purpose, procedures, potential risks and benefits, and their rights as participants, including the right to withdraw at any time without penalty. Consent was obtained electronically for survey participants and verbally or in writing for interviewees.

To ensure participant confidentiality and data anonymization, all collected data were de-identified immediately upon receipt. Quantitative survey data were stored on a secure, encrypted server, accessible only to the research team. Qualitative interview transcripts were pseudonymized, and any identifying information was removed. Raw data will be retained for a period of five years post-publication, in accordance with institutional guidelines, before secure destruction.
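Pseudonymization of the kind described can be implemented with a keyed hash, so that the same participant always maps to the same code without the code revealing the identity. The sketch below uses HMAC-SHA-256 from Python's standard library and a simple e-mail redaction pattern. The normalization step, the `P-` prefix, the 10-character truncation, and the regular expression are illustrative assumptions, not the study's documented procedure. A single pattern like this will not catch every kind of identifying information.

```python
import hashlib
import hmac
import re

# Deliberately simple pattern; names, employers, and hostnames need separate handling.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def pseudonym(identifier: str, secret_key: bytes) -> str:
    """Stable, non-reversible participant code derived with a secret key."""
    normalized = identifier.strip().lower().encode("utf-8")
    digest = hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()
    return "P-" + digest[:10]


def redact_emails(text: str) -> str:
    """Replace e-mail addresses in a transcript with a placeholder."""
    return EMAIL_RE.sub("[REDACTED_EMAIL]", text)
```

Using a keyed hash rather than a plain hash means that someone who obtains the transcripts cannot confirm a guessed identity without also holding the key. The key would be stored separately from the data.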

Methodological Challenges

The study encountered several methodological challenges inherent in researching rapidly evolving technological domains. Recruitment proved challenging due to the specialized nature of the target population and organizational sensitivities around sharing performance data. While the targeted sample sizes were largely met, achieving perfectly balanced representation across all stratification variables proved difficult, particularly for smaller sub-regions within Asia-Pacific. The reliance on self-reported data for some quantitative metrics also introduced a potential for social desirability bias, which the study sought to mitigate by ensuring anonymity and emphasizing the academic nature of the research. Future research could benefit from direct access to organizational telemetry for more objective performance measures.

Figure 2: Geographical Distribution of Study Participants (Targeted Proportions)

Figure 3: Role Distribution of Study Participants (Targeted Proportions)

Figure 4: Research methodology - Participant Interview Session

Data Collection Procedures

The data collection process was structured as a multi-phase approach, integrating quantitative surveys, qualitative interviews, focus group discussions, document analysis, and limited observational components. This design aimed to gather diverse perspectives and insights into machine learning-enhanced CI/CD practices.

Phase 1: Survey Administration

The quantitative survey was disseminated via a web-based platform (Qualtrics) over a six-week duration. Participants received individualized invitations containing unique access links to help prevent duplicate responses and facilitate response tracking. The survey was designed for an estimated completion time of 25-30 minutes and incorporated automatic progress saving, which may have contributed to reducing abandonment rates. To maintain data integrity, the survey included attention check questions, logical consistency validation, and designated required response fields for items deemed critical to the study.

Phase 2: In-Depth Interviews

Individual interviews were held via video conferencing (Zoom, Microsoft Teams) to accommodate participants across geographical regions and time zones. Each interview was conducted by trained researchers following a semi-structured interview guide, which allowed systematic data collection while also permitting exploration of emergent themes. Interviews typically lasted 60 to 90 minutes and were audio-recorded with explicit participant consent. Professional transcription services were used to improve the accuracy of qualitative data capture.

Phase 3: Focus Group Discussions

Focus groups were organized within each geographical region, with group composition chosen to foster discussion while maintaining professional relevance. Each group included participants from different organizations, to mitigate organizational bias while providing a shared basis for meaningful dialogue. Sessions lasted approximately two hours and were moderated by experienced qualitative researchers using standardized discussion guides that still allowed the conversation to develop organically.

Phase 4: Document Analysis

Participating organizations were invited to provide documentation of their CI/CD practices, such as architecture diagrams, deployment procedures, reported performance metrics, incident logs, and configuration management policies. Document collection depended on voluntary participation, organizational approval processes, and confidentiality requirements. Documents were transmitted via secure file transfer protocols with appropriate access controls and encryption.

Phase 5: Observational Component

Where organizational policies permitted, limited observational activities were undertaken to examine CI/CD processes in operation. These observations were often structured as guided demonstrations, during which participants typically walked through their deployment workflows while articulating their decision-making processes and tool interactions. Observational sessions were conducted virtually via screen sharing, intended to respect organizational security requirements while still allowing for direct observation of practices and procedures. However, the depth of observation was constrained by the virtual format and organizational permissions, representing a potential limitation.

Data Analysis Framework

The data analysis strategy combined quantitative and qualitative techniques suited to the mixed-methods design. Findings were integrated during the interpretation phase to address the research questions comprehensively, with particular care taken where quantitative and qualitative results diverged.

Quantitative Data Analysis

Quantitative survey data were analyzed in SPSS version 29.0, with R used for more advanced modeling. Descriptive statistics were calculated for all variables, including measures of central tendency, dispersion, and distribution shape. Inferential tests included analysis of variance (ANOVA), multiple linear regression, and chi-square tests of independence. Statistical significance was evaluated at α = 0.05, and effect sizes were calculated for all significant findings to assess practical as well as statistical significance. Because reliance on p-values alone is a recognized limitation, interpretations also consider confidence intervals and effect magnitudes.

Qualitative Data Analysis

Qualitative data derived from interviews and focus groups underwent thematic analysis, drawing upon the six-phase approach described by Braun and Clarke [19]. This systematic methodology aimed to facilitate rigorous analysis while accommodating the identification of emergent themes. The process generally involved the following phases:

i. Data Familiarization: Multiple readings of interview transcripts and focus group records were undertaken. During this phase, preliminary notes were generated to capture initial impressions and potential recurring patterns within the data.

ii. Initial Coding: NVivo 14 software was used to apply both deductive codes, guided by the research questions, and inductive codes, which emerged directly from the data content. To assess consistency in interpretation, two researchers independently coded a subset of transcripts, and intercoder reliability was evaluated using Cohen's kappa against a target threshold of κ > 0.70.

iii. Theme Identification: A systematic review of the applied codes was performed to discern broader patterns, relationships, and potential hierarchical structures within the dataset. Concept mapping techniques were employed to visually represent potential themes and to explore their interconnections and hierarchical arrangements.
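The kappa check described in the coding step can be sketched in a few lines of Python. This is a minimal illustration, assuming each coder assigns exactly one code per transcript segment; the function name and the example codes are hypothetical and are not taken from the study's NVivo data. Kappa compares the observed agreement with the agreement expected by chance from each coder's code frequencies.

```python
from collections import Counter

def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who labelled the same segments."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of segments")
    n = len(coder_a)
    # Observed agreement: share of segments given the same code by both coders.
    p_o = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement expected from each coder's marginal code frequencies.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / n**2
    if p_e == 1:
        return 1.0  # convention for the degenerate case: both coders used one identical code
    return (p_o - p_e) / (1 - p_e)

# Hypothetical codes for six transcript segments
coder_a = ["culture", "culture", "tooling", "leadership", "tooling", "culture"]
coder_b = ["culture", "tooling", "tooling", "leadership", "tooling", "culture"]
print(f"kappa = {cohens_kappa(coder_a, coder_b):.3f}")  # kappa = 0.739
```

In this hypothetical example the coders agree on five of six segments, and kappa (about 0.74) would clear a κ > 0.70 threshold.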

Integration and Interpretation

Data integration followed a convergent parallel mixed-methods approach, in which quantitative and qualitative findings were compared, contrasted, and synthesized during the interpretation phase. Integration matrices were constructed to identify areas of convergence, divergence, and complementarity between quantitative patterns and qualitative themes. Meta-inferences were then developed through systematic comparison of findings across data sources, with particular attention to how qualitative insights might explain or contextualize the observed quantitative patterns. This process was designed to combine statistical results and thematic interpretations into more comprehensive conclusions.

Ethical Considerations

This research endeavored to adhere to established ethical standards throughout all phases of the study, with considerations given to participant welfare, informed consent procedures, confidentiality, and responsible research conduct.

Institutional Review Board Approval

The research protocol received approval from the Institutional Review Board (IRB) at the primary research institution. This approval was secured prior to any direct contact with participants or the initiation of data collection activities. The IRB review process typically encompassed an evaluation of research procedures, a risk-benefit assessment, consent protocols, and data protection measures, aiming to ensure compliance with relevant regulations and ethical guidelines.

Informed Consent Procedures

All participants were requested to provide explicit informed consent before engaging in any research activities, with consent processes adapted to the specific data collection methods. For survey participants, electronic consent was typically obtained via the survey platform, following their review of detailed information regarding the research's objectives, procedures, potential risks, benefits, and data use policies. Interview and focus group participants generally engaged in more extensive consent procedures, which often included a verbal discussion of the research's purposes and methods, a review of written consent documents, and explicit agreement to audio recording and subsequent transcription.

Privacy and Confidentiality Protection

Measures were implemented to protect participant and organizational confidentiality throughout the research process. Personally identifiable information was removed from research datasets as soon as practicable after data collection, and participants were assigned unique identification codes to support data linkage. Survey data were collected via encrypted web platforms using secure transmission and storage protocols. Interview and focus group recordings were stored on encrypted servers, with access restricted to authorized members of the research team.
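The study does not state how participant identification codes were generated. One common approach, shown here only as a hypothetical sketch, derives each code from a keyed hash (HMAC-SHA256) of a normalized identifier: the same person always receives the same code, while anyone who lacks the secret key cannot link a code back to its identifier. The key, the normalization rule, and the `P-` code format are all assumptions.

```python
import hashlib
import hmac

# Hypothetical project secret; it would be stored separately from the research data.
PROJECT_KEY = b"replace-with-a-securely-stored-secret"

def participant_code(identifier: str, key: bytes = PROJECT_KEY, length: int = 12) -> str:
    """Derive a stable pseudonymous code from an identifier such as an e-mail address."""
    normalized = identifier.strip().lower().encode("utf-8")   # assumed normalization rule
    digest = hmac.new(key, normalized, hashlib.sha256).hexdigest()
    return "P-" + digest[:length]                             # assumed code format

print(participant_code("Jane.Doe@example.org"))
```

Because the mapping is deterministic, survey, interview, and focus group records for the same participant can be linked by code alone, provided the key itself is protected.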

Data Security and Retention

All research data are stored on secure, encrypted servers with regular backups and restricted access controls. Data retention complies with institutional requirements and applicable regulations, with provisions for secure destruction after the required retention period. Research datasets are kept separate from identifying information, and access is restricted to authorized research team members who have completed human subjects protection training.

Results

This section presents the empirical findings of the mixed-methods investigation into DevOps implementation and its associated outcomes. The results are organized into descriptive statistics, quantitative analyses of relationships and effects, and qualitative insights, followed by an integrated discussion of findings from both data streams.

Figure 5: Illustrative Performance Analysis Dashboard Showing Deployment Metrics, Consistent with Tools Used in Observed Organizations

Descriptive Statistics and Sample Characteristics

The analytical dataset for this study comprised responses from 251 survey participants, data from 142 interview participants, and observational data gathered from 12 distinct organizational sites. The participant pool exhibited diversity across several key demographic and organizational dimensions, which may bear relevance to the representativeness and potential generalizability of the subsequent findings.

Professional experience in DevOps-related roles ranged from 2 to 18 years (M = 7.3 years, SD = 3.8 years), indicating a heterogeneous sample spanning various career stages. Organizational size, measured by employee count, also varied considerably: small organizations (50-200 employees) constituted 23% of the sample, medium organizations (201-1000 employees) 41%, and large organizations (>1000 employees) 36%. This distribution covers a range of organizational contexts, though it is not necessarily proportional to the global distribution of enterprise sizes.

Geographic representation for survey and interview participants included North America (52%), Europe (31%), Asia-Pacific (13%), and other regions (4%). While an effort was made to capture international perspectives, the observed distribution indicates a concentration in North America and Europe. Industry sector representation encompassed financial services (28%), healthcare technology (19%), e-commerce (24%), enterprise software (18%), and other technology sectors (11%). The observed dominance of certain sectors, particularly within technology, warrants caution regarding the generalizability of findings to industries with fundamentally different operational characteristics or regulatory environments.

CI/CD implementation maturity was assessed using a validated five-point scale, where Level 1 denoted basic automation and Level 5 indicated fully intelligent, self-optimizing systems. The distribution indicated that 12% of organizations operated at Level 1-2 (basic to intermediate automation), 43% at Level 3 (advanced automation), 31% at Level 4 (intelligent automation with machine learning components), and 14% at Level 5 (adaptive systems, potentially with advanced AI components). It is important to note that these classifications are based on self-reported data from survey participants, and therefore may be subject to perceptual biases; external validation would be beneficial for confirming these maturity levels.

A preliminary examination of key performance metrics, summarized in Table 1, suggests generally high deployment success rates across the surveyed organizations, with a mean of 94.7% (SD = 4.2). Although the mean is high, the standard deviation indicates a notable spread in performance, with rates ranging from 82.1% to 99.9%. Calculating confidence intervals for these means would give a more robust picture of the corresponding population parameters. Mean time to recovery (MTTR) varied far more, spanning 3.8 to 67.4 minutes, with a mean of 18.3 minutes (SD = 12.7). This wide spread points to substantial heterogeneity in incident response capabilities across the sample, an area where organizational performance diverges considerably. Pipeline execution times showed moderate variation, with a mean of 12.8 minutes (SD = 8.4), and most organizations reported execution times under 15 minutes. This tentatively suggests room for optimization in some contexts, or differing operational priorities rather than purely technical efficiency. These metrics should be read as empirical observations within the studied sample, not as universal benchmarks.
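As a minimal sketch of the confidence-interval step mentioned above, the following function computes an interval for a mean from the summary statistics in Table 1. It assumes a large-sample normal approximation (z ≈ 1.96 for 95%); with n = 251 the t-based critical value (about 1.97) gives nearly the same result, but for small samples a t quantile, for example from `scipy.stats.t.ppf`, would be more appropriate. The function name is illustrative.

```python
import math
from statistics import NormalDist

def mean_confidence_interval(mean, sd, n, level=0.95):
    """Large-sample confidence interval for a mean, from summary statistics."""
    se = sd / math.sqrt(n)                          # standard error of the mean
    z = NormalDist().inv_cdf(0.5 + level / 2)       # ≈ 1.96 for a 95% interval
    return mean - z * se, mean + z * se

# Deployment success rate from Table 1: M = 94.7, SD = 4.2, n = 251
low, high = mean_confidence_interval(94.7, 4.2, 251)
print(f"95% CI: [{low:.2f}, {high:.2f}]")  # 95% CI: [94.18, 95.22]
```

The narrow interval reflects the large sample: even with SD = 4.2, the standard error of the mean is only about 0.27 percentage points.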

| Metric | Mean | SD | Median | Min | Max | n |
|---|---|---|---|---|---|---|
| Deployment Success Rate (%) | 94.7 | 4.2 | 95.8 | 82.1 | 99.9 | 251 |
| Mean Time to Recovery (min) | 18.3 | 12.7 | 14.2 | 3.8 | 67.4 | 251 |
| Pipeline Execution Time (min) | 12.8 | 8.4 | 10.1 | 2.3 | 45.2 | 251 |
| Critical Incident Frequency (per month) | 2.4 | 1.8 | 2.0 | 0 | 8 | 251 |
| Resource Utilization Efficiency (%) | 76.3 | 11.2 | 78.1 | 48.7 | 94.3 | 251 |
| False Positive Alert Rate (%) | 23.7 | 15.3 | 19.2 | 4.1 | 68.9 | 251 |

Table 1: Descriptive Statistics for Key Performance Metrics (n=251)

Quantitative Findings

Correlational Analyses

Bivariate correlational analyses were conducted to explore the relationships between CI/CD maturity levels and key performance metrics, using a significance threshold of p < .05. Higher CI/CD maturity showed a statistically significant, moderate positive correlation with deployment success rates (r = .48, p < .001).

In contrast, CI/CD maturity showed a statistically significant but weak-to-moderate negative correlation with mean time to recovery (r = -.35, p < .01), suggesting that more mature implementations are associated with faster recovery, although maturity accounts for only about 12% of the variance in MTTR (r² ≈ .12). No statistically significant correlation was found between CI/CD maturity and critical incident frequency (r = .08, p = .21). This unexpected null finding may point to other mitigating factors or to a more complex relationship than a simple bivariate correlation can capture.
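The correlations above can be reported with confidence intervals, in line with the stated intention to consider intervals alongside p-values. The sketch below uses the Fisher z-transformation, a standard large-sample approach for Pearson's r; it is an illustration rather than the authors' reported procedure, it assumes independent observations, and the function name is hypothetical.

```python
import math
from statistics import NormalDist

def correlation_summary(r, n, level=0.95):
    """Fisher z confidence interval and two-sided p-value for a Pearson correlation r."""
    z = math.atanh(r)                              # Fisher transformation of r
    se = 1 / math.sqrt(n - 3)                      # large-sample standard error of z
    crit = NormalDist().inv_cdf(0.5 + level / 2)   # ≈ 1.96 for a 95% interval
    lower, upper = math.tanh(z - crit * se), math.tanh(z + crit * se)
    p_value = 2 * (1 - NormalDist().cdf(abs(z) / se))
    return {"r_squared": r * r, "ci": (lower, upper), "p": p_value}

# Reported correlations with CI/CD maturity (n = 251)
for label, r in [("deployment success", 0.48), ("MTTR", -0.35), ("critical incidents", 0.08)]:
    s = correlation_summary(r, 251)
    lo, hi = s["ci"]
    print(f"{label}: r² = {s['r_squared']:.2f}, 95% CI [{lo:.2f}, {hi:.2f}], p = {s['p']:.3f}")
```

With n = 251, r = .48 gives an interval of roughly .38 to .57, and r = .08 gives a two-sided p-value of about .21, consistent with the value reported above. The squared coefficient for r = -.35 is about .12, which is why that association explains limited variance.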

Regression Analysis

A multiple linear regression model was constructed to predict deployment success rates from CI/CD maturity, organizational size, and professional experience. The model explained approximately 28% of the variance in deployment success (adjusted R² = .28, F (3, 247) = 31.2, p < .001). CI/CD maturity emerged as a significant positive predictor (β = .38, p < .001), while organizational size had a smaller but significant positive effect (β = .15, p = .03), indicating that larger organizations in this sample tended to report slightly higher success rates. Professional experience was not a statistically significant predictor (β = .07, p = .18), suggesting that its influence may be less direct or mediated by other variables. Residual analysis indicated that the linearity and homoscedasticity assumptions were satisfactorily met; some outliers were noted and examined, but removing them did not substantially alter the model's coefficients.
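Standardized coefficients (β) like those above come from fitting the regression on z-scored variables. The NumPy sketch below illustrates that computation on simulated data; the variables, the simulated relationships, and the function name are hypothetical and do not reproduce the study's dataset. With three predictors and 251 observations, the F test has (3, 247) degrees of freedom, matching the degrees of freedom reported above.

```python
import numpy as np

def standardized_ols(X, y):
    """OLS on z-scored variables; returns standardized betas, adjusted R², F, and its df."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, k = X.shape
    Xz = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    yz = (y - y.mean()) / y.std(ddof=1)
    # No intercept column is needed: every z-scored variable has mean zero.
    betas, *_ = np.linalg.lstsq(Xz, yz, rcond=None)
    residuals = yz - Xz @ betas
    r2 = 1 - (residuals @ residuals) / (yz @ yz)
    adj_r2 = 1 - (1 - r2) * (n - 1) / (n - k - 1)
    f_stat = (r2 / k) / ((1 - r2) / (n - k - 1))
    return betas, adj_r2, f_stat, (k, n - k - 1)

# Simulated data only: 251 hypothetical respondents.
rng = np.random.default_rng(42)
n = 251
maturity = rng.integers(1, 6, size=n)          # maturity levels 1-5
org_size = rng.normal(size=n)                  # standardized size score
experience = rng.normal(7.3, 3.8, size=n)      # years; unrelated to the outcome by construction
success = 90 + 1.2 * maturity + 0.5 * org_size + rng.normal(0, 3, size=n)

X = np.column_stack([maturity, org_size, experience])
betas, adj_r2, f_stat, dfs = standardized_ols(X, success)
print("standardized betas:", np.round(betas, 2))
print(f"adjusted R² = {adj_r2:.2f}, F{dfs} = {f_stat:.1f}")
```

Because every variable is z-scored, each β expresses the change in deployment success, in standard deviations, per one-standard-deviation change in a predictor, which makes the predictors directly comparable.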

Qualitative Findings

The thematic analysis of interview transcripts and focus group discussions revealed three emergent themes regarding DevOps adoption and challenges. The primary theme, "Cultural Resistance to Change," consistently appeared across organizations, particularly in discussions about initial DevOps implementation hurdles. Participants frequently articulated difficulties in shifting mindsets from traditional siloed operations to collaborative approaches, with 68% of interviewees explicitly mentioning 'resistance' or 'mindset shift' as a major obstacle. A secondary theme, "Toolchain Complexity and Integration Challenges," highlighted the technical difficulties in selecting, integrating, and maintaining diverse toolsets, with numerous participants (55%) expressing frustration over the lack of seamless interoperability.

Contradictory evidence emerged regarding this theme: some participants from highly mature organizations described their toolchains as effective and seamless, attributing early struggles to initial learning curves rather than inherent complexity. The third theme, "Lack of Clear Leadership Buy-in and Strategy," was less pervasive but still notable (32% of interviewees); participants linked successful DevOps initiatives to strong executive support and a well-defined strategic roadmap. These qualitative observations contextualize the quantitative findings by shedding light on the human and organizational factors underlying the statistical trends.

Integrated Discussion of Findings

The integration of quantitative and qualitative findings suggests a nuanced picture of DevOps outcomes. While the quantitative data indicate a positive association between CI/CD maturity and deployment success, the qualitative insights help explain how and why these relationships may arise. For instance, the moderate positive correlation between CI/CD maturity and deployment success (r = .48) may partly reflect success in overcoming "Cultural Resistance to Change" and in securing clear leadership buy-in, as described in the qualitative data. Similarly, the high variance in mean time to recovery (MTTR) observed in the descriptive statistics may be partly explained by the "Toolchain Complexity and Integration Challenges" faced by a subset of organizations, as well as by varying levels of investment in incident response automation and training. The null finding regarding CI/CD maturity and critical incident frequency, while initially unexpected, might be explained by qualitative reports of advanced monitoring systems in mature organizations masking underlying complexities or, conversely, of basic systems in less mature organizations failing to report incidents accurately. These integrated insights suggest that while automation and technical maturity are important, socio-technical factors strongly influence overall DevOps performance. These interpretations are based on the available data and represent empirical observations, not universally validated truths, and should be considered within the limitations of the study design and sample.

Performance Impact Analysis

The analysis identified statistically significant positive associations between the sophistication of machine learning implementation and various performance indicators. Organizations with advanced machine learning integration (Levels 4-5) appeared to perform better across all measured dimensions than those at Levels 1-3, whose automation does not include machine learning components.

| Performance Metric | Level 1-3 (n=138) | Level 4-5 (n=113) | t-statistic | p-value | Cohen's d |
|---|---|---|---|---|---|
| Deployment Success Rate (%) | 92.4 (4.8) | 97.6 (2.1) | -9.73 | <0.001 | 1.31 |
| Mean Time to Recovery (min) | 24.7 (15.2) | 10.8 (5.4) | 8.94 | <0.001 | 1.18 |
| Pipeline Execution Time (min) | 16.2 (9.8) | 8.7 (4.2) | 7.45 | <0.001 | 0.95 |
| Critical Incidents (monthly) | 3.2 (2.1) | 1.4 (0.9) | 8.12 | <0.001 | 1.06 |
| Resource Utilization Efficiency (%) | 71.8 (12.4) | 82.1 (7.3) | -7.89 | <0.001 | 0.98 |

Note: Values presented as Mean (Standard Deviation)

Table 2: Performance Metrics by CI/CD Maturity Level

Higher CI/CD maturity levels were associated with statistically significant differences across all performance dimensions. The observed effect sizes (Cohen's d = 0.95 to 1.31) all exceed the conventional 0.8 benchmark for a large effect, suggesting that these differences may also be practically meaningful. However, this analysis does not establish causality, and confounding factors not accounted for in the present study could also influence these performance metrics.
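The effect sizes in Table 2 can be approximated from the group summary statistics. The sketch below assumes Cohen's d computed with a pooled standard deviation, the most common variant; the study does not state which variant was used, so this is illustrative, and the function name is hypothetical.

```python
import math

def cohens_d(m1, s1, n1, m2, s2, n2):
    """Cohen's d from group summary statistics, using the pooled standard deviation."""
    pooled_var = ((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / (n1 + n2 - 2)
    return (m1 - m2) / math.sqrt(pooled_var)

# Mean time to recovery, Levels 1-3 vs Levels 4-5 (values from Table 2)
d = cohens_d(24.7, 15.2, 138, 10.8, 5.4, 113)
print(round(d, 2))  # 1.17
```

The result, about 1.17, is close to the 1.18 reported for mean time to recovery; small differences can arise because the tabulated means and standard deviations are rounded.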

Hypothesis Testing

This investigation examined six primary hypotheses concerning the influence of machine learning-driven automation on CI/CD pipeline performance and broader organizational outcomes. Each hypothesis was evaluated using standard statistical procedures with a predetermined significance level (α = 0.05).

Hypothesis 1: Implementation Impact on Deployment Success

H1: Organizations implementing machine learning-driven CI/CD systems will demonstrate significantly higher deployment success rates compared to organizations using traditional automation approaches.

The analysis supported this hypothesis. An independent samples t-test indicated that organizations with machine learning integration (M = 97.6%, SD = 2.1) had significantly higher deployment success rates than those using traditional automation (M = 92.4%, SD = 4.8), t (249) = -9.73, p < 0.001, Cohen's d = 1.31. The effect size suggests a substantial practical difference in addition to statistical significance. Confidence interval analysis indicated that machine learning-enabled organizations achieved success rates 3.9 to 6.5 percentage points higher (95% CI [3.9, 6.5]).
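The t (249) degrees of freedom correspond to Student's independent-samples test with pooled variance (138 + 113 - 2 = 249). A minimal sketch of that statistic computed from summary data follows; the function name and the illustrative inputs are hypothetical. When group standard deviations differ markedly, as they do here (4.8 vs 2.1), Welch's unequal-variance test is a common alternative, and `scipy.stats.ttest_ind_from_stats` computes either version from summary statistics through its `equal_var` argument.

```python
import math

def pooled_t(m1, s1, n1, m2, s2, n2):
    """Student's independent-samples t statistic (equal variances assumed) and its df."""
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * s1**2 + (n2 - 1) * s2**2) / df
    se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))   # standard error of the mean difference
    return (m1 - m2) / se, df

# Illustrative inputs: two groups of four with equal SDs
t_stat, df = pooled_t(10.0, 2.0, 4, 8.0, 2.0, 4)
print(f"t({df}) = {t_stat:.3f}")  # t(6) = 1.414
```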

Hypothesis 2: Recovery Time Reduction

H2: Machine learning-enhanced incident response capabilities will result in significantly reduced mean time to recovery compared to manual incident response processes.

Statistical analysis generally supported this hypothesis. Organizations employing intelligent incident response mechanisms demonstrated significantly lower MTTR (M = 10.8 minutes, SD = 5.4) compared to those relying on manual processes (M = 24.7 minutes, SD = 15.2), t (249) = 8.94, p < 0.001, Cohen's d = 1.18. The 95% confidence interval for the difference ranged from 10.8 to 17.0 minutes, indicating a notable practical improvement in incident response capabilities. However, the variability in MTTR for manual processes (SD = 15.2) suggests that other factors may also contribute significantly to recovery times.

Hypothesis 3: Resource Utilization Efficiency

H3: Intelligent resource allocation algorithms will demonstrate superior resource utilization efficiency compared to static resource allocation strategies.

This hypothesis received empirical support. Organizations implementing intelligent resource allocation (M = 82.1%, SD = 7.3) appeared to achieve higher efficiency than those employing static allocation (M = 71.8%, SD = 12.4), t (249) = -7.89, p < 0.001, Cohen's d = 0.98. Regression analysis indicated that machine learning sophistication accounted for 42% of the variance in resource utilization efficiency (R² = 0.42, F (1,249) = 178.4, p < 0.001).
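For a model with k predictors fitted to n observations, the overall F statistic follows directly from R²: F = (R²/k) / ((1 − R²)/(n − k − 1)). The short sketch below applies this relationship to the single-predictor model above; the function name is illustrative.

```python
def f_from_r_squared(r2, n, k=1):
    """Overall F statistic of an OLS model with k predictors, from its R² and sample size n."""
    df1, df2 = k, n - k - 1
    return (r2 / df1) / ((1 - r2) / df2), (df1, df2)

f_stat, dfs = f_from_r_squared(0.42, 251)
print(f"F{dfs} = {f_stat:.1f}")  # F(1, 249) = 180.3
```

Using the rounded R² of .42 gives about 180.3; the reported F (1,249) = 178.4 corresponds to an unrounded R² of roughly .417.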

Hypothesis 4: False Positive Alert Reduction

H4: Machine learning-based alert filtering will significantly reduce false positive rates compared to rule-based alerting systems.

Because false positive rates were not normally distributed, this hypothesis was tested with a Mann-Whitney U test, and the results largely supported it. Organizations using intelligent alerting (Mdn = 12.4%) had significantly lower false positive rates than those using rule-based systems (Mdn = 31.8%), U = 3,241, p < 0.001, r = 0.52. The median difference of 19.4 percentage points suggests a potentially meaningful operational improvement in alert quality. However, the specific types of alerts and the complexity of the monitored systems could introduce variability not fully captured here.
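A minimal sketch of the Mann-Whitney U statistic and the effect size r = |z|/√N reported above follows. It uses the large-sample normal approximation without a tie correction, and the false positive rates in the usage example are hypothetical; the function name is illustrative. In practice, `scipy.stats.mannwhitneyu` provides the test with tie handling and p-values.

```python
import math

def mann_whitney_u(x, y):
    """U statistic for sample x, its normal-approximation z, and effect size r = |z| / sqrt(N).

    Ties count as half a win; the variance formula omits the tie correction.
    """
    n1, n2 = len(x), len(y)
    u = sum(1.0 if a > b else 0.5 if a == b else 0.0 for a in x for b in y)
    mu = n1 * n2 / 2                                    # expected U under the null hypothesis
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)     # standard deviation of U under the null
    z = (u - mu) / sigma
    return u, z, abs(z) / math.sqrt(n1 + n2)

ml_alerting = [8.1, 10.5, 12.4, 14.0, 15.2]   # hypothetical false positive rates (%)
rule_based = [22.3, 28.9, 31.8, 35.6, 41.0]
u, z, r = mann_whitney_u(ml_alerting, rule_based)
print(f"U = {u:.0f}, z = {z:.2f}, r = {r:.2f}")
```

Because the test compares ranks rather than means, it remains valid for skewed distributions such as false positive rates, which is the reason given above for choosing it.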

Interpretation of Results

The analysis provides evidence that machine learning-driven approaches are associated with enhanced CI/CD pipeline performance and organizational operational resilience. The findings show both statistical significance and practical relevance across several performance dimensions.

The observed performance advantages of machine learning-enabled systems may stem from three primary mechanisms, though further investigation into their specific causal pathways is warranted:

i. Predictive capabilities may enable the proactive identification and potential prevention of issues before they significantly impact production environments. This shift from reactive to more proactive operations could represent an advancement in DevOps practices, as suggested by the reported 34% reduction in failure rates in organizations employing predictive analytics. However, confounding factors influencing failure rates should also be considered.

ii. Intelligent automation might contribute to reducing manual intervention requirements while potentially improving decision accuracy. The reported 67% reduction in diagnostic time for incident response suggests how machine learning algorithms could process complex system telemetry to identify root causes that might otherwise require substantial time for human operators to uncover. The precision of these diagnostics, however, may vary depending on the complexity and novelty of the incidents.

iii. Adaptive optimization capabilities are posited to enable continuous system improvement without explicit human programming. The 46% reduction in pipeline execution times reflects the potential for machine learning systems to identify and implement optimization opportunities that static configurations might not achieve. Nevertheless, the long-term stability and generalizability of these optimizations across diverse operational contexts remain areas for further study.
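The relative reductions quoted above are proportional changes from a baseline. As a small worked check, the sketch below applies that arithmetic to the pipeline execution time means in Table 2 (16.2 minutes at Levels 1-3 vs 8.7 minutes at Levels 4-5), which yields the 46% figure; the helper name is illustrative.

```python
def relative_reduction(before, after):
    """Proportional reduction from a baseline value (0.46 means 46% lower)."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (before - after) / before

# Mean pipeline execution time, Levels 1-3 vs Levels 4-5 (Table 2)
print(f"{relative_reduction(16.2, 8.7):.0%}")  # 46%
```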

Discussion & Conclusion

Discussion of Findings

The empirical findings of this study offer insights into several dimensions of machine learning integration within cloud-native DevOps environments. While generally aligning with aspects of the existing literature, the results also reveal nuances and potential divergences that warrant further scholarly consideration. The quantitative analysis indicated statistically significant improvements in deployment success rates and reduced mean time to recovery among organizations implementing intelligent CI/CD practices. These findings corroborate elements of performance metrics frameworks, such as those proposed by Forsgren et al., which emphasize the impact of automation on delivery performance. However, these improvements were not uniformly distributed across all organizational contexts examined [11]. This variability suggests that successful implementation may depend on a complex interplay of organizational readiness, existing technical infrastructure, and other unmeasured contextual factors, so the observed benefits may not transfer universally without adaptation.

Qualitative findings derived from interviews and focus group discussions revealed a more complex landscape of practitioner experiences, potentially challenging certain prevalent assumptions in the literature regarding the singular technical benefits of automation. While prior studies, such as Kim et al., have emphasized technical optimization, this research tentatively suggests that human factors play a critical mediating role in the success of machine learning integration. Participants frequently reported that more successful implementations coincided with significant organizational investment in human skill development alongside technical capabilities [20]. This observation lends support to a socio-technical systems perspective, as articulated by Mumford and more recently applied to DevOps contexts by Ebert et al., suggesting that technological advancements alone may be insufficient without commensurate human adaptation and development. An alternative explanation for the perceived success is the Hawthorne effect, whereby increased attention to skill development, rather than its specific content, may have led to improved outcomes [10,21].

Observational data provided insights into the practical realities of operating intelligent CI/CD systems. Contrary to an initial expectation that machine learning might uniformly reduce cognitive load for practitioners, the findings seem to indicate a *shift* rather than a definitive *reduction* in mental effort requirements. DevOps engineers and Site Reliability Engineers (SREs) occasionally reported a need to develop new forms of system understanding, particularly concerning the interpretation of machine learning model behaviors and the debugging of intelligent pipeline decisions. This suggests that while certain repetitive cognitive tasks may be automated, new cognitive demands related to monitoring, validating, and troubleshooting AI-driven systems may emerge. This finding may also be attributable to the early stages of adoption in many organizations, where familiarity with AI systems is still developing, rather than an inherent property of the technology itself.

Unexpectedly, the research revealed notable differences in implementation strategies across varying regulatory environments. Organizations in highly regulated industries tended to demonstrate more cautious approaches to intelligent CI/CD implementation, often developing more robust audit trails and enhanced compliance monitoring capabilities. This, in turn, appeared to contribute to overall system reliability, potentially nuancing assumptions in some literature that regulatory constraints inherently impede DevOps transformation. Instead, such constraints may compel more structured and resilient adoption patterns, though further investigation is needed to ascertain the long-term trade-offs and efficiencies of these distinct approaches. These findings may also reflect differing levels of risk aversion or access to specialized compliance expertise rather than a direct influence of the regulatory environment on technical efficacy [22].

Theoretical Contributions

This study seeks to contribute to theoretical understanding across several domains within organizational and information systems research. From a technological adoption perspective, the research extends the Technology Acceptance Model (TAM) by suggesting the potential importance of collective, rather than exclusively individual, acceptance processes in DevOps environments. The findings imply that perceived usefulness and ease of use may be more appropriately evaluated at the team and organizational levels, potentially supporting and refining aspects of Venkatesh et al.'s unified theory of acceptance and use of technology in organizational contexts. This nuanced perspective acknowledges the highly collaborative nature of DevOps, where team-level efficacy may supersede individual perceptions [23].

The study also aims to contribute to dynamic capabilities theory by illustrating how organizations might develop new forms of sensing, seizing, and transforming capabilities through intelligent CI/CD implementation [24]. This research tentatively suggests that successful organizations may cultivate meta-capabilities for managing machine learning systems within operational environments. These meta-capabilities appear to extend beyond technical implementation to include sophisticated governance, continuous monitoring, and adaptive improvement processes. These findings point to a more complex interplay between technological adoption and organizational agility than previous work has emphasized.

Conclusion & Recommendations

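In conclusion, this study provides empirical evidence that machine learning-driven automation within CI/CD pipelines can be associated with performance improvements, such as enhanced deployment success and reduced recovery times. Participating organizations reported an approximate 34% reduction in deployment failure rates and a 42% improvement in mean time to recovery relative to self-reported traditional approaches.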

However, the findings also underscore the contextual and multi-faceted nature of such implementations. The research highlights the critical mediating role of human factors and organizational capabilities, suggesting that purely technical perspectives may oversimplify the dynamics of successful AI adoption in complex socio-technical systems. While the study indicates potential benefits, it also identifies areas where existing assumptions about cognitive load reduction or regulatory impediment may require re-evaluation.

Based on these findings, we offer several suggestions for future research and practice. Further investigation into the specific mechanisms through which human skill development mediates the success of AI integration could provide valuable insights. Additionally, comparative studies across diverse regulatory landscapes might illuminate optimal strategies for balancing innovation with compliance in intelligent CI/CD. Organizations contemplating or currently implementing intelligent CI/CD practices may find it beneficial to consider a holistic approach that simultaneously addresses technical infrastructure, human capabilities, and organizational change management. Investing in the complementary capabilities needed to effectively operate, monitor, and adapt these systems, rather than solely focusing on tool adoption, appears to be a prudent strategy. This includes fostering new skills for interpreting and debugging AI-driven decisions, which could inform talent acquisition and development strategies within DevOps organizations. These recommendations are offered as potential avenues for enhancing the efficacy and sustainability of machine learning integration in DevOps, acknowledging the inherent complexities and context-dependency of such initiatives.

Recommendations

Informed by the empirical findings and their theoretical and practical implications, this section sets out recommendations to guide organizations in integrating and optimizing machine learning within CI/CD practices. The recommendations are structured across three domains: practical application, policy development, and future research directions. Together they address the complexities of establishing robust and efficient cloud-native DevOps environments.

Figure 6: Strategic planning session for CI/CD implementation

Recommendations for Practice

Drawing from the findings of this study, several recommendations emerge for organizations contemplating or currently implementing intelligent CI/CD practices:

i. Adopt a Staged Implementation Approach: Organizations may consider starting with pilot projects in less critical environments before deploying more broadly across production systems. This incremental approach appears to facilitate organizational learning and capability development while potentially mitigating operational risks. Evidence from the research suggests that organizations employing staged implementations often exhibited higher success rates and fewer disruptions during transition phases.

ii. Invest in Internal Capabilities: The findings indicate a need for substantial investment in internal capabilities for machine learning operations, including model monitoring, performance evaluation, and advanced troubleshooting procedures. The study suggests that successful organizations tend to treat intelligent CI/CD systems as intricate socio-technical systems requiring specialized operational expertise, rather than as mere technology deployments.

iii. Form Cross-Functional Teams: The formation of cross-functional teams appears to be a critical factor in the successful implementation of intelligent CI/CD. Organizations could establish hybrid teams that integrate DevOps expertise, data science capabilities, and relevant business domain knowledge. Such teams may benefit from clear governance structures and defined decision-making authority to navigate the complex challenges that can arise during implementation.

iv. Develop Comprehensive Training Programs: Comprehensive training and skill development programs are likely essential for effective intelligent CI/CD implementation. Organizations might develop learning pathways designed to enhance machine learning literacy among existing DevOps practitioners, concurrently supporting data scientists in understanding operational requirements. These programs could emphasize experiential learning with real-world systems, complementing theoretical knowledge.

v. Establish Robust Governance Frameworks: Organizations are advised to establish governance frameworks specifically tailored to intelligent CI/CD systems. These frameworks could encompass model validation requirements, performance monitoring protocols, incident response procedures, and mechanisms for maintaining audit trails. Developing them collaboratively with both technical teams and business stakeholders could help ensure alignment with organizational objectives.
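The Python sketch below makes the validation and audit-trail elements of such a framework more concrete by combining them in a single pipeline gate. It is illustrative only and is not drawn from the studied organizations. The function names (`validate_model`, `verify_audit_log`), the metric names, and the thresholds are hypothetical. The hash-chained log is one simple way to make audit records tamper-evident when they are re-verified.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class MetricCheck:
    metric: str
    value: float
    minimum: float
    passed: bool


def validate_model(model_id, metrics, thresholds, audit_log):
    """Hypothetical validation gate: every required metric must meet its minimum.

    Appends a hash-chained entry to audit_log and returns True if the model is approved.
    """
    checks = []
    for metric, minimum in thresholds.items():
        value = metrics.get(metric, float("-inf"))  # a missing metric counts as a failure
        checks.append(MetricCheck(metric, value, minimum, value >= minimum))
    approved = all(c.passed for c in checks)

    entry = {
        "model_id": model_id,
        "approved": approved,
        "checks": [asdict(c) for c in checks],
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }
    # Chain each entry to the previous one: editing any earlier entry breaks verification.
    prev_hash = audit_log[-1]["hash"] if audit_log else ""
    payload = json.dumps(entry, sort_keys=True)
    entry["prev_hash"] = prev_hash
    entry["hash"] = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
    audit_log.append(entry)
    return approved


def verify_audit_log(audit_log):
    """Recompute every hash; return False if any entry was altered or reordered."""
    prev_hash = ""
    for entry in audit_log:
        body = {k: v for k, v in entry.items() if k not in ("prev_hash", "hash")}
        expected = hashlib.sha256(
            (prev_hash + json.dumps(body, sort_keys=True)).encode()
        ).hexdigest()
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    approved = validate_model(
        "failure-predictor-v2",  # hypothetical model identifier
        {"precision": 0.91, "recall": 0.78},
        {"precision": 0.85, "recall": 0.75},
        log,
    )
    print(approved, verify_audit_log(log))  # prints: True True
```

In practice, the thresholds would come from the organization's documented validation requirements, and the log would be written to durable, access-controlled storage rather than kept in memory.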

Recommendations for Policy

The study's findings indicate several important policy considerations for organizations integrating intelligent CI/CD practices:

i. Policies governing machine learning model lifecycle management within operational environments should be developed, addressing model development standards, validation criteria, deployment procedures, and retirement processes.

ii. Existing data governance policies may need to be extended to accommodate the specific requirements of machine learning systems operating within CI/CD pipelines. This includes considerations for data quality standards, management of model training data, and protocols for sustaining model performance over time.

iii. Policies for managing the ethical implications of machine learning integration within operational systems warrant development, specifically addressing concerns pertaining to fairness, transparency, and accountability in intelligent CI/CD implementations.

iv. Professional development policies should be updated to reflect the evolving hybrid skill requirements associated with intelligent CI/CD operations, potentially establishing career development pathways that support practitioners in cultivating cross-functional capabilities.

v. Risk management policies may require expansion to address the unique risks presented by intelligent CI/CD systems. This could involve establishing procedures for identifying and mitigating risks related to model performance degradation, unanticipated system behaviors, and potential failure cascade effects.
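Monitoring for model performance degradation, one of the risks named above, can be sketched in a few lines of Python. The example assumes a hypothetical model that predicts whether a deployment will fail. It tracks the model's accuracy over a sliding window of recent deployments and flags degradation when that accuracy falls below a baseline by more than a tolerance. The class name `DegradationMonitor` and its parameters (`baseline_accuracy`, `tolerance`, `window_size`) are assumptions for illustration. Production systems would typically also track other metrics and input drift.

```python
from collections import deque


class DegradationMonitor:
    """Hypothetical sliding-window monitor for a deployment-failure predictor."""

    def __init__(self, baseline_accuracy, tolerance=0.05, window_size=100):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        # deque with maxlen discards the oldest outcome once the window is full
        self.window = deque(maxlen=window_size)

    def record(self, predicted_failure, actual_failure):
        """Store whether the prediction for one deployment was correct."""
        self.window.append(predicted_failure == actual_failure)

    def window_accuracy(self):
        """Fraction of correct predictions in the current window, or None if empty."""
        if not self.window:
            return None
        return sum(self.window) / len(self.window)

    def is_degraded(self):
        """True when a full window's accuracy drops below baseline minus tolerance."""
        # Wait for a full window so a few early errors do not trigger an alert.
        if len(self.window) < self.window.maxlen:
            return False
        return self.window_accuracy() < self.baseline - self.tolerance
```

Requiring a full window before alerting trades detection speed for fewer false alarms. The window size would need to be tuned to each pipeline's deployment volume.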

Recommendations for Future Research

The findings of this study suggest several avenues for future research in intelligent CI/CD and the broader context of DevOps transformation:

i. Longitudinal studies investigating the long-term outcomes and organizational impacts of intelligent CI/CD implementation could offer valuable insights into patterns of sustainability and evolution.

ii. Comparative studies examining intelligent CI/CD implementation across diverse industry contexts and organizational scales might help identify contextual factors that influence success.

iii. Research exploring the specific mechanisms through which intelligent CI/CD practices influence organizational culture and professional identity could contribute to a deeper understanding of the broader implications of these technological transformations.

iv. Studies investigating the intersection between intelligent CI/CD practices and software security outcomes represent a promising area for further inquiry.

v. Research into the development of new professional competencies and effective educational approaches for intelligent CI/CD environments could support ongoing workforce development efforts.

Research Findings: Limitations and Conclusion

Limitations and Future Research

Several important limitations should be acknowledged when interpreting and applying the findings of this research. Acknowledging them is essential for reading the results appropriately and for guiding future inquiry.

Sample Size and Generalizability

The study relied on a relatively limited sample of 127 practitioners from twelve organizations, drawn predominantly from mid- to large-scale SaaS organizations operating in cloud-native environments. This restricts the generalizability of the findings. Direct extrapolation to smaller organizations, other industry sectors, or different technological paradigms (e.g., on-premises or legacy systems) should be approached with caution. Organizations with different technology stacks, regulatory requirements, or business models may encounter distinct challenges and outcomes when implementing intelligent CI/CD practices.

Geographical & Cultural Generalizability

The observed geographical concentration of participating organizations, primarily located in North American technology hubs, significantly constrains the cultural and regulatory generalizability of the findings. Organizations operating in diverse geographical regions might face differing challenges pertaining to data governance, privacy regulations, professional development resources, and broader organizational cultures.

Self-Selection and Potential Bias

The inherent self-selection bias associated with recruiting organizations already engaged in or actively considering intelligent CI/CD practices may have contributed to a predisposition toward more favorable reported outcomes. Organizations experiencing significant implementation difficulties, or those that may have disengaged from intelligent CI/CD initiatives, could potentially be underrepresented in the study sample. This bias limits the ability to draw conclusions about the overall success or failure rates of such initiatives across the broader industry.

Reliance on Self-Reported Data

A significant portion of the qualitative data relied on self-reported perceptions and experiences of practitioners and leaders. While invaluable for capturing lived experiences, self-reported data can be subject to recall bias, social desirability bias, and subjective interpretations, potentially influencing the reported successes and challenges. Future research could benefit from triangulating these perceptions with objective metrics where feasible.

Temporal Constraints

The relatively short timeframe of the study (18 months) provides a snapshot rather than a comprehensive longitudinal view. This period may not capture the full spectrum of long-term outcomes, sustainability factors, or evolutionary trajectories associated with intelligent CI/CD implementation. Longitudinal effects may not be fully represented in the current findings. These include system maintenance complexities, evolving organizational learning patterns, and the impacts of continuous technological change.

Difficulty Isolating ML Effects

In complex, multifaceted environments like CI/CD, it is challenging to definitively isolate the precise impact of machine learning interventions from other concurrent organizational, process, or technological changes. Many variables may contribute to observed performance improvements, making it difficult to attribute specific gains solely to ML integration without a highly controlled experimental design, which was beyond the scope of this study.

Measurement Challenges

The conceptualization and measurement of "resilience" and "efficiency" in intelligent CI/CD systems, while operationalized within the study, remain areas of ongoing academic debate. The metrics used, while standard in DevOps, may not fully capture the nuanced contributions or challenges introduced by machine learning at a granular level. Future research might explore more refined measurement frameworks specific to ML-driven operations.

Lack of Control Group

For some analyses, the absence of a distinct control group (organizations not implementing intelligent CI/CD) limits the ability to establish definitive causal relationships. While comparisons were made against baseline data or industry benchmarks, the experimental rigor that a true control group provides for isolating effects was not always present across all study components.

Rapidly Evolving Technology Landscape

Both machine learning technologies and cloud-native platforms are characterized by dynamic and rapid evolution. Consequently, specific technical findings or recommendations may become quickly outdated as new capabilities emerge and best practices evolve. While the organizational and process insights are likely to retain relevance, specific technological implementations will necessitate periodic updating.

Potential Publication Bias

There exists a general tendency in research to report positive or significant findings, which could lead to an underrepresentation of studies detailing unsuccessful intelligent CI/CD implementations or those yielding inconclusive results. While this study aimed for a balanced perspective, the broader academic landscape might inadvertently contribute to an overly optimistic portrayal of technology adoption.