Research Article - (2024) Volume 5, Issue 2

Human-Trust Based Feedback Control (HTBFC) Five-Dimensional Manifold Info-Geometric Analysis and how HTBFC Impacts Robotics

Received Date: Mar 08, 2024 / Accepted Date: Mar 30, 2024 / Published Date: Apr 10, 2024

Copyright: ©2024 Ismail A Mageed. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Citation: Mageed, I. A. (2024). Human-Trust Based Feedback Control (HTBFC) Five-Dimensional Manifold Info-Geometric Analysis and how HTBFC Impacts Robotics. Adv Mach Lear Art Inte, 5(2), 01-11.

Abstract

This work examines the info-geometrics of the five-dimensional HTBFC manifold using effective information geometry (IG) techniques. By merging the IG framework into HTBFC, its performance can be analysed from a novel relativistic approach. Subcases of manifolds in two and three dimensions are studied. Because of this study, human-machine interactions (HMIs) can now be unified through IG in a novel way. The essential robotic applications of HTBFC are also presented. The conclusions include a few newly identified open problems and suggestions for further study.

Keywords

Human-Machine Interactions (HMIs), Human-Trust Based Feedback Control (HTBFC), Robotics

Introduction

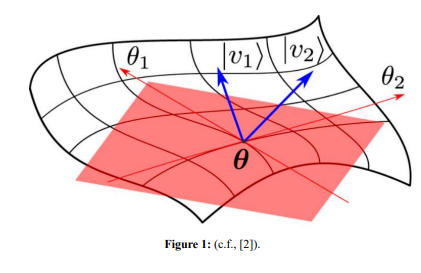

Information geometry (IG) is a state-of-the-art geometric methodology for model analysis and geometric visualization. IG applies to a wide spectrum of fields, including new disciplines such as machine learning [1]. Even more intriguingly, statistical manifolds (SMs) have been studied using IG. Figure 1 illustrates a statistical manifold SM(θ) [2], θ ∈ ℝⁿ.

Figure 1: A statistical manifold SM(θ) (c.f., [2])

In this context, IG involves studying statistical manifolds, whose points are probability measures. The Fisher information metric (FIM) is a key concept in IG: it equips a smooth statistical manifold with a Riemannian metric that quantifies the informative difference between measurements [3,4].
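To make the role of the FIM concrete, the following minimal sketch evaluates it numerically for a univariate Gaussian family with coordinates θ = (μ, σ). The Gaussian family is chosen only because its FIM is known in closed form; it is not the HTBFC manifold studied in this paper. The sketch assumes the standard definition g_ij(θ) = E[∂_i log p(x; θ) ∂_j log p(x; θ)], and the function names are illustrative rather than taken from any cited work.

```python
import math


def gaussian_pdf(x, mu, sigma):
    """Density of Normal(mu, sigma^2) at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def gaussian_score(x, mu, sigma):
    """Gradient of log p(x; mu, sigma) with respect to (mu, sigma)."""
    z = (x - mu) / sigma
    return (z / sigma, (z * z - 1.0) / sigma)


def fisher_information(mu, sigma, half_width=12.0, steps=20000):
    """Approximate g_ij = E[d_i log p * d_j log p] with the trapezoidal rule.

    The integration range is mu +/- half_width * sigma, outside of which
    the Gaussian density is negligible.
    """
    lo = mu - half_width * sigma
    hi = mu + half_width * sigma
    h = (hi - lo) / steps
    g = [[0.0, 0.0], [0.0, 0.0]]
    for k in range(steps + 1):
        x = lo + k * h
        weight = h * (0.5 if k in (0, steps) else 1.0)
        p = gaussian_pdf(x, mu, sigma)
        s = gaussian_score(x, mu, sigma)
        for i in range(2):
            for j in range(2):
                g[i][j] += weight * p * s[i] * s[j]
    return g


# Closed form for this family: [[1/sigma^2, 0], [0, 2/sigma^2]].
print(fisher_information(0.0, 2.0))
```

A larger σ gives smaller entries, which matches the intuition that a flatter density makes nearby parameter values harder to tell apart from data.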

The current exposition establishes the first-ever HTBFC info-geometric analysis. The motivation behind this study is the available probabilistic distribution of human response time [5]. This drives our line of investigation into reimagining Human-Machine Interactions (HMIs) through IG.

The paper is organised as follows. Section II provides IG definitions. Section III summarises the background of HTBFC. Section IV presents the primary results by calculating the FIM of the HTBFC manifold and its inverse. Section V highlights the important contribution that HTBFC has made to the advancement of robotics. Finally, Section VI presents directions for further research together with a few challenging open problems.

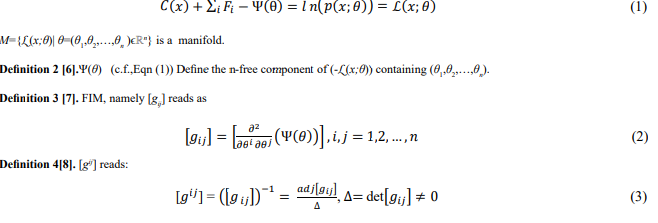

Definitions

Definition 1 [6]

1. M = {p(x,θ) | θ ∈ Θ} is an SM with a probability density function p(x,θ) and coordinates (θ1, θ2, ..., θn) ∈ Θ.

2. Θ = {pθ | θ ∈ Θ} is given by

.

The Information Geometrics of the HTBFC

A. Background: HMIs and HTBFC

Despite technological advancements, there is still a great need for humans to supervise and intervene in automated systems. Future HMIs will therefore require efficient coordination, which underscores the importance of human trust in automation for achieving it [9-13].

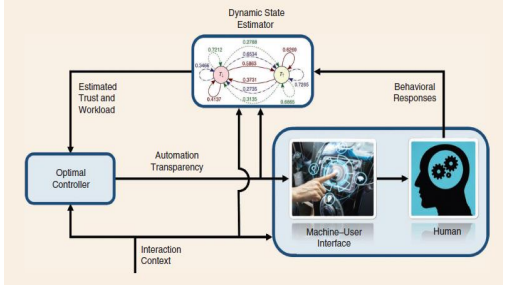

HTBFC is crucial in interactions with automation. To improve this interaction, the user interface can be augmented with more information, which is called automation transparency. Higher levels of transparency increase access to information and help in decision making, leading to an increase in trust. However, high transparency also increases workload and may lead to fatigue that reduces performance. Therefore, for optimal or near-optimal performance, adaptive automation should vary transparency in response to changes in human trust and workload, using a dynamic model of human behavior implemented through control rules [14-17].
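The trade-off described above can be illustrated with a toy rule-based selector. This is only a sketch under loud assumptions: the three level names, the normalisation of trust and workload to [0, 1], and both thresholds are hypothetical, and the rule is not the control policy of the cited works, which rely on a dynamic model of human behavior.

```python
# Hypothetical ordered transparency levels (assumption, for illustration only).
LEVELS = ("low", "medium", "high")


def next_transparency(current, trust, workload,
                      trust_low=0.4, workload_high=0.7):
    """Choose the next transparency level from trust and workload estimates.

    trust and workload are assumed to be estimates normalised to [0, 1];
    the thresholds trust_low and workload_high are illustrative values.
    """
    idx = LEVELS.index(current)
    if workload > workload_high:
        # High workload: reduce the information shown to limit fatigue.
        idx = max(idx - 1, 0)
    elif trust < trust_low:
        # Low trust with manageable workload: show more information.
        idx = min(idx + 1, len(LEVELS) - 1)
    return LEVELS[idx]


print(next_transparency("medium", trust=0.2, workload=0.3))
print(next_transparency("medium", trust=0.9, workload=0.9))
```

Workload is checked first in this sketch, so transparency is never raised while the operator is already overloaded; a different priority would be an equally valid design choice.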

Researchers have studied human behavior and developed models of trust, showing that transparency influences both trust and workload. However, there is currently no model that sufficiently captures how human trust and workload are dynamically affected by automation transparency. This gap must be addressed before control policies based on changes in transparency can be used to improve interactions between humans and machines [18,19]. Figure 2 illustrates a trust-based feedback control approach that uses automation transparency to improve context-specific performance targets during HMIs [5].

Figure 2: A diagram that shows how humans and machines interact with each other [5]

The importance of human trust in automation is demonstrated by its influence on overall performance [5]. Both a lack of trust and overtrust can lead to negative consequences, so it is important to calibrate human trust for optimal interactions with machines. The article presents a framework that uses probabilistic modeling and behavioral data to adjust the amount and utility of information provided by automation based on human workload dynamics. This approach has been experimentally validated with positive results, suggesting that dynamically updating transparency levels can optimize team performance in real-time adaptive automation systems for humans interacting with intelligent decision aid systems.

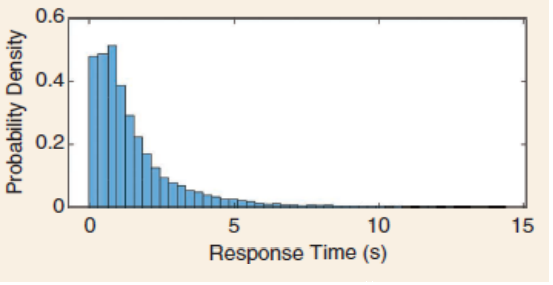

The authors of [5] have explored response time and how it is analyzed in experimental psychology. Response time refers to the duration between a stimulus being presented and a human's response, which can vary from trial to trial for the same person. Because the response time distribution is unimodal and positively skewed, it cannot be represented as a Gaussian distribution using just a mean and a variance. One of the most common models is the exponentially modified Gaussian (ex-Gaussian) distribution (c.f., figure 3), which is formed by convolving an exponential and a Gaussian component, that is, by summing an independent Gaussian and an independent exponential random variable. Standard distributions such as the gamma or log-normal have also been used in the literature.

Figure 3: The shape of a response time distribution, which is used to measure how long it takes for humans to respond to stimuli [5]. The distribution has a skewed, bell-shaped curve, with most responses occurring quickly and fewer taking longer.

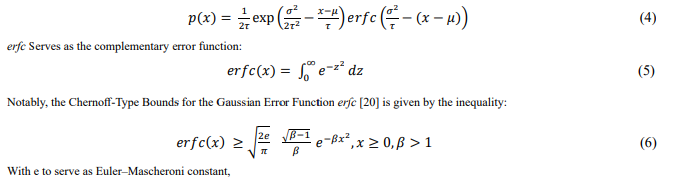

The ex-Gaussian distribution is a statistical model used to describe human response time [5]. The distribution has three parameters: μ and σ represent the mean and standard deviation of the Gaussian component, and τ defines the decay rate of the exponential component, with σ,τ>0. Researchers have attributed these components to decision-making processes and residual processes in humans, but this idea remains unproven. However, it has been found that the ex-Gaussian distribution fits response time data more accurately than other distributions, such as the gamma or log-normal distributions. With σ,τ>0, the ex-Gaussian distribution is characterized by the probability density function

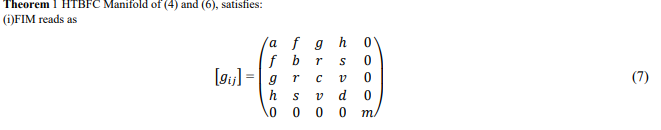
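As a hedged illustration of the ex-Gaussian model, the sketch below evaluates the commonly used density f(x) = (1/(2τ)) exp((μ − x)/τ + σ²/(2τ²)) erfc((μ + σ²/τ − x)/(√2 σ)) and checks two of its basic properties numerically. It assumes that τ is the mean (time constant) of the exponential component, which is a common convention; if τ is read as a rate instead, 1/τ should be passed in its place. The function names and parameter values are illustrative and are not fitted to any data from the cited studies.

```python
import math


def exgaussian_pdf(x, mu, sigma, tau):
    """Ex-Gaussian density of X = G + E at x.

    G ~ Normal(mu, sigma^2) and E ~ Exponential with mean tau (assumption:
    tau is a time constant, not a rate), with G and E independent.
    """
    if sigma <= 0.0 or tau <= 0.0:
        raise ValueError("sigma and tau must be positive")
    exponent = (mu - x) / tau + sigma * sigma / (2.0 * tau * tau)
    tail = math.erfc((mu + sigma * sigma / tau - x) / (math.sqrt(2.0) * sigma))
    return math.exp(exponent) * tail / (2.0 * tau)


def trapezoid(f, lo, hi, steps=20000):
    """Composite trapezoidal rule for the integral of f over [lo, hi]."""
    h = (hi - lo) / steps
    total = 0.5 * (f(lo) + f(hi))
    for k in range(1, steps):
        total += f(lo + k * h)
    return total * h


# Illustrative parameter values in seconds (hypothetical, not fitted).
mu, sigma, tau = 0.5, 0.1, 0.3
area = trapezoid(lambda x: exgaussian_pdf(x, mu, sigma, tau), -1.0, 10.0)
mean = trapezoid(lambda x: x * exgaussian_pdf(x, mu, sigma, tau), -1.0, 10.0)
print(area, mean)  # area should be close to 1, mean close to mu + tau
```

The direct formula can overflow for very small τ relative to σ, so a production implementation would typically work in log space; the sketch keeps the plain form for readability.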

The Information Geometrics of the HTBFC Manifold

Based on the above analysis, [gij] always exists in the two-dimensional case, whereas in the three-dimensional case its existence is constrained by τ ≠ 4 + √19, where τ, μ, and λ are positive real numbers. It is expected that the four-dimensional case will impose more restrictions on the existence of [g^

HTBFC Applications to Robotics

Researchers ran an experiment in which participants engaged with a simulation comprising numerous reconnaissance missions, in order to better understand HTBFC and workload in a decision-aid system [16]. Participants had to search buildings to assess their level of safety or danger based on the presence of armed men. To maximize both speed and safety, a decision-aid robot made suggestions regarding whether to wear light or heavy armor during the search. The study examined the effects of various system transparency levels on user workload, performance, and human-robot interaction trust.

Participants were helped by a robot that varied in transparency during each mission of the experiment [16]. The low-transparency robot suggested armor and disclosed whether there were any armed assailants. As figure 4 [16] illustrates, it is important to remember that the degrees of transparency may differ according to automation, context, and viability.

Before the main experiment started, participants completed a six-trial instructional mission so they could get acquainted with the three degrees of transparency and the research interface. Every participant was assigned the identical instructional mission. To mitigate potential biases and the impact of variables such as task completion order, participants were randomly assigned to the missions for every transparency level.

As part of the study, participants had to evaluate robot reports on whether there were shooters inside a facility. Seventy percent of the time, the robot's suggestions were accurate, and the errors were either misses or false alarms; see figure 5 (c.f., [16]).

Figure 5: The displayed information is relevant for understanding the timing and presentation of data during the trial, providing insights into the experimental setup and the temporal aspects of the study.

Most existing trust models in human-robot interaction (HRI) are designed for specific types of interactions or robotic agents, making it difficult to compare their accuracy [21]. This calls for the creation of a general HRI trust model that can be used in a variety of robotic domains, doing away with the necessity to create unique models of trust for each one.

Within HRI’s domain, trust is a multifaceted notion that can be divided into two categories: relation-based trust and performance-based trust [22-24]. A more encompassing definition of trust is required since current definitions from other domains do not adequately convey the multifaceted nature of trust within HRI.

Additionally, while there are studies on trust violation and repair, there is a need for research exploring the dynamics of trust loss and repair over time, particularly in relation to different types of failures and trust repair strategies. This raises issues about the efficacy of trust-repair techniques in long-term interactions as well as the effect of growing accustomed to a robotic agent on trust loss resulting from robot failure [22-24]. Additionally, it draws attention to the dearth of trust models that are currently available and applicable to a variety of robotic activities and domains, which makes it more difficult to assess and contrast trust models. Notably, this suggests the potential use of trust measurement methods from other fields, such as psychology and sociology, to accurately assess trust in human-robot interaction and develop trust models independent of various influencing factors.

Existing trust models in HRI are often specific to certain types of interactions, tasks, or robotic agents, making them difficult to compare or apply to different domains [22-24]. This lack of a general trust model hinders the evaluation and development of trust models in HRI. It would be unnecessary to develop new models for every new robotic activity if there was a universal trust model that could be used across different robotic activities and domains. Many disciplines, including psychology, sociology, and physiology, are interested in the concept of trust. In these domains, trust is measured using a variety of indicators, including objective evaluations like trust games and physiological measurements. By using these measurement techniques, trust in HRI can be assessed more accurately, circumventing the drawbacks of existing techniques, and aiding in the development of trust models that are not influenced by a variety of variables.

Conclusion and Future Works

The goal of the current study is to use robust IG methods to assess HTBFC info-geometrically. IG is incorporated into HTBFC theory to enable relativistic analysis of its associated manifold and to offer fresh insights into HTBFC performance. The open problems arising from this study are:

• How feasible is it to calculate the inverse FIM (c.f., (19)) in four dimensions?

• Having solved open problem 1, can we proceed with this revolutionary IG analysis to obtain the exact form of [gij] (c.f., (19)) for the five-dimensional HTBFC manifold?

• Assuming the solvability of open problem 2, is it possible to employ this influential IG approach to analyse the dynamics of Human-Driven vehicles?

Future research pathways include finding answers to these open problems.

References

1. Mageed, I. A., Zhou, Y., Liu, Y., & Zhang, Q. (2023, August). Towards a Revolutionary Info-Geometric Control Theory with Potential Applications of Fokker Planck Kolmogorov (FPK) Equation to System Control, Modelling and Simulation. In 2023 28th International Conference on Automation and Computing (ICAC) (pp. 1-6). IEEE.

2. Belliardo, F., & Giovannetti, V. (2021). Incompatibility in quantum parameter estimation. New Journal of Physics, 23(6), 063055.

3. Eguchi, S., & Komori, O. (2022). Minimum Divergence Methods in Statistical Machine Learning.

4. Mageed, I. A., Zhang, Q., Akinci, T. C., Yilmaz, M., & Sidhu, M. S. (2022, October). Towards Abel Prize: The Generalized Brownian Motion Manifold's Fisher Information Matrix With Info-Geometric Applications to Energy Works. In 2022 Global Energy Conference (GEC) (pp. 379-384).

5. Yang, X. J., Schemanske, C., & Searle, C. (2021). Toward quantifying trust dynamics: How people adjust their trust after moment-to-moment interaction with automation. Human Factors, 65(5), 862-878.

6. Kondor, R., & Trivedi, S. (2018). On the generalization of equivariance and convolution in neural networks to the action of compact groups. In International conference on machine learning (pp. 2747-2755). PMLR.

7. Mageed, I. A., Yin, X., Liu, Y., & Zhang, Q. (2023). Z(a,b) of the Stable Five-Dimensional M/G/1 Queue Manifold Formalism's Info-Geometric Structure with Potential Info-Geometric Applications to Human Computer Collaborations and Digital Twins. In 28th International Conference on Automation and Computing (ICAC), Birmingham, United Kingdom (pp. 1-6).

8. Mageed, I. A., & Kouvatsos, D. D. (2021, February). The Impact of Information Geometry on the Analysis of the Stable M/G/1 Queue Manifold. In ICORES (pp. 153-160).

9. Kousi, N., Dimosthenopoulos, D., Aivaliotis, S., Michalos, G., & Makris, S. (2022). Task allocation: Contemporary methods for assigning human–robot roles. The 21st Century Industrial Robot: When Tools Become Collaborators, 215-233.

10. Soga, L. R., Vogel, B., Graça, A. M., & Osei-Frimpong, K. (2021). Web 2.0-enabled team relationships: A perspective. European Journal of Work and Organizational Psychology, 30(5), 639-652.

11. Simon, L., Guérin, C., Rauffet, P., Chauvin, C., & Martin, É. (2023). How humans comply with a (potentially) faulty robot: Effects of multidimensional transparency. IEEE Transactions on Human-Machine Systems.

12. Lee, J. D., & See, K. A. (2004). Trust in automation: Designing for appropriate reliance. Human factors, 46(1), 50-80.

13. Sheridan, T. B., & Parasuraman, R. (2005). Human-automation interaction. Reviews of human factors and ergonomics, 1(1), 89-129.

14. Skraaning, G., & Jamieson, G. A. (2021). Human performance benefits of the automation transparency design principle: Validation and variation. Human factors, 63(3), 379-401.

15. O’Neill, T., McNeese, N., Barron, A., & Schelble, B. (2022). Human–autonomy teaming: A review and analysis of the empirical literature. Human factors, 64(5), 904-938.

16. Akash, K., McMahon, G., Reid, T., & Jain, N. (2020). Human trust-based feedback control: Dynamically varying automation transparency to optimize human-machine interactions. IEEE Control Systems Magazine, 40(6), 98-116.

17. Han, L., Du, Z., Wang, S., & Chen, Y. (2022). Analysis of traffic signs information volume affecting driver’s visual characteristics and driving safety. International journal of environmental research and public health, 19(16), 10349.

18. Akash, K., Polson, K., Reid, T., & Jain, N. (2019). Improving human-machine collaboration through transparency-based feedback–part I: Human trust and workload model. IFAC-PapersOnLine, 51(34), 315-321.

19. Akash, K., Reid, T., & Jain, N. (2019). Improving human-machine collaboration through transparency-based feedback–part II: Control design and synthesis. IFAC-PapersOnLine, 51(34), 322-328.

20. Han, Q., & Kato, K. (2022). Berry–Esseen bounds for Chernoff-type nonstandard asymptotics in isotonic regression. The Annals of Applied Probability, 32(2), 1459-1498.

21. Khavas, Z. R., Ahmadzadeh, S. R., & Robinette, P. (2020). Modeling trust in human-robot interaction: A survey. In International conference on social robotics (pp. 529-541). Cham: Springer International Publishing.

22. Khavas, Z. R. (2021). A review on trust in human-robot interaction.

23. Wang, Q., Liu, D., Carmichael, M. G., Aldini, S., & Lin, C. T. (2022). Computational model of robot trust in human co-worker for physical human-robot collaboration. IEEE Robotics and Automation Letters, 7(2), 3146-3153.

24. Aldini, S., Akella, A., Singh, A. K., Wang, Y. K., Carmichael, M., Liu, D., & Lin, C. T. (2019). Effect of mechanical resistance on cognitive conflict in physical human-robot collaboration. In 2019 international conference on robotics and automation (ICRA) (pp. 6137-6143). IEEE.