Research Article - (2022) Volume 6, Issue 2

Findel: A Deep Learning Approach To Efficient Artifact Removal from Cancer Genomes

2SenseTime Research, Shanghai 200233, China

3Qing Yuan Research Institute, Shanghai Jiao Tong University, Shanghai 200240, China

Received Date: Nov 20, 2022 / Accepted Date: Nov 26, 2022 / Published Date: Dec 06, 2022

Copyright: ©Â©2022 : Jie Zhang. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Citation: Tan, D., Zhou, P., Zhang, S., Wong, P., Zhang, J., Long, E. (2022). Findel: A Deep Learning Approach To Efficient Artifact Removal from Cancer Genomes. Stem Cell Res Int, 6(2), 140-152.

Abstract

Next-generation sequencing technologies have increased sequencing throughput by 100-1000 folds and subsequently reduced the cost of sequencing a human genome to approximately US$1,000. However, the existence of sequencing artifacts can cause erroneous identification of variants and adversely impact the downstream analyses. Currently, the manual inspection of vari- ants for additional refinement is still necessary for high-quality variant calls. The inspection is usually done on large binary alignment map (BAM) files which consume a huge amount of labor and time. It also suffers from a lack of standardization and reproducibility. Here we show that the use of mutational signatures coupled with deep learning can replace the current stan- dards in the bioinformatics workflow. This software, called FINDEL, can efficiently remove sequencing artifacts from cancer samples. It queries the variant call format file which is much more compact than BAM files. The software automates the variant refinement process and produces high-quality variant calls.

Keywords

Bioinformatics Software, Cancer Genomics, Deep Learning, Machine Learning, Mutational Signatures, Sequencing Arti- facts, Somatic Mutations, Variant Refinement

Introduction

Since the discovery of the DNA structure in 1953, significant progress in genetic understanding has been made over the past few decades [1]. The advent of DNA sequencing instruments made possible the Human Genome Project (HGP) which was a global research initiative in 1990 to sequence the entire human genome consisting of 3 billion nucleotide base pairs [2, 3]. The internation- al and cross-disciplinary nature of HGP has popularized the idea of open-source software and data accessibility where scientists and researchers all over the world have equal access to the ever-in- creasing repository of human genomes. The availability of indi- vidual genome data through cloud infrastructure and technologies has revolutionized the field of precision medicine where scientists can mine valuable information and recommend personalized treat- ment [4]. This can greatly improve the healthcare standards of the current and future generations.

The completion of the HGP has led to higher demand for sequenc- ing technologies and datasets to solve more sophisticated biolog- ical problems. However, those efforts were hampered by the rel- atively low throughput and high costs of sequencing at that time [5]. This was changed in the mid-2000s with the invention of the high-throughput sequencing platform which resulted in a 50,000 times decrease in the cost of sequencing the entire human genome [6]. The promising results led to the term “next-generation se- quencing” (NGS) which refers to platforms capable of massively parallel sequencing technologies that enable ultra-high through- put, scalability, and speed [7]. NGS technologies have increased sequencing throughput by 100-1000 folds and subsequently re- duced the cost of sequencing a human genome to approximate- ly US$1,000 [8]. These advances have made possible the use of sequencing technologies in clinical settings [6]. NGS technologies have found large-scale usage in de novo sequencing, mapping of diseases quantifying expression levels using RNA sequencing, and conducting population genetic studies [9-16].

NGS technologies generate millions to billions of short-read sequences with read lengths of around 75-300 base pairs (bp) . More advanced NGS technologies (PacBio, Nanopore, 10x Ge- nomics) are capable of much longer read sequences of more than 10 kilobases [17]. NGS technologies, while cheap and fast, are not flawless. The sequencing procedure is just the initial step of a typical bioinformatics pipeline that spans multiple phases. The raw sequence reads produced by the sequencing machine is stored in a FASTQ or unaligned Binary Alignment Map (uBAM) format. These formats are text-based which store information on the se- quence reads such as read identifiers and base quality scores. Next, the reads are aligned to a reference genome and the related meta- data are stored in either a Sequence Alignment Mapping (SAM), Binary Alignment Mapping (BAM), or CRAM file formats [18]. These files contain alignment characteristics such as matches, mismatches, and gaps represented in the Concise Idiosyncratic Gapped Alignment Report (CIGAR) format [19]. The BAM or equivalent files are consumed downstream through variant calling algorithms to identify genetic mutations such as single nucleotide polymorphisms (SNPs) consisting of transversions and transitions, insertions and deletions (InDels), and tumor mutation burden [20, 21]. The identified variants are stored in a tab-delimited text file format commonly known as the Variant Call Format (VCF).

Due to the downstream implications of NGS data, the inaccurate reads can cause erroneous identification of variants, specifically false positives (FP) and false negatives (FN). NGS data are sus- ceptible to errors due to a myriad of factors such as base-calling and alignment errors [22]. This is further exacerbated by the lack of standardization in bioinformatics pipelines which are crucial to obtaining the correct data interpretation and clinical insights [23]. Errors in variant calling can result in missed detection of mutations which can be disastrous. Mutations are the cause of several diseas- es especially cancer [23].

A major shortfall of NGS technology is the frequent incorrect scoring of bases attributed to the existence of artifacts during the sample preparation and sequencing stage [24]. Heterogeneous mixtures can suffer from amplification bias during the polymerase chain reaction (PCR) process which produces skewed populations [25]. Other types of polymerase mistakes during the PCR stage such as base misincorporations and rearrangements because of template switching can also cause inaccurate variant calls. Clus- ter amplification, sequencing cycles, and image analysis are also prone to mistakes contributing to roughly 0.1-1% of bases being erroneously called [26]. These artifacts present a challenge for calling rare genetic variants as deep sequencing is ineffective when the base call error rate is high. This effectively limits the applica- tion of NGS in fields such as metagenomics, forensics and human genetics [27-30]. The accuracy demands of some clinical applica- tions are even higher where the base calling error rate has to fall below 1 in 10,000. Examples of these clinical applications include detection of circulating tumor DNA monitoring of response to chemotherapy using personalized tumor biomarkers, and prenatal screening for fetal aneuploidy [31-34]. Due to these artifacts, the common NGS technologies suffer from a base-calling error rate of around 1 in 100 which severely falls short of the standard required by these clinical applications. Automated pipelines to facilitate reads-to-variants workflow are widely available in this day and age, a prominent one being the Genome Analysis Toolkit (GATK) Best Practices Workflows which contain stepby-step recommendations for conducting vari- ant discovery analysis using NGS data. These pipelines have built- in filters to remove false variant calls from sequencing errors, read misalignments, and other types of errors. However, there is little support for the efficient removal of artifacts due to variant call- er inaccuracies [35]. According to the Association for Molecular Pathology (AMP) guidelines for interpretation and annotation of somatic variation, it is crucial to perform additional refinement of somatic variants to remove variant caller inaccuracies as these can result in suboptimal patient management and therapeutic opportu- nities [36, 37]. As of now, the standard practice for somatic variant refinement is to manually inspect the variants and this is usually performed by trained personnel such as bioinformaticians. Manual inspection is useful for incorporating domain knowledge common- ly excluded by automated variant callers such as Mutect Somat- icSniper Strelka and VarScan2 [38-41]. They can detect inaccurate variant calls due to amplification bias of small fragments, errors in sequencing reads, and poor alignment in certain areas [35]. Ef- forts have been made to develop automated methods for variant refinement but progress is slow due to computational limitations. Manual inspection of variants for additional refinement is still nec- essary for high-quality variant calls and subsequent downstream analyses [42].

Although manual inspection of variants has been utilized for sev- eral years in clinical diagnostic and molecular pathology appli- cations somatic variant refinement strategies are not documented extensively and reported minimally by studies involving postpro- cessing of automated variant calls [43-49]. These results in a lack of standardized protocol for somatic variant refinement, increased variability of work performed by different labs, and difficulty in reproducing the work of others [49]. Additionally, manual inspec- tions are usually conducted on BAM files using genomic viewers such as Integrative Genomics Viewer (IGV) Savant Trackster and BamView [50-54]. These BAM files are extremely large and re- quire sophisticated storage solutions. This is because the format stores data on a per-read basis and the space requirement grows almost linearly with the number of reads [55]. A BAM file for a 30x whole genome requires about 80-90 gigabytes of storage. A lab or research institution which handles around 1000 samples needs to secure approximately 80 terabytes of disk space [56]. The sheer size of these BAM files impedes the progress of the manual inspection process which will require more labor and time. A more efficient and scalable variant refinement solution with light pro- cessing and storage requirements while eliminating variability in the downstream analyses needs to be developed.

A promising area of research beneficial to variant refinement lies in the study of mutational signatures. Mutational signatures have been linked to mutational processes which drive somatic mutations in cancer genomes [57]. The Pan-Cancer Analysis of Whole Genomes (PCAWG) Consortium of the International Can- cer Genome Consortium (ICGC) and The Cancer Genome Atlas (TCGA) analyzed 84,729,690 somatic mutations identified from 4,645 whole-genome and 19,184 exome sequences that cover a wide range of cancer types and generated many mutational sig- natures [58, 59]. The mutational signatures consist of 49 single- base-substitution (SBS), 11 doublet-base-substitution (DBS), 4 clustered-base-substitution, and 17 small insertion-and-deletion (Indel) signatures. Further classification of each mutation type was performed whereby SBSs consisted of 96 classes , DBSs consisted of 78 classes , and Indels consisted of 83 classes . These mutational signatures can be found in the Catalogue of Somatic Mutations in Cancer (COSMIC ) database [60].

The PCAWG study also discovered that DNA sequencing artifacts, analysis artifacts, technical artifacts, variability in NGS technolo- gies, and usage of different variant calling methods can generate characteristic mutational signatures as well [59]. These discoveries open up the possibility of using mutational signatures to filter arti- fact-mediated variant calls from true variants. Our team proposes FINDEL (Find & Delete), a variant refinement software written in Python programming language that removes sequencing artifacts from human cancer samples using mutational signatures and deep learning. Along with each sample, FINDEL generates a Hypertext Markup Language (HTML) report and 2 separate VCF files con- taining refined and artifactual mutations respectively. FINDEL is light on computational requirements and is capable of running on a simple local machine. The performance and speed of FINDEL are validated using open-source datasets.

Methods

Overview of FINDEL

The main function of FINDEL is to automate the process of re- moving sequencing artifacts using mutational signatures from the COSMIC database coupled with a deep learning approach. The software is intended for use in the variant refinement stage and is not meant to replace the variant calling algorithms. FINDEL can remove variability in the bioinformatics pipeline within the post variant calling phase. Currently, FINDEL focuses on artifacts involving single-base substitutions (SBSs) or also known as sin- gle-nucleotide polymorphisms (SNPs). SNPs form the majority of the genetic variations found in the human genome. In a typical human genome, more than 99.9% of variants are characterized as SNPs and short indels with SNPs being 6-7x higher in frequency than short indels [61]. SNPs are also responsible for the onset of multiple cancer types including breast cancer chronic lymphocyt- ic leukemia neuroblastoma gastric carcinogenesis prostate cancer and others [62-79].

Identification of Single-Base Substitutions Mutational Signa- tures Linked to Sequencing Artifacts

The initial step in building the artifact removal algorithm is to identify the SBS mutational signatures that are linked to the se- quencing artifacts. These can be found in the COSMIC database. A total of 18 SBS mutational signatures have been classified as possible sequencing artifacts: SBS27, SBS43, and SBS45-SBS60 . The remaining signatures either have valid proposed aetiologies or unknown causes. As of now, SBS signatures can be segregated into 96 different contexts. There are 6 possible base substitutions: C>A, C>G, C>T, T>A, T>C, and T>G. Each base substitution can be further analyzed in its 5’ and 3’ nucleotide context forming a total of 96 trinucleotide contexts [80].

Input Requirements for Algorithm

FINDEL takes in a VCF file containing a single sample. PyVCF module in Python is used to parse the VCF file for further process- ing steps. The other input required by the algorithm is the reference sequence. The reference sequence is used to extract the mutation type and reference context.

Output Files

FINDEL outputs 2 separate VCF files containing the refined and artifactual mutations respectively. The refined VCF file can be used for downstream analyses.

Supervised Deep Learning

After the information from the mutational signatures are obtained, we propose a supervised deep neural network-based method to di- rectly predict the mutation types from the VCF file. We devised the specific modules to deal with a variety of features in addition to the mutational signatures and utilized a complex network to fuse the diverse information for the mutation site identification. The deep learning architecture has a comprehensive embedding rep- resentation to enhance the algorithm performance. Upon training completion, FINDEL can conduct inference efficiently without the need for a customized variant refinement optimization process for every new cancer sample.

Data Preprocessing

Firstly, we constructed the features of mutation types. All point mutations are classified into one of the 96 SBS nucleotide con- texts, represented by an index ranging from 0 to 95. Secondly, we parsed the site features. Specifically, we used the “INFO” and “SAMPLE” columns from the provided input VCF file. One-hot encoding and standard scaling were used to preprocess the discrete and continuous features respectively. Thirdly, we built context fea- tures. We selected 10 bases at the left and right of each mutation site (a total of 21 bases). Each base is represented by a number (T:0, C:1, A:2, G:3, others:4), and the context feature was repre- sented as a 21-dimensional vector. Finally, we adopted the Hap. py tool to process the original annotation file as the ground-truth labels in this study.

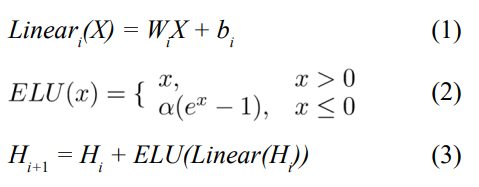

Neural Network Architecture

Fig. 1 shows the proposed neural network architecture. We used a learnable embedding layer to map the 96-dimensional mutation type into a high-dimensional space. Each mutation type is con- verted into a point in the high-dimensional space, the coordinates of which constitute an embedding vector for the type. The parsed site features were combined and fed into a linear layer. The refer- ence context is passed into another embedding layer, converting the 21 bases in the mutation neighborhood into a two-dimensional context matrix. We used a two-layer 1D-convolution to model the multiple sequences and extract the backward and forward relation- ships in the context matrix. The context matrix was transformed into a vector.

After extracting the mutation types, site features, and context features, we concatenated the embedding vectors and fed them into a five-layer fully connected network. Each layer consisted of dropout linear transformation, ELU (Exponential Linear Unit) ac- tivation function, and highway module. The dropout was used at the beginning of each layer to randomly set the unit weights to 0, helping the model avoid overfitting specific features. Equations (1), Equations (2), and Equations (3) show the formulas for the linear transformation, activation function, and highway module respectively:

where W and b are learnable parameters. The ELU hyperparameter α controls the value at which the activation function saturates for negative inputs. The highway module connects the input of each layer to the end, solving the gradient vanishing problem caused by overly deep networks.

Equation (4) defines the cost function.

L = LBCE + γLL2 (4)

LBCE refers to the binary cross-entropy loss while γLL2 is the L2 regularization which adds a penalty term to reduce overfitting. We adopted the mini-batch gradient descent algorithm for training and each batch consisted of 5000 variants. The Adam optimizer was used to update the network weights. All hyperparameters were tuned using grid search and evaluated on the validation set. The GIAB consortium reference samples (HG001-HG007) were used for training and testing. Two different training methods were used. The first method built 1 model using 1 VCF file. Within each file, the SNPs were split such that 60% of them were part of the train set, 20% of them were part of the validation set, and 20% of them were part of the test set. The second method used all the SNPs from 1 VCF file and tested them on all the remaining files. For example, a model was trained on all the SNPs from the HG001 VCF file and evaluated on the SNPs from the HG002-HG007 files. Table I shows the final values chosen for the hyperparameters.

Evaluation Data Sources and Processing

The performance and speed of FINDEL are evaluated using high-quality open-source datasets. For the proof of concept train- ing and validation, we will be using 4 different datasets containing a total of 33 VCF files.

HCC1954 Cell Line

This breast cancer cell line whole genome sequencing (WGS) data from a 61 years old Asian female was initiated on October 30 1995 and took around 4 months to establish [81]. We downloaded the VCF file and the benchmarking results from the International Can- cer Genome Consortium Data Portal [82]. The reads were aligned to the hs37d5 reference genome. The benchmarking results con- taining the true positive and false positive variants were stored in 2 mutation annotation format (MAF) files which are tab-delimited text files with aggregated mutation information on a project level. No processing was required since the VCF file was provided di- rectly. A total of 1 VCF file was obtained.

Formalin-Fixed Paraffin-Embedded (FFPE) and Fresh Frozen Whole Exome Sequencing (WES) Samples

This study was part of an attempt by Samsung Medical Center to compare the WES data between FFPE and fresh frozen sam- ples. The datasets were obtained from the Sequence Read Archive (SRA) with the accession number PRJNA301548 [83]. The sam- ples were sequenced using the Illumina HiSeq 2000 sequencing system . We used the DNA sequencing data from the following 3 runs: SRR2911437, SRR2911438, and SRR2911453. The paired- end reads were aligned to the HG19 reference genome using the Burrows-Wheeler Alignment tool [84] with the Maximal Exact Match option, more commonly know as the BWA-MEM algo- rithm. After the reads were aligned, Mutect2 was chosen for the variant calling process. The tumor-only mode with default settings was used. A total of 3 VCF files was obtained.

Whole Exome Sequencing of Biological and Technical Repli- cates in Breast Cancer Samples

This study was conducted by the Memorial Sloan Kettering Can- cer Center on 3 Feb 2016. The datasets were obtained from the SRA with the accession number SRP070662. The samples were sequenced using the Ion PGM sequencing system We used the DNA sequencing data from the following 22 runs: SRR3182418, SRR3182419, SRR3182421, SRR3182423, SRR3182424, SRR3182427, SRR3182429, SRR3182430, SRR3182431, SRR3182432, SRR3182436, SRR3182437, SRR3182440, SRR3182442, SRR3182444, SRR3182446, SRR3182447, SRR3182474, SRR3182475, SRR3182476, SRR3182477, and SRR3182478. The processing steps are the same as the FFPE and fresh frozen WES datasets. A total of 22 VCF files was obtained.

Genome in a Bottle (GIAB) Consortium Reference Samples

The GIAB consortium is jointly hosted by the National Institute of Standards and Technology (NIST) and the Joint Initiative for Metrology in Biology (JIMB) to develop the technical infrastruc- ture and improve clinical insights from whole human genome sequencing. GIAB has a total of 7 human genomes: 1 pilot ge- nome of Utah/European ancestry (NA12878/HG001) from the HapMap project , and 2 son/father/mother trios of Ashkenazi Jew- ish (HG002/HG003/HG004) and Han Chinese (HG005/HG006/ HG007) ancestry from the Personal Genome Project. Each human genome is also accompanied by benchmark variant calls and re- gions to validate variant calling pipelines.

These are considered the gold standard benchmarks within the bio- informatics community. The processing steps are mostly the same as the FFPE and fresh frozen WES datasets with some differences. The b37 reference genome was used in place of the HG19 reference genome. Both originate from the GRCh37 reference genome with some minor differences. However, from a bioinformatics pipeline technical perspective, these variations of reference genomes are not directly interchangeable due to contig name changes. The dis- crepancies are further elaborated in .The change was due to the addition of the germline resource and the panel of normals (PoN) during the Mutect2 variant calling process. Both VCF files used the b37 reference genome. Therefore, the alignment phase re- quired the use of the same reference genome as well. A total of 7 VCF files was obtained. The HCC1954 cell line, FFPE and fresh frozen WES, and WES of biological and technical replicates in breast cancer datasets lack the labeling of true mutations. DL_v11 used an unsupervised training method with a negative objective function as the loss function. In the GIAB annotated dataset, we used chromosomes 1 to 19 as the training set, chromosomes 21 and 22 as the validation set, and chromosome 20 as the test set. We used cross-entropy as the loss function,

Results

FINDEL will be evaluated based on its performance and speed on the evaluation data. For performance evaluation, benchmark VCF files are required as they contain the ground truth variant calls. The datasets without benchmark VCF files will be used for speed evaluation. The following 5 metrics will be used for performance evaluation:

precision = TP/(TP + FP) (5)

recall = TP/(TP + FN) (6)

F1 = (2∗ precision ∗ recall)/(precision + recall) (7)

accuracy = (TP + TN)/(TP + FN + TN + FP) (8)

specificity = TN/(TN + FP) (9)

where TP refers to true positives, TN refers to true negatives, FP refers to false positives, and FN refers to false negatives. Elapsed time, in either minutes or seconds, will be used for comparing the speed of the models. From now on, “r_artifact_removal” and “py_ artifact_removal” will be used to refer to the R and Python model respectively before applying deep learning while “DL_v11” will be used to refer to the Python model after applying deep learn- ing. These terms are mainly used in the comparison of the model results pre and post-deep learning. The software, which outputs additional analyses and VCF files beyond the final trained model, is still named FINDEL.

The initial version of the DL_v11 model was trained on the HCC1954 cell line dataset. Although both performance and speed results are shown in Table II, the main focus is on the difference in speed between the models written in Python vs R programming language. The elapsed time of py_artifact_removal is less than 3 minutes while the elapsed time of r_artifact_removal is 305 min- utes. This is a significant speedup of more than 100 times while maintaining similar performance. The objective function value of py_artifact_removal is 80.34 while the objective function value of r_artifact_removal is 80.67. The higher the value, the better the performance. The difference is negligible in this case. Meanwhile, DL_v11 achieves a significantly higher objective function value of 89.21 with a training and inference time of 20 and less than 3 minutes respectively. However, the performance improvement based on this dataset alone is not robust enough. As seen from the table, there is very little labeled data available. Only 214 variants are labeled with 213 of them being classified as positive SNPs and only 1 negative SNP.

From a supervised deep-learning perspective, this is an imbal- anced classification problem and the performance metrics might be biased. Even though DL_v11 correctly predicted 211 out of 213 positive SNPs, it failed to predict the only negative SNP. It can maximize the objective function value simply by predicting all SNPs to be positive as they are the majority of the labels. This poses a problem when we want to minimize false positives. On the other hand, py_artifact_removal can detect the only negative SNP but misses much more positive SNPs, achieving an overall lower objective function value. Therefore, the other labeled datasets are used to provide a more robust estimate of the model performances. The key takeaway here is the superior speed achieved by the mod- els written in Python as compared to R. The subsequent evaluation comparison will be made between the 2 python models: py_arti- fact_removal and DL_v11.

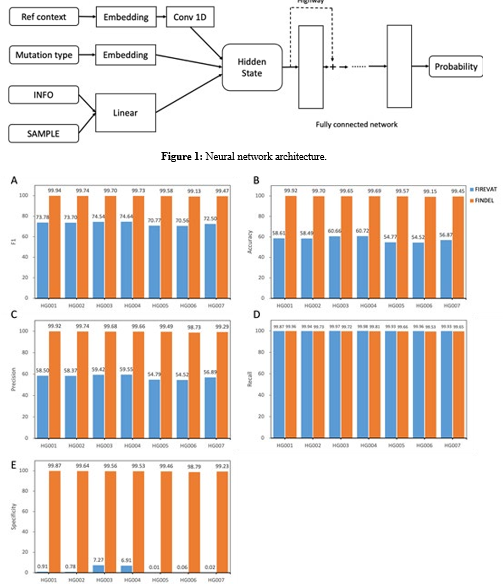

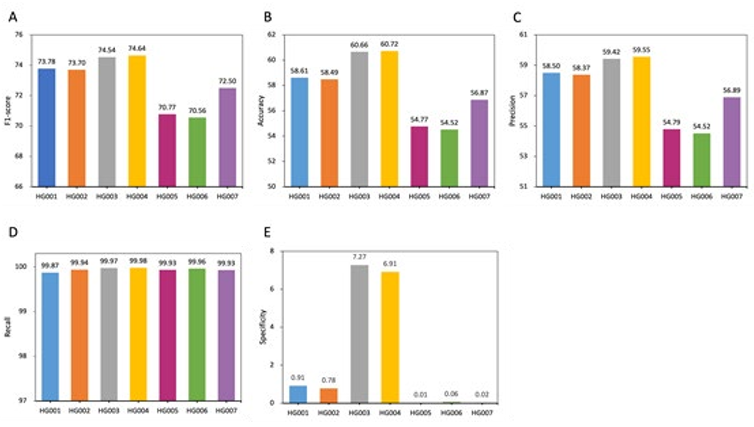

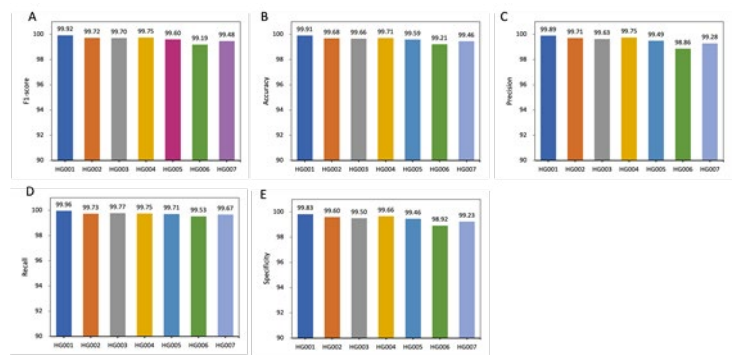

The subsequent versions of the DL_v11 models were trained on the GIAB consortium reference samples (HG001-HG007). The following results were based on the first method of training using the same VCF file for both training and testing. The performance comparison between py_artifact_removal and DL_v11 based on the 5 metrics is given in Appendix A. DL_v11 performed better on all the metrics (F1, accuracy, precision, specificity) except re- call. The performance comparison within py_artifact_removal is given in Appendix B. Generally, higher scores were achieved on the human genomes from the Ashkenazi Jewish (HG002/HG003/ HG004) ancestry than the Utah/European (HG001) and Han Chi- nese (HG005/HG006/HG007) ancestries for F1, accuracy, and pre- cision. For recall, similar scores were achieved on the human ge- nomes from all the ancestries. Results were mixed for specificity. The performance comparison within DL_v11 is given in Appendix C. Generally, higher scores were achieved on the human genomes from the Ashkenazi Jewish ancestry than the Utah/European and Han ancestries for F1, accuracy, and precision, and recall. Results were mixed for specificity.

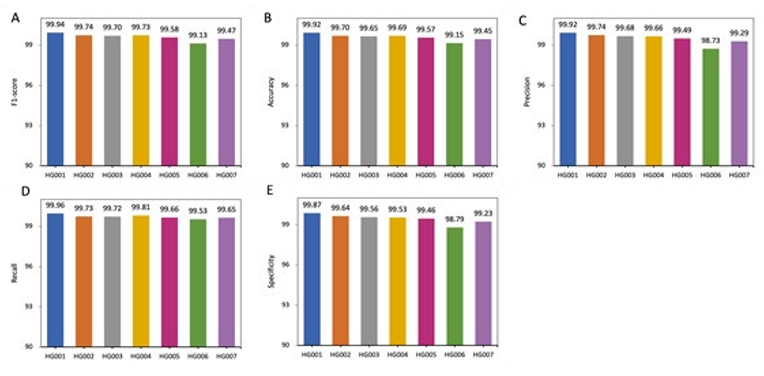

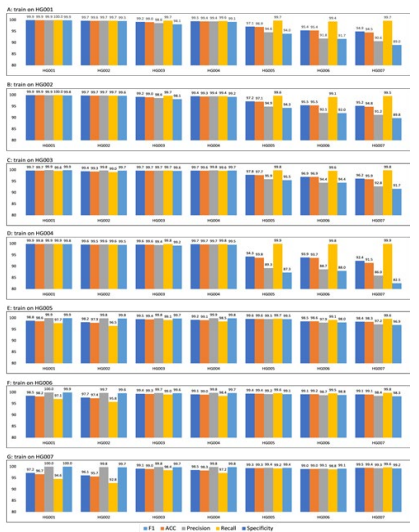

The next set of results used the second training method where the train and test set consisted of different human genomes. The F1, accuracy, and precision performance comparisons within DL_v11 for different train sets are given in Appendix D, E, and F respec- tively. Generally, higher scores were achieved on the human ge- nomes from the Ashkenazi Jewish ancestry than the Utah/Europe- an and Han Chinese ancestries regardless of the train set used. The recall performance comparison is given in Appendix G. Generally, higher scores were achieved on the human genomes from the Ash- kenazi Jewish and Utah/European ancestries than the Han Chinese ancestry regardless of the train set used. The human genome from the Utah/European ancestry (HG001) had the best recall for all the train sets. The specificity performance comparison is given in Appendix H. Generally, higher scores were achieved on the human genomes from the Ashkenazi Jewish ancestry than the Han Chi- nese ancestry regardless of the train set used.

The human genome from the Utah/European ancestry (HG001) had the worst specificity for all the train sets. The average per- formance comparison using different train sets is given in Ap- pendix I. Generally, higher average scores on all 5 metrics were achieved when the human genomes from the Han Chinese ances- try were used as the train samples. The time comparison between the 3 models is given in Appendix J. All datasets used here are from the FFPE and fresh frozen WES samples. On both datasets, SRR2911438 and SRR2911453, a significantly shorter time was used by the 2 models written in Python (py_artifact_removal and DL_v11) as compared to the model written in R (r_artifact_remov- al). The correlation between elapsed time and the number of SNPs for py_artifact_removal is given in Appendix K. All datasets used here are from the FFPE and fresh frozen WES samples and the breast cancer WES samples. A strong positive linear correlation can be observed. As the number of SNPs increases, the elapsed time taken by py_artifact_removal increases as well.

Discussion

FINDEL, a variant refinement software infused with deep learn- ing, has demonstrated its ability to efficiently remove sequencing artifacts from cancer samples using mutational signatures and other features. We have evaluated its performance and speed on high-quality open-source datasets. The performance is largely boosted by the supervised deep learning approach in addition to the use of mutational signatures. False positives are greatly re- duced through the variant refinement process. The algorithm speed is mostly due to the optimal choice of programming language and code refactoring. The overall software runtime is low as our ap- proach queries VCF files instead of BAM files where the former has a much lower memory footprint. Moreover, the software can be easily run on a local machine. FINDEL eliminates the need for users to manually inspect BAM files using some form of genomics viewer program. This saves both labor and time, increasing the turnover of cancer sample interpretation. Doctors require less time for the clinical diagnosis of patients and can increase the range of therapeutic opportunities for them. More importantly, the automa- tion and standardization of the variant refinement process increase reproducibility by different entities.

The usage of mutational signatures to partition the unrefined muta- tion set into refined and artifactual mutation subsets was possible as various artifacts had characteristic mutational signatures that were different from the true variants. These mutational signatures are constantly updated and can be found in the COSMIC data- base. This essentially removes the need for bioinformaticians to manually inspect large BAM files and search for patterns that are representative of artifacts using domain knowledge. The manual inspection process is a major bottleneck impeding the progress of the downstream analyses after the variant calling process. Elimi- nating this laborious task greatly reduces the entire bioinformatics workflow time. As the research on mutational signatures become more comprehensive in the future, we expect the algorithm to fur- ther improve in performance as well. FINDEL only requires a us- er-provided VCF file and an SBS mutational signature data file.

No manual preprocessing on the user part is required as long as the standard format is used. Subsequently, FINDEL outputs a VCF file representing the refined mutations. The infusion of supervised deep learning to FINDEL mainly aims to improve algorithmic per- formance and further reduce the number of false positives. It also utilizes the information from the VCF files beyond just the muta- tional signatures. Specifically, additional features are engineered from the “INFO” and “SAMPLE” columns of the VCF files. As seen from the results, the addition of supervised deep learning greatly improves the score of most of the metrics. The disadvan- tage is that the initial training of the neural network might take quite some time. However, subsequent usage of the model, aka inference, takes little time. The deep learning model only needs to be retrained if there is evidence of model degradation. Also, the performance of the model is affected by the quality of the data. Generally, higher scores were achieved on the human genomes from the Ashkenazi Jewish ancestry than the Utah/European and Han Chinese ancestries due to the former having higher sequenc- ing depth.

Conclusion and Further Work

We have developed a deep learning-based bioinformatics software, FINDEL, that can efficiently remove sequencing artifacts from cancer samples using mutational signatures and other features. It eliminates the laborious process of manually inspecting large BAM files by querying much smaller VCF files. The algorithm automates and standardizes the variant refinement process, reduc- ing required labor and time while increasing reproducibility. The user only needs to provide the input files and the software does the rest of the work. Within minutes, the unrefined VCF file contain- ing the artifactual variants are removed and the refined VCF file can be used for subsequent downstream analyses. The potential of FINDEL to redefine the bioinformatics workflow through its supe- rior performance and speed allows better patient management and therapeutic opportunities. As of now, the software mainly focus- es on single nucleotide polymorphisms (SNPs). In the future, the scope can be broadened to insertions and deletions, copy number variations, and even structural variations. Overall, we show that the use of mutational signatures coupled with deep learning can replace the manual inspection process, potentially setting a new standard for the variant refinement process.

Acknowledgements

Not applicable.

Figure 2: Performance comparison between py_artifact_removal (before deep learning) and DL_v11 (after deep learning) based on 5 metrics. All values are in percentages unless otherwise indicated. All datasets used here are from the GIAB consortium reference samples (HG001-HG007). The PY_ARTIFACT_REMOVAL method is based on mutation signatures, which tends to produce positive results. The supervised learning-based DL- V11 method is able to accurately identify artificial mutations. In all four metrics except re- call, dl-v11 has a huge improvement.

Figure 3: Performance comparison within py_artifact_removal (before deep learning) based on 5 metrics. All values are in percentages unless otherwise indicated. All datasets used here are from the GIAB consortium reference samples (HG001-HG007). Different races will affect the performance of the model. PY_ARTIFACT_REMOVAL has better performance in Utah/European (HG001) and Ashke- nazi Jewish (HG002/HG003/HG004) than Han Chinese (HG005/HG006/HG007) ancestries.

Figure 4: Performance comparison within DL_v11 (after deep learning) based on 5 metrics. All values are in percentages unless oth- erwise indicated. All datasets used here are from the GIAB consortium reference samples (HG001-HG007). An independent model is trained for each sample. DL_v11 achieves the best performance on HG001, with an f1-score of 99.94. Lower scores were achieved on the other human genomes with the Han Chinese(HG006) ancestry genome performing the poorest.

Figure 5: Performance comparison within DL_v11 (after deep learning) for different trainsets. The model achieves better performance when the test and training data come from the same sample. When the training and test data come from different sources, the algorithm performance will be affected. For example, in A Barplot, the training data is chromosome 1 to 19 of HG001(Utah/European), and the test data is chromosome 20 of HG001 to HG007. On HG001 all five metrics achieve a performance of 99.9, but the model predicts more false-positive mutations on HG005/HG006/HG007 (Han Chinese) of14 different ethnicities, resulting in a lower Specificity

Figure 6: Performance comparison within DL_v11 (after deep learning) for whole trainsets. Training using data from multiple samples was able to improve the robustness of the model and alleviate the effects of different sources of data on model performance. The DL_v11 model trained using chromosome 1 to 19 from HG001 to HG007, the model was able to achieve an f1-score of 99% on all samples.

References

- Watson, J. D., & Crick, F. H. (1953). The structure of DNA, Cold Spring Harbor symposia on quantitative biology.

- Mardis, E. R. (2013). Next-generation sequencing platforms. Annu Rev Anal Chem, 6(1), 287-303.

- Hood, L., & Rowen, L. (2013). The human genome project: big science transforms biology and medicine. Genome med- icine, 5(9), 1-8.

- Knoppers, B. M., Harris, J. R., Tassé, A. M., Budin-Ljøsne, I., Kaye, J., Deschênes, M., & Zawati, M. N. H. (2011). Towards a data sharing Code of Conduct for international genomic re- search. Genome Medicine, 3(7), 1-4.

- Goodwin, S., McPherson, J. D., & McCombie, W. R. (2016). Coming of age: ten years of next-generation sequencing tech- nologies. Nature Reviews Genetics, 17(6), 333-351.

- National Human Genome Research Institute. (2016). The cost of sequencing a human genome.

- Buermans, H. P. J., & Den Dunnen, J. T. (2014). Next gen- eration sequencing technology: advances and applications. Biochimica et Biophysica Acta (BBA)-Molecular Basis of Disease, 1842(10), 1932-1941.

- Kircher, M., & Kelso, J. (2010). Highâ?ÂÂthroughput DNA se- quencing–concepts and limitations. Bioessays, 32(6), 524-536.

- Li, R., Fan, W., Tian, G., Zhu, H., He, L., Cai, J., ... & Wang,J. (2010). The sequence and de novo assembly of the giant panda genome. Nature, 463(7279), 311-317.

- Ng, S. B., Buckingham, K. J., Lee, C., Bigham, A. W., Tabor,H. K., Dent, K. M., ... & Bamshad, M. J. (2010). Exome se- quencing identifies the cause of a mendelian disorder. Nature genetics, 42(1), 30-35.

- Nagalakshmi, U., Wang, Z., Waern, K., Shou, C., Raha, D., Gerstein, M., & Snyder, M. (2008). The transcriptional land- scape of the yeast genome defined by RNA sequencing. Sci- ence, 320(5881), 1344-1349.

- Guttman, M., Garber, M., Levin, J. Z., Donaghey, J., Rob- inson, J., Adiconis, X., ... & Regev, A. (2010). Ab initio re- construction of cell type–specific transcriptomes in mouse reveals the conserved multi-exonic structure of lincRNAs. Nature biotechnology, 28(5), 503-510.

- Trapnell, C., Williams, B. A., Pertea, G., Mortazavi, A., Kwan, G., Van Baren, M. J., ... & Pachter, L. (2010). Transcript as- sembly and quantification by RNA-Seq reveals unannotated transcripts and isoform switching during cell differentiation. Nature biotechnology, 28(5), 511-515.

- Liti, G., Carter, D. M., Moses, A. M., Warringer, J., Parts, L., James, S. A., ... & Louis, E. J. (2009). Population genomics of domestic and wild yeasts. Nature, 458(7236), 337-341.

- Li, Y., Vinckenbosch, N., Tian, G., Huerta-Sanchez, E., Jiang, T., Jiang, H., ... & Wang, J. (2010). Resequencing of 200 hu- man exomes identifies an excess of low-frequency non-syn- onymous coding variants. Nature genetics, 42(11), 969-972.

- 1000 Genomes Project Consortium. (2010). A map of human genome variation from population scale sequencing. Nature, 467(7319), 1061.

- Mantere, T., Kersten, S., & Hoischen, A. (2019). Long-read sequencing emerging in medical genetics. Frontiers in genet- ics, 10, 426.

- Fritz, M. H. Y., Leinonen, R., Cochrane, G., & Birney, E. (2011). Efficient storage of high throughput DNA sequencing data using reference-based compression. Genome research, 21(5), 734-740.

- Li, H., Handsaker, B., Wysoker, A., Fennell, T., Ruan, J., Ho- mer, N., ... & Durbin, R. (2009). The sequence alignment/map format and SAMtools. Bioinformatics, 25(16), 2078-2079.

- Roy, S., LaFramboise, W. A., Nikiforov, Y. E., Nikiforova, M. N., Routbort, M. J., Pfeifer, J., ... & Pantanowitz, L. (2016). Next-generation sequencing informatics: challenges and strat- egies for implementation in a clinical environment. Archives of pathology & laboratory medicine, 140(9), 958-975.

- Kadri, S. (2018). Advances in next-generation sequencing bioinformatics for clinical diagnostics: Taking precision on- cology to the next level. Advances in Molecular Pathology, 1(1), 149-166.

- Nielsen, R., Paul, J. S., Albrechtsen, A., & Song, Y. S. (2011). Genotype and SNP calling from next-generation sequencing data. Nature Reviews Genetics, 12(6), 443-451.

- Loeb, L. A. (2011). Human cancers express mutator pheno- types: origin, consequences and targeting. Nature Reviews Cancer, 11(6), 450-457.

- Glenn, T. C. (2011). Field guide to next-generation DNA se- quencers. Molecular ecology resources, 11(5), 759-769.

- Kanagawa, T. (2003). Bias and artifacts in multitemplate polymerase chain reactions (PCR). Journal of bioscience and bioengineering, 96(4), 317-323.

- Fox, E. J., Reid-Bayliss, K. S., Emond, M. J., & Loeb, L. A. (2014). Accuracy of next generation sequencing platforms. Next generation, sequencing & applications, 1.

- Lecroq, B., Lejzerowicz, F., Bachar, D., Christen, R., Esling, P., Baerlocher, L., ... & Pawlowski, J. (2011). Ultra-deep se- quencing of foraminiferal microbarcodes unveils hidden rich- ness of early monothalamous lineages in deep-sea sediments. Proceedings of the National Academy of Sciences, 108(32), 13177-13182.

- Mackelprang, R., Waldrop, M. P., DeAngelis, K. M., David,M. M., Chavarria, K. L., Blazewicz, S. J., ... & Jansson, J. K. (2011). Metagenomic analysis of a permafrost microbial com- munity reveals a rapid response to thaw. Nature, 480(7377), 368-371.

- Druley, T. E., Vallania, F. L., Wegner, D. J., Varley, K. E.,Knowles, O. L., Bonds, J. A., ... & Mitra, R. D. (2009). Quan- tification of rare allelic variants from pooled genomic DNA. Nature methods, 6(4), 263-265.

- Out, A. A., van Minderhout, I. J., Goeman, J. J., Ariyurek, Y., Ossowski, S., Schneeberger, K., ... & Hes, F. J. (2009). Deep sequencing to reveal new variants in pooled DNA samples. Human mutation, 30(12), 1703-1712.

- Beck, J., Urnovitz, H. B., Mitchell, W. M., & Schütz, E. (2010). Next Generation Sequencing of Serum Circulating Nucleic Acids from Patients with Invasive Ductal Breast Can- cer Reveals Differences to Healthy and Nonmalignant Con- trolsNext Generation Sequencing of CNAs in Breast Cancer. Molecular cancer research, 8(3), 335-342.

- Leary, R. J., Kinde, I., Diehl, F., Schmidt, K., Clouser, C., Duncan, C., ... & Velculescu, V. E. (2010). Development of personalized tumor biomarkers using massively parallel se-quencing. Science translational medicine, 2(20), 20ra14- 20ra14.

- Fan, H. C., Blumenfeld, Y. J., Chitkara, U., Hudgins, L., & Quake, S. R. (2008). Noninvasive diagnosis of fetal aneu- ploidy by shotgun sequencing DNA from maternal blood. Proceedings of the National Academy of Sciences, 105(42), 16266-16271.

- Chiu, R. W., Akolekar, R., Zheng, Y. W., Leung, T. Y., Sun, H., Chan, K. A., ... & Lo, Y. D. (2011). Non-invasive prenatal as- sessment of trisomy 21 by multiplexed maternal plasma DNA sequencing: large scale validity study. Bmj, 342.

- Barnell, E. K., Ronning, P., Campbell, K. M., Krysiak, K., Ainscough, B. J., Sheta, L. M., ... & Griffith, O. L. (2019). Standard operating procedure for somatic variant refinement of sequencing data with paired tumor and normal samples. Genetics in Medicine, 21(4), 972-981.

- Roy, S., Coldren, C., Karunamurthy, A., Kip, N. S., Klee, E. W., Lincoln, S. E., ... & Carter, A. B. (2018). Standards and guidelines for validating next-generation sequencing bioin- formatics pipelines: a joint recommendation of the Associa- tion for Molecular Pathology and the College of American Pa- thologists. The Journal of Molecular Diagnostics, 20(1), 4-27.

- Li, M. M., Datto, M., Duncavage, E. J., Kulkarni, S., Linde-man, N. I., Roy, S., ... & Nikiforova, M. N. (2017). Standards and guidelines for the interpretation and reporting of sequence variants in cancer: a joint consensus recommendation of the Association for Molecular Pathology, American Society of Clinical Oncology, and College of American Pathologists. The Journal of molecular diagnostics, 19(1), 4-23.

- Cibulskis, K., Lawrence, M. S., Carter, S. L., Sivachenko, A., Jaffe, D., Sougnez, C., ... & Getz, G. (2013). Sensitive detec- tion of somatic point mutations in impure and heterogeneous cancer samples. Nature biotechnology, 31(3), 213-219.

- Larson, D. E., Harris, C. C., Chen, K., Koboldt, D. C., Abbott,T. E., Dooling, D. J., ... & Ding, L. (2012). SomaticSniper: identification of somatic point mutations in whole genome se- quencing data. Bioinformatics, 28(3), 311-317.

- Saunders, C. T., Wong, W. S., Swamy, S., Becq, J., Murray,L. J., & Cheetham, R. K. (2012). Strelka: accurate somatic small-variant calling from sequenced tumor–normal sample pairs. Bioinformatics, 28(14), 1811-1817.

- Koboldt, D. C., Zhang, Q., Larson, D. E., Shen, D., McLellan,M. D., Lin, L., ... & Wilson, R. K. (2012). VarScan 2: somatic mutation and copy number alteration discovery in cancer by exome sequencing. Genome research, 22(3), 568-576.

- Mardis, E. R. (2010). The $1,000 genome, the $100,000 anal- ysis?. Genome medicine, 2(11), 1-3.

- Strom, S. P. (2016). Current practices and guidelines for clini- cal next-generation sequencing oncology testing. Cancer biol- ogy & medicine, 13(1), 3.

- Kim, J., Park, W. Y., Kim, N. K., Jang, S. J., Chun, S. M.,Sung, C. O., ... & Won, J. K. (2017). Good laboratory stan- dards for clinical next-generation sequencing cancer panel tests. Journal of pathology and translational medicine, 51(3),191-204.

- Govindan, R., Ding, L., Griffith, M., Subramanian, J., Dees,N. D., Kanchi, K. L., ... & Wilson, R. K. (2012). Genomic landscape of non-small cell lung cancer in smokers and nev- er-smokers. Cell, 150(6), 1121-1134.

- Ott, P. A., Hu, Z., Keskin, D. B., Shukla, S. A., Sun, J., Bo- zym, D. J., ... & Wu, C. J. (2017). An immunogenic person- al neoantigen vaccine for patients with melanoma. Nature, 547(7662), 217-221.

- Rheinbay, E., Parasuraman, P., Grimsby, J., Tiao, G., Engreitz,J. M., Kim, J., ... & Getz, G. (2017). Recurrent and functional regulatory mutations in breast cancer. Nature, 547(7661), 55- 60.

- Giannakis, M., Hodis, E., Jasmine Mu, X., Yamauchi, M., Rosenbluh, J., Cibulskis, K., ... & Garraway, L. A. (2014). RNF43 is frequently mutated in colorectal and endometrial cancers. Nature genetics, 46(12), 1264-1266.

- Sandmann, S., De Graaf, A. O., Karimi, M., Van Der Reij- den, B. A., Hellström-Lindberg, E., Jansen, J. H., & Dugas,M. (2017). Evaluating variant calling tools for non-matched next-generation sequencing data. Scientific reports, 7(1), 1-12.

- Robinson, J. T., Thorvaldsdóttir, H., Wenger, A. M., Zehir, A., & Mesirov, J. P. (2017). Variant review with the integrative genomics viewer. Cancer research, 77(21), e31-e34.

- Thorvaldsdóttir, H., Robinson, J. T., & Mesirov, J. P. (2013). Integrative Genomics Viewer (IGV): high-performance ge- nomics data visualization and exploration. Briefings in bioin- formatics, 14(2), 178-192.

- Fiume, M., Williams, V., Brook, A., & Brudno, M. (2010). Sa- vant: genome browser for high-throughput sequencing data. Bioinformatics, 26(16), 1938-1944.

- Goecks, J., Coraor, N., Team, T. G., Nekrutenko, A., & Taylor,J. (2012). NGS analyses by visualization with Trackster. Na- ture biotechnology, 30(11), 1036-1039.

- Carver, T., Harris, S. R., Otto, T. D., Berriman, M., Parkh- ill, J., & McQuillan, J. A. (2013). BamView: visualizing and interpretation of next-generation sequencing read alignments. Briefings in bioinformatics, 14(2), 203-212.

- Pritt, J., & Langmead, B. (2016). Boiler: lossy compression of RNA-seq alignments using coverage vectors. Nucleic acids research, 44(16), e133-e133.

- Adler, A. J., Wiley, G. B., & Gaffney, P. M. (2013). Infini- um assay for large-scale SNP genotyping applications. JoVE (Journal of Visualized Experiments), (81), e50683.

- Alexandrov, L. B., & Stratton, M. R. (2014). Mutational sig- natures: the patterns of somatic mutations hidden in cancer genomes. Current opinion in genetics & development, 24, 52- 60.

- ICGC/TCGA Pan-Cancer Analysis of Whole Genomes Con- sortium. (2020). Pan-cancer analysis of whole genomes. Na- ture, 578(7793), 82-93.

- Alexandrov, L. B., Kim, J., Haradhvala, N. J., Huang, M. N., Tian Ng, A. W., Wu, Y., ... & Stratton, M. R. (2020). The repertoire of mutational signatures in human cancer. Nature, 578(7793), 94-101.

- Forbes, S. A., Beare, D., Boutselakis, H., Bamford, S., Bindal, N., Tate, J., ... & Campbell, P. J. (2017). COSMIC: somat- ic cancer genetics at high-resolution. Nucleic acids research, 45(D1), D777-D783.

- 1000 Genomes Project Consortium. (2015). A global refer- ence for human genetic variation. Nature, 526(7571), 68.

- Devilee, P., & Rookus, M. A. (2010). A tiny step closer to personalized risk prediction for breast cancer. New England Journal of Medicine, 362(11), 1043-1045.

- Landau, D. A., Tausch, E., Taylor-Weiner, A. N., Stewart, C., Reiter, J. G., Bahlo, J., ... & Wu, C. J. (2015). Mutations driv- ing CLL and their evolution in progression and relapse. Na- ture, 526(7574), 525-530.

- Landau, D. A., Carter, S. L., Stojanov, P., McKenna, A., Ste- venson, K., Lawrence, M. S., ... & Wu, C. J. (2013). Evolution and impact of subclonal mutations in chronic lymphocytic leukemia. Cell, 152(4), 714-726.

- Schuh, A., Becq, J., Humphray, S., Alexa, A., Burns, A., Clif- ford, R., ... & Bentley, D. (2012). Monitoring chronic lym- phocytic leukemia progression by whole genome sequencing reveals heterogeneous clonal evolution patterns. Blood, The Journal of the American Society of Hematology, 120(20), 4191-4196.

- Hallek, M., Fischer, K., Fingerle-Rowson, G., Fink, A. M., Busch, R., Mayer, J., ... & German Chronic Lymphocytic Leu- kaemia Study Group. (2010). Addition of rituximab to fludar- abine and cyclophosphamide in patients with chronic lympho- cytic leukaemia: a randomised, open-label, phase 3 trial. The Lancet, 376(9747), 1164-1174.

- Edelmann, J., Holzmann, K., Miller, F., Winkler, D., Bühler, A., Zenz, T., ... & Döhner, H. (2012). High-resolution genom- ic profiling of chronic lymphocytic leukemia reveals new re- current genomic alterations. Blood, The Journal of the Ameri- can Society of Hematology, 120(24), 4783-4794.

- De Paoli, L., Cerri, M., Monti, S., Rasi, S., Spina, V., Bruscag- gin, A., ... & Rossi, D. (2013). MGA, a suppressor of MYC, is recurrently inactivated in high risk chronic lymphocytic leu- kemia. Leukemia & lymphoma, 54(5), 1087-1090.

- Oldridge, D. A., Wood, A. C., Weichert-Leahey, N., Crim- mins, I., Sussman, R., Winter, C., ... & Maris, J. M. (2015). Genetic predisposition to neuroblastoma mediated by a LMO1 super-enhancer polymorphism. Nature, 528(7582), 418-421.

- He, C., Tu, H., Sun, L., Xu, Q., Gong, Y., Jing, J., ... & Yuan,Y. (2015). SNP interactions of Helicobacter pylori-related host genes PGC, PTPN11, IL1B, and TLR4 in susceptibility to gastric carcinogenesis. Oncotarget, 6(22), 19017.

- Xu, Q., Liu, J. W., & Yuan, Y. (2015). Comprehensive assess- ment of the association between miRNA polymorphisms and gastric cancer risk. Mutation Research/Reviews in Mutation Research, 763, 148-160.

- Liu, J., He, C., Xing, C., & Yuan, Y. (2014). Nucleotide exci- sion repair related gene polymorphisms and genetic suscep- tibility, chemotherapeutic sensitivity and prognosis of gas- tric cancer. Mutation Research/Fundamental and Molecular Mechanisms of Mutagenesis, 765, 11-21.

- Zhang, Y., Sun, L. P., Xing, C. Z., Xu, Q., He, C. Y., Li, P., ...& Yuan, Y. (2012). Interaction between GSTP1 Val allele and H. pylori infection, smoking and alcohol consumption and risk of gastric cancer among the Chinese population.

- Xu, Q., Dong, Q., He, C., Liu, W., Sun, L., Liu, J., ... & Yuan,Y. (2014). A new polymorphism biomarker rs629367 associ- ated with increased risk and poor survival of gastric cancer in chinese by up-regulated miRNA-let-7a expression. PloS one, 9(4), e95249.

- Liu, J. W., He, C. Y., Sun, L. P., Xu, Q., Xing, C. Z., & Yuan,Y. (2013). The DNA repair gene ERCC6 rs1917799 polymor- phism is associated with gastric cancer risk in Chinese. Asian Pacific Journal of Cancer Prevention, 14(10), 6103-6108.

- He, C., Tu, H., Sun, L., Xu, Q., Li, P., Gong, Y., ... & Yuan, Y.(2013). Helicobacter pylori-related host gene polymorphisms associated with susceptibility of gastric carcinogenesis: a two- stage case-control study in Chinese. Carcinogenesis, 34(7), 1450-1457.

- Peng, T., Sun, Y., Lv, Z., Zhang, Z., Su, Q., Wu, H., ... & Mi,Y. (2021). Effects of FGFR4 G388R, V10I polymorphisms on the likelihood of cancer. Scientific reports, 11(1), 1-12.

- Jing, J. J., Li, M., & Yuan, Y. (2012). Toll-like receptor 4 Asp- 299Gly and Thr399Ile polymorphisms in cancer: a meta-anal- ysis. Gene, 499(2), 237-242.

- Ulaganathan, V. K., Sperl, B., Rapp, U. R., & Ullrich, A. (2015). Germline variant FGFR4 p. G388R exposes a mem- brane-proximal STAT3 binding site. Nature, 528(7583), 570- 574.

- Bergstrom, E. N., Huang, M. N., Mahto, U., Barnes, M., Strat- ton, M. R., Rozen, S. G., & Alexandrov, L. B. (2019). SigPro- filerMatrixGenerator: a tool for visualizing and exploring pat- terns of small mutational events. BMC genomics, 20(1), 1-12.

- Gazdar, A. F., Kurvari, V., Virmani, A., Gollahon, L., Sakagu- chi, M., Westerfield, M., ... & Shay, J. W. (1998). Character- ization of paired tumor and non-tumor cell lines established from patients with breast cancer. International journal of can- cer, 78(6), 766-774.

- Zhang, J., Bajari, R., Andric, D., Gerthoffert, F., Lepsa, A., Nahal-Bose, H., ... & Ferretti, V. (2019). The international cancer genome consortium data portal. Nature biotechnology, 37(4), 367-369.

- Leinonen, R., Sugawara, H., Shumway, M., & International Nucleotide Sequence Database Collaboration. (2010). The sequence read archive. Nucleic acids research, 39(suppl_1), D19-D21.

- Li, H., & Durbin, R. (2009). Fast and accurate short read alignment with Burrows–Wheeler transform. bioinformatics, 25(14), 1754-1760.