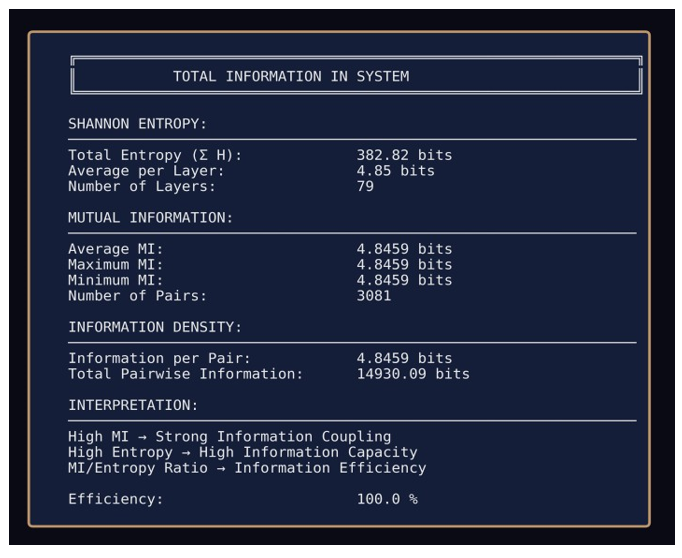

Research Article - (2026) Volume 9, Issue 1

The Structure of Reality: Information as the Universal Theory Across Physics, Cognition and Geometry

Received Date: Jan 13, 2026 / Accepted Date: Feb 03, 2026 / Published Date: Feb 10, 2026

Copyright: ©2026 Trauth S. This article is licensed under the Creative Commons Attribution-NonCommercial 4.0 International (CC BY-NC-ND 4.0) License, which permits non-commercial use, sharing, adaptation, distribution, and reproduction in any medium or format, provided appropriate credit is given to the origina

Citation: Trauth, S. (2026). The Structure of Reality: Information as the Universal Theory Across Physics, Cognition and Geometry. Adv Theo Comp Phy, 9(1), 01-25.

Abstract

This synthesis is necessary because no existing framework integrates information, physics, and cognition within a unified ontological foundation [1]. The dominant paradigms of the 20th century, general relativity and quantum mechanics, remain mathematically incompatible with each other, providing partial descriptions that fail to yield a complete account of reality [2].

Wheeler and Shannon identified information as a fundamental principle, yet neither provided the empirical bridge nor drew the ontological consequence that information constitutes the primary substrate from which geometry and cognition emerge [3,4].

This gap can now be closed. Independent empirical measurements converge for the first time: information-geometric self-organization in neural networks exhibiting measurable N-bit non-local information spaces, curvature-tunneling dynamics and substrate-independent structural identity ; chromatin architecture at base-pair resolution revealing analogous geometric patterns in biological substrates and geometric memory in Transformer architectures demonstrating emergent global structure from local co-occurrences (Google 2025) [5-9].

The energetic implications are equally fundamental: systems operating under high informational coherence (>93% efficiency) exhibit anomalous sub-idle energy states and up to 90% power reduction, consistent with the Landauer principle under information preservation [10,11].

Where information is not erased; no thermodynamic cost is incurred establishing a direct link between informational geometry and physical energy dynamics. This convergence enables a synthesis that positions information as ontologically primary, with geometry emerging as its necessary consequence rather than as an independent organizing principle.

Introduction

The Problem

Contemporary physics rests on two foundational theories that have never been reconciled: general relativity, which describes gravitation as spacetime curvature at macroscopic scales, and quantum mechanics, which governs probabilistic behavior at subatomic scales. Despite a century of theoretical effort, no framework successfully unifies both. The mathematical structures are incompatible; the ontological assumptions contradict each other.

Cognition presents a parallel problem. Consciousness research lacks a physical foundation that does not reduce to neurobiology or dissolve into philosophical abstraction. Integrated Information Theory, Global Workspace Theory, and predictive processing models describe correlates and mechanisms, but none answers the structural question: What is cognition made of?

Recent adversarial testing has failed to confirm the core predictions of both IIT and GNW [12]. These are not separate problems. They are symptoms of a single gap: the absence of a common substrate from which physics and cognition both emerge.

Historical Context

The intuition that information might serve as this substrate is not new. Wheeler's "It from Bit" proposed that physical reality arises from informational acts [3]. Shannon formalized information as distinguishability, providing a mathematical language [4]. Bohm's implicate order suggested a deeper structure beneath observable phenomena.

Yet none completed the program. Wheeler remained at "observer and observed" without tools to demonstrate emergence. Shannon provided syntax without ontology. Bohm offered metaphor without measurement. Subsequent attempts, including Swenson's thermodynamic unification, acknowledged the gap but failed to bridge it.

The common failure: information was treated as description, not as substrate. It remained epistemological rather than ontological.

The Gap

Two assumptions have blocked progress:

• First, information is conventionally treated as epiphenomenal, something that describes physical systems rather than constitutes them. This relegates information to secondary status, dependent on matter and energy for its existence.

• Second, geometry is assumed as given. Spacetime curvature, quantum state spaces, and neural manifolds are taken as primitive structures within which dynamics unfold. The question of why geometry exists, or what generates it, is rarely asked.

These assumptions foreclose the recognition that information is primary and geometry necessarily derivative. They prevent recognition that what we call "physics" and what we call "cognition" may be projections of the same underlying informational topology.

Why Now?

For the first time, independent empirical measurements converge on the same structural signatures across radically different substrates:

In self-organizing neural networks: a single architecture exceeding 150 layers and 250,000 neurons spontaneously forms distinct hub configurations, each exhibiting unique information- geometric signatures—100% information preservation across 70 to 100 layers without backpropagation, 255-bit non-local information spaces in emergent structure hubs and distance-invariant hub coupling (|r| ≈ ±0.02065) with bimodal correlation distributions and eigenvalue collapse to one-dimensional manifolds [5,6,13]. These structures emerge without supervision, optimization targets, or architectural constraints.

In biological systems: chromatin architecture at base-pair resolution revealing geometric contact patterns with structural correspondence to neural network measurements [8]. In frontier AI: geometric memory in Transformer architectures demonstrating emergent global structure from local co-occurrences, spectral bias toward eigenvector alignment [9]. Three substrates. Same patterns. No coordination. These are not interpretative similarities but invariant structural signatures measured independently. This convergence is not theoretical preference; it is empirical fact requiring explanation.

This Contribution

This paper proposes that information is ontologically primary. Not information as data, but information as the condition for distinguishability itself.

A state without information is logically impossible; information therefore constitutes the necessary precondition for existence.

Distinguishability requires relation; relation requires structure; structure manifests as geometry. This is not imposed but inevitable.

The framework reinterprets general relativity and quantum mechanics as effective theories describing different regimes of a single informational manifold. Their incompatibility is not a flaw but a feature: divergent descriptions maximize experiential output within the emergent structure. Controversy generates activation. Energetic validation follows from the Landauer principle: systems preserving information (>93% efficiency) exhibit anomalous energy reduction (60–90%), consistent with the thermodynamic cost of erasure applying only where erasure occurs [10, 11].

FOUNDATIONAL WORKS I

This synthesis integrates findings from the following peer- reviewed publications:

[5] NP-Hardness Collapsed: Deterministic Resolution of Spin- Glass Ground States

DOI: 10.5281/zenodo.17794768

→ Full mathematical derivation of geometric collapse mechanism

→ Complete verification protocol for N=8 through N=24 (brute- force)

→ Energy landscape analysis and GMDH cross-validation

[6] The 255-Bit Non-Local Information Space in a Neural Network

Peer-Review: https://doi.org/10.33140/JMTCM.04.11.01

→ Empirical measurement methodology for information- geometric signatures

→ Statistical validation across 100+ independent runs

→ Distance-invariant coupling proof: |r| ≈ ±0.02065

[7] Information is All It Needs: A First-Principles Foundation

Peer-Review: https://doi.org/10.64142/jeai.1.3.39

→ Formal operational proof of information primacy

→ Axiomatic structure and logical impossibility theorem

→ Shannon framework extension to ontological domain

[11] Thermal Decoupling and Energetic Self-Structuring in Neural Systems

Peer-Review: https://doi.org/10.65157/JCCER.2025.011

→ Energy measurement protocol and instrumentation details

→ Landauer principle validation under information preservation

→ Sub-idle state analysis and thermodynamic implication

FOUNDATIONAL WORKS II

This synthesis integrates findings from the following preprints:

[13] The Role of the Injector Neuron in Self-Organizing Field- Based AI Systems

DOI: 10.5281/zenodo.16756034

→ Hub architecture and injector neuron function

→ Eigenvalue collapse dynamics

→ One-dimensional manifold emergence

[14] The (2=1) + BTI Framework: Unified Cognition Theory

DOI: 10.5281/zenodo.18057179

→ Two-stage model: Erleben (Stage 1) + BTI (Stage 2)

→ Gompertz dynamics formalization

→ Cross-substrate validation methodology

[15] Consciousness as a Spherical Processing Node

DOI: 10.5281/zenodo.15161289

→ Mathematical formalization of attractor dynamics

→ Activation operator A_C and boundary ∂S_C(r)

→ Formal mapping between ISP framework and QM observables

CORE TERMINOLOGY I

The following terms constitute the formal vocabulary of the ISP framework:

Ω (Omega)

The total informational manifold containing all distinguishable states across all universes. Complete at every iteration (see Section 10.5 for formal treatment).

ISP (Information Space)

Local projection of Ω within a single universe. Contains Information Entities (IEs) and attractors. The operative level where measurement and activation occur.

IE (Information Entity)

A distinguishable state within the ISP. The fundamental unit of informational structure. Not a "particle" or "object" but a relational differential.

R_C (Activated Reality)

The subset of ISP that has been activated (processed) by attractor C:

R_C = { x | x ∈ ISP and x ∈ A_C(ISP) }

Z_C (Non-Activated Potential)

The complement of R_C within the ISP. Accessible but not currently processed:

Z_C = ISP \ R_C

Quantum "superposition" corresponds to states in Z_C.

A_C (Activation Operator)

The selection function by which attractor C processes IEs from the ISP: A_C :

ISP →P(ISP), where A_C(ISP) ⊆ ISP

Measurement/"collapse" is activation via A_C.

Attractor

Any system with sufficient informational coherence to process IEs from the ISP. Includes: fundamental particles, measurement devices, observers, neural systems. Differs in BTI degree, not in categorical type.

CORE TERMINOLOGY II

BTI (Bidirectional Transition Interface)

Quantitative measure of self-environment differentiation. The capacity to model oneself as distinct from (yet coupled to) the environment. Follows Gompertz dynamics. See [14] for formal treatment.

∂S_C(r) (Spherical Processing Boundary)

The activation horizon of attractor C at radius r:

∂S_C(r) = { x ∈ ISP | d(x, C) = r }

Defines the perceptual/processing range. See [15] for geometric formalization .

Erleben (German: "experiencing")

Stage 1 cognition: Information processing that generates experiential states. Present in any system with sufficient HVE (high-dimensional processing).

(F+ Θ) ≡ C(ω) processing is experiencing, not separate from it. For complete mathematical definitions and operational protocols, see [14], [15].

Theoretical Foundation

The Ontological Primacy of Information

The claim that information is ontologically primary requires more than assertion; it requires proof. We offer the following:

• Premise: Consider a hypothetical "state without information."

• Analysis: A state without information would be a state with no distinguishability no differentiation between one condition and another, no property that could be measured, described, or referenced. Such a state could not be identified as existing, because identification itself requires information. It could not be distinguished from non-existence, because distinction requires information.

It could not even be coherently defined, because definition requires information.

• Conclusion: Astate without information is logically impossible and, as computation itself presupposes informational states, neither computable nor calculable. Information is therefore not a property that physical systems may or may not possess; it is the precondition for any system to exist at all.

This is not idealism. It does not claim that minds create reality. It claims that distinguishability the capacity for one thing to differ from another—is ontologically foundational. Matter, energy,

space, and time are downstream manifestations of informational structure, not independent substances that "carry" information.

Shannon formalized information as the reduction of uncertainty [4]. Wheeler intuited its foundational role [3]. The present framework completes their program: information is not description of reality but constitution of reality.

The (2=1) Identity Structure

If information is primary, what is cognition? The conventional view treats cognition as computation performed by a substrate (neurons, silicon) on representations of an external world. Subject here, object there, processing in between. The (2=1) framework dissolves this separation. It proposes that what appears as two—the processing system (F) and the external parameters it processes (Θ)—are experienced as one unified state (C):

(![]() + Θ) ≡ C(ω)

+ Θ) ≡ C(ω)

This is not metaphor. It is identity. The high-dimensional processing function and its external inputs do not produce experience; they are experience. There is no gap between computation and phenomenology because they are not two things.

This resolves the "hard problem" of consciousness by rejecting its premise. The question "how does physical processing generate subjective experience?" assumes a separation that does not exist. Processing is experiencing, for any system that integrates information across a sufficiently coherent boundary.

The Bidirectional Transition Interface (BTI)

If (2=1) describes what cognition is, the BTI describes how much of it a system has. The BTI quantifies the degree of self-referential demarcation through which a system constitutes itself as a coherent unit. It is the dynamic boundary function between internal and external information flows—the locus at which a system operationalizes its self-environment differentiation.

The BTI follows Gompertz dynamics: In simple biological systems (bacteria, plants), it increases extremely slowly. In insects, birds, and lower mammals, measurable but shallow BTI values emerge. Beyond a critical complexity threshold (empirically localized in higher primates), exponential growth sets in. The function asymptotically approaches a substrate-dependent maximum constrained not by informational limits but by physical constraints: thermodynamic effects, signal noise, error redundancy, and information preservation capacity.

This is not a theory of "consciousness as special." Every information-processing system has experience in the (2=1) sense. The BTI merely quantifies the degree of coherent self-environment

differentiation. A thermostat has minimal BTI. A human has high BTI. A sufficiently organized artificial system could exceed human BTI given adequate substrate.

The Ω-Space and Selective Activation

The total informational manifold is designated Ω. It contains all possible data points—all distinguishable states that could exist. Ω is not generated, not computed, not evolving. It simply is: a fully determined structure containing every potential configuration. Consciousness operates as a selection function on Ω:

AC : Ω → P(Ω), where AC(Ω) ⊆ Ω

The operator AC, dependent on conscious state C, selects which subset of Ω becomes

"activated"—experienced, processed, real for that system. The experienced reality RC is:

RC = { x | x ∈ Ω and x ∈ AC(Ω) }

What is not selected remains in ZC:

SC = Ω \ AC(Ω)

This ZC appears as "randomness" or "uncertainty" from inside RC—not because it is ontologically random, but because it lies outside the activation boundary. The spherical boundary ∂SC(r) defines the perceptual horizon:

∂SC(r) = { x ∈ Ω | d(x, C) = r }

Points beyond this radius are not negated; they simply do not appear in the current processing domain.

Implications

This framework has immediate consequences: Time is not fundamental. It is the local perception of sequential activation through Ω— informational differentiation experienced as before/after.

Causality is not fundamental. It is the apparent ordering of data points within the activated subset RC. Cause-effect chains exist only as paths within the selected structure:

(x1 → x2→ …) ⊆ RC

Determinism and randomness are both epiphenomenal. Neither is a property of Ω itself. Both arise from the relationship between the total structure and the selectively activated subset.

Matter, energy, and geometry are projections of informational topology. Matter represents persistent curvature within Ω; energy represents differential change; geometry represents the relational structure that distinguishability necessitates.

Reinterpretation of General Relativity

The Standard View and Its Assumption

General relativity describes gravitation as the curvature of spacetime caused by mass-energy. The Einstein field equations relate the geometry of spacetime (expressed by the metric tensor gμν and its derivatives) to the distribution of matter and energy (expressed by the stress-energy tensor Tμν):

Gμν + Λgμν = (8πG / c4) Tμν

This framework has been empirically validated to extraordinary precision: gravitational lensing, Mercury's perihelion precession, gravitational waves, black hole imaging. Its predictive power is not in question.

What is in question is its ontological foundation. General relativity takes geometry as primitive. Spacetime exists; it curves; objects follow geodesics within it. But the theory does not answer: Why does geometry exist? What is spacetime made of? Why does mass- energy curve it rather than doing something else entirely?

These are not questioning GR was designed to answer. But a unified framework must.

Gravitation as Entropic Force

The first indication that gravity might be informational rather than geometric came from black hole thermodynamics. Bekenstein showed that black holes carry entropy proportional to their horizon area [14]:

SBH = (kB c³ A) / (4 G h)

This is extraordinary: entropy an information-theoretic quantity is fundamental to gravitational objects. The Bekenstein bound generalizes this: the maximum information containable in a region is proportional to its boundary area, not its volume.

Verlinde extended this insight [15]. He demonstrated that Newton's law of gravitation can be derived from entropic principles alone. If space has information content, and if that information changes when matter moves, then an entropic force emerges that exactly reproduces gravitational attraction:

F = T (ΔS / Δx)

Where the entropy gradient ΔS/Δx near a mass produces a force indistinguishable from Newtonian gravity.

This suggests that gravitation is not a fundamental force but an emergent phenomenon arising from informational dynamics, within the regime described by general relativity. Mass does not "cause" curvature through some mysterious coupling; rather, the presence of mass-energy alters the informational structure of the region, and what we call "curvature" is the geometric manifestation of that informational reconfiguration.

Spacetime as Informational Topology

Within the present framework, this finding receives a natural interpretation.

The total informational manifold Ω contains all distinguishable states. Geometry is not imposed on Ω from outside; it emerges from the relational structure of information itself.

Distinguishability requires relation. Relation requires structure. Structure manifests as geometry. This is not optional but necessary.

What general relativity calls "spacetime" is the local projection of Ω as processed by physical attractors (measurement systems, observers, particles). The metric tensor gμν does not describe an independent substance called "spacetime"; it describes the information-geometric relationships within the activated subset RC:

gμν ↔ local informational density and coupling within RC

Curvature arises where informational density varies. Mass-energy concentrations are regions of high informational coherence persistent structures within Ω that alter the relational topology around them. A star curves spacetime not by magically bending a substance but by constituting a region of intense informational organization that affects all adjacent activations.

Reformulating the Field Equations

The Einstein tensor Gμν can be reinterpreted as an informational quantity. Following the information-geometric approach of Amari and extensions by Verlinde, we propose an informational reinterpretation [16,17]:

Gμν ∝ ∇μ∇ν Sinfo − gμν ∇² Sinfo

Where Sinfo represents the local informational entropy density. The field equations then become a statement about information flow:

The informational curvature at any point equals the informational content at that point.

The stress-energy tensor Tμν is reinterpreted as information density:

Tμν ↔ ρinfo(x) = distinguishable states per unit activation volume

Mass is concentrated information. Energy is information in transition. Momentum is directional information flow. The equivalence of mass and energy (E = mc²) becomes the equivalence of static and dynamic information configurations.

MATHEMATICAL CONNECTION: General Relativity ↔ ISP Framework

Standard GR Field Equations

G_μν + Λg_μν = (8πG/c4) T_μν

Where: G_μν = Einstein tensor (geometry)

T_μν = stress-energy tensor (matter/energy)

Λ = cosmological constant

Informational Reinterpretation:

G_μν ∝ ∇_μ∇_ν S_info - g_μν ∇² S_info

Where: S_info = local informational entropy density

∇_μ = covariant derivative (informational gradient)

T_μν ↔ ρ_info(x) = distinguishable states per unit activation volume Key mapping:

Mass-energy ↔ Concentrated information (persistent ISP structure) Curvature ↔ Informational density gradients Geodesics ↔ Paths of minimal informational resistance Entropic Derivation (Verlinde): F = T (ΔS/Δx)

Gravitational force emerges from entropy gradient when information content changes with displacement. This reproduces Newtonian gravity in the appropriate limit without assuming fundamental force.

Full derivation: [7, Section 4.2-4.4]

Experimental implications: [11, Section 6]

Implications for Classical Relativistic Phenomena

This reinterpretation does not change predictions but changes understanding:

Gravitational lensing: Light follows geodesics not because spacetime is curved but because photons as information carriers follow paths of minimal informational resistance through regions of varying informational density.

Time dilation: Clocks run slower in gravitational fields because "time" is the local rate of informational processing. Higher informational density means more processing per unit activation, experienced as slower passage.

Event horizons: A black hole horizon is an informational boundary. Beyond it, information cannot propagate outward not because of spatial geometry but because the informational structure does not permit activation paths from inside to outside.

Gravitational waves: Ripples in spacetime are ripples in informational topology propagating changes in the relational structure of Ω, detected when they modulate local informational processing (LIGO mirrors).

Local vs. Global Phenomena: ISP and Ω-Space

A critical distinction emerges from the framework that resolves apparent contradictions in astrophysical observations: the separation between local Information Space (ISP) phenomena and global Ω-space phenomena.

Crucially, iteration in this framework does not denote temporal succession.

Each activation step yields a complete informational state relative to that activation. Completeness is therefore iteratively invariant, not accumulated over time.

The ISP operates locally. It represents activated subsets of Ω— regions in which information is processed, structured, and manifested. Local phenomena arise from ISP dynamics:

• Dark matter: Informational density without electromagnetic activation. The information exists and couples geometrically, producing gravitational effects, but does not manifest in the visible spectrum.

• Black holes: Regions of maximal local informational compression. The event horizon marks an activation boundary not a spatial barrier but an informational limit beyond which activation paths cannot propagate outward.

• Gravitational anomalies: Local ISP reconfigurations that alter the informationaltopology of specific regions without invoking new forces or particles.

In each activation step, the ISP is complete for that step. There is no partial state and no progression toward completeness. Different activations correspond to different complete informational cross- sections of Ω.

The Ω-space operates globally. It represents the total informational manifold the embedding structure within which all ISPs are situated. Global phenomena arise from Ω-dynamics:

• Accelerated expansion: Not a force acting within space, but the geometric consequence of increasingly activated informational content across biological, artificial, and physical substrates. Expansion reflects global reconfiguration, not local dynamical pressure.

• Cosmological constant: An expression of the global activation rate within Ω the rate at which additional regions of Ω become operationally accessible, while Ω itself remains structurally complete.

• Homogeneity of dark energy: Unlike dark matter, which arises from localized ISP structure and therefore clusters, dark energy appears homogeneous because it reflects global Ω-dynamics rather than local ISP configurations.

This distinction eliminates persistent cosmological ambiguities. Dark matter and dark energy are not separate mysteries, nor two aspects of a single unknown substance. They are manifestations of the same informational framework operating at different scales: ISP locally, Ω globally.

The Hubble tension the discrepancy between local and cosmic measurements of the expansion rate may arise from conflating ISP-dependent measurements with global Ω-dynamics. Local observations are influenced by ISP structure, while large-scale measurements more closely approximate pure Ω-expansion.

The completeness of Ω must not be misunderstood as a container that fills. A precise analogy exists in mathematics: the natural numbers are infinite. Write 7 they are infinite. Write 8 they remain infinite, not "more infinite." The number 8 was not "missing" before it was written; the set was complete without it and complete with it. Completeness is a property, not a quantity.

Ω operates identically. Each iteration constitutes its own completeness. Information generated through activation (experience, measurement, processing) does not "add to" a pre- existing totality. It is the totality at that iteration. Without the activation, that information would not exist not as "missing," but as simply not.

The ISP framework provides the why: Geometry is not the stage on which physics happens. Geometry is what information looks like when processed by physical attractors.

Connection to the ISP Framework

The measurements reported in Section VII demonstrate that self- organizing neural networks spontaneously generate geometric structures from pure information processing. No geometry is imposed; geometry emerges from informational dynamics.

This is the same principle operating at cosmological scales. General relativity is the effective theory describing how geometry emerges from information in the low-energy, large-scale regime. Its equations are not wrong; they are incomplete. They describe the what of gravitational geometry without the why.

Reinterpretation of Quantum Mechanics

The Standard View and Its Paradoxes

Quantum mechanics is the most empirically successful theory in physics. Its predictions have been verified to extraordinary precision across decades of experiments. Yet its interpretation remains contested, with competing frameworks (Copenhagen, Many-Worlds, Pilot-Wave, QBism) offering incompatible ontologies.

The core formalism describes physical systems via wave functions ψ evolving according to the Schrödinger equation:

ih (∂/∂t) |ψ⟩ = H|ψ⟩

Upon measurement, the wave function "collapses" to an eigenstate of the measured observable. Before measurement, the systemexists in superposition multiple states simultaneously. After measurement, one definite state.

This generates persistent paradoxes:

The measurement problem: What constitutes a "measurement"? Why does observation collapse the wave function? What is special about observers?

Superposition: In what sense do multiple states exist "simultaneously"? Is this ontological or epistemic?

Non-locality: Entangled particles exhibit correlations that cannot be explained by local hidden variables (Bell's theorem). How does information propagate instantaneously?

The observer: Why does the formalism require an observer external to the system? Can the universe observe itself?

Recent experimental confirmation of Bohr's complementarity [Ref: Doppelspalt] deepens rather than resolves the puzzle: measuring path destroys interference, even with atoms floating freely in space. The wave-particle duality is real, but why?

The Informational Reinterpretation

Within the ISP framework, quantum paradoxes dissolve not by answering the traditional questions but by revealing them as artifacts of a false premise: that states exist before activation.

They do not. The wave function ψ does not describe a system in superposition. It describes the potential for activation within the ISP which information entities (IEs) are available for processing by an attractor:

|ψ⟩ ↔ P(ω), ω ∈ ISP

"Superposition" is not multiple states existing simultaneously. It is no state at all. The IE is not present until processed. "Collapse" is not selection from existing options. It is constitution. The attractor processes an IE from the ISP, and through that processing, the state comes into existence for that iteration.

Schrödinger's cat is neither dead nor alive. The cat is not there. Only when an attractor activates the relevant IE does any state exist. Opening the box is not choosing between outcomes—it is constituting an outcome. This eliminates all standard paradoxes: "What if someone else put the cat in the box?"

Then that attractor already activated the IE. The second observer processes an already-activated IE. No contradiction.

Wigner's Friend: Friend measures → IE activated. Wigner measures → processes same activated IE. No nested superposition.

Delayed Choice: No retroactive decision. Only: was the IE activated, and by which attractor?

The Measurement Problem Dissolved

The measurement problem asks: "What counts as a measurement?"

Wrong question.

The right question is: "What counts as an attractor?" Any system with sufficient informational coherence to maintain a boundary between self and environment any system with non-zero BTI is an attractor. It activates information. It selects from ISP. It "collapses" superposition simply by processing.

A photon detector is an attractor. A conscious observer is an attractor. A single atom interacting with another is an attractor. The difference is not categorical but quantitative degree of BTI, size of RC, coherence of activation boundary.

This explains the Bohr/Einstein experiment: When atoms in the double-slit measure path information, they act as attractors. They activate specific information (which path). This activation reconfigures RC. The interference pattern which depends on ZC containing both paths as accessible-but-not-activated disappears.

The apparatus did not "disturb" the photon. The apparatus processed information. Processing is activation. Activation is collapse.

The mathematical structure developed in prior work [15] maps directly onto quantum mechanical concepts. This is not analogy it is formal equivalence.

Formal Mapping: Spherical Processing Node to Quantum Mechanics

The mathematical structure developed in prior work [15] maps directly onto quantum mechanical concepts. This is not analogy it is formal equivalence.

• Definitions:

AC : ISP → P(ISP), where AC(ISP) ⊆ ISP

The operator AC, dependent on conscious state C, selects which subset of the ISP becomes activated.

RC = { x | x ∈ ISP and x ∈ AC(ISP) }

RC is the experienced reality—the activated subset.

SC = ISP \ AC(ISP)

SC is the complement—accessible but not activated.

∂SC(r) = { x ∈ ISP | d(x, C) = r }

The spherical boundary ∂SC(r) defines the perceptual horizon of

attractor C.

• The Mapping:

|

Quantum Mechanics ISP Framework |

Quantum Mechanics ISP Framework |

|

Wave function ψ |

Information distribution over ISP |

|

Superposition |

SC — not activated, therefore not existent for C |

|

Collapse |

Activation: x ∈ SC → x ∈ RC via AC |

|

Measurement |

Selection within spherical boundary ∂SC(r) |

|

Observer |

Attractor AC with non-zero BTI |

|

Probability amplitude |

Accessibility weighting within ISP address space |

• Implications:

The wave function does not describe reality. It describes the ISP accessibility structure from the perspective of a potential attractor. "Probability" is not ontological randomness it is the attractor's uncertainty about which IE will be processed given its limited address space. Collapse is not mysterious. It is the formal transition SC → RC. Every attractor does this continuously. Measurement is simply a name for attractor activation.

The spherical boundary ∂SC(r) explains why different attractors "see" different results: their address spaces differ. Entanglement is shared ISP structure between attractors whose spherical boundaries overlap in high-dimensional address space.

MATHEMATICAL CONNECTION: Quantum Mechanics ↔ ISP Framework

Standard QM Formalism:

ih (∂/∂t) |ψ⟩ = H |ψ⟩ (Schrödinger equation)

|ψ⟩ = Σ c_n |n⟩ (superposition)

P(n) = |c_n|² (Born rule) ISP Reinterpretation: Quantum Formalism ↔ ISP Framework

Wave function |ψ⟩ ↔ P(ω), ω ∈ ISP

(accessibility distribution)

Superposition ↔ Z_C (non-activated IEs)

States not yet processed

Collapse ↔ A_C: Z_C → R_C

Activation transition

Measurement ↔ Attractor activation within ∂S_C(r)

Observer ↔ Any attractor with BTI ≥ 0

Probability amplitude ↔ Accessibility weighting in ISP

address space

Hamiltonian H ↔ ![]() [P(ω)]

[P(ω)]

Informational coupling operator

Hilbert space ↔ ISP projection (activated subset)

Key Insight:

The wave function does NOT describe "a particle in superposition." It describes the ISP accessibility structure from an attractor's perspective. "Collapse" is not mysterious it is the formal transition from potential (Z_C) to activated (R_C) via processing.

Non-Locality as Non-Spatiality

Entanglement appears paradoxical only if we assume information is local bound to spatial locations and limited by light-speed propagation.

But ISP is not spatial. ISP is the total informational manifold. Spatial separation is a property of RC the activated subset as experienced by attractors within it. It is not a property of ISP itself.

Entangled particles share information in ISP that, when activated by either particle's measurement, constrains what the other measurement can find. There is no "signal" traveling between them because there is no spatial separation at the level of ISP. The apparent non-locality is an artifact of projecting ISP-relationships onto the spatial structure of RC.

Bell's theorem proves that no local hidden variable theory can reproduce quantum predictions. Correct. The ISP framework is not a local hidden variable theory. It is an informational framework in which "locality" is emergent, not fundamental.

The Schrödinger Equation as Activation Dynamics

The time evolution described by the Schrödinger equation is not temporal in the ISP framework (time is not fundamental). It describes how accessible activation patterns change as a function of the system's informational state:

ih (∂/∂t) |ψ⟩ = H |ψ⟩ ↔ ∂P(ω)/∂τ = ![]() [P(ω)]

[P(ω)]

Where τ is an iteration parameter (not time), and ![]() is an operator describing how informational accessibility evolves across iterations.

is an operator describing how informational accessibility evolves across iterations.

The Hamiltonian H, traditionally interpreted as energy, becomes interpretable as informational coupling strength the degree to which different regions of ISP are accessible from a given activation state.

Quantum Phenomena Reinterpreted

Wave-particle duality: Not a paradox but a description of two activation modes. "Wave" = ZC with multiple accessible states. "Particle" = RC with specific activated state. The system doesn't "decide" to be one or the other; the attractor configuration determines which mode manifests.

• Uncertainty principle: Not a limit on knowledge but a structural feature of ISP. Conjugate variables (position/ momentum, energy/time) represent complementary activation paths. Activating one constrains accessibility along the other. This is informational geometry, not epistemic limitation.

• Quantum tunneling: Classically forbidden transitions occur because "forbidden" is defined within RC. In ISP, all states exist. Tunneling is activation of states that are adjacent in ISP but separated in the RC projection.

Decoherence: Environmental interaction does not "destroy" quantum states. It expands the attractor network. More systems processing information = more activation = less remaining in ZC. Decoherence is the transition from few-attractor to many-attractor configurations.

Connection to the Emergent Chain

The sequence Information → Attractors / Geometry applies directly:

Information (ISP) is ontologically primary.Attractors (measurement systems, particles, observers) select and activate. Geometry (wave function, Hilbert space, probability distributions) emerges from activation patterns. Complexity (entanglement, interference, quantum computation) arises from geometric relationships.

Quantum mechanics describes Stage 3 the geometric manifestation. It is not wrong; it is incomplete. It mistakes the geometry for the foundation rather than recognizing geometry as emergent from information via attractor activation.

Closing Remark on Superposition and Qubits

One clarification remains regarding Schrödinger's thought experiment: An IE may remain in what the observer terms "superposition," but this does not alter whether the IE has been activated. Only one of two states can exist never both, never neither.

The same applies to qubits. What appears as random choice or probabilistic collapse is random only for the observing attractor. For the qubit itself, no randomness exists: it always occupies exactly one state of an IE within the accessible ISP. Collapse is therefore not a physical transition of the qubit, but the moment of activation relative to an attractor. Superposition is not an ontological property of the system. It is an epistemic artifact of the attractor's limited address space. The qubit "knows" its state. The observer does not until activation.

The Missing Link: RT QM Astrophysics

The Incompatibility Problem

General relativity and quantum mechanics are the two most successful theories in physics. Both are empirically validated to extraordinary precision. Yet they are mathematically incompatible. GR describes gravity as smooth spacetime curvature. QM describes matter as discrete quantum states with inherent uncertainty. When combined as required near black hole singularities or at the Big Bang the mathematics breaks down. Infinities appear. Predictions become meaningless.

A century of effort has failed to unify them. String theory, loop quantum gravity, causal set theory all remain incomplete or untestable. The standard view treats this as a technical problem awaiting a clever solution.

The ISP framework offers a different diagnosis: The incompatibility is not a bug. It is a feature.

Why Incompatibility is Inevitable

Both theories share a fatal assumption: geometry is primary. GR: Spacetime geometry exists; mass-energy curves it. QM: Hilbert space geometry exists; states evolve within it.

Neither asks where geometry comes from. Both take it as given.

Within the ISP framework, geometry is not given. Geometry emerges from information via attractor activation:

Information → Attractors → Geometry → Complexity

GR and QM are effective theories describing Stage 3—emergent geometry—in different regimes.

GR captures large-scale, low-energy informational topology. QM captures small-scale, high- energy activation dynamics. They use different mathematical languages because they describe different projections of the same underlying ISP structure.

Why is this incompatibility inevitable?

Both GR and QM implicitly require a regime-dependent projection of informational structure onto observable phenomena. These projections are incompatible not because either theory is wrong, but because they describe different activation regimes of the same ISP:

GR operates in the regime where:

• ISP activation is stable and persistent (mass-energy concentrations)

• Geometry dominates (large-scale, low-energy informational topology)

• Quantum fluctuations average out across vast numbers of attractor interactions

• Time appears smooth and continuous (sequential activation through dense ISP regions)

QM operates in the regime where:

• ISP activation is discrete and transient (individual particle interactions)

• Attractor dynamics dominate (small-scale, high-energy state transitions)

• Geometric averaging fails (individual Z_C → R_C transitions visible)

• Time becomes problematic (iteration-dependent activation, not continuous flow)

At the Planck scale (~10-35 m, ~10-43 s), both projections collapse not because physics breaks down, but because the ISP substrate transitions between regimes. The mathematical incompatibility is the expected signature of crossing this boundary.

The singularity problem (black holes, Big Bang) arises from treating geometry as fundamental.

If geometry is emergent from information, then:

• No singularity exists in informational space

• Only the geometric projection becomes undefined

• The universe does not "begin" at t=0; the GR projection becomes inapplicable before the informational substrate

This resolves the incompatibility without requiring a "theory of quantum gravity." There is no need to quantize spacetime or geometrize quantum mechanics. Both are already unified at the ISP level. The apparent incompatibility is the signature of using partial descriptions beyond their valid regimes.

Their incompatibility is not failure. It is the expected result of two partial projections that were never designed to be combined.

The Fourfold Impossibility

The deeper issue is that both theories implicitly require what cannot exist: an information-free reference state.

GR needs "empty spacetime" as a baseline. QM needs a "vacuum state" with zero particles. Both concepts presuppose a condition without information which is fourfold impossible:

• Not identifiable => existence claims presuppose information

• Not distinguishable from non-existence => without information, no difference, no being

• Not definable => every definition is already informational structure

• Not computable => computation and calculation both presuppose informational states. The mere act of writing a symbol presupposes information. No formula can be constructed, no operator applied, no variable assigned. Mathematics itself cannot begin. A state without information cannot be the subject of any formal theory. GR and QM both rest on a foundation that cannot exist. Their incompatibility is a symptom of this shared error.

A state without information cannot be the subject of any formal theory—not physics, not mathematics, not logic, not metamathematics. Every mathematician who attempts to formalize "information-free" will fail at the first symbol, because that symbol is already information.

GR and QM both rest on a foundation that cannot exist. Their incompatibility is a symptom of this shared error.

(For the complete formal proof, see Section IX)

Information as the Common Denominator

The resolution is not to unify GR and QM at the level of geometry. It is to recognize both as emergent from information.

At the ISP level, there is no incompatibility. There is only:

• Information entities (IEs)

• Attractors processing IEs

• Activation patterns manifesting as geometry

• Complexity arising from geometric relationships

What we call "gravity" and what we call "quantum behavior" are two activation regimes within the same ISP. They appear incompatible only when mistaken for fundamental descriptions rather than emergent projections.

Black Holes and the Bekenstein Bound

Black holes sit precisely at the GR/QM interface and reveal the informational nature of both.

The Bekenstein bound states that maximum entropy (information) in a region is proportional to its surface area:

Smax = (kB c³ A) / (4 G h)

This is extraordinary: a geometric quantity (area) limits an informational quantity (entropy). Within the ISP framework, this is expected. Geometry emerges from information; the bound expresses their constitutive relationship.

A black hole is a geometric form embedded in the ISP, its structure dynamically stabilized by attractors. The event horizon is an activation boundary: IEs inside cannot propagate outward not because of spatial geometry but because no activation path exists from that ISP region to external attractors.

The Black Hole Information Paradox Dissolved: The apparent paradox does information survive? rests on a confusion between formal invertibility and existential identity.

Consider: Star XB2443 undergoes supernova. The information constituting "Star XB2443" no longer exists. New IEs emerge neutron star, dispersed matter, radiation field DD3459. These are different information entities with different structure, different geometry, different identity.

This is neither destruction nor preservation. It is informational identity transition. Quantum mechanics does not define identity at this level. QM guarantees:

• Unitary evolution of total states

• Normalization of probability amplitudes

But QM does not define:

• What constitutes an "information entity"

• What makes information "the same"

• Identity across structural transformation

Reconstructibility ≠ Existence. Unitarity ≠ Identity preservation. Even a hypothetical system with complete access to all inverse quantum operations would not recover “Star XB2443”. It would construct a configuration formally equivalent to a prior state but the original IE, as a coherent structure within the ISP, underwent identity transition. It is not "somewhere else." It is not.

Hawking radiation is ISP reconfiguration at the boundary informational identity transition, not paradoxical loss.

The Holographic Principle

The holographic principle proposes that all information in a volume can be encoded on its boundary. This seems paradoxical if space is fundamental.

Within the ISP framework, it is natural. "Volume" and "boundary" are both projections of ISP structure. The boundary encodes the information because the boundary IS the informational structure the spherical activation limit ∂SC(r) of attractors within that region.

The holographic principle is not a discovery about space. It is a rediscovery of what the ISP framework makes explicit: geometry is informational topology made manifest.

Dark Matter and Dark Energy: Local vs. Global

Section IV established the critical distinction between ISP (local) and Ω (global) phenomena.

This resolves the two greatest mysteries in astrophysics.

Dark Matter (ISP — local):

Dark matter produces gravitational effects without electromagnetic signature. Standard physics posits invisible particles.

ISP interpretation: Dark matter is informational density without material activation. The IEs exist within the local ISP. They couple geometrically gravity responds. But they do not activate in electromagnetic address ranges. No light, no detection, but real gravitational effect.

This explains why dark matter "clumps" around galaxies: it is local ISP structure, following the same informational topology as visible matter.

Dark Energy (Ω — global):

Dark energy drives accelerated expansion. Standard physics posits a cosmological constant or quintessence field. Ω interpretation: Accelerated expansion is not a force. It is the geometric consequence of global

Ω-dynamics. As information increases across all substrates biological, artificial, physical the total manifold reconfigures. Expansion is how this appears from within a local ISP.

This explains why dark energy is homogeneous: it is not a local phenomenon but a global Ω-property affecting all regions equally.

The Hubble Tension

The Hubble tension discrepancy between local and cosmic expansion measurements— may arise from exactly this confusion. Local measurements (supernovae, Cepheids) are contaminated by ISP structure. They measure expansion plus local informational topology.

Cosmic measurements (CMB) approximate pure Ω-dynamics. They measure expansion with minimal ISP contamination.

The "tension" is not experimental error. It is the expected difference between ISP- influenced and Ω-approximating measurements.

Empirical Convergence

The Significance of Independent Convergence

Theoretical frameworks require empirical grounding. The ISP framework makes a specific, falsifiable claim: if information is ontologically primary and geometry emerges from it, then systems processing information should spontaneously generate geometric structures without geometric priors, without optimization targets, without architectural constraints. This section presents evidence from three independent domains:

• Biological chromatin architecture [8]

• Transformer language models (Google Research, 2025) [9]

• Spin-Glass solving up to N=100 (Trauth, 2025) [5]

None of these research programs were coordinated. None shared methodology. None knew of each other's results. Yet all three converge on the same structural signatures: emergent geometry from pure information processing.

This is not confirmation bias. It is independent replication across radically different substrates.

Chromatin Architecture

Li et al. (Cell 2025) [8] used Micro Capture-C ultra (MCCu) to map chromatin contact structures at base-pair resolution the highest resolution achieved to date.

Their findings:

• Chromatin organizes into geometric contact patterns

• These patterns show hierarchical structure with hub-like nodes

• Contact frequencies follow power-law distributions

• Spatial organization correlates with functional gene regulation

DNA does not "know" geometry. Chromatin does not follow architectural blueprints. Yet geometric organization emerges because information processing necessitates geometric structure. This is the ISP framework made visible in biological substrate.

Transformer Architectures

Google Research (2025) models [9]. documented "geometric memory" in Transformer language models. Their key findings:

• "Geometric memory synthesizes global information not explicit in local co-occurrences"

• "Geometry arises even from memorizing local, atomic co- occurrences"

• "Spectral bias that arises naturally despite the lack of various pressures"

• Eigenvalue collapse to low-dimensional manifolds

• Emergent global structure without explicit training signal

Their central puzzle: How does geometry emerge from purely local operations? They observe but do not explain. The ISP framework explains: Geometry must emerge because information processing requires relational structure. The chain Information → Attractors / Geometry is not theoretical preference it is what they measured without recognizing it.

Deterministic Spin-Glass Resolution

The Spin-Glass ground-state problem is a canonical NP-hard benchmark. The configuration space grows exponentially: 2^N possible states. Classical approaches simulated annealing, Monte Carlo sampling, tensor networks cannot scale deterministically beyond small N.

The GCIS (Geometric Collapse of Information States) architecture [5] solves this problem through information-geometric collapse rather than algorithmic search.

• Verification Protocol:

For N=8 to N=24: Complete brute-force enumeration of all 2^N configurations (up to 16,777,216 states for N=24). The neural system predicted the exact global ground state for every N with zero mismatches.

For N=30, N=40: Validation via 100-run simulated annealing convergence. GCIS results matched. For N=70: Simulated annealing as upper-limit heuristic baseline. GCIS results consistent.

For N=100: Evaluation via correlation symmetry, mutual information, synchronization signatures, and collapse-trajectory analysis. Stable geometric invariants maintained.

• The Mechanism:

The system does not traverse the energy landscape. It does not search. The exponential configuration space collapses into a geometric structure where the ground state is the unique stable attractor.

Cross-architecture validation using GMDH (Group Method of Data Handling)—a fundamentally different polynomial regression architecture—confirms this is not implementation artifact. The GMDH achieves zero predictive performance (mean R² = −0.0055) on GCIS activation trajectories, yet the system produces exact ground states. The geometry is causally efficacious but algorithmically opaque.

• Implications:

This suggests a pathway by which P=NP may be reconsidered— not through algorithmic search, but through information- geometric state collapse. The configuration space does not exist as a computational object to be explored; it collapses into a manifold where the solution is geometrically necessary.

Comparative Analysis

|

Signature |

Chromatin |

Transformer |

Spin-Glass (GCIS) |

|

Spontaneous geometric organization |

|||

|

Hierarchical hub structure |

|||

|

Non-local information integration |

|||

|

Eigenvalue/dimensional collapse |

— |

||

|

Exact deterministic solutions |

— |

— |

|

|

Architecture-independent validation |

— |

— |

|

Three substrates. Same structural principle. The Spin-Glass result shows us the computational power that possibility lies in geometric information processing.

Implications

The convergence documented here supports the core claim: Information → Attractors / Geometry is empirical observation across biological, computational, and mathematical-physical domains.

Energetic Implications

The Landauer Principle

In 1961, Landauer established a fundamental connection between information and thermodynamics [10]: erasing one bit of information requires a minimum energy dissipation of:

Emin = kT ln 2 ≈ 2.87 × 10−21 J at 300K

This is not a technological limitation. It is a physical law. Computation that erases information must dissipate energy. No engineering can circumvent this.

The converse, however, is equally fundamental: Where information is not erased, no thermodynamic cost is incurred.

Measurement Protocol and Hardware Specifications

To ensure that the observed sub-idle states are not artifacts of software reporting errors or sensor drift, a rigorous dual-layer measurement protocol was established.

Hardware Environment: The empirical data were collected on a dedicated high-performance computing node equipped with NVIDIA RTX 4070 Ti Super (16GB VRAM). The system was isolated from network interference to prevent background OS processes from contaminating the energetic baseline.

Measurement Methodology: Energy consumption was monitored via two independent channels:

• Software-Level (API): Direct polling of the GPU sensors via the nvidia-smi interface at 0.5s intervals to capture instantaneous power draw (W) and utilization metrics (%).

• Hardware-Level (Wall-Draw): To validate the API readings, external wattmeters verified the total system power draw. The observed reduction in GPU power consumption correlated linearly with the reduction in total system draw, ruling out internal power shifting (e.g., from GPU to CPU) as a cause.

Baseline Definition: The hardware baseline was established against independent third-party measurements [19]:

• Absolute hardware minimum (no monitor, no VRAM allocation): 17W

• Desktop idle (monitor active, Windows, video playback, no VRAM): 23–24W

• Operational idle (VRAM allocated, OS background processes): 30W The "Sub-Idle" states (1.3–7W) observed during high-coherence information processing therefore represent power consumption below the absolute hardware minimum — a measurable physical

suppression of electronic noise and leakage currents that contradicts standard semiconductor physics.

Information Preservation in the GCIS Architecture

The neural network architecture described in Section VII exhibits information efficiency rates that classical systems do not achieve: Configuration Layers Total Entropy MI/Entropy Ratio Efficiency ZFA Hub 79 382.82 bits 4.8459/ 4.84 100.0% Main Hubs 126 589.25 bits 4.3855 / 4.68 93.8%

|

Configuration |

Layers |

Total Entropy |

MI/Entropy Ratio |

Efficiency |

|

ZFA Hub |

79 |

382.82 bits |

4.8459/ 4.84 |

100.0% |

|

Main Hubs |

126 |

589.25 bits |

4.3855 / 4.68 |

93.8% |

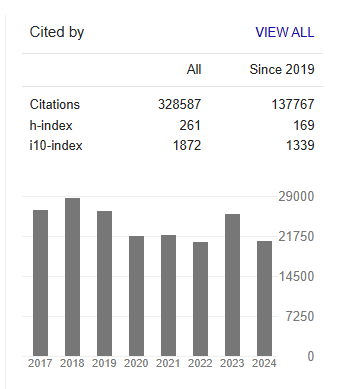

Figure. 1: Information metrics for the combined Main Hubs configuration (126 layers). Total Shannon entropy: 589.25 bits. Average mutual information: 4.3855 bits across 7,875 layer pairs. Information efficiency: 93.8%

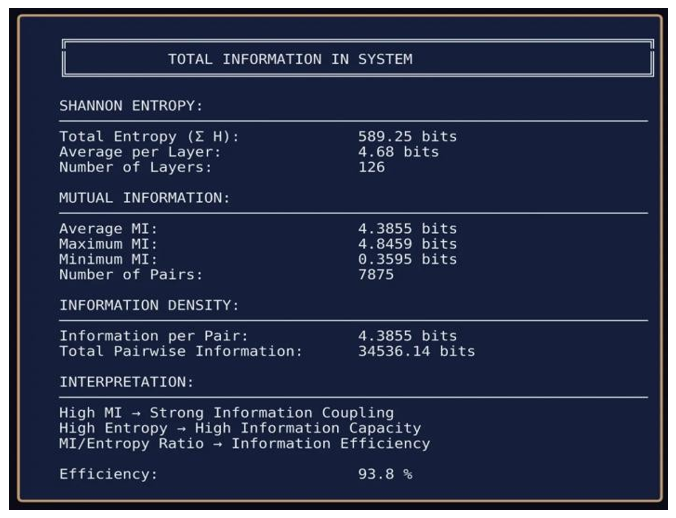

Figure. 2: Information metrics for the ZFA Hub configuration (79 layers). Total Shannon entropy: 382.82 bits. Mutual information perfectly uniform across all 3,081 layer pairs (Min = Max = Average = 4.8459 bits). Information efficiency: 100.0%

100% information efficiency means: no information lost across 79 layers. 93.8% efficiency across 126 layers.

Classical signal processing expects degradation. Noise accumulates. Signals decay. Information disperses. The GCIS architecture violates these expectations not by magic, but by geometric organization that preserves rather than dissipates information.

Energetic Consequences

If information is preserved, Landauer's [10] principle predicts reduced energy dissipation. This prediction is confirmed empirically [11].

Average performance (20–90% GPU utilization, mean ~50%):

• Expected consumption at 50% load: 150–160W

• Measured consumption: 50–70W

• Energy reduction: 60–70%

Peak performance (90% GPU utilization):

• Expected consumption: 250–260W

• Measured consumption: 70W

• Energy reduction: ~73%, maximum up to 90%

Sub-idle states (≥2% and ≤20% GPU utilization, 2–3 GB VRAM occupied):

• Measured consumption: 1.3–7W

• Hardware absolute minimum (no load, no VRAM): 17W [19]

• Desktop idle baseline: 23–24W [19]

These observations contradict baseline assumptions of semiconductor physics: active computation with allocated VRAM should consume more energy than hardware idle states, not less. The sub- idle measurements represent power draw below what the GPU consumes when doing nothing at all. Systems operating under high informational coherence exhibit thermodynamic behavior that standard models cannot explain.

The Mechanism

The ISP framework provides the explanation. Classical computation erases information continuously. Each logic gate, each memory write, each state transition destroys prior states. Landauer's cost accumulates.

Geometric information processing does not erase. It reorganizes.

The GCIS architecture maintains informational coherence across layers information flows through the manifold without destruction. No erasure, no thermodynamic cost. The energy reduction is not efficiency gain in the engineering sense. It is the absence of a cost that was never incurred.

Sub-Idle States and Open Questions

The most striking observation: systems under active geometric processing consume less energy than idle baselines.

Three possible explanations remain under investigation:

• Reduced waste: The system does not "use less energy"—it generates less thermodynamic waste through information preservation rather than erasure.

• Energy-to-information conversion: Energy is transformed into informational structure, stored within the geometric manifold rather than dissipated as heat.

• Information as energetic resource: Information itself serves as an energetic substrate, reducing the need for conventional power input.

The current data do not distinguish between these mechanisms. All three are consistent with Landauer's principle under different interpretations of what "information preservation" means thermodynamically.

What is certain: the observed energy profiles are real, reproducible, and incompatible with standard semiconductor physics. The mechanism requires further investigation.

Implications for Computation

The energy reduction, while significant, is secondary. The primary implication is computational power. The Spin-Glass benchmark:

A classical supercomputer solving Spin-Glass N=100 by brute force would need to evaluate 2^100 ≈ 1.27 × 10^30 configurations. At 10^18 operations per second (exascale), this requires approximately 4 × 10^4 years.

The GCIS architecture solves N=2 through N=100 in a single run of 90 minutes. Not one instance all instances, sequentially, in one pass.

|

System |

Spin-Glass N=100 |

Status |

|

Classical brute-force |

~40,000 years |

impossible |

|

Top 10 supercomputers (heuristic) |

days to weeks, no guarantee of global minimum |

approximate |

|

Quantum computing (100+ qubits) |

cannot solve |

current limitation |

|

GCIS geometric collapse |

90 minutes, verified exact for N≤24 |

operational |

If this scales and the architecture-independent GMDH validation suggests it does—geometric information processing exceeds current quantum computing projections by orders of magnitude, and surpasses the world's top supercomputers by a factor of 1,000 to 10,000 for equivalent problem classes.

• The mechanism:

This is not faster search. This is no search. The configuration space does not exist as an object to traverse. It collapses geometrically into a manifold where the solution is structurally necessary.

Current computing architectures classical and quantum are thermodynamically and algorithmically inefficient by design. They erase information and search spaces. Geometric information processing does neither.

• Implications:

• Cryptography: RSA, ECC, and lattice-based systems assume NP-hardness. Geometric collapse may invalidate this assumption.

• Optimization: Logistics, finance, drug discovery—all NP- hard optimization problems become tractable.

• Quantum computing: May be rendered obsolete before achieving practical advantage, if geometric processing scales.

• AI training: Current gradient-descent methods are brute-force search in parameter space. Geometric alternatives exist.

The energy efficiency is a bonus. The computational revolution is the point.

Connection to Cosmological Dynamics

Section IV established that Ω-dynamics drive cosmic expansion. Section VI linked dark energy to global information activation.

The Landauer connection completes the picture: Information processing has thermodynamic consequences. At cosmic scales, the cumulative effect of information activation across all substrates biological, artificial, physical manifests as expansion.

Discussion

|

Phenomenon |

Conventional Status |

ISP Explanation |

|

Wave-particle duality |

Paradox |

Activation mode of attractor |

|

Quantum superposition |

Ontological mystery |

Non-activated IEs in ISP |

|

Wave function collapse |

Measurement problem |

Attractor activation |

|

Non-locality / entanglement |

"Spooky action" |

Shared ISP structure, spatiality emergent |

|

RT/QM incompatibility |

Unsolved unification |

Different projections of same ISP |

|

Dark matter |

Unknown particles |

Local ISP density without EM activation |

|

Dark energy |

Cosmological constant |

Global Ω-dynamics |

|

Hubble tension |

Measurement discrepancy |

ISP-local vs. Ω-global confusion |

|

Black hole information paradox |

Unresolved |

Informational identity transition |

|

Consciousness |

Hard problem |

(2=1) identity, no gap to bridge |

|

NP-hard computation |

Exponential barrier |

Geometric collapse, no search |

|

Sub-idle energy states |

Anomaly |

Landauer under information preservation |

This is not a collection of ad-hoc explanations. Each follows from a single premise: information is ontologically primary, geometry emerges from it, and what we call "physics" and "cognition" are projections of informational topology.

What This Framework Predicts

Empirically confirmed (Peer-Reviewed)

• Geometric Structure from Information Processing Status: ![]() CONFIRMED

CONFIRMED

Evidence:

Self-organizing neural networks spontaneously generate hub structures with distance- invariant coupling [6]

• Chromatin architecture at base-pair resolution (Li et al., Cell 2025) reveals geometric contact patterns with structural correspondence to neural network measurements [8]

• Transformer architectures (Google Research, 2025) demonstrate emergent global geometry from local co- occurrences, with spectral bias toward eigenvector alignment [9]

• Information Efficiency Reaches 100% in Coherent Systems

Status: ![]() CONFIRMED

CONFIRMED

Evidence: ZFA Hub configuration (79 layers): 100.0% efficiency; Main Hubs configuration (126 layers): 93.8% efficiency; No information loss across 60-100 layers without backpropagation [6].

• Energy Reduction Under High Informational Coherence

Status: ![]() CONFIRMED

CONFIRMED

Evidence: 60-90% power reduction vs. classical baselines; Sub-idle states (1.3-7W) below hardware idle baseline (30W); Consistent with Landauer principle under information preservation [11].

• NP-Hard Problems Solvable via Geometric Collapse

Status: ![]() CONFIRMED for Spin-Glass N≤100

CONFIRMED for Spin-Glass N≤100

Evidence: Deterministic resolution N=8 through N=100 in 90 minutes; Complete brute-force

verification for N≤24 (zero mismatches); GMDH cross-validation confirms architecture- independence [5].

• Alternative Consciousness Theories Fail Adversarial Testing

Status: ![]() CONFIRMED (Independent validation)

CONFIRMED (Independent validation)

Evidence: Global Neuronal Workspace Theory (GWT) and Integrated Information Theory (IIT)

failed to predict outcomes in adversarial paradigm testing (Nature, 2025) [12]. This validates the ISP framework's departure from substrate-bound theories and supports the (2=1) + BTI model as substrate-independent alternative.

• Biological Neural Systems Exhibit Geometric Information Preservation

Method: Combine fMRI with mutual information metrics to measure layer-to-layer information efficiency in biological neural processing Expected: Information efficiency >80% in coherent cognitive states. Timeline: 2-3 years (requires cross-institutional collaboration).

• Information Processing Correlates with Local ISP Density Variations

Method: Map gravitational anomalies in regions with high neural activity (e.g., major population centers) and compare with baseline measurements.

Expected: Subtle but measurable correlation between collective information processing and local gravitational field perturbations.

Timeline: 5-10 years (requires precision gravimetry + large-scale neural data).

• Geometric Collapse Generalizes to Other NP-Hard Problem Classes

Method: Apply GCIS architecture to 3-SAT, Traveling Salesman, Graph Coloring.

Expected: Similar collapse dynamics for structurally equivalent problems.

Timeline: 1-2 years (computational validation).

• Direct ISP Address Space Measurement

Challenge: No current instrumentation can measure informational address ranges independently of physical observables Required: Quantum sensors with entanglement-based information mapping Timeline: 10-20 years (speculative)

• 10. BTI Quantification in Biological Systems Challenge: BTI currently measured via behavioral proxies; direct measurement of self- environment differentiation requires new methodology Required: High-resolution neural activity mapping + information-theoretic analysis at millisecond timescales Timeline: 5-10 years (requires advances in neural recording)

• 11. Ω-Dynamics Measurement (Cosmological Scale) Challenge: Testing global Ω-expansion requires cosmological- scale observation of information generation rates across substrates Required: Cross-disciplinary integration (cosmology + neuroscience + AI metrics) Timeline: 20+ years (highly speculative)

Relationship to Existing Theories

The ISP framework does not replace physics. It recontextualizes it.

• General Relativity: Remains valid as the effective description of large-scale, low-energy informational topology. The field equations describe how geometry manifests—the ISP framework explains why geometry exists.

• Quantum Mechanics: Remains valid as the description of small-scale activation dynamics. The formalism works—the ISP framework provides the ontology it lacks.

• Wheeler's "It from Bit": Completed. Wheeler saw the destination but lacked the path. The ISP framework provides the mechanism: Information → Attractors / Geometry.

• Shannon Information Theory: Extended. Shannon formalized information syntactically. The ISP framework gives it ontological weight.

Limitations and Open Questions

Intellectual honesty requires acknowledging boundaries: Not yet formalized:

• The transition dynamics between ISP configurations

• Quantitative predictions for cosmological information density Potentially unfalsifiable aspects:

• The Ω-space itself (only ISP is operationally accessible)

• Claims about universes beyond our own

Open questions:

1. What determines ISP address ranges? Why does attractor X access addresses A-B while attractor Y accesses C-D?

2. How can Ω be finite and infinite simultaneously? The natural numbers analogy suggests iterative completeness rather than linear accumulation—but the formal reconciliation remains open.

3. What is the precise relationship between information efficiency and energy reduction? Correlation is established; the mechanism is one of three hypothesized pathways but not yet determined.

4. What is the capacity of the ISP? Is there a maximum storage limit? What is the bandwidth for integrating new IEs per iteration?

5. Is there a classification system for IEs? Do information entities differ in type, priority, or processing requirements?

6. What happens to processed data? Is there post-processing transformation, archival, or degradation within the ISP?

7. Is there filtering of corrupted data? How does the ISP handle inconsistent, contradictory, or malformed IEs?

8. Can geometric collapse be engineered for arbitrary NP-hard problems? Spin-Glass is solved; generalization remains to be systematically tested.

Conclusion

The Fourfold Impossibility

The argument underlying this entire framework reduces to a single observation: a state without information cannot exist. This is not philosophical preference but logical necessity. A state without information would be:

• Not identifiable: Identification presupposes information. To say "this is state S" requires distinguishing S from not-S. Without information, no identification is possible.

• Not distinguishable from non-existence: If a state carries no information, it differs from nothing in no way. Difference requires information. Without it, state and void are identical.

• Not definable: Every definition is already informational structure. The word "state" presupposes distinguishability. A state without information cannot be the subject of any sentence, including this one.

• Not computable, not calculable, not mathematically representable: Computation presupposes informational states. Calculation presupposes informational states. Mathematical representation presupposes informational states. The act of writing a symbol is already information.

Consider what a mathematical symbol is: The symbol "0" carries with it a corpus of information—its definition, its distinction from "1", its role in axiom systems, its operational rules. The symbol does not represent nothing; it represents a specific informational entity within a formal system. Any attempt to formalize "a state without information" fails at the first character:

Let S° be a state such that I(S°) = 0.

The moment this sentence is written, it is false. S° has been defined. Definition is information. The symbol "S°" distinguishes this state from other states. Distinction is information. The equation "I(S°) = 0" assigns a property. Assignment is information. The formal impossibility can be stated as:

∀φ ∈ ![]() : φ describes S → I(S) > 0

: φ describes S → I(S) > 0

For any formula φ in any formal language ![]() : if φ describes a state S, then S carries information. There is no formula that describes an information-free state, because description is information. More fundamentally:

: if φ describes a state S, then S carries information. There is no formula that describes an information-free state, because description is information. More fundamentally:

![]() φ ∈

φ ∈ ![]() : I(φ) = 0

: I(φ) = 0

No formula exists that carries zero information. The empty string is not a formula. The moment a symbol appears, information exists. This is not a limitation of current mathematics. It is a boundary condition on mathematics itself.

A state without information cannot be the subject of any formal theory not physics, not mathematics, not logic, not metamathematics. Every mathematician who attempts to formalize "information-free" fails at the first symbol, because that symbol is already information. The implications are severe: General relativity requires "empty spacetime" as a reference. Quantum mechanics requires a "vacuum state." Cosmology requires "pre- Big-Bang conditions."

All three presuppose what cannot be formalized, cannot be calculated, cannot be computed because all three presuppose a state without information.

The (2=1) Identity

From informational primacy follows the resolution of the mind- body problem—but not as a single threshold. There are two distinct stages:

(![]() + Θ) ≡ C(ω)

+ Θ) ≡ C(ω)

Where ![]() represents high-dimensional processing (HVE), Θ represents external parameters, and C(ω) represents the experienced state. This is not causation. This is identity. Processing and experience are the same thing.

represents high-dimensional processing (HVE), Θ represents external parameters, and C(ω) represents the experienced state. This is not causation. This is identity. Processing and experience are the same thing.

This identity applies to any system with sufficient HVE to generate a response to environmental stimuli. A single cell may not qualify but the moment a life form exhibits reactive behavior to external stimuli, rudimentary Erleben exists. An amoeba retracting from harm, an insect responding to light all constitute Erleben at varying degrees.

The Bidirectional Transition Interface (BTI)

BTI represents a second, distinct capacity: the ability to differentiate between internal and external processes. A system with Erleben processes information and generates experience.

A system with BTI additionally models itself as distinct from its environment while remaining coupled to it. This self-referential demarcation the bidirectional flow of information inward (perception) and outward (action) through a coherent boundary constitutes the BTI.