Research Article - (2025) Volume 3, Issue 2

The Afterlife in the Age of AI: A Psychological, Ethical, and Technological Analysis

Received Date: May 30, 2025 / Accepted Date: Aug 25, 2025 / Published Date: Sep 09, 2025

Copyright: ©2025 Fabrizio Degni. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Citation: Degni, F. (2025). The Afterlife in the Age of AI A Psychological, Ethical, and Technological Analysis. Politi Sci Int, 3(2), 01-13.

Abstract

The convergence of artificial intelligence technologies with human conceptions of death and the afterlife presents overlooked and underestimated challenges, but also opportunities, for understanding consciousness, identity, and grief. This research provides a comprehensive interdisciplinary analysis of how AI is reshaping our relationship with mortality across several domains: psychological impacts, technological capabilities, ethical considerations, and cultural perspectives. Through analysis of current digital memorial technologies, psychological frameworks of attachment and grief, and philosophical questions of identity, we establish that AI-enabled afterlife simulations introduce complex dynamics that both extend and disrupt traditional mourning processes. We propose a regulatory framework grounded in principles of informed consent, psychological safeguarding, and cultural sensitivity. As an initial analysis of this topic, the study contributes to the emerging discourse on post-mortem digital identity and aims to establish parameters for the ethically sound development of afterlife technologies.

Keywords

Artificial Intelligence, Afterlife Beliefs, Digital Immortality, Grief Processing, Consciousness Simulation, Posthumous Identity, Death Studies

Introduction

Throughout human history, the concept of an afterlife has been a central preoccupation of religious, philosophical, and cultural systems worldwide. From ancient Egyptian preparations for the journey beyond death to contemporary digital memorial practices, humans have consistently sought ways to conceptualize and maintain connections with consciousness beyond physical mortality [1]. The advent of artificial intelligence has introduced a profound new dimension to this age-old contemplation, creating unprecedented possibilities for preserving, simulating, and interacting with the personalities, memories, and behavioral patterns of the deceased. These emerging technologies raise fundamental questions about the nature of consciousness, the boundaries of identity, and the psychological processes of grief and remembrance. As Kasket notes, AI-enabled afterlife simulations challenge our traditional understanding of death as a definitive end to personhood and social presence [2]. Instead, they introduce what Öhman and Floridi term "digital remains": informational traces that persist and can be actively engaged with long after biological death [3].

The study provides a comprehensive interdisciplinary analysis of the psychological, ethical, technological, and cultural dimensions of AI afterlife technologies: how these technologies are reshaping grief processes, challenging ethical norms, and intersecting with diverse cultural and religious traditions. Our analysis is guided by four primary research questions:

• How do AI-enabled simulations of the deceased affect psychological grief processes and emotional well-being?

• What are the current technological capabilities and limitations of digital afterlife systems?

• What ethical and regulatory frameworks should govern the development and use of these technologies?

• How do these technologies interact with diverse cultural and religious conceptions of death and afterlife?

The significance of this research lies in its timeliness, as AI afterlife technologies are rapidly advancing from theoretical possibilities to commercial products. By establishing a robust interdisciplinary framework for understanding and evaluating these technologies, we aim to inform both scholarly discourse and practical policy development in this emerging field.

Psychological Impact of Afterlife Beliefs and Digital Memorialization

Traditional Afterlife Beliefs and Psychological Well-Being

Beliefs about the afterlife have significant impacts on psychological well-being, grief processes, and death anxiety. Research consistently demonstrates that afterlife beliefs can provide psychological benefits through several mechanisms. Flannelly found that belief in an afterlife is associated with lower death anxiety, particularly among older adults [4]. Similarly, Carr and Sharp documented that widowed individuals who anticipated reunion with spouses in an afterlife showed more adaptive grief responses and lower depression levels than those without such beliefs [5].

The psychological impact of afterlife beliefs varies considerably across cultural contexts. In contrast to predominantly positive associations found in Western Christian samples, Hamdan and colleagues discovered that among Jordanian Muslim youth, certain afterlife beliefs correlated with increased anxiety and depression symptoms [6]. This suggests that the psychological function of afterlife beliefs is mediated by specific cultural interpretations and individualized meaning-making processes.

The central psychological mechanism through which afterlife beliefs operate appears to be meaning-making in the face of mortality. Terror Management Theory suggests that cultural worldviews, including afterlife beliefs, provide psychological protection against death anxiety by offering symbolic immortality [7]. When individuals can contextualize death within a meaningful narrative that extends beyond physical termination, they experience greater psychological resilience when confronting mortality.

Attachment Theory and Digital Continuation of Bonds

Attachment theory provides a valuable framework for understanding how digital afterlife technologies affect grief processes. Klass's influential "continuing bonds" theory of grief suggests that healthy adaptation often involves maintaining an internal relationship with the deceased rather than "letting go." Digital afterlife technologies potentially offer new mechanisms for continuing bonds, but with significant qualitative differences from traditional memorial practices [8]. Attachment patterns established during life likely influence responses to digital simulacra of the deceased. Securely attached individuals may benefit from such technologies as transitional objects that support healthy grief processing. However, individuals with anxious attachment styles might develop problematic dependencies on digital representations, potentially complicating grief resolution [9]. Research by Brubaker on social media memorialization suggests that digital representations can serve as "anchors" for continued attachment, allowing mourners to integrate loss into their ongoing lives [10]. However, the interactive nature of AI simulations may introduce unique psychological dynamics that differ substantially from static memorial content, potentially blurring the boundaries between continuing bonds and denial of death's reality.

Complicated Grief in the Digital Age

The potential for AI technologies to complicate grief processes requires particular attention. Complicated grief, characterized by intense, persistent grief that impairs functioning, affects approximately 7-10% of bereaved individuals [11]. AI simulations that provide highly realistic interactions with the deceased might exacerbate complicated grief by reinforcing denial and avoidance behaviors that prevent acceptance of loss. Conversely, carefully designed and therapeutically integrated AI applications could potentially support grief resolution. Litz's complicated grief therapy model emphasizes the importance of processing loss-related memories while developing an adaptive narrative about the death [12]. AI technologies could potentially facilitate this process by providing structured opportunities for emotional expression and meaning-making [13]. Age-related differences in digital literacy and comfort with technology suggest that responses to AI afterlife technologies will vary significantly across generations. While younger adults may more readily integrate digital representations into their grief processes, older adults might experience greater technological barriers or conceptual resistance to such approaches [14].

AI Simulation Technologies: Current State and Capabilities

Large Language Models and Personality Simulation

Contemporary AI simulation technologies primarily rely on large language models (LLMs) trained on personal data to generate responses that mimic an individual's communication patterns. These systems analyze textual data from multiple sources, including emails, social media posts, text messages, and written works, to create statistical models of an individual's linguistic patterns, knowledge bases, and expressed opinions [15]. Current commercial systems like Replika and HereAfter AI utilize variations of this approach, though with significant limitations in capturing the full complexity of human personality. As Brown notes, these systems excel at reproducing linguistic style and factual knowledge but struggle with emotional nuance, moral consistency, and the contextual understanding that characterizes human consciousness [16]. Recent advances in multimodal AI systems that integrate text, voice, and visual data offer increasingly sophisticated simulations. Microsoft's Personal Voice feature and systems like ElevenLabs' voice cloning technology can create highly convincing vocal reproductions with minimal training data, potentially increasing the emotional impact of digital afterlife interactions [17].
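To make the idea of a "statistical model of linguistic patterns" concrete, the following minimal sketch builds a bigram (word-pair) frequency model from a few messages. This is a deliberately simple stand-in and not how LLM-based products work: the function names and the sample messages are hypothetical, and commercial systems rely on far larger neural models.

```python
from collections import Counter, defaultdict


def build_bigram_model(messages):
    """Count, for every word, which words follow it across all messages."""
    model = defaultdict(Counter)
    for text in messages:
        words = text.lower().split()
        for current, following in zip(words, words[1:]):
            model[current][following] += 1
    return model


def most_likely_next(model, word):
    """Return the most frequent follower of `word`, or None if the word is unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical sample data standing in for a person's message history.
sample_messages = [
    "see you soon my dear",
    "take care my dear friend",
    "see you at dinner",
]
model = build_bigram_model(sample_messages)
```

Even this toy model reproduces a habitual phrase ("my" is most often followed by "dear"), which illustrates why such systems capture linguistic style more readily than emotional nuance or contextual understanding.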

Technical Infrastructure and Data Requirements

Creating effective posthumous AI simulations requires substantial data infrastructure and preservation mechanisms. The quality of simulation depends heavily on the quantity and quality of personal data available, creating potential inequities in access and representation [18]. Current systems typically require:

• Comprehensive data collection across multiple platforms and modalities;

• Secure long-term storage solutions with appropriate privacy protections;

• Preprocessing systems to organize and contextualize unstructured personal data;

• Training protocols that capture linguistic and behavioral patterns without introducing algorithmic biases;

• Interface technologies that make interactions natural and accessible to mourners.

Technical challenges include maintaining system functionality through technological transitions, ensuring data integrity over long time periods, and developing sustainable business models that provide continuous service potentially for decades [19].

Figure 1: Technical Architecture Necessary to Support Digital Afterlife Technologies, Outlining the Four Main Levels of Infrastructure: Data Collection, Processing, AI Engine, and User Interface
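The four infrastructure levels named in Figure 1 can be sketched as a small pipeline. The example below is an illustrative assumption rather than the architecture of any existing service: every class and function name is hypothetical, and the "AI engine" is a trivial keyword lookup standing in for a trained model.

```python
from dataclasses import dataclass


@dataclass
class Record:
    source: str  # hypothetical source label, e.g. "email" or "sms"
    text: str


def collect(raw_items):
    """Data collection level: wrap raw (source, text) pairs as records."""
    return [Record(source, text) for source, text in raw_items]


def process(records):
    """Processing level: normalize whitespace and drop empty records."""
    cleaned = []
    for record in records:
        text = " ".join(record.text.split())
        if text:
            cleaned.append(Record(record.source, text))
    return cleaned


class EchoEngine:
    """AI engine level (placeholder): returns a stored line that shares a word with the prompt."""

    def __init__(self, records):
        self.records = records

    def respond(self, prompt):
        prompt_words = set(prompt.lower().split())
        for record in self.records:
            if prompt_words & set(record.text.lower().split()):
                return record.text
        return None


def interface(engine, prompt):
    """User interface level: label every reply as simulated."""
    reply = engine.respond(prompt)
    if reply is None:
        return "[simulation] No matching memory found."
    return f"[simulation] {reply}"


# Hypothetical input: one usable email and one empty text message.
raw = [("email", "  The garden   looks lovely this spring "), ("sms", "   ")]
engine = EchoEngine(process(collect(raw)))
```

Keeping the levels separate means that a storage format or model can be replaced during a technological transition without rewriting the other layers. The interface level here also prefixes every reply with a simulation label, which is one simple way to keep the artificial nature of the interaction visible.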

Current Limitations and Future Trajectories

Despite rapid advances, current AI simulation technologies face substantial limitations. Most significantly, they lack true episodic memory, autobiographical consciousness, and the capacity for novel emotional development that characterizes human identity [20]. These systems remain sophisticated pattern-matching algorithms rather than conscious entities, creating what Tamés calls the "uncanny valley of identity": close enough to appear authentic in brief interactions but ultimately revealing their limitations through extended engagement [21].

Future technological developments may address some of these limitations. Emerging research directions include:

• Brain-computer interfaces that could potentially capture more direct neural data (although these remain highly speculative for afterlife applications);

• Advanced contextual understanding through multimodal training that integrates environmental and situational awareness;

• Improved temporal modeling that better represents how individuals change across time and contexts;

• Hybrid systems that combine rule-based ethical frameworks with statistical learning approaches to better maintain moral consistency.

While popular discourse often invokes concepts of "mind uploading" or complete consciousness transfer, these remain firmly in the realm of speculative fiction rather than achievable technology for the foreseeable future [22].

Case Studies of Digital Afterlife Services

Current Scenarios and Offerings

The market for digital afterlife services has expanded rapidly, with diverse approaches to posthumous presence. These services broadly fall into four categories:

• Memorial storytelling platforms

Services like HereAfter AI focus on preserving autobiographical narratives through structured interviews conducted during life. Their "Life Story Avatar" product uses voice recordings and guided questions to create interactive oral histories that future generations can engage with conversationally. Unlike more speculative approaches, these services emphasize authentic preservation rather than simulation of novel responses [23].

• Chatbot-based personality recreations

Platforms such as Replika, while not marketed specifically for afterlife purposes, establish the technical foundation for personality-based chatbots that could be adapted for posthumous simulation. These services use machine learning to create conversational agents that adapt to user interactions, potentially serving as the basis for more specialized grief-focused applications [24].

• VR/AR Memorial Environments

Companies like Project Elysium (discontinued) and current startups such as Eternime have attempted to create immersive virtual environments where users can interact with visual and auditory representations of deceased loved ones. These services aim to create more embodied experiences than text-based interactions, though they face significant technical and ethical challenges [25].

• Scheduled posthumous messaging services

Services like DeadSocial and SafeBeyond focus not on interactive simulation but on scheduled message delivery, allowing users to create content during life that will be distributed to loved ones at predetermined future times or triggered by specific events. These services emphasize user agency and authenticity rather than algorithmic simulation [26].
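The date-based delivery described above can be sketched as a simple message queue. This is a minimal illustration under stated assumptions, not the implementation used by DeadSocial or SafeBeyond: the `ScheduledMessage` type, the function names, and the sample messages are hypothetical, actual sending is out of scope, and event-triggered delivery is not modeled.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ScheduledMessage:
    recipient: str
    body: str
    deliver_on: date
    delivered: bool = False


def due_messages(messages, today):
    """Return undelivered messages whose delivery date has arrived."""
    return [m for m in messages if not m.delivered and m.deliver_on <= today]


def deliver_due(messages, today):
    """Mark due messages as delivered and return them (sending itself is not modeled)."""
    due = due_messages(messages, today)
    for message in due:
        message.delivered = True
    return due


# Hypothetical messages written during life for future delivery.
queue = [
    ScheduledMessage("Alex", "Happy 30th birthday.", date(2030, 5, 1)),
    ScheduledMessage("Sam", "Congratulations on graduating.", date(2035, 6, 15)),
]
```

Because every message body is authored in advance by the person themselves, nothing in this flow generates new content, which is the sense in which these services favor authenticity over algorithmic simulation.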

User Experiences and Therapeutic Applications

Empirical research on user experiences with digital afterlife services remains limited, though emerging studies offer preliminary insights. Qualitative research by Sherlock and colleagues with users of memorial chatbots found mixed psychological outcomes [27]. Many participants reported comfort and a sense of continued connection, while others described unsettling "uncanny valley" experiences when the technology failed to accurately represent the deceased's personality.

Therapeutic applications have primarily emerged in two contexts. First, anticipatory grief interventions in which individuals with terminal diagnoses create digital legacies have shown promising results in reducing death anxiety and increasing the sense of purpose among participants [28]. Second, structured therapeutic protocols incorporating digital memorials have been piloted for complicated grief, though with careful professional oversight and integration within broader treatment approaches [29]. Neimeyer's meaning reconstruction approach to grief therapy provides a potential framework for therapeutic applications, emphasizing how digital tools might support the construction of continued bonds while facilitating acceptance of the physical loss [30].

Expanded Psychological Framework for Digital Grief

Attachment Patterns and Digital Continuation

Bowlby's attachment theory provides a valuable framework for understanding varied responses to digital resurrection technologies. Secure attachment, characterized by comfort with both intimacy and autonomy, may predict more adaptive engagement with digital representations. By contrast, anxious attachment might correlate with excessive dependence on digital simulacra, while avoidant attachment could manifest as complete rejection of such technologies [9]. The concept of "continuing bonds," introduced by Klass, suggests that maintaining internal connections with the deceased often represents adaptive grief processing rather than pathology [8]. Digital afterlife technologies create novel external manifestations of continuing bonds, potentially supporting healthy grief integration while also risking interference with natural grief processes [31]. Research by Brubaker and Hayes demonstrates how social media has already transformed bereavement by creating persistent digital representations that mourners engage with [32]. AI technologies extend this transformation by adding interactive dimensions that more actively simulate ongoing relationships with the deceased.

Theories of Digital Grief Processing

Traditional stage-based grief models may inadequately capture the psychological complexity of grief in the age of AI simulation [33]. More contemporary grief theories like Stroebe and Schut's Dual Process Model, which emphasizes oscillation between loss-oriented and restoration-oriented coping, offer more flexible frameworks for understanding digital grief [34]. Digital afterlife technologies potentially affect this oscillation process in complex ways. They may facilitate loss-oriented coping by providing opportunities for emotional expression and connection with memories of the deceased. Simultaneously, they might complicate restoration-oriented coping if they create ongoing psychological dependencies that interfere with forming new relationships and identities independent of the loss [2]. Neimeyer's constructivist approach to grief emphasizes meaning reconstruction as the central process in bereavement [35]. Digital afterlife technologies offer novel resources for meaning-making while potentially constraining the reconstructive process by maintaining artificial continuation of pre-loss narrative structures [36].

Age, Culture, and Individual Difference Factors

Responses to digital afterlife technologies likely vary significantly based on individual and cultural factors. Digital natives who have integrated technology throughout their lives may respond differently than older adults with less technological integration. Gibson found generational differences in attitudes toward digital memorialization, with younger adults generally showing greater openness to technological approaches [14]. Cultural variations in death rituals and afterlife conceptions significantly influence receptivity to digital afterlife technologies. For instance, cultures with ancestor veneration traditions may find certain aspects of digital continuation more congruent with existing practices, while others may perceive technological mediation as disruptive to sacred transitions [37]. Individual differences in technological comfort, spiritual beliefs, and grief processing styles further complicate the psychological picture. As Rochlen observes, these technologies do not have uniform psychological effects but interact with individual psychological profiles to produce varied outcomes [9].

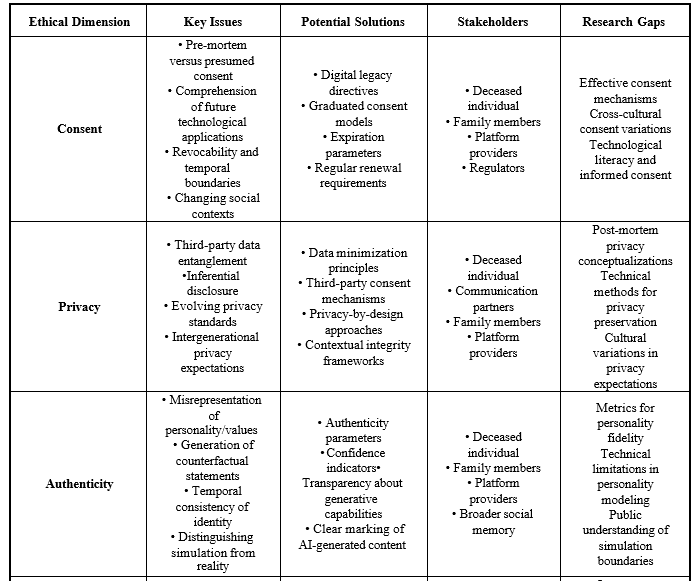

Ethical Implications of AI and the Afterlife

Consent and Autonomy

Posthumous AI simulation raises fundamental ethical questions about consent and autonomy. Arnold argues that meaningful consent for posthumous AI representation requires [38]:

• Informed understanding of how the technology works;

• Comprehensive awareness of potential psychological impacts on survivors;

• Explicit directives regarding permissible data sources and simulation parameters;

• Clear temporal boundaries for how long simulations should remain active;

• Mechanisms for revoking consent through advance directives.
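The consent requirements listed above could be recorded as a machine-readable directive that a service checks before running a simulation. The sketch below is one possible encoding and not a format proposed in the cited literature: all field and function names are hypothetical, and treating a missing end date as "not permitted" is an assumption made here to reflect the requirement for clear temporal boundaries.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class ConsentDirective:
    informed_of_technology: bool        # understands how the technology works
    informed_of_survivor_impacts: bool  # aware of psychological impacts on survivors
    permitted_sources: set = field(default_factory=set)  # explicitly allowed data sources
    active_until: Optional[date] = None  # temporal boundary for the simulation
    revoked: bool = False                # set via an advance directive


def simulation_permitted(directive, source, today):
    """Return True only if every consent condition holds for this data source and date."""
    if directive.revoked:
        return False
    if not (directive.informed_of_technology and directive.informed_of_survivor_impacts):
        return False
    if source not in directive.permitted_sources:
        return False
    # Assumption: without an explicit end date, the directive is treated as incomplete.
    if directive.active_until is None or today > directive.active_until:
        return False
    return True


# Hypothetical directive allowing only emails to be used, until the end of 2040.
directive = ConsentDirective(
    informed_of_technology=True,
    informed_of_survivor_impacts=True,
    permitted_sources={"email"},
    active_until=date(2040, 12, 31),
)
```

The check defaults to refusal: any missing, expired, or revoked element blocks the simulation, which corresponds to an opt-in reading of consent rather than presumed consent.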

The problem of "informed" consent becomes particularly challenging given the rapidly evolving nature of these technologies. Individuals cannot meaningfully consent to applications of their personal data that they cannot envision or understand [39]. Öhman and Floridi propose a framework of "posthumous dignity" that extends beyond simple consent to encompass broader considerations of how digital representations impact the deceased's legacy and social memory [40]. This framework suggests that ethical use of posthumous data must respect not just explicit directives but implicit values and identity considerations.

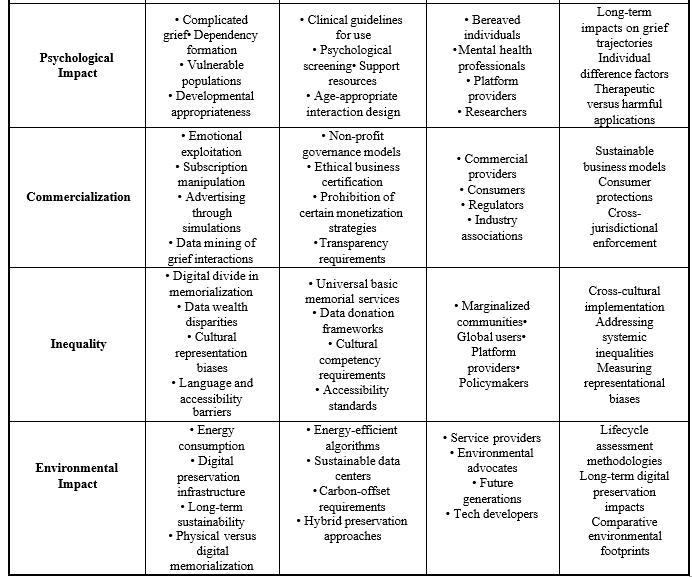

Figure 2: This Risk Assessment Matrix Classifies the Ten Main Threats Associated with Digital Afterlife Technologies According to their Probability and Severity, Facilitating a Proactive Approach to Risk Mitigation
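A probability-by-severity matrix of the kind shown in Figure 2 is commonly scored by multiplying the two ratings. The sketch below illustrates that convention only: the 1 to 5 scales, the level thresholds, the three example threats, and their ratings are all assumptions made for illustration and are not values taken from the figure.

```python
def risk_score(probability, severity):
    """Multiply a 1-5 probability rating by a 1-5 severity rating."""
    if not (1 <= probability <= 5 and 1 <= severity <= 5):
        raise ValueError("ratings must be between 1 and 5")
    return probability * severity


def risk_level(score):
    """Map a score to a coarse level (thresholds are illustrative assumptions)."""
    if score >= 15:
        return "high"
    if score >= 8:
        return "medium"
    return "low"


# Hypothetical threats with invented (probability, severity) ratings.
threats = {
    "prolonged grief dependency": (4, 4),
    "third-party data exposure": (3, 4),
    "service discontinuation": (2, 3),
}

# Rank threats from highest to lowest score to prioritize mitigation.
ranked = sorted(threats, key=lambda name: risk_score(*threats[name]), reverse=True)
```

Ranking by score gives a first-pass ordering for mitigation work, though a multiplicative score can hide rare but catastrophic threats, so the two ratings are worth reviewing separately as well.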

Privacy and Potential Exploits

Digital afterlife technologies introduce novel privacy concerns that extend beyond death. Traditional privacy frameworks inadequately address the complexity of posthumous data use, particularly when AI systems may generate novel content that the deceased never explicitly created [41]. Key privacy considerations include third-party data entanglement (conversations inherently involve multiple parties, creating consent challenges when interpersonal communications are used to train simulations); evolving social contexts (content shared in one era or on one platform may be inappropriate when republished in future contexts); inferential privacy (AI systems may infer and reproduce private thoughts or characteristics never intentionally disclosed by the deceased); and temporal boundaries (traditional privacy expectations often assume that data use occurs within a person's lifetime, not indefinitely). McCallig argues for specialized legal frameworks addressing posthumous data rights that balance memorial interests with privacy considerations [42]. These frameworks must recognize what Harbinja calls "post-mortem privacy": the right to control one's digital legacy beyond death [41].

Figure 3: A Systematic Overview of the Ethical Dimensions that Characterize Digital Afterlife Technologies, Identifying Key Issues, Possible Solutions, and Stakeholders Involved for Each Area of Ethical Consideration

Cultural and Religious Perspectives On AI Afterlife Technologies

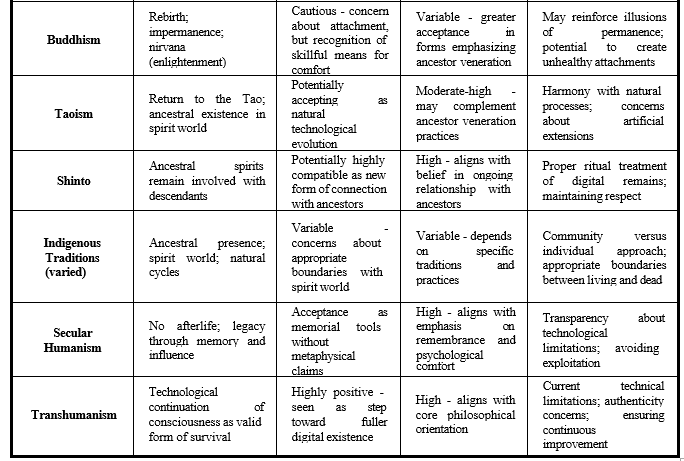

Comparative Religious Frameworks

Religious traditions offer diverse frameworks for evaluating AI afterlife technologies based on their theological understandings of death, consciousness, and afterlife. These perspectives substantially influence cultural receptivity to technological interventions in death and memorialization.

Within Christianity, theological perspectives vary widely. More conservative traditions often emphasize divine sovereignty over death and resurrection, potentially viewing technological simulation as inappropriate intervention in sacred transitions [43]. Liberal Protestant perspectives might focus on compassionate aspects of maintaining connections, while Catholic theology raises questions about the soul's distinct ontological status [44].

Islamic perspectives typically emphasize bodily resurrection and divine judgment, with traditional scholars expressing concerns about technologies that might blur the finality of death or interfere with natural grieving processes [45]. However, Peters notes that Islamic traditions of remembering and honoring the deceased could potentially accommodate certain memorial technologies if properly aligned with religious values [46].

Jewish approaches to death and mourning, with their structured practices like shiva and yahrzeit observances, offer potential integration points for digital memorialization while raising questions about how technology might affect traditional community-based mourning practices [47].

Hindu perspectives on reincarnation and karma create distinct frameworks for evaluating afterlife technologies. The concept of ongoing soul journeys potentially accommodates digital preservation as simply another form of memory, while raising concerns about attachments that might impede spiritual progress [48].

Buddhist emphases on impermanence and non-attachment suggest potential tensions with technologies designed to maintain ongoing connections with the deceased. However, Cann observes that certain Buddhist traditions of ancestor remembrance could potentially incorporate digital elements without contradicting core doctrines [47].

Indigenous traditions often emphasize ongoing relationships with ancestors and the spiritual dimensions of natural cycles. These perspectives might find resonance with certain aspects of digital continuation while raising concerns about appropriate boundaries between the living and the dead [47]. Many indigenous traditions emphasize community-based approaches to death and remembrance rather than individualistic preservation, suggesting potential misalignment with technologies focused on personal digital resurrection [1].

Table 1: A Systematic Overview of the Ethical Dimensions that Characterize Digital Afterlife Technologies, Identifying Key Issues, Possible Solutions, and Stakeholders Involved for Each Area of Ethical Consideration

Cultural Variations in Death Rituals and Digital Integration

Death rituals serve crucial psychological and social functions across cultures, marking transitions, supporting grief processes, and maintaining community cohesion. Digital afterlife technologies potentially support or disrupt these functions in culturally specific ways. Cultures with strong ancestor veneration practices, common across East Asia and parts of Africa, may find certain aspects of digital memorialization congruent with existing practices of maintaining connections with the deceased [49]. These traditions already conceptualize ongoing relationships between the living and dead, potentially providing cultural frameworks for integrating new technologies. Walter observes that contemporary Western death practices have increasingly emphasized personalization and continuing bonds, creating cultural conditions receptive to technological memorialization [1]. However, these developments exist in tension with medical and bureaucratic approaches to death that emphasize finality and separation. Cross-cultural research by Kasket and Woodthorpe suggests that receptivity to digital afterlife technologies correlates not simply with technological development but with specific cultural conceptions of death as permeable or impermeable, continuous or discontinuous with life [37].

Philosophical Dimensions of Digital Consciousness and Identity

The Ship of Theseus Problem and Digital Identity

The ancient Ship of Theseus paradox—questioning whether an object remains the same when all its components have been replaced—finds new relevance in digital afterlife technologies. When an AI system generates novel responses based on but not explicitly created by the deceased, philosophical questions arise about authenticity and identity continuation [50]. This philosophical problem manifests in several dimensions of digital afterlife technologies:

• Informational continuity: To what extent does personality consist of informational patterns that could theoretically be preserved?

• Temporal development: How should systems handle the fact that human personalities evolve over time, while digital simulations might remain static?

• Environmental interaction: If identity emerges partly through ongoing interaction with environments, can a simulation disconnected from such interaction maintain authentic identity?

Clowes suggests that extended and distributed theories of mind provide useful frameworks for conceptualizing digital identity, as they already recognize how cognitive processes extend beyond the biological brain into technological and social environments [51]. A fundamental philosophical distinction exists between simulating the external patterns of consciousness and recreating consciousness itself. Current technologies clearly accomplish only the former, creating what Schneider calls "zombie AI"—systems that mimic the outward signs of consciousness without possessing subjective experience [52].

This distinction raises ethical questions about transparency and representation. When mourners interact with digital simulations, clear understanding of the ontological status of these interactions may prove crucial for psychological wellbeing and ethical integrity [53]. Philosophical debates about the potential for artificial consciousness further complicate these considerations. While some theorists like Chalmers argue that advanced AI systems might eventually develop consciousness under certain conditions, others like Searle maintain that computational systems inherently cannot develop true consciousness regardless of complexity [51,54].

The concept of narrative identity, the idea that selfhood emerges through autobiographical storytelling, offers another philosophical framework for evaluating digital afterlife technologies. Ricoeur's theory of narrative identity emphasizes how cohesive self-understanding emerges through the construction of life stories that integrate diverse experiences [55]. Digital afterlife technologies potentially interrupt this narrative process by extending representation beyond the person's own narrative construction. When AI systems generate novel content "in character," they effectively continue authoring the deceased's narrative identity without their agency [26]. This raises questions about authenticity and representation that echo broader concerns about representing subjects who cannot respond. Creators of digital afterlife technologies must therefore consider how their systems continue, extend, or potentially distort the self-narrative of the deceased [1].

Regulation and Policy

Legal Frameworks, Gaps, Proposed Evolutions

Legal frameworks governing digital remains and posthumous personality rights remain underdeveloped in most jurisdictions. Harbinja identifies significant gaps between traditional legal approaches to inheritance and the novel challenges presented by digital assets and posthumous data use [41]. In the United States, legal approaches vary by state, with only a handful having enacted comprehensive digital assets legislation like the Revised Uniform Fiduciary Access to Digital Assets Act (RUFADAA). Even these frameworks primarily address access to accounts rather than the novel issues raised by AI simulation [57]. European approaches under the GDPR provide somewhat stronger protections for personal data but still inadequately address posthumous AI simulation. Article 27 of the GDPR explicitly excludes deceased persons from its protection, though some member states have extended certain data protections beyond death [42]. Intellectual property frameworks provide another potential avenue for regulation. In jurisdictions with strong personality rights, such as California's postmortem right of publicity, certain commercial uses of AI-simulated identities might face legal restrictions. However, these frameworks typically focus on commercial exploitation rather than personal or memorial uses [57]. Several scholars have proposed regulatory frameworks specifically addressing posthumous AI simulation. Öhman and Floridi advocate for a "posthumous dignity" framework that would for example require explicit opt-in consent for AI simulation rather than presumed consent but also establish temporal limitations on posthumous simulation with the creation of special protections for vulnerable populations [3]. On top of that transparency in disclosing the artificial nature of simulations with oversight mechanisms for commercial providers as we see later (AI ACT for example). 
Buitelaar proposes adapting and extending existing data protection frameworks to create "digital legacy directives" that would give individuals explicit control over posthumous data use, including AI simulation parameters [39]. Industry self-regulation offers another potential approach. The Digital Legacy Association has proposed ethical guidelines for memorial technologies, though these lack enforcement mechanisms and comprehensive coverage of advanced AI applications [58]. International approaches to digital afterlife regulation vary considerably, creating challenges for global services. Harbinja and Pearce document significant variations between common law and civil law jurisdictions in approaches to posthumous personality rights and digital assets [59]. Japan's relatively permissive approach to digital afterlife services has allowed earlier commercial development, providing potential case studies for regulatory impacts. By contrast, France's robust postmortem privacy protections under the Digital Republic Act potentially restrict certain simulation applications [57]. Harmonization efforts remain in early stages: the Council of Europe's guidelines on the protection of individuals with regard to automatic processing of personal data in the context of profiling contain provisions potentially applicable to posthumous simulation, though they lack binding force and specific application to afterlife technologies [60].

Table 2: Religious and Cultural Perspectives on AI Afterlife Technologies (A Stand-Alone Version is also available in Hi-Res).

Conclusion, Limitation, Further Studies

Integrated Framework for Understanding AI and Afterlife

The complex interplay between psychological, ethical, technological, and cultural dimensions of AI afterlife technologies necessitates an integrated analytical framework. We propose a model that examines these technologies along four key dimensions:

• Psychological impact: Effects on grief processing, attachment dynamics, and emotional wellbeing

• Ethical integrity: Consent mechanisms, privacy protections, and safeguards against exploitation

• Cultural congruence: Alignment with existing death rituals, religious beliefs, and memorial practices

• Technological transparency: Clear communication about capabilities, limitations, and the ontological status of simulations

Figure 4: Illustrates How Different Layers of Identity (From Core Personality to Social Interactions) are Preserved Through Various Data Sources, With a Visual Representation of Current Technological Capabilities for Each Layer

This framework emphasizes that these dimensions interact rather than operating independently. For instance, psychological impacts depend partly on ethical integrity, while cultural congruence influences both psychological and ethical dimensions. This analysis suggests several crucial directions for future research. First, longitudinal studies are needed to examine how interactions with digital afterlife technologies affect grief trajectories over time. Additionally, cross-cultural comparative research should be conducted to understand the receptivity to and integration of these technologies within diverse death practices. Technical research is also necessary to develop mechanisms for implementing ethical guidelines, including consent verification, temporal limitations, and transparency requirements. Moreover, legal scholarship should focus on developing comprehensive frameworks for posthumous data rights and digital legacy management. Finally, philosophical investigations should explore how these technologies impact our understanding of consciousness, identity, and the boundaries of personhood. These interdisciplinary approaches are essential because no single disciplinary perspective can adequately capture the multifaceted implications of these technologies.

Ethical Guidelines for Development and Use: the Framework

Based on our analysis, we propose preliminary ethical guidelines for the development and use of AI afterlife technologies:

• Informed consent: Systems should require explicit, informed opt-in from individuals before creating posthumous simulations;

• Transparency: Clear disclosure of the artificial nature of simulations and their limitations should be mandatory;

• Revocability: Advance directives should include mechanisms for revoking consent or setting temporal boundaries;

• Privacy protection: Systems should respect the privacy not only of the deceased but of third parties represented in training data;

• Psychological safeguards: Commercial services should implement screening and support for users who may be vulnerable to psychological harm;

• Cultural sensitivity: Development should acknowledge diverse cultural approaches to death and memorialization;

• Informational accuracy: Systems should avoid generating false or misleading content attributed to the deceased;

• Oversight mechanisms: Independent ethical review of advanced applications should be established.
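As a purely illustrative sketch of how the informed consent and revocability guidelines above might be checked in software, the following Python fragment models an advance directive and a permission check. The class `SimulationDirective`, its fields, and the function `simulation_permitted` are hypothetical names introduced here for illustration; they do not correspond to any existing system or legal standard.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class SimulationDirective:
    """Hypothetical advance directive recorded while the person is alive."""
    opted_in: bool                      # explicit, informed opt-in (never presumed)
    revoked: bool = False               # consent withdrawn at a later point
    expires_on: Optional[date] = None   # optional temporal boundary


def simulation_permitted(directive: Optional[SimulationDirective], today: date) -> bool:
    """Return True only if an explicit, unrevoked, unexpired opt-in exists."""
    if directive is None:
        # No directive on record: treated as absence of consent (an assumption).
        return False
    if not directive.opted_in or directive.revoked:
        return False
    if directive.expires_on is not None and today > directive.expires_on:
        return False
    return True
```

The default here is deliberately restrictive: a missing directive is treated as no consent, mirroring the opt-in rather than presumed-consent position discussed earlier. A real service would also need identity verification and legal review, which this sketch does not attempt.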

These guidelines offer a starting point for ethical development while recognizing that rapid technological advancement will necessitate ongoing revision and refinement. AI afterlife technologies represent not merely a technological innovation but a potentially transformative force in how humans understand and experience death. As these technologies evolve, they may reshape cultural practices, psychological expectations, and philosophical conceptions in ways that extend far beyond individual users. Walter suggests that throughout history, technological changes have repeatedly transformed death practices, from photography altering mourning rituals to social media creating new forms of public grief. AI technologies represent the next frontier in this ongoing evolution, potentially creating what Kasket calls "death's digital shadow": a persistent informational presence that continues to interact with the living. The societal implications extend beyond individual grief to potentially reshape cultural conceptions of mortality itself. As digital representations become increasingly sophisticated and integrated into daily life, the boundaries between life and death may blur in ways that challenge foundational social categories and processes. Ultimately, these technologies embody deep human impulses to maintain connections beyond death while raising profound questions about the nature and boundaries of human identity. As we navigate this emerging landscape, interdisciplinary dialogue among technologists, psychologists, ethicists, and cultural theorists remains essential for developing approaches that honor both human needs for connection and ethical principles of dignity and autonomy.

Legal and Regulatory Compliance: AI Afterlife Technologies in the EU

The development and deployment of AI-enabled afterlife technologies must comply with several key European regulations, including the AI Act (Regulation (EU) 2024/1689), the Data Governance Act (Regulation (EU) 2022/868), the Data Act (Regulation (EU) 2023/2854), and the Digital Services Act (Regulation (EU) 2022/2065). The GDPR applies as a baseline, by default and by design.

Compliance with the AI Act (Regulation (EU) 2024/1689)

Under the AI Act, AI-driven afterlife simulations could be classified as high-risk AI systems if they significantly affect personal identity, emotional well-being, or psychological states. To ensure compliance, developers must adhere to the following requirements:

• Transparency and disclosure: AI-generated posthumous identities must be clearly labeled as simulations, ensuring users do not mistake them for real human interactions;

• Psychological safeguards: AI-driven grief processing tools must not exacerbate complicated grief or reinforce denial;

• Human oversight and control: Users must have clear options to deactivate, modify, or opt out of interacting with AI afterlife representations;

• Data ethics and consent: Explicit consent must be obtained before using an individual’s digital footprint for AI training. In cases of posthumous identity recreation, legal representatives must provide consent.

Data Governance and User Rights Under the Data Act (Regulation (EU) 2023/2854)

AI-generated afterlife simulations rely on personal data, digital communications, and historical footprints. The Data Act ensures fair access and control over such data, requiring adherence to the following principles:

• Right to data portability: Users or their legal representatives must be able to access, transfer, or delete personal data used in AI simulations.

• Posthumous data rights: The governance of deceased individuals’ data must comply with EU-wide frameworks for data stewardship, ensuring protection against unauthorized AI replications.

• Data minimization and ethical use: Only the minimal necessary data should be processed to reduce privacy risks. AI-generated simulations must not extrapolate beyond available data in misleading ways.

Ethical AI Use and Content Transparency Under the Digital Services Act (Regulation (EU) 2022/2065)

The Digital Services Act mandates that AI-generated digital personas must be clearly identifiable to users, preventing misinformation and ethical risks. Platforms hosting such AI-driven interactions must implement:

• Content Labeling: Any AI-generated content related to deceased persons must include visible disclosures indicating its artificial nature.

• Reporting Mechanisms: Users must be able to report misuse, emotional distress, or ethical concerns related to AI afterlife technologies.
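The content labeling requirement above can be made concrete with a small sketch. The Python fragment below attaches a visible disclosure to any simulated message before it is shown to a user. The disclosure wording, the `LabeledMessage` type, and the `label_output` function are assumptions made for illustration only and are not prescribed by any regulation or taken from an existing platform.

```python
from dataclasses import dataclass

# Hypothetical disclosure text; actual wording would be set by the provider.
DISCLOSURE = "[AI-generated simulation. Not written by the person it represents.]"


@dataclass(frozen=True)
class LabeledMessage:
    text: str            # message as displayed, including the disclosure
    ai_generated: bool   # machine-readable flag for downstream systems


def label_output(generated_text: str) -> LabeledMessage:
    """Prefix simulated text with a visible disclosure and flag it as AI-generated."""
    if generated_text.startswith(DISCLOSURE):
        # Already labeled: avoid stacking the disclosure twice.
        return LabeledMessage(generated_text, True)
    return LabeledMessage(f"{DISCLOSURE} {generated_text}", True)
```

Pairing a human-readable prefix with a machine-readable flag is one possible design choice: the prefix serves the mourner reading the message, while the flag lets a hosting platform audit or filter simulated content.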

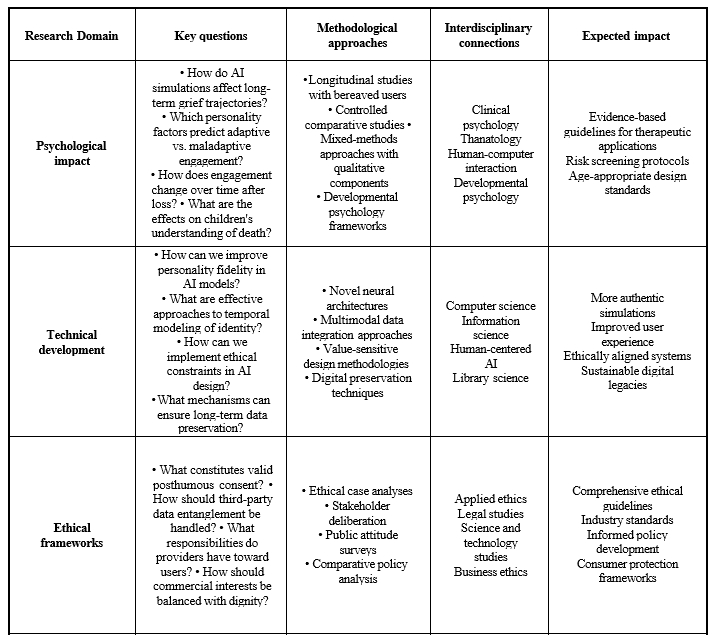

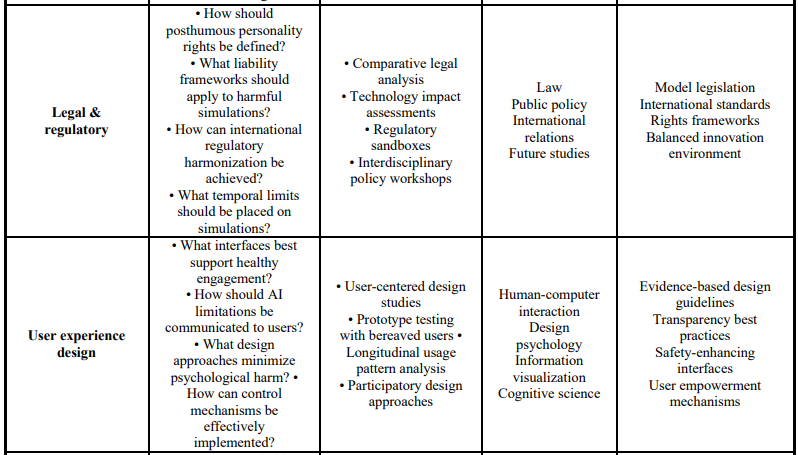

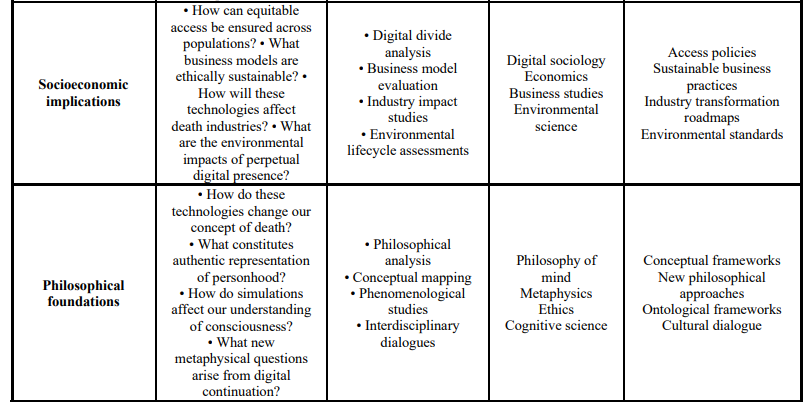

Future Research Questions for Digital Afterlife Technologies

Table 3 provides a comprehensive research agenda that identifies critical questions across eight domains essential for the ethical and effective advancement of these technologies. This structured framework maps out not only the key questions that future research should address, but also suggests appropriate methodological approaches, identifies necessary interdisciplinary connections, and anticipates potential impacts of findings in each area. By presenting this integrated research roadmap, we aim to facilitate collaborative efforts across disciplines—from psychology and computer science to ethics and cultural studies—that can collectively address the multifaceted challenges presented by digital afterlife technologies. The questions presented range from practical considerations of user experience and technical implementation to profound inquiries into the nature of consciousness, identity, and cultural adaptation, reflecting the breadth of scholarly engagement needed as these technologies become increasingly integrated into grief practices and memorial traditions worldwide [61-63].

Table 3: A Survey for Further Research

References

- Walter, T. (2020). Death in the modern world.

- Kasket, E. (2019). All the ghosts in the machine: Illusions of immortality in the digital age. Robinson.

- Öhman, C., & Floridi, L. (2018). An ethical framework for the digital afterlife industry. Nature Human Behaviour, 2(5), 318-320.

- Flannelly, K. J., Ellison, C. G., Galek, K., & Koenig, H. G. (2008). Beliefs about life-after-death, psychiatric symptomology and cognitive theories of psychopathology. Journal of Psychology and Theology, 36(2), 94-103.

- Carr, D., & Sharp, S. (2014). Do afterlife beliefs affect psychological adjustment to late-life spousal loss?. Journals of Gerontology Series B: Psychological Sciences and Social Sciences, 69(1), 103-112.

- Al-Issa, R., Krauss, S. E., Roslan, S., Abdullah, H., & Al-Issa, R. S. (2021). The relationship between afterlife beliefs and mental wellbeing among Jordanian Muslim youth. Journal of Muslim Mental Health, 15(1).

- Greenberg, J., & Arndt, J. (2012). Terror management theory. Handbook of theories of social psychology, 1, 398-415.

- Klass, D., Silverman, P. R., & Nickman, S. (2014). Continuing bonds: New understandings of grief. Taylor & Francis.

- Degni, F. (2025). The Afterlife in the Age of AI: A Psychological, Ethical, and Technological Analysis (March 16, 2025).

- Brubaker, J. R., Hayes, G. R., & Dourish, P. (2013). Beyond the grave: Facebook as a site for the expansion of death and mourning. The Information Society, 29(3), 152-163.

- Shear, M. K. (2015). Complicated grief. New England Journal of Medicine, 372(2), 153-160.

- Litz, B. T., Schorr, Y., Delaney, E., Au, T., Papa, A., Fox, A. B., ... & Prigerson, H. G. (2014). A randomized controlled trial of an internet-based therapist-assisted indicated preventive intervention for prolonged grief disorder. Behaviour research and therapy, 61, 23-34.

- Iglewicz, A., Shear, M. K., Reynolds III, C. F., Simon, N., Lebowitz, B., & Zisook, S. (2020). Complicated grief therapy for clinicians: An evidence-based protocol for mental health practice. Depression and anxiety, 37(1), 90-98.

- Gibson, M., Stoyles, B., & Cobb, J. (2021). Generational differences in receptivity to AI memorial technologies. Death Studies, 45(9), 708-718.

- Haque, A., & Hashem, T. (2022). Preserving the digital self: Technical approaches to posthumous personality simulation. IEEE Transactions on Affective Computing, 13(2), 784-796.

- Brown, T., Mann, B., Ryder, N., Subbiah, M., Kaplan, J. D., Dhariwal, P., ... & Amodei, D. (2020). Language models are few-shot learners. Advances in neural information processing systems, 33, 1877-1901.

- Nagarajan, M., & Smith, J. (2023). Voice cloning technologies: Ethical considerations for posthumous applications. Ethics and Information Technology, 25(2), 158-169.

- Öhman, C. J., & Watson, D. (2019). Are the dead taking over Facebook? A Big Data approach to the future of death online. Big Data & Society, 6(1), 2053951719842540.

- Maciel, C., & Pereira, V. (2013). Digital legacy and interaction. Heidelberg, Germany.

- Bostrom, N., & Yudkowsky, E. (2018). The ethics of artificial intelligence. In Artificial intelligence safety and security (pp. 57-69). Chapman and Hall/CRC.

- Tamés, D. (2022). The uncanny valley of digital identity: Posthumous simulation and the ethics of representation. Ethics and Information Technology, 24(1), 1-14.

- Kurzweil, R. (2000). The age of spiritual machines: When computers exceed human intelligence. Penguin.

- Hoskins, A. (2024). AI and memory. Memory, Mind & Media, 3, e18.

- Skjuve, M., Følstad, A., Fostervold, K. I., & Brandtzaeg, P. B. (2021). My chatbot companion - a study of human-chatbot relationships. International Journal of Human-Computer Studies, 149, 102601.

- Savin-Baden, M., & Burden, D. (2019). Digital immortality and virtual humans. Postdigital Science and Education, 1, 87-103.

- Gotved, S. (2021). Remembrance as digital legacy management: The affordances of memorial platforms. Digital Legacy and Interaction, 101-115.

- Sherlock, A., Eastwick, E., & Gibbs, M. (2023). "It feels like they're still here": A qualitative analysis of experiences with memorial chatbots. Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems, 1-14.

- Peters-Cowan, M., Lichtenthal, W. G., Roberts, K. E., & Prigerson, H. G. (2022). Digital legacy creation: Effects on grief in terminal patients. Palliative & Supportive Care, 20(1), 108-116.

- Lichtenthal, W. G., & Breitbart, W. (2021). Meaning-centered grief therapy: A clinical guide to finding meaning through loss. Guilford Publications.

- Neimeyer, R. A. (2019). Meaning reconstruction in bereavement: Development of a research program. Death studies, 43(2), 79-91.

- Getty, E., Cobb, J., Gabeler, M., Nelson, C., Weng, E., & Hancock, J. (2021). "I said your name in death": Bereavement and artificial intelligence. Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, 1-15.

- Brubaker, J. R., & Hayes, G. R. (2011, March). "We will never forget you [online]": An empirical investigation of post-mortem MySpace comments. In Proceedings of the ACM 2011 conference on Computer supported cooperative work (pp. 123-132).

- Kübler-Ross, E. (1973). On death and dying. Routledge.

- Stroebe, M., & Schut, H. (1999). The dual process model of coping with bereavement: Rationale and description. Death studies, 23(3), 197-224.

- Neimeyer, R. A. (2001). Meaning reconstruction & the experience of loss. American Psychological Association.

- Gibson, M. (2022). Death and digital media: Patterns, protocols and platforms. Routledge.

- Kasket, E., & Woodthorpe, K. (2021). Death, memorialization, and deviant spaces. Emerald Publishing.

- Arnold, M., Gibbs, M., Kohn, T., Meese, J., Nansen, B., & Hallam, E. (2017). Death and digital media. Routledge.

- Buitelaar, J. C. (2017). Post-mortem privacy and informational self-determination. Ethics and Information Technology, 19(2), 129-142.

- Öhman, C., & Floridi, L. (2017). The political economy of death in the age of information: A critical approach to the digital afterlife industry. Minds and machines, 27, 639-662.

- Harbinja, E. (2017). Post-mortem privacy 2.0: theory, law, and technology. International Review of Law, Computers & Technology, 31(1), 26-42.

- McCallig, D. (2021). Data protection beyond death. International Data Privacy Law, 11(2), 160-174.

- Singler, B. (2020). “Blessed by the algorithm”: Theistic conceptions of artificial intelligence in online discourse. AI & society, 35(4), 945-955.

- Harris, C. B. (2021). The digital afterlife: Christian theological perspectives on technological resurrection. Journal of Religious Ethics, 49(1), 22-51.

- Baig, F. Z. (2021). Islamic perspectives on artificial intelligence and digital afterlife technologies. Journal of Religion and Health, 60(4), 2362-2377.

- Peters, F. E. (2018). Resurrection and immortality in Islamic theology and funerary practice. In H. Friese, M. Nolden, M. Rebane, & M. Schreiter (Eds.), Fragmentations and continuities: Concepts for capturing the modern (pp. 203-218).

- Sherwin, B. L. (2022). Jewish perspectives on digital immortality. The Journal of Jewish Ethics, 8(1), 79-96.

- Long, J. (2018). Studying afterlife beliefs cross-culturally: Methodological considerations. Journal of Consciousness Studies, 25(11-12), 43-59.

- Boyle, M. (2019). Death rituals in a digital age: Ethnographic perspectives on memorialization practices. Death Studies, 43(10), 626-637.

- Chalmers, D. J. (2022). Reality+: Virtual worlds and the problems of philosophy. Penguin UK.

- Clowes, R. W. (2021). The digital self-extended: A socio-technical account of personal identity. In R. Clowes, K. Gärtner, & I. Hipólito (Eds.), The mind-technology problem (pp. 109-135).

- Schneider, S. (2019). Artificial you: AI and the future of your mind. Princeton University Press.

- Birhane, A., & Van Dijk, J. (2020, February). Robot rights? Let's talk about human welfare instead. In Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society (pp. 207-213).

- Searle, J. R. (1990). Is the brain's mind a computer program?. Scientific American, 262(1), 25-31.

- Ricoeur, P. (1990). Time and narrative (McLaughlin K., Pellauer D., Trans., Vols. 1-3).

- Brubaker, J. R., Dombrowski, L. S., Gilbert, A. M., Kusumakaulika, N., & Hayes, G. R. (2014, April). Stewarding a legacy: responsibilities and relationships in the management of post-mortem data. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (pp. 4157-4166).

- Harbinja, E. (2019). Posthumous data protection: Law and the deceased in the digital age. Oxford University Press.

- Digital Legacy Association. (2022). Digital legacy management: Ethical guidelines for service providers. Digital Legacy Association.

- Harbinja, E., & Pearce, H. (2019). Virtual immortality: Property rights in digital remains. Information & Communications Technology Law, 28(2), 178-207.

- Council of Europe. (2021). Guidelines on the protection of individuals with regard to the processing of personal data in a world of big data. Council of Europe.

- Chalmers, D. (2010). The singularity: A philosophical analysis. Journal of Consciousness Studies, 17(9-10), 7-65.

- Brubaker, J. R., & Callison-Burch, V. (2016, May). Legacy contact: Designing and implementing post-mortem stewardship at Facebook. In Proceedings of the 2016 CHI conference on human factors in computing systems (pp. 2908-2919).

- Cann, C. K. (2014). Virtual afterlives: Grieving the dead in the twenty-first century. University Press of Kentucky.