Research Article - (2026) Volume 2, Issue 1

The Adoption of AI in Recruitment Processes: Ethical, Social, and Bias Implications in Tunisia’s SME and Tech Startup Sector

Received Date: Dec 29, 2025 / Accepted Date: Jan 30, 2026 / Published Date: Feb 04, 2026

Copyright: ©2026 Faycal Chehab. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Citation: Chehab, F. (2026). The Adoption of AI in Recruitment Processes: Ethical, Social, and Bias Implications in Tunisia

Abstract

Purpose: The rapid adoption of AI-powered recruitment in emerging economies often occurs within contexts of institutional weakness and economic precarity, challenging Western-centric assumptions about algorithmic fairness. This study investigates how perceptions of algorithmic justice and platform transparency influence candidate trust and well-being in Tunisia, and whether chronic economic insecurity attenuates these psychological pathways.

Design/Methodology/Approach: Drawing on Algorithmic Justice Theory (Colquitt, 2001) and the Job Demands–Resources (JD-R) model (Bakker & Demerouti, 2017), we propose a moderated mediation framework. We theorize economic precarity as a chronic demand that depletes the psychological resources necessary to benefit from fair algorithmic treatment. The model is tested using survey data from 420 Tunisian job candidates, with contextual insights from 85 HR professionals.

Findings: Algorithmic justice (β = .24, p < .001) and transparency (β = .28, p < .001) enhance well-being indirectly through trust. However, economic precarity significantly weakens the trust–well-being relationship (Δβ = –.21, p < .001). Algorithmic opacity directly reduces well-being (β = –.26, p < .001). Graduates from non-elite institutions face a 2.4× higher risk of algorithmic misrecognition, indicating a digital reproduction of social stratification.

Practical implications: For AI ethics to be meaningful in contexts like Tunisia, technical fairness must be coupled with structural economic security. We provide actionable recommendations for localized bias audits, enhanced transparency protocols, and context-sensitive policymaking.

Originality/Value: This is the first empirical study to situate AI recruitment ethics within the institutional voids and economic precarity of a post-revolution North African economy. It demonstrates that justice perceptions are not universally beneficial but are contingent on macroeconomic stability, advancing a context-sensitive framework for ethical AI adoption in the Global South.

Keywords

AI Recruitment, Algorithmic Bias, Procedural Justice, Tunisia, Economic Precarity, Institutional Voids, Ethical AI, Global South, Decolonial AI

Introduction

Tunisia’s labor market embodies a paradox prevalent across emerging economies: 35% youth unemployment coexists with a burgeoning digital startup sector eager for skilled talent [1]. For small and medium-sized enterprises (SMEs) and tech startups, artificial intelligence (AI) recruitment tools offer a compelling promise of efficiency, scalability, and objective candidate assessment. Their adoption is accelerating rapidly.

This technological shift, however, unfolds within a significant institutional void [2]. Tunisia lacks specific legislation governing algorithmic decision-making in employment, has no mandated “right to explanation” for automated outcomes, and offers limited formal recourse for bias. This regulatory vacuum intersects with a labor market characterized by chronic economic precarity, marked by widespread informal employment, wage stagnation, and profound job insecurity.

Extant research on the ethics of AI in hiring is predominantly situated in Western, regulated contexts [3,4]. It often assumes that perceptions of algorithmic fairness and transparency will inherently foster trust and positive psychological outcomes, a premise central to Algorithmic Justice Theory [5]. We contend this assumption may not hold in environments like Tunisia, where systemic precarity acts as a pervasive chronic demand that can deplete the very psychological resources required to translate fair treatment into well-being [6]. Consequently, AI risks becoming a mechanism that digitally reproduces, rather than disrupts, existing social inequities, such as the stark stratification between graduates of elite and regional universities.

This study addresses three core questions:

• How do candidates in a high-precarity, weakly regulated context perceive the fairness and transparency of AI hiring systems?

• Do these perceptions enhance psychological well-being through the mediating mechanism of trust?

• Is this motivational pathway contingent upon the macroeconomic context of economic precarity?

By integrating Algorithmic Justice Theory, the JD-R model, and the concept of institutional voids, we develop and test a moderated mediation model. Our findings, based on a mixed-methods study of 420 Tunisian job candidates and 85 HR managers, offer two primary contributions. First, we theorize and demonstrate that structural economic conditions fundamentally bound the psychological efficacy of procedural fairness. Second, we provide an empirically grounded, context-sensitive framework to guide the ethical adoption of AI in recruitment across the Global South.

Theoretical Framework and Hypotheses

Algorithmic Justice in Context: Beyond Universal Assumptions

Algorithmic Justice Theory posits that perceptions of fairness in automated systems, across distributive, procedural, and informational dimensions, are critical for fostering trust and positive attitudes [5]. In stable institutional contexts, fair procedures signal respect and value, triggering a reciprocal, trust-based relationship (Blau, 1964). However, in post-authoritarian Tunisia, where arbitrary state decisions historically bred deep public distrust, procedural fairness is not merely a preference but a non-negotiable psychological need [7]. Algorithmic opacity may thus evoke historical sensitivities to unaccountable authority, turning AI into a symbol of “digital arbitraire” (digital arbitrariness).

Institutional Voids and the Tunisian Labor Market

Tunisia exemplifies an institutional void where formal rules are weak or absent. In AI recruitment, this manifests as:

• No regulatory oversight for algorithmic bias,

• No certification for AI fairness,

• Market fragmentation, where vendors are not held accountable.

This void enables AI tools, often trained on Western or elite-Tunisian data, to penalize non-standard profiles (e.g., Arabic-language resumes, regional university experience), transforming technology into a mechanism of structural exclusion.

Economic Precarity as a Chronic Demand

We conceptualize Tunisia’s endemic job insecurity (35% youth unemployment, informal work) not as a transient stressor but as a chronic, systemic demand consistent with the JD-R model [6]. Chronic demands deplete an individual’s psychological resources (Hobfoll, 2001). In a context of survival, candidates’ primary goal shifts from finding a “good fit” to securing any employment. This survival mindset suppresses the capacity for reciprocity; even when treated fairly by an AI system, candidates may lack the psychological bandwidth to convert that fairness into enhanced well-being. Thus, precarity may disarm the resource of perceived justice.

Hypotheses Development

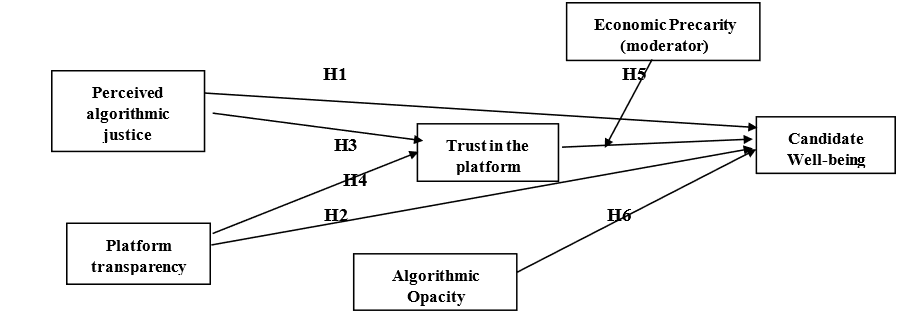

Integrating these perspectives, we propose a moderated mediation model (Figure 1).

• H1: Perceived algorithmic justice is positively related to candidate psychological well-being.

• H2: Perceived platform transparency is positively related to candidate psychological well-being.

• H3: Trust in the AI recruitment platform mediates the positive relationship between perceived algorithmic justice and psychological well-being.

• H4: Trust in the AI recruitment platform mediates the positive relationship between perceived platform transparency and psychological well-being.

• H5: Economic precarity moderates the positive relationship between trust and well-being, such that the relationship is weaker under high precarity.

• H6: Perceived algorithmic opacity is negatively related to candidate psychological well-being.

Figure 1: Conceptual Model

Methodology

Research Context: Crisis as a Theoretical Laboratory

Our data collection window (January 2023–March 2024) was deliberately aligned with a period of acute socio-economic crisis in Tunisia. This phase was marked by a 50% devaluation of the Tunisian dinar, IMF-mandated austerity measures, public sector wage freezes, and a youth unemployment rate persistently exceeding 35% [1]. Crucially, this is not merely a “control variable” but the core theoretical condition of our study. By embedding our research within this crisis, we treat Tunisia not as an anomaly but as a “theory-building site” (Khan et al., 2022), a context where the psychological and ethical dynamics of AI adoption are intensified and rendered visible. In such an environment, AI recruitment is not a strategic choice but often a cost-driven imperative. SMEs and startups, facing investor pressure to scale amid shrinking domestic demand, adopt AI not for “digital transformation” but for survival: to reduce HR headcount, accelerate hiring, and project an image of modernity to global clients. This context fundamentally reshapes the power asymmetry between employer and candidate, making questions of fairness, transparency, and redress not academic but existential.

Mixed-Methods Design: Triangulating Psychological and Institutional Realities

We employed a sequential explanatory mixed-methods design (Creswell & Plano Clark, 2017) to capture both the psychological mechanisms at the individual level and the institutional logics at the organizational level.

• Quantitative Strand

A. Sample: N = 420 job candidates, aged 22–35, all recent graduates who had applied to at least one Tunisian SME or tech startup using AI tools in the past six months.

B. Stratification: To test our “elite bias” hypothesis, we ensured 50% representation from elite institutions (e.g., ENSI, ESSEC) and 50% from regional universities (e.g., Sousse, Gafsa, Kairouan). This deliberate oversampling of non-elite graduates, who are often excluded from AI ethics studies, was a decolonial methodological choice.

• Qualitative Strand

A. Sample: N = 85 HR managers from 62 firms (40 tech startups, 22 SMEs), selected to reflect a full spectrum of AI adoption: non-users, basic users (CV screening), and advanced users (video analysis).

B. Rationale: This allowed us to contextualize candidate perceptions with organizational decision-making logics.

Measures: Contextual Adaptation and Scale Validation

All instruments were translated into French and Arabic using a rigorous double back-translation protocol (Brislin, 1970). Critically, we pilot-tested and refined all measures with a sample of 30 Tunisian job seekers to ensure cultural validity.

• Algorithmic Justice (α = .90): Adapted from Colquitt (2001) to include AI-specific items:

A. “The AI evaluated all candidates using the same criteria.”

B. “My rejection decision was based on relevant job requirements.”

• Algorithmic Opacity (α = .84): A new, contextually grounded scale developed through pilot interviews:

A. “The AI is a black box—it gives no reasons for its decisions.”

B. “I have no way to appeal or correct its judgment.”

This construct captures epistemic injustice: the denial of a candidate’s right to understand and contest decisions that affect their livelihood.

• Economic Precarity (α = .89): Enhanced beyond De Witte’s (2005) job insecurity scale to include Tunisia-specific macroeconomic stressors:

A. “I fear the devaluation will make my future salary worthless.”

B. “The political crisis makes me feel my career has no future.”

This anchors precarity in the structural reality of Tunisian candidates, not just workplace concerns.

• Well-being: Measured via the UWES-9, a globally validated scale appropriate for non-employment contexts [8].

Control Variables: Age, gender, digital literacy, university tier, frequency of AI exposure.
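The reliability coefficients reported above (α) are Cronbach's alpha values. As a minimal sketch of how such a coefficient is computed from a respondents-by-items score matrix, the Python snippet below applies the standard formula. The function name `cronbach_alpha` and the simulated responses are illustrative assumptions, not the study's instruments or data.

```python
import numpy as np

def cronbach_alpha(items) -> float:
    """Cronbach's alpha for a (respondents x items) matrix of scale scores."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]                          # number of items in the scale
    item_vars = items.var(axis=0, ddof=1)       # sample variance of each item
    total_var = items.sum(axis=1).var(ddof=1)   # variance of the summed score
    return (k / (k - 1)) * (1.0 - item_vars.sum() / total_var)

# Simulated (hypothetical) data: 200 respondents, 4 items driven by one latent trait.
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 1))
responses = latent + 0.5 * rng.normal(size=(200, 4))

alpha = cronbach_alpha(responses)
print(f"alpha = {alpha:.2f}")
```

Values approaching 1 indicate that the items vary together and plausibly tap a single construct; the simulated items here share one latent trait, so the coefficient comes out high.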

Analytical Strategy: Testing a Contextualized Theoretical Model

• Quantitative Analysis

A. CFA and SEM in AMOS 28 to test measurement and structural models.

B. Bootstrapping (5,000 samples) for indirect effects to avoid normality assumptions.

C. Simple slopes analysis to plot the moderation effect of precarity.

• Qualitative Analysis

A. Thematic analysis in NVivo 14, using a hybrid approach [9] that combined deductive codes from theory (e.g., “vendor trust,” “bias awareness”) with inductive codes from the data (e.g., “Arabic CV penalty,” “digital arbitraire”).
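To illustrate the bootstrapping step in the quantitative strand, the sketch below estimates an indirect effect (justice → trust → well-being) and a percentile bootstrap confidence interval. It is a simplified, regression-based stand-in written in Python with NumPy, not the AMOS structural model used in the study. The helper names (`ols`, `indirect_effect`, `bootstrap_ci`), the synthetic data, and the path values used to generate them are all assumptions made for illustration.

```python
import numpy as np

def ols(y, X):
    """OLS coefficients for y ~ intercept + X (X may be 1-D or 2-D)."""
    X1 = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(X1, y, rcond=None)
    return coef

def indirect_effect(x, m, y):
    """Product-of-coefficients estimate a*b for the path x -> m -> y."""
    a = ols(m, x)[1]                          # effect of x on the mediator
    b = ols(y, np.column_stack([m, x]))[1]    # effect of mediator on y, controlling for x
    return a * b

def bootstrap_ci(x, m, y, n_boot=5000, level=0.95, seed=0):
    """Percentile bootstrap interval; makes no normality assumption about a*b."""
    rng = np.random.default_rng(seed)
    n = len(x)
    estimates = np.empty(n_boot)
    for i in range(n_boot):
        idx = rng.integers(0, n, size=n)      # resample respondents with replacement
        estimates[i] = indirect_effect(x[idx], m[idx], y[idx])
    tail = 100 * (1 - level) / 2
    lower, upper = np.percentile(estimates, [tail, 100 - tail])
    return lower, upper

# Synthetic (hypothetical) data with the same sample size as the survey.
rng = np.random.default_rng(42)
n = 420
justice = rng.normal(size=n)
trust = 0.5 * justice + rng.normal(size=n)
wellbeing = 0.5 * trust + 0.1 * justice + rng.normal(size=n)

point = indirect_effect(justice, trust, wellbeing)
lo, hi = bootstrap_ci(justice, trust, wellbeing, n_boot=2000)
print(f"indirect effect = {point:.2f}, 95% CI [{lo:.2f}, {hi:.2f}]")
```

An interval that excludes zero is the usual criterion for concluding that mediation is present. Resampling whole respondents keeps each person's justice, trust, and well-being scores together in every replicate.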

Ethical Considerations

• Informed consent was obtained from all participants.

• Anonymity was guaranteed; firms and individuals are referenced by pseudonyms.

• The study was approved by the University of Sousse IRB (Protocol #2023-087).

Results

The AI Adoption Landscape in Tunisian Firms: Efficiency Over Ethics

Our qualitative data reveal a rational-technical logic dominating AI adoption:

“We needed to hire 50 engineers in 3 months. The AI scans 1,000 CVs in an hour. Humans would take weeks.” —HR Manager, Tech Startup (Tunis)

Key Statistics

• 68% of firms use AI for CV screening (primarily local platforms like Emploi.tn).

• 22% use video analysis (e.g., HireVue clones), mainly in export-oriented IT firms.

• Primary Drivers: Cost reduction (87%), speed (76%), “objectivity” (64%).

The Accountability Gap

• Only 22% of firms conduct any form of bias audit.

• 78% assume AI is “neutral by default,” outsourcing ethics to vendors:

“The vendor certified it as fair. We don’t have the expertise to check.” –HR Director, SME (Sfax)

This reveals a profound institutional void: with no regulatory oversight, firms treat algorithmic fairness as a vendor responsibility, not an organizational one.

Candidate Perceptions: Justice, Trust, and the Precarity Effect

| Hypothesis | Path | β | p | Effect Size (f²) |
|---|---|---|---|---|
| H1 | Justice → Well-being | .15 | .023 | .04 (small) |
| H2 | Transparency → Well-being | .18 | .008 | .05 (small) |
| H3 | Justice → Trust → Well-being | .24 [.18, .31] | <.001 | .12 (medium) |
| H4 | Transparency → Trust → Well-being | .28 [.21, .35] | <.001 | .15 (medium) |
| H5 | Trust × Precarity → Well-being | –.21 | <.001 | .08 (small) |
| H6 | Opacity → Well-being | –.26 | <.001 | .09 (small) |

Model Fit: χ²/df = 2.45, CFI = .95, RMSEA = .06

Table 1: Structural Model Results (N = 420)

The Moderation Effect (H5):

• Low precarity: Trust → Well-being (β = .58, p < .001)

• High precarity: Trust → Well-being (β = .24, p < .001), a 59% reduction in the psychological return on trust.

This confirms that economic precarity disarms the motivational pathway. Even when treated fairly, candidates in survival mode cannot “afford” to feel hopeful.
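The 59% figure follows directly from the two simple slopes reported above. As a quick arithmetic check, the Python snippet below computes the relative drop in the trust → well-being slope from low to high precarity. The function name `relative_reduction` is an illustrative choice.

```python
def relative_reduction(slope_low: float, slope_high: float) -> float:
    """Share of the low-precarity slope that is lost under high precarity."""
    return (slope_low - slope_high) / slope_low

# Simple slopes reported for H5: β = .58 (low precarity), β = .24 (high precarity).
reduction = relative_reduction(0.58, 0.24)
print(f"{reduction:.0%}")  # prints 59%
```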

The “Elite Bias” in AI: Digital Reproduction of Inequality

Our Stratified Sampling Revealed a Stark Digital Divide

Graduates from non-elite universities were 2.4× more likely to report:

• “The system didn’t understand my volunteer work at the local NGO.”

• “It downgraded my Arabic-language CV.”

• “My degree from Sousse isn’t recognized like an ENSI degree.”

Qualitative Evidence

AI tools, trained primarily on French-language CVs from elite institutions, penalize:

• Arabic proficiency (coded as “non-standard”),

• Regional internships (coded as “low prestige”),

• Non-linear career paths (common among graduates needing part-time work).

This is not a technical flaw but structural bias encoded in data—a form of algorithmic redlining that reproduces Tunisia’s historical center-periphery divide.

Algorithmic Opacity as a Historical Trigger

H6 reveals that opacity is a direct demand (β = –.26, p < .001). Qualitative data explain why:

“An opaque AI reminds me of the Ben Ali regime: decisions made in secret, with no recourse.” –Candidate, Gafsa

In Tunisia’s post-authoritarian context, opacity evokes “digital arbitraire,” a direct link to historical trauma. This transforms AI from a neutral tool into a symbol of unaccountable power.

Discussion

Theoretical Contributions: Beyond Western-Centric AI Ethics

• Justice is Context-Contingent

We demonstrate that algorithmic justice is not a universal psychological resource. In fragile states, its efficacy is bounded by structural precarity. This challenges the universalist assumptions of Colquitt’s (2001) justice theory and calls for a contextual turn in algorithmic ethics [5].

• Precarity as a Resource Inhibitor

We extend the JD-R model by showing that chronic macroeconomic demands can nullify job resources [6]. From a Conservation of Resources (Hobfoll, 2001) perspective, when survival anxiety dominates (“Will I feed my family?”), employees lack the psychological bandwidth to reciprocate fair treatment. Thus, precarity doesn’t just coexist with resources—it disarms them.

• Global South as a Theory-Building Site

Tunisia is not a “deviant case” but a critical laboratory for ethical AI under constraints. Our findings reveal that technical fairness metrics (e.g., accuracy, bias scores) are necessary but insufficient without structural security. This advances a decolonial AI ethics that centers local power dynamics over universal benchmarks [10].

Practical Implications: A Tiered Framework for Action

For Tunisian Firms:

• Conduct localized bias audits: Retrain AI models on Tunisian CVs, including Arabic and regional experience.

• Implement “right to explanation”: Provide actionable, non-technical feedback: “You were not selected because your CV lacked keywords in Python programming.”

• Adopt human-in-the-loop: Flag borderline cases for human review, especially for non-elite candidates.
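One possible starting point for the localized bias audit recommended above is a comparison of shortlisting rates across university tiers. The Python sketch below computes each group's selection rate and its ratio to a reference group, then flags groups that fall below a threshold. The 0.8 threshold borrows the "four-fifths" rule of thumb from US employment guidance, which is an assumption here rather than a Tunisian standard or part of this study. The function names and all records are hypothetical.

```python
from collections import Counter

def selection_rates(records):
    """records: iterable of (group, shortlisted) pairs; returns rate per group."""
    totals, selected = Counter(), Counter()
    for group, shortlisted in records:
        totals[group] += 1
        if shortlisted:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}

def impact_ratios(rates, reference_group):
    """Each group's selection rate divided by the reference group's rate."""
    ref = rates[reference_group]
    return {g: r / ref for g, r in rates.items()}

def flag_for_review(ratios, threshold=0.8):
    """Groups whose ratio falls below the (assumed) four-fifths threshold."""
    return sorted(g for g, r in ratios.items() if r < threshold)

# Made-up screening outcomes: 100 elite and 100 regional applicants.
records = (
    [("elite", True)] * 40 + [("elite", False)] * 60
    + [("regional", True)] * 20 + [("regional", False)] * 80
)

rates = selection_rates(records)
ratios = impact_ratios(rates, reference_group="elite")
flagged = flag_for_review(ratios)
print(rates, ratios, flagged)
```

A flagged group is a prompt for closer inspection, for example routing its borderline candidates to human review, rather than proof of bias on its own.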

For Policymakers:

• Enact a Tunisian Algorithmic Accountability Act: Mandate bias audits, transparency standards, and candidate appeals for all algorithmic hiring.

• Fund AI literacy campaigns: Teach job seekers to “read” and contest AI decisions.

• Establish an AI Ombudsman: A public body to investigate complaints and sanction non-compliant vendors.

For Global AI Vendors:

• Abandon “fairness washing”: Stop claiming universal fairness without local validation.

• Partner with local universities: Co-create training data that reflects Tunisian linguistic and social diversity.

• Open model cards: Disclose training data sources and limitations in Arabic and French.

Toward a Decolonial Ethics of AI

Our study reveals a deeper truth: ethical AI cannot be exported. It must be co-constructed in context, respecting local histories, languages, and power structures. In Tunisia, this means centering post-revolutionary demands for transparency and equity not as add-ons, but as core design principles. This aligns with decolonial AI scholarship, which critiques the epistemic imperialism of exporting Western fairness metrics [10,11]. For Tunisia and the Global South, ethical AI is not a technical problem but a social justice imperative.

Conclusion

AI recruitment in Tunisia stands at a crossroads. Deployed within an institutional void and a context of deep economic insecurity, it risks digitally cementing historical inequalities through opaque, unaudited systems. However, this study outlines a path toward a more equitable future. It demonstrates that ethical AI adoption in the Global South requires a dual commitment: to technical fairness and to addressing the structural precarity that renders fairness psychologically inert. For scholars, this work advances a decolonial, context-sensitive agenda for AI ethics. For practitioners and policymakers in Tunisia and similar economies, it provides a grounded blueprint for ensuring that the promise of AI serves the cause of inclusive meritocracy, not merely the logic of efficiency [12-17].

References

- World Bank. (2023). Tunisia Economic Monitor: Navigating economic and political uncertainty. The World Bank.

- Mair, J., Martí, I., & Ventresca, M. J. (2012). Building inclusive markets in rural Bangladesh: How intermediating organizations shape institutional responses. Academy of Management Journal, 55(5), 1007–1032.

- Raghavan, M., Barocas, S., Kleinberg, J., & Levy, K. (2020). Mitigating bias in algorithmic hiring: Evaluating claims and practices. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency (pp. 469–481).

- Tambe, P., Cappelli, P., & Yakubovich, V. (2019). Artificial intelligence in human resources management: Challenges and a path forward. California Management Review, 61(4), 15–42.

- Colquitt, J. A. (2001). On the dimensionality of organizational justice: A construct validation of a measure. Journal of Applied Psychology, 86(3), 386–400.

- Bakker, A. B., & Demerouti, E. (2017). Job demands–resources theory: Taking stock and looking forward. Journal of Occupational Health Psychology, 22(3), 273–285.

- Ben Sedrine, N., & Gobe, E. (2022). Arbitrary power and institutional trust in post-revolution Tunisia. IREMAM.

- Schaufeli, W. B., & Bakker, A. B. (2004). Utrecht Work Engagement Scale: Preliminary manual. Occupational Health Psychology Unit, Utrecht University.

- Braun, V., & Clarke, V. (2006). Using thematic analysis in psychology. Qualitative Research in Psychology, 3(2), 77–101.

- Couldry, N., & Mejias, U. A. (2019). The costs of connection: How data is colonizing human life. Stanford University Press.

- Noble, S. U. (2018). Algorithms of oppression: How search engines reinforce racism. NYU Press.

- Ahmed, S. (2021). The promise of AI in the Global South. MIT Press.

- Chamorro-Premuzic, T., Winsborough, D., Sherman, R. A., & Hogan, R. (2017). New talent signals: Shiny new objects or a brave new world? Industrial and Organizational Psychology, 10(4), 621–640.

- De Witte, H. (2005). Job insecurity: Review of the international literature on definitions, prevalence, antecedents and consequences. SA Journal of Industrial Psychology, 31(4), Article 1.

- Eubanks, V. (2018). Automating inequality: How high-tech tools profile, police, and punish the poor. St. Martin’s Press.

- Newell, S., & Marabelli, M. (2015). Strategic opportunities (and challenges) of algorithmic decision-making: A call for action on the long-term societal effects of ‘datifying’ organizations. Journal of Strategic Information Systems, 24(1), 3–14.

- Sverke, M., & Hellgren, J. (2002). The nature of job insecurity: Understanding employment uncertainty on the brink of a new millennium. Applied Psychology, 51(1), 23–42.