Research Article - (2024) Volume 1, Issue 1

Reviewing Healthcare Biases and Recommendations

Received Date: Jul 15, 2024 / Accepted Date: Aug 26, 2024 / Published Date: Sep 02, 2024

Copyright: ©2024 Julian Ungar-Sargon. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Citation: Ungar-Sargon, J. (2024). Reviewing Healthcare Biases and Recommendations. Arch of case Rep: Open, 1(1), 01-16.

Abstract

Influence on Treatment Decisions: Unconscious biases can shape physician behavior and decision-making, often without their awareness. For example, studies have shown that physicians may be more likely to recommend certain treatments to male patients over female patients, or to provide less pain medication to Black or Hispanic patients compared to their White counterparts, even when clinical presentations are similar.

Influence on Treatment Decisions

Unconscious biases can shape physician behavior and decision-making, often without their awareness. For example, studies have shown that physicians may be more likely to recommend certain treatments to male patients over female patients, or to provide less pain medication to Black or Hispanic patients compared to their White counterparts, even when clinical presentations are similar.

Patient-Physician Interactions

Bias can also affect the quality of interactions between physicians and patients. Physicians with high levels of implicit bias may spend less time with patients from minority groups and provide less supportive care. This can lead to patients perceiving their care as less patient-centered, which can affect their confidence in treatment plans and adherence to medical advice.

Health Disparities

Implicit biases contribute to broader health disparities. For instance, Black patients with acute coronary syndrome are less likely to receive appropriate therapies compared to White patients. Similarly, women are less likely to receive knee arthroplasty when clinically appropriate, due to implicit gender biases among physicians.

Recognition and Mitigation

Recognizing and addressing unconscious bias is crucial for improving healthcare equity. Strategies include increasing diversity among healthcare professionals, implementing training programs to raise awareness of implicit biases, and developing interventions to improve patient-physician interactions.

Overall, unconscious bias in healthcare can perpetuate inequalities and affect the quality of care provided to patients from diverse backgrounds. Addressing these biases is essential for reducing health disparities and improving outcomes for all patients.

Decision-making is part and parcel of human life. People make both minor and significant choices daily that directly impact their lives. These decisions also have a secondary impact on those close to them and on society generally, which underscores the importance of practical decision-making skills.

The area has attracted immense attention from scholars with the aim of understanding and facilitating the improvement of the process. One area that has attracted scholarly interest is the influence of personal bias that affects thought processing in decision-making.

Figure 2

Definition

Bias is loosely defined as a systematic error in decision-making. In most cases, people become biased as they try to make sense of the available information. It is argued that biases help people make decisions quickly by trusting their gut. Moreover, some people are oblivious to their own bias. This is referred to as unconscious bias, and it has persisted despite a fast-changing environment. In the current complex world, human beings are exposed to more information than they can process at once. Therefore, they are naturally inclined to take mental shortcuts when making decisions, which amplifies the role of unconscious bias in the process. Although such shortcuts may sound ideal, they get in the way of deliberate reasoning and can result in misguided decision-making.

Health care providers’ attitudes toward marginalized groups can be key factors that contribute to health care access and outcome disparities because of their influence on patient encounters as well as clinical decision-making. Despite a growing body of knowledge linking disparate health outcomes to providers’ clinical decision-making, less research has focused on providers’ attitudes about disability. The aim of this study was to examine providers’ explicit and implicit disability attitudes, interactions between their attitudes, and correlates of explicit and implicit bias.

History of Professional Bias

Unconscious bias is also commonly referred to as implicit bias, as noted by Lopez (2018). The term was first coined in 1995 by Mahzarin Banaji and Anthony Greenwald in their article on implicit social cognition. The two psychologists argued that social behavior was significantly affected by unconscious associations and judgments. They defined implicit bias as the unconscious attitudes and stereotypes that affect our understanding, actions, and decisions without our awareness.

Typically, the implicit attitude is directed towards a specific social group. According to the pioneers, it explains why people often ascribe particular attributes to a given group. They also referred to this concept as stereotyping. However, they emphasized that this kind of process is not intentional or controllable (Sander et al., 2020).

Therefore, there is a clear distinction between unconscious bias and explicit prejudices. Although most people may assume that they are not susceptible to biases and stereotypes, they cannot avoid engaging in them. It simply means that the brain is working in a manner that creates associations and generalizations.

The pioneers of the implicit bias theory also identify reasons why human beings are susceptible to these tendencies. First, they noted that the human brain naturally seeks out patterns and associations in information processing (Weber and Wiersema, 2017). This argument asserts that the human ability to store, process, and apply information significantly depends on forming associations.

Secondly, the brain strives to take shortcuts to simplify the world. Usually, the brain is fed more information than it can process. Through mental shortcuts, it becomes easier and faster for the brain to process all the data. Lastly, the two scholars argued that human experience and social conditioning facilitate implicit bias. In this case, factors like cultural conditioning, media portrayals, and family upbringing shape our unconscious attitudes.

Greenwald and Banaji called for more research to facilitate a better understanding of the issue. Since the mid-90s, different scholars have extensively researched implicit biases. One study concluded that all human beings possess implicit biases that affect how we reason, make decisions, and treat other people (Payne et al., 2017). The authors also noted that avoiding this tendency is often challenging since many people do not know that they are engaging in it. The following theories have been used to explain different aspects of unconscious bias.

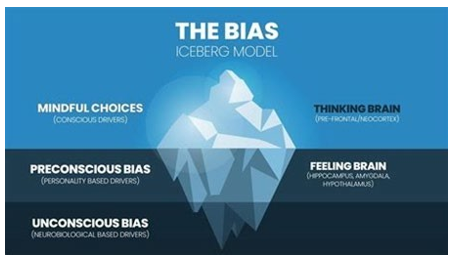

Figure 3

Theories on Unconscious Patterns

The theories and approaches referred to in this text are the most prominent in the relevant literature. Among these theories, Kahneman’s “System 1 and System 2” approach focuses on the operational processes of the brain and its effects on decision mechanisms. The “Dual Attitudes” model is quite similar to Kahneman’s model. However, this model differs from Kahneman’s model in that it gives more weight to cultural and social factors in the formation of unconscious biases. “Social Identity Theory” focuses on social group dynamics to a great extent.

This theory focuses on in-group and out-group social and cultural belonging and their cognitive effects in forming prejudices and biases. In our opinion, considering that the theoretical developments and discussions in the relevant field are relatively new and still ongoing, discussing the similarities and differences between these theories requires specific expertise, effort, and debate. Such a discussion is not the primary purpose of this study. Rather, this study aims to draw attention to the importance of unconscious and/or implicit bias, which has not been adequately addressed scientifically in our country, to ensure that this concept is discussed, and to encourage the production of applied interdisciplinary studies. However, one can consult the following studies for the differences and discussions between these theories (Brownstein 2019, 2020, Johnson 2020, Wilson et al. 2020).

In the review by Fitzgerald and Hurst, forty-two articles were identified as eligible. Seventeen used an implicit measure (the Implicit Association Test in fifteen and subliminal priming in two) to test the biases of healthcare professionals.

Twenty-five articles employed a between-subjects design, using vignettes to examine the influence of patient characteristics on healthcare professionals’ attitudes, diagnoses, and treatment decisions. This second method was included, although it does not isolate implicit attitudes, because it is recognised by psychologists who specialize in implicit cognition as a way of detecting the possible presence of implicit bias.

Twenty-seven studies examined racial/ethnic biases; ten other biases were investigated, including gender, age and weight. Thirty-five articles found evidence of implicit bias in healthcare professionals; all the studies that investigated correlations found a significant positive relationship between level of implicit bias and lower quality of care.

The evidence indicates that healthcare professionals exhibit the same levels of implicit bias as the wider population. The interactions between multiple patient characteristics and between healthcare professional and patient characteristics reveal the complexity of the phenomenon of implicit bias and its influence on clinician-patient interaction.

Their review also indicated that there may sometimes be a gap between the norm of impartiality and the extent to which it is embraced by healthcare professionals for some of the tested characteristics.

Bias Affects Clinical Judgement

Three studies found a significant correlation between high levels of physicians’ implicit bias against blacks on IAT scores and interaction that was negatively rated by black patients [23, 24, 44] and, in one study, also negatively rated by external observers [23]. Four studies examining the correlation between IAT scores and responses to clinical vignettes found a significant correlation between high levels of pro-white implicit bias and treatment responses that favoured patients specified as white [42, 45, 46, 47]. In one study, implicit prejudice of nurses towards injecting drug users significantly mediated the relationship between job stress and their intention to change jobs [48].

Twenty of the 25 vignette-based (assumption method) studies found that some kind of bias was evident in the diagnosis, the treatment recommendations, the number of questions asked of the patient, the number of tests ordered, or other responses indicating bias against the characteristic of the patient under examination.
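The counts reported for the Fitzgerald and Hurst review can be turned into proportions for easier comparison. The following is a minimal sketch, not part of the original review: the labels, the `share` helper, and the rounding to one decimal place are illustrative assumptions, and only the raw counts are taken from the text.

```python
# Counts as reported in the text for the Fitzgerald and Hurst review.
# Labels and helper names are hypothetical, chosen only for illustration.
TOTAL_ARTICLES = 42

counts = {
    "implicit measure (IAT or priming)": (17, TOTAL_ARTICLES),
    "vignette (between-subjects) design": (25, TOTAL_ARTICLES),
    "found evidence of implicit bias": (35, TOTAL_ARTICLES),
    "vignette studies showing some bias": (20, 25),
}


def share(numerator, denominator):
    """Return the proportion as a percentage rounded to one decimal place."""
    return round(100 * numerator / denominator, 1)


shares = {label: share(n, d) for label, (n, d) in counts.items()}

# The two study designs together account for every eligible article.
designs_cover_all = (17 + 25) == TOTAL_ARTICLES

for label, pct in shares.items():
    print(f"{label}: {pct}%")
```

Under these assumptions, roughly five in six eligible articles reported evidence of implicit bias, and four in five vignette studies showed some form of bias. These are simple descriptive shares of the published counts, not pooled effect estimates.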

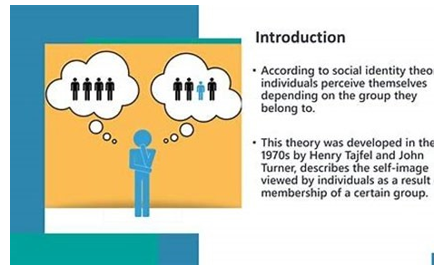

Figure 4

Determinants

Socio-demographic characteristics of physicians and nurses (e.g. gender, race, type of healthcare setting, years of experience, country where medical training was received) are correlated with level of bias. In one study, male staff were significantly less sympathetic and more frustrated than female staff with self-harming patients presenting in A&E [26]. Black patients in the US, but not the UK, were significantly more likely to be questioned about smoking than white patients [28]. In another study, international medical graduates rated the African-American male patient in the vignette as being of significantly lower SES than did US graduates [38]. One study found that pediatricians held less implicit race bias compared with other MDs [47].

The theory of aversive racism, first posed in the 1970s, encompasses some of the most widely studied ideas in social psychology. According to theory developers Samuel L. Gaertner, PhD, of the University of Delaware, and John F. Dovidio, PhD, of Yale University, people may hold negative nonconscious or automatic feelings and beliefs about others that can differ from their conscious attitudes, a phenomenon known as implicit bias. When there’s a conflict between a person’s explicit and implicit attitudes (for example, when people say they’re not prejudiced but give subtle signals that they are), those on the receiving end may be left anxious and confused.

Lab studies have long tested these ideas in relation to employment decisions, legal decisions and more.

In 2003, the concepts received an empirical boost from “Unequal Treatment,” a report from an Institute of Medicine (IoM) panel made up of behavioral scientists, physicians, public health experts and other health professionals. The report concluded that even when access-to-care barriers such as insurance and family income were controlled for, racial and ethnic minorities received worse health care than non-minorities, and that both explicit and implicit bias played potential roles. “The report really opened a lot of doors to further research on bias in care,” says Dovidio, who served on the IoM panel.

Psychologists and others are now building on the IoM findings by exploring how specific factors, including physicians’ use of patronizing language and patients’ past experiences with discrimination, affect patients’ perception of providers and care. Research is also starting to look at how implicit bias affects the dynamics of physician-patient relationships and subsequent care for patients with particular diseases, such as cancer and diabetes.

Tackling this topic can be difficult because of the real-world challenges of getting medical professionals to engage in these studies, researchers say. Another problem is that the main measure used to assess implicit bias, the Implicit Association Test (IAT), has come under fire in recent years for reasons including poor test-retest reliability and the argument that higher IAT scores do not necessarily predict biased behavior.

While this disagreement remains to be resolved, researchers are starting to use other measures and techniques to assess implicit bias, as well as new methodologies to track patient attitudes and outcomes. And while the predictive power of the IAT may be relatively small, in the aggregate, even small effects can have large consequences for minority patients [2].

Implicit bias is called implicit for a reason: it’s not easy to capture or to fix, says Michelle van Ryn, PhD, an endowed professor at Oregon Health & Science University (OHSU). But it is worth a deeper dive because of its implications for patient treatment on both a personal and a health-care level, she says. “Implicit bias creates inequalities through many difficult-to-measure pathways, and as a consequence, people tend to underestimate its impact,” says van Ryn. “This kind of research is essential in making real progress toward health-care equality.”

How Bias Plays Out

One of the first psychologists to apply theories of aversive racism and implicit bias in a real-world medical setting is social psychologist Louis A. Penner, PhD, senior scientist at Wayne State University’s Karmanos Cancer Institute. Along with Dovidio, Gaertner and others, he asked patients and physicians before a medical appointment about their race-related attitudes, and measured physicians’ implicit bias. The researchers also video-recorded patients and physicians during the appointment and asked them to complete questionnaires afterward.

The team found that black patients felt most negatively toward physicians who were low in explicit bias but high in implicit bias, demonstrating the validity of the implicit-bias theory in real-world medical interactions [2]. Researchers are also examining ways that providers may inadvertently demonstrate such bias, including through language. In a study, Nao Hagiwara, PhD, at Virginia Commonwealth University, and colleagues found that physicians with higher implicit-bias scores commandeered a greater portion of the patient-physician talk time during appointments than did physicians with lower scores [2].

Those findings are consistent with research by Lisa A. Cooper, MD, of Johns Hopkins University School of Medicine and colleagues, who found that physicians high in implicit bias were more likely to dominate conversations with black patients than were those lower in implicit bias, and that black patients trusted them less, had less confidence in them, and rated their quality of care as poorer [2].

The individual words that physicians use can also signal implicit bias, Hagiwara has found. She looked at physicians’ tendency to use first-person plural pronouns such as “we,” “ours” or “us” when interacting with black patients. According to social psychology theories related to power dynamics and social dominance, people in power use such verbiage to maintain control over others of lesser power. In line with those theories, she found that physicians who scored higher in implicit bias spoke more of these words than colleagues lower in implicit bias, using language such as, “We’re going to take our medicine, right?” [2].

Specific Diseases and Populations

Another line of research is investigating physician and patient attitudes among patients with specific diseases. This work is shedding more light on the role that patients may play in poor communication and relationship outcomes, and eventually aims to show whether poor communication affects health outcomes.

In a study of black cancer patients and their physicians, Penner, Dovidio and colleagues found that, overall, providers high in implicit bias were less supportive of and spent less time with their patients than providers low in implicit bias. And black patients picked up on those attitudes: They viewed high-implicit-bias physicians as less patient-centered than physicians low in this bias. The patients also had more difficulty remembering what their physicians told them, had less confidence in their treatment plans, and thought it would be more difficult to follow recommended treatments [2].

In another study, Penner and colleagues looked more specifically at how past discrimination may influence black cancer patients’ perception of care and their reactions to it. Patients who reported high rates of past discrimination and general suspicion of their health care talked more during sessions, showed fewer positive emotions and rated their physicians more negatively than those who reported less past discrimination and lower suspicion [2]. She and colleagues will assess the role of physician communication behaviors as they relate to patients’ trust in and satisfaction with their providers, and then see how those interactions relate to health outcomes.

Medical Students

While most implicit-bias studies in health-care treatment have been conducted with black patients and nonblack providers, other researchers are investigating implicit bias in relation to other ethnic groups, people with obesity, sexual and gender minorities, people with mental health and substance use disorders, older adults and people with various health conditions.

Medical school is one arena where this work is taking place. OHSU’s van Ryn, who is founder and head of a translational research company called Diversity Science in Portland, Oregon, is principal investigator in a long-term study of medical students and residents examining whether and how the medical school and residency training environments might influence future doctors’ racial and other biases. The study asks students on a regular basis about their implicit and explicit attitudes toward racial and other minorities, and how these views might change over time.

In several studies using this data set, the team has found that student reports of organizational climate, contact with minority faculty and patients, and faculty role-modeling were more strongly related to changes in implicit and explicit bias than their experiences with formal curricula or formal training [2]. These include studies headed by health services researcher Sean Phelan, PhD, of the Mayo Clinic, that examine medical student reactions to patients who are obese and who identify as LGBT.

In prospective studies of the initial medical student cohort, he found results similar to those involving race: for example, that students with lower implicit-bias scores were more likely to have had frequent contact with LGBT faculty, residents, students and patients, and that those with higher scores were more likely to have been exposed to faculty who exhibited discriminatory behavior [2].

In terms of race, van Ryn’s team also found that students who entered medical school with lower implicit-bias scores and many positive experiences with people of different races were likely to build on those experiences during medical school, says Dovidio.

Another promising intervention, the prejudice habit-breaking intervention, is based on a theory developed by Patricia G. Devine, PhD, and William T.L. Cox, PhD, of the University of Wisconsin-Madison.

The intervention, which adopts the premise that bias, whether implicit or explicit, is a habit that can be overcome with motivation, awareness and effort, includes experiential, educational and training components. A study by Patrick S. Forscher, PhD, of the University of Arkansas, and colleagues found that compared with controls, people who received the intervention were more likely after 14 days to feel concern about the targets of prejudice and to label biases as wrong, though that awareness later declined.

However, in a subsample of original participants two years later, those who received the intervention were more likely than controls to object to an online essay endorsing racial stereotyping, the team found [2].

System 1 and System 2 Model of Thinking

Yasar Suveren writes:

This model of thinking was introduced in 2011 by Daniel Kahneman. The work was published in Turkish in 2018 (Kahneman 2018). It is widely adopted due to its simplicity and intuitive nature. The theory gives an analogy explaining how the human mind processes information. In the first system, the brain is fast, automatic, and intuitive, as Oberai and Anand (2018) noted. In this state, the mind engages in innate mental activities. They include mental activities meant to perceive the immediate surroundings, recognize objects, and read facial expressions, among others. Payne et al. (2017) emphasized that system 1 operates automatically and quickly, with no effort or voluntary control.

On the other hand, system 2 allocates attention to the mental processes that demand it. It includes cognitive processes such as complex computations (Mariani 2019). This brain system is often associated with subjective experiences of choice. Most people identify with system 2 thinking. They assume that their decision-making is characterized by making intentional choices about what to think and do. Furthermore, Sander et al. (2020) note that this system can construct thoughts in orderly steps. In this case, one can resist processing some information.

On the other hand, system 1 mode of thinking is entirely involuntary. It is directly related to implicit biases that occur with little effort (Mariani 2019). When using this theory, it is noted that the implicit biases can differ amongst neighbors, friends, or even family members.

A Model of Dual Attitudes

According to Fitzgerald and Hurst (2017), the concept of dual attitudes is widely adopted in social psychology. It explains the idea that one can have two different attitudes about the same thing.

These are both implicit and explicit attitudes. The implicit attitude entails the intuitive response, which is often unconscious and uncontrolled. On the other hand, Weber and Wiersema (2017) note that the explicit attitude is conscious and controlled. These attitudes coexist in the individual’s mind, although the subject may not be aware of it. This theory is popularly used to explain unconscious bias. In this case, the implicit attitudes include the oblivious stereotype that subjects hold towards members of a particular social group.

The concept can be easily explained through the examination of racial prejudice. According to Weber and Wiersema (2017), individuals cultivate their views on race based on their immediate environment when growing up. For instance, their upbringing has a significant impact on the development of racial prejudice. Other influencing factors include the regional and ethnic background of an individual. Early exposure to prejudiced attitudes shapes their implicit views about members of other ethnic groups. However, when they grow up, they are bound to create different perspectives. For instance, with age, education, and exposure, individuals may shift their social attitudes to embrace an explicit attitude (Zheng 2016).

In most cases, the secondary attitudes are nonprejudicial to avoid any social judgments from other people. In such situations, the subject is said to have dual attitudes towards race. Glasgow (2016) notes that the subject would have to engage in an intensive self-examination to acknowledge the duality. In unconscious bias, this theory explains why people are not aware of the oblivious views that influence their decision-making and perspectives towards other people.

Social Identity Theory

The social identity theory was developed after a series of studies conducted by Henri Tajfel. Tajfel was a renowned British social psychologist known for his minimal-group studies. The participants in these studies were assigned to groups that were designed to be as arbitrary as possible. When the participants were told to transfer points to other participants, they gave more points to in-group members than to out-group members. The studies were interpreted as showing that categorizing people into groups is a significant factor influencing their thinking.

As Howard and Bornstein (2018) note, they are more prone to think of themselves as a group and not separate individuals. The theory was coined to explain how group membership can influence a person’s attitudes in social settings. Therefore, group membership helps people define who they are and relate with members of other groups. The theory has significantly influenced scholarly research as it reveals the connection between cognitive processes and behavioral motivation. Initially, the focus of the theory was to explain intergroup conflict and relations in a broader perspective.

As Lopez (2018) notes, later elaborations by Tajfel’s student, John Turner, and his colleagues expanded the application of the theory in explaining how people interpret their positions in a social setting. The theory was also used to elaborate on how social groups affect their perceptions of others. Some of these perceptions include social stereotypes, which are indicators of unconscious bias.

The theory also gives three cognitive processes that shape how unconscious bias is formed in the group context. The first mental process is social categorization. According to Howard and Bornstein (2018), social categorization refers to the tendency of individuals to perceive themselves and others based on constructed social categories. In this case, people are viewed as interchangeable members of a group rather than as individuals with unique qualities. Here, one may hold implicit attitudes towards those that fall within a specific social category.

Glasgow (2016) identifies the second and third cognitive processes as social comparison and social identification. Social comparison refers to the process used by people to determine the value or social position of a group and its members.

For instance, schoolteachers are implicitly perceived to have a higher social standing compared to garbage collectors. Lastly, Faucher (2016) notes that social identification reveals that people perceive themselves as active observers in social situations. Therefore, their sense of self and how they relate with others shape their attitudes towards other individuals and group members. Social identity is a result of these three factors. Zheng (2016) defines the concept as an individual’s knowledge of belonging to a particular social group and the valuation of its membership. The motivation of social behavior explains how individuals develop unconscious bias based on social groups.

According to the theory, people generally prefer to identify with the positive traits of the groups that they belong to. In addition, they are inclined to seek out the positive qualities and attitudes from their in-group members. This inclination facilitates unconscious bias as they may focus more on the negative characteristics of out-group members.

Many people do so to downplay the importance of positive qualities in other groups. This increases the risk of identity threats, where members of a group feel that their competence is devalued (Howard and Bornstein 2018). Additionally, it may result in intergroup conflicts, which are among the consequences of unconscious bias.

Manifestations of Unconscious Bias

As Buetow (2019) notes, identifying unconscious bias requires a high level of introspection.

Moreover, it is the critical factor in determining ways to overcome oblivious prejudice. Knowing how implicit bias manifests will facilitate effective reflection at an individual level. It will also help identify instances when the individual or someone else is a victim of bias. Faucher (2016) also notes that understanding the manifestation of prejudice can help cultivate the confidence to speak up against any negative behavior.

Consequently, it facilitates the creation of an inclusive environment where all individuals are treated as equals. This section explores some of the common manifestations of unconscious bias.

Gender Bias

According to Fitzgerald and Hurst (2017), gender bias refers to preferring one gender over the other. It is often referred to as sexism. Gender bias is often manifested when someone unconsciously associates certain stereotypes with different genders. In these situations, someone may be treated differently simply because of their sex. Here, the skills, capabilities, and qualities that the subject possesses are not considered.

According to gender studies in the United States, 90% of the participants were biased against women (Como et al. 2019). Since this figure reflects explicit attitudes, the number of people with an unconscious bias against women is assumed to be higher. According to Como et al. (2019), 50% of men said they had more job rights than women.

The results of the study further assert the continued prevalence of gender bias in society. A study by Oberai and Anand (2018) focused on why the issue of gender bias still occurs in modern society. First, they note that the problem stems from the prevailing societal beliefs about men and women. For instance, society has continually taught that men are assertive, decisive, and strong. On the other hand, women are expected to be warm, caring, and sympathetic. These assumptions are commonly used to give generalized qualities to members of either group.

Faucher (2016) also noted that many people possess a dual attitude on the issue. In this case, many people were raised in environments where women were considered inferior to men. However, when they grow up, they embrace the concept of gender equality, where both genders are treated as equals. However, their implicit attitudes continue to affect them unknowingly.

Unconscious Bias

As Shore et al. (2011) point out, disparities in healthcare have been increasing at an alarming rate. Compelling evidence shows that the underrepresented groups in healthcare are often victims of unconscious bias. In this case, implicit attitudes refer to the associations that alter caregivers’ perceptions, dictating how they interact with the patient.

Stereotyping and prejudice play a significant role in perpetuating the existing healthcare disparities instead of mitigating them. The unintended differences are often reflected in medical school admission and faculty hiring and promotion. In this case, ageism, gender bias, and name bias are very prominent. Unconscious bias is also commonly experienced in inpatient care. According to a study by Consul et al. (2021), white and black caregivers are likely to treat patients of their own race better. Similarly, patients have a high preference for caregivers from a similar ethnic background. Patients also tend to feel more confident when assigned to male practitioners, especially for high-risk medical procedures. On the same note, young doctors are viewed as inexperienced, and patients may opt for older doctors. This robs the caregivers of a fair chance to practice, which interferes with their career growth (Kallman, 2017).

It also adversely affects their motivations, which directly influence the quality of services they offer to patients. Compared to heterosexual patients, members of the LGBTQ community experience higher rates of health disparities. This results from both conscious and unconscious bias that has been projected onto them. The problem begins with the presentation of clinical information in hospitals. Here, the patients are required to give their age, presumed gender, and their racial identity.

According to Maina et al. (2018), the information fuels unconscious bias towards the patient. Basing medical care on stereotypes may result in premature closure or missed diagnosis, which puts the patient at more risk. For instance, at the beginning of the human immunodeficiency virus (HIV) pandemic, it was assumed that the disease could only be transmitted to members of the gay community.

The assumption hindered timely recognition of infections in women, children, and heterosexual men. Apart from sexual orientation, other factors that affect the judgment and behavior of health practitioners include socio-economic status, age, weight, and disabilities, among others (Kallman, 2017). Mitigating implicit bias in healthcare will help reduce these disparities.

Medical errors occur in 1.7 to 6.5 % of all hospital admissions, causing up to 100,000 unnecessary deaths each year and perhaps one million excess injuries in the USA [1, 2]. In 2008, medical errors cost the USA $19.5 billion [3]. The incremental cost associated with the average event was about US$ 4685, along with an increased length of stay of about 4.6 days. The ultimate consequences of medical errors include avoidable hospitalizations, medication underuse and overuse, and wasted resources that may lead to patients’ harm [4, 5].

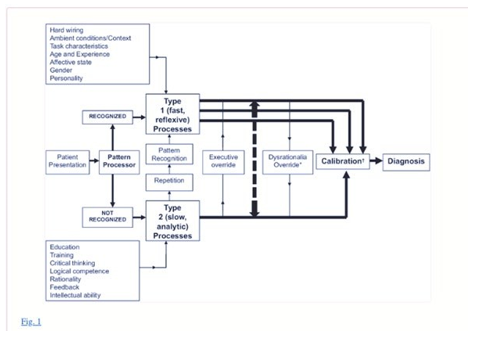

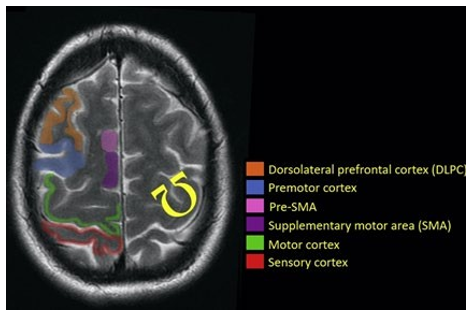

Kahneman and Tversky introduced a dual-system theoretical framework to explain judgments, decisions under uncertainty, and cognitive biases. System 1 refers to an automatic, intuitive, unconscious, fast, and effortless or routine mechanism to make most common decisions (Figure 1). Conversely, system 2 makes deliberate decisions, which are non-programmed, conscious, usually slow and effortful [6]. It has been suggested that most cognitive biases are likely due to the overuse of system 1 or when system 1 overrides system 2 [7-9]. In this framework, techniques that enhance system 2 could counteract these biases and thereby improve diagnostic accuracy and decrease management errors.

Figure 1: A model for diagnostic reasoning based on dual-process theory (from Ely et al. with permission) [9]. System 1 thinking can be influenced by multiple factors, many of them subconscious (emotional polarization toward the patient, recent experience with the diagnosis being considered, specific cognitive or affective biases), and is therefore represented with multiple channels, whereas system 2 processes are, in a given instance, single-channeled and linear. System 2 overrides system 1 (executive override) when physicians take a time-out to reflect on their thinking, possibly with the help of checklists. In contrast, system 1 may irrationally override system 2 when physicians insist on going their own way (e.g., ignoring evidence-based clinical decision rules that can usually outperform them). Notes: Dysrationalia denotes the inability to think rationally despite adequate intelligence. “Calibration” denotes the degree to which the perceived and actual diagnostic accuracy correspond.

In the last three decades, we learned about the importance of patient- and hospital-level factors associated with medical errors. For example, standardized approaches (e.g. Advanced Trauma Life Support, ABCs for cardiopulmonary resuscitation) at the health system level lead to better outcomes by decreasing medical errors [16, 17]. However, physician-level factors were largely ignored, as reflected by reports from scientific organizations [18-20]. It was not until the 1970s that cognitive biases were initially recognized to affect individual physicians’ performance in daily medical decisions [6, 21-24]. Despite these efforts, little is known about the influence of cognitive biases and personality traits on physicians’ decisions that lead to diagnostic inaccuracies, medical errors or impact on patient outcomes. While a recent review on cognitive biases and heuristics suggested that general medical personnel are prone to show cognitive biases, it did not answer the question of whether these biases actually relate to the number of medical errors made by physicians [25].

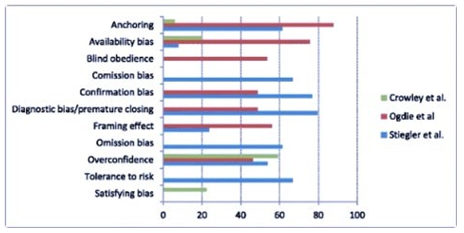

The most commonly studied personality trait was tolerance to risk or ambiguity (n = 5), whereas the framing effects (n = 5) and overconfidence (n = 5) were the most common cognitive biases.

There was a wide variability in the reported prevalence of cognitive biases (Figure 3). For example, when analyzing the three most comprehensive studies that accounted for several cognitive biases (Figure 4), the availability bias ranged from 7.8 to 75.6 % and anchoring from 5.9 to 87.8 %, suggestive of substantial heterogeneity among studies. In summary, cognitive biases may be common and were present in all included studies. The framing effect, overconfidence, and tolerance to risk/ambiguity were the most commonly studied factors. However, methodological limitations make it difficult to provide an accurate estimation of the true prevalence.
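To make the scale of this heterogeneity concrete, the short sketch below computes the width of each range quoted above. It uses only the lowest and highest percentages reported in the text; the names `reported_ranges` and `spread` are illustrative assumptions, and the calculation is a simple descriptive aid rather than a formal heterogeneity statistic.

```python
# Lowest and highest reported prevalence (percent) for two cognitive biases,
# taken from the figures quoted in the text. Names here are illustrative only.
reported_ranges = {
    "availability": (7.8, 75.6),
    "anchoring": (5.9, 87.8),
}


def spread(low, high):
    """Return the width of a reported range in percentage points."""
    return round(high - low, 1)


# Width of each range: a rough, descriptive indicator of between-study variation.
spreads = {bias: spread(low, high) for bias, (low, high) in reported_ranges.items()}

for bias, width in spreads.items():
    print(f"{bias}: estimates differ by {width} percentage points across studies")
```

A gap of this size between the lowest and highest estimates is consistent with the caution above that no single prevalence figure can yet be relied upon.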

Figure 4: Prevalence of cognitive biases in the top three most comprehensive studies [39, 50, 52]. Numbers represent percentages reflecting the frequency of the cognitive bias. Note the wide variation in the prevalence of cognitive biases across studies.

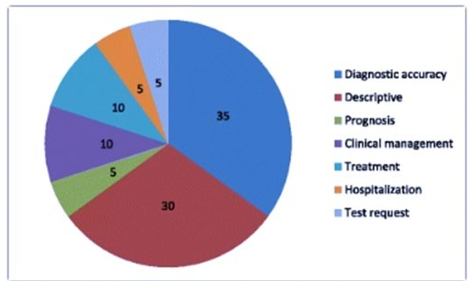

Figure 5: Outcome measures of studies evaluating cognitive biases. Numbers represent percentages. Total number of studies = 20. Note that 30 % of studies are descriptive and 35 % target diagnostic accuracy. Only a few studies evaluated medical management, treatment, hospitalization or prognosis.
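Because the caption reports shares of a small total, it can help to convert the percentages back into study counts. The sketch below does so using only the total and the two shares stated in the caption; the names `TOTAL_STUDIES`, `shares_percent`, and `studies_for` are illustrative assumptions.

```python
# Total number of studies and outcome shares as stated in the Figure 5 caption.
TOTAL_STUDIES = 20
shares_percent = {
    "descriptive": 30,
    "diagnostic accuracy": 35,
}


def studies_for(share_percent, total=TOTAL_STUDIES):
    """Convert a percentage share of the total into a whole number of studies."""
    return round(total * share_percent / 100)


counts = {outcome: studies_for(share) for outcome, share in shares_percent.items()}

for outcome, n in counts.items():
    print(f"{outcome}: {n} of {TOTAL_STUDIES} studies")
```

Read this way, each percentage point corresponds to a fraction of a single study, so small differences between categories should be interpreted cautiously.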

In summary, their findings suggest that cognitive biases (present in one to two thirds of case scenarios) may be associated with diagnostic inaccuracies. Evidence from five out of seven studies suggests a potential influence of cognitive biases on management or therapeutic errors [38, 43, 46, 47, 50]. Physicians who exhibited information bias, anchoring effects and representativeness bias were more likely to make diagnostic errors [38, 43, 46, 50].

Early recognition of physicians’ cognitive biases is crucial to optimize medical decisions, prevent medical errors, provide more realistic patient expectations, and contribute to decreasing the rising health care costs altogether [3, 8, 54]. In the present systematic review, we had four objectives. First, we identified the most commonly reported cognitive biases (i.e., anchoring and framing effects, information biases) and personality traits (e.g. tolerance to uncertainty, aversion to ambiguity) that may potentially affect physicians’ decisions. All included studies found at least one cognitive factor/bias, indicating that a large number of physicians may be affected [39, 50, 52]. Second, we identified the effect of physicians’ cognitive biases or personality traits on medical tasks and on medical errors. Studies evaluating physicians’ overconfidence, the anchoring effect, and information or availability bias may suggest an association with diagnostic inaccuracies [30, 35, 40, 42, 45, 52, 53].

Moreover, anchoring, information bias, overconfidence, premature closure, representativeness and confirmation bias may be associated with therapeutic or management errors [38, 43, 46, 47, 50]. Misinterpretation of recommendations and lower comfort with uncertainty were associated with overutilization of diagnostic tests [46]. Better coping strategies and tolerance to ambiguity among physicians could be related to optimal management [43]. For the third objective, identifying the relation between physicians’ cognitive biases and patients’ outcomes, only 10 % of studies provided data [41, 43].

The fourth and final objective was to identify gaps in the literature. They found that only a few (<50 %) of an established set of cognitive biases [26] were assessed, including overconfidence and framing effects. Other listed and relevant biases were not studied (e.g. aggregation bias, feedback sanction, hindsight bias). For example, aggregation bias (the assumption that aggregated data from clinical guidelines do not apply to their patients) or hindsight bias (the tendency to view events as more predictable than they really are) both compromise a realistic clinical appraisal, which may also lead to medical errors [18, 26].

In the present systematic review, they highlighted the relevance of recognizing physicians’ personality traits and cognitive biases. Although cognitive biases may affect a wide range of physicians (and influence diagnostic accuracy, management, and therapeutic decisions), their true prevalence remains unknown.

Need for New Standards

Amidst years of evidence that the care provided to patients is inequitable, the need to address racism in medicine head-on has created a new standard for all domains of medicine, including medical education. The individual decisions of providers are often influenced by bias, leading to substandard care and uncomfortable patient interactions. In pediatric emergency medicine (PEM), racial disparities have been described in several aspects of care, including, but not limited to, treatment of pain for children with appendicitis, utilization of imaging, and antibiotic prescriptions.

Both in the emergency department and outside it, there is a racial disparity in nonaccidental trauma allegations, investigations, and outcomes. Black children are more frequently referred for investigation and removed from their family's care across the country. There remains debate regarding the multifactorial nature of this racial disproportionality, but one of these factors is likely the racial bias underlying individual decision-making. At least one study has demonstrated that standardizing medical decision-making regarding abuse workups in pediatric hospitals erases the racial disparity in abuse allegations by medical providers.

This strongly suggests that medical providers, left to their own gestalt of when to be concerned about nonaccidental trauma, can be influenced by racial implicit bias. When addressing individual racism and implicit bias, there are several potential domains to consider, including the bias of the learners themselves, the bias of team members, and the bias of patients. Each of these domains contains unique challenges and the need for overlapping but distinct skill sets. It is imperative for all medical providers to be aware of how racial bias impacts care and be prepared to address it as part of a larger effort to undo racial health disparities and improve the patient and family medical experience. Emergency department physicians in particular must be adept at utilizing such skills in a fast-paced environment that often includes a rotating group of team members and consultants.

A variety of educational resources have been developed to combat provider bias and racism. Skill building for uncomfortable scenarios can be challenging in a lecture or other more theoretical format. Simulation offers a new potential modality for combating racial bias. Addressing the bias of others on the medical team can be difficult in the moment, and early learners often describe a deer-in-the-headlights panic, or a passivity, when faced with these scenarios. This discomfort makes such an interaction ripe for simulation, where learners can practice their skills in a realistically stressful but safe learning environment. A few authors have described the use of standardized patients to improve learning about racial bias, and this modality has successfully been used in the past to teach cultural competency. However, there are few published studies on the use of simulation to address racism and implicit bias, and no similar simulation publications in MedEdPORTAL.

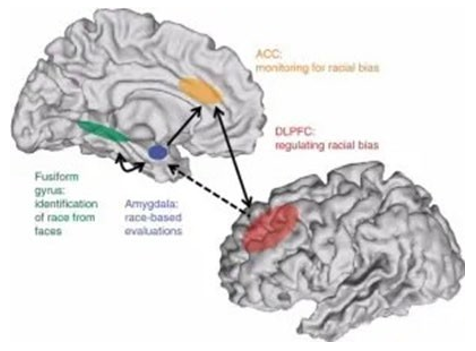

The Neurobiology of Bias

Pascal Molenberghs writes [12]:

Recently it has become possible to investigate the neural mechanisms that underlie these in-group biases, and hence this review will give an overview of recent developments on the topic. Rather than relying on a single brain region or network, it seems that subtle changes in neural activation across the brain, depending on the modalities involved, underlie how we divide the world into 'us' versus 'them'. These insights have important implications for our understanding of how in-group biases develop and could potentially lead to new insights on how to reduce them.

Through evolution, the human brain has developed to adjust to complex social group living (Dunbar, 2011). Neuroimaging studies have shown that our neural correlates respond differently to in-group and out-group members (Eberhardt, 2005, Amodio, 2008, Ito and Bartholow, 2009, Chiao and Mathur, 2010, Kubota et al., 2012, Eres and Molenberghs, 2013).

Understanding how these neural correlates are influenced by group membership is important for a better understanding of how complex social problems such as racism and in-group bias develop. Race is just one of many dimensions that people can use to categorize themselves. Gender, age, profession, ethnicity, status, country of birth, sports team, social group and education are just a few examples that we use to categorize people as belonging either to the in-group or out-group. Research has shown that people categorize themselves and others even based on trivial criteria (Tajfel et al., 1971) and this categorization can be very fluid and is often context dependent (Turner et al., 1994).

How We Categorize Others

From a theoretical perspective, racism is one aspect of a larger psychological phenomenon called in-group bias. Our brains have developed to adapt to complex social situations. Discriminating whether someone belongs to the same or a different group could be vital in order to behave correctly in some situations, such as during battle.

The same psychological mechanism can, however, lead to highly problematic behaviors. Everyone belongs to many different groups in their life: In-group bias can be observed between fans of sports teams, supporters of political parties, or students from competing universities. In-group bias is highly dependent on context. Someone can show a bias against another person in one situation (e.g., during a football game when the two people support opposing teams), but categorize them as belonging to the same group in another context (e.g., when engaged in a political discussion and realizing that their views align). This demonstrates how arbitrary and meaningless these categorizations often are.

Functional magnetic resonance imaging studies have shown that the medial prefrontal cortex is particularly involved in social categorization. This brain area has also been found to be activated in studies in which participants were asked to think about their own personal attributes. This indicates that there is a relatively close association between thinking about ourselves and thinking about the social group(s) we belong to. This makes a lot of sense, as people also identify as members of the groups they belong to (e.g., "I am a Baltimore Ravens supporter.").

How We Perceive the Actions of Others

One important insight from psychological research is that people can perceive the same action very differently if it is conducted by a member of the same group or a member of a different group. In one empirical study by the author of the review article, participants were arbitrarily divided into two teams (Molenberghs et al., 2013) and watched videos of individuals from their own team and the competing team performing hand actions. Participants had to judge the speed of these hand movements and on average rated their own teams to be faster, even though the hand movements in the videos were performed at exactly the same speed.

In an additional functional magnetic resonance imaging study with the same task, the scientists found that participants who indicated a strong difference between the two groups showed an increase of activity in the inferior parietal lobule (a brain area that coordinates perception and action) when watching the videos, but not in subsequent decision making when rating the clips. These findings suggest that in-group bias already occurs very early in perception, not only when making a conscious decision about how to act.

How We Feel Empathy Towards Somebody Else

One of the key neuroscientific findings about racism is that on average people express less empathy towards other people who do not belong to their own group. Empathy describes the ability to understand what somebody else might think or feel and to act in an appropriate manner. For example, one study found that ethnic group membership can modulate the neural responses associated with empathy (Xu et al., 2009). Here, the authors used functional magnetic resonance imaging to record brain activation in white and Chinese participants while they were watching video clips of white and Chinese faces being either touched with a Q-tip (non-painful) or poked by a syringe (painful).

The scientists showed that both white and Chinese participants showed increased activation in the anterior cingulate cortex and the inferior frontal cortex when watching a video clip in which a person of their own ethnic group was experiencing pain. These brain areas have previously been shown to be activated when someone experiences pain themselves. Thus, the same brain areas that mediate the first person pain experience are also involved in feeling empathy towards somebody else experiencing pain.

Importantly, the scientists found that this empathic brain response was significantly decreased when the participants viewed faces of individuals from other ethnic groups experiencing pain. Thus, in-group bias affects how much someone feels the pain of somebody else, which might contribute to why racist individuals would have less of a problem hurting somebody belonging to a different ethnic group than somebody who belongs to their own ethnic group.

Jennifer Edgoose, MD, MPH, Michelle Quiogue, MD, FAAFP, and Kartik Sidhar, MD, have suggested a strategy to reduce implicit bias by first discovering your blind spots and then actively working to dismiss stereotypes and attitudes that affect your interactions.

For the last 30 years, science has demonstrated that automatic cognitive processes shape human behavior, beliefs, and attitudes. Implicit or unconscious bias derives from our ability to rapidly find patterns in small bits of information. Some of these patterns emerge from positive or negative attitudes and stereotypes that we develop about certain groups of people and form outside our own consciousness from a very young age. Although such cognitive processes help us efficiently sort and filter our perceptions, these reflexive biases also promote inconsistent decision making and, at worst, systematic errors in judgment [13].

Cognitive processes lead us to associate unconscious attributes with social identities. The literature explores how this influences our views on race, ethnicity, age, gender, sexual orientation, and weight, and studies show many people are biased in favor of people who are white, young, male, heterosexual, and thin [4]. Unconsciously, we not only learn to associate certain attributes with certain social groupings (e.g., men with strength, women with nurturing) but also develop preferential ranking of such groups (e.g., preference for whites over blacks).

This unconscious grouping and ranking takes root early in development and is shaped by many outside factors such as media messages, institutional policies, and family beliefs. Studies show that health care professionals have the same level of implicit bias as the general population and that higher levels are associated with lower quality care [5]. Providers with higher levels of bias are more likely to demonstrate unequal treatment recommendations, disparities in pain management, and even lack of empathy toward minority patients [13].

In addition, stressful, time-pressured, and overloaded clinical practices can actually exacerbate unconscious negative attitudes. Although the potential impact of our biases can feel overwhelming, research demonstrates that these biases are malleable and can be overcome by conscious mitigation strategies [13]. They recommend three overarching strategies to mitigate implicit bias: educate, expose, and approach. They further broke down these strategies into eight evidence-based tactics one can incorporate into any quality improvement project, diagnostic dilemma, or new patient encounter. Together, these eight tactics spell out the mnemonic IMPLICIT. See below:

Educate

When we fail to learn about our blind spots, we miss opportunities to avoid harm. Educating ourselves about the reflexive cognitive processes that unconsciously affect our clinical decisions is the first step. The following tactics can help:

Introspection

It is not enough to just acknowledge that implicit bias exists. As clinicians, we must directly confront and explore our own personal implicit biases. As the writer Anais Nin is often credited with saying, “We don't see things as they are, we see them as we are.” To shed light on your potential blind spots and unconscious “sorting protocols,” we encourage caregivers to take one or more implicit association tests.

Discovering a moderate to strong bias in favor of or against certain social identities can help you begin this critical step in self-exploration and understanding. You can also complete this activity with your clinic staff and fellow physicians to uncover implicit biases as a group and set the stage for addressing them. For instance, many of us may be surprised to learn after taking an implicit association test that we follow the typical bias of associating males with science, an awareness that may explain why the patient in our first case example addressed questions to the male medical student instead of the female attending.

Mindfulness

It should come as no surprise that we are more likely to use cognitive shortcuts inappropriately when we are under pressure. Evidence suggests that increasing mindfulness improves our coping ability and modifies biological reactions that influence attention, emotional regulation, and habit formation. There are many ways to increase mindfulness, including meditation, yoga, or listening to inspirational texts. In one study, individuals who listened to a 10-minute meditative audiotape that focused them and made them more aware of their sensations and thoughts in a nonjudgmental way relied less on instinct and showed less implicit bias against black people and the aged [3].

Expose

It is also helpful to expose ourselves to counter-stereotypes and to focus on the unique individuals we interact with. Similarity bias is the tendency to favor ourselves and those like us. When our brains label someone as being within our same group, we empathize better and use our actions, words, and body language to signal this relatedness. Experience bias can lead us to overestimate how much others see things the same way we do, to believe that we are less vulnerable to bias than others, and to assume that our intentions are clear and obvious to others. Gaining exposure to other groups and ways of thinking can mitigate both of these types of bias. The following tactics can help:

Perspective Taking

This tactic involves taking the first-person perspective of a member of a stereotyped group, which can increase psychological closeness to that group [8]. Reading novels, watching documentaries, and listening to podcasts are accessible ways to reach beyond our comfort zone. To authentically perceive another person's perspective, however, you should engage in positive interactions with stereotyped group members in real life. Increased face-to-face contact with people who seem different from you on the surface undermines implicit bias.

Learn to Slow Down

To recognize our reflexive biases, we must pause and think. For example, the next time you interact with someone in a stereotyped group or observe societal stereotyping, such as through the media, recognize what responses are based on stereotypes, label those responses as stereotypical, and reflect on why the responses occurred. One might then consider how the biased response could be avoided in the future and replace it with an unbiased response.

Additionally, research strongly supports the use of counter-stereotypic imaging to replace automatic responses. For example, when seeking to contradict a prevailing stereotype, substitute highly defined images, which can be abstract (e.g., modern Native Americans), famous (e.g., minority celebrities like Oprah Winfrey or Lin-Manuel Miranda), or personal (e.g., your child's teacher). As positive exemplars become more salient in your mind, they become cognitively accessible and challenge your stereotypic biases.

Individuation

This tactic relies on gathering specific information about the person interacting with you to prevent group-based stereotypic inferences. Family physicians are trained to build and maintain relationships with each individual patient under their care. Our own social identities intersect with multiple social groupings, for example, related to sexual orientation, ethnicity, and gender. Within these multiplicities, we can find shared identities that bring us closer to people, including shared experiences (e.g., parenting), common interests (e.g., sports teams), or mutual purpose (e.g., surviving cancer). Individuation could have helped the health care workers in Alisha's labor and delivery unit to avoid making judgments based on stereotypes. We can use this tactic to help inform clinical decisions by using what we know about a person's specific, individual, and unique attributes [1].

Approach

Like any habit, it is difficult to change biased behaviors with a “one shot” educational approach or awareness campaign. Taking a systematic approach at both the individual and institutional levels, and incorporating a continuous process of improvement, practice, and reflection, is critical to improving health equity.

Check Your Messaging

Using very specific messages designed to create a more inclusive environment and mitigate implicit bias can make a real difference. As opposed to claiming “we don't see color” or using other colorblind messaging, statements that welcome and embrace multiculturalism can have more success at decreasing racial bias.

Institutionalize Fairness

Organizations have a responsibility to support a culture of diversity and inclusion because individual action is not enough to deconstruct systemic inequities. To overcome implicit bias throughout an organization, consider implementing an equity lens – a checklist that helps you consider your blind spots and biases and assures that great ideas and interventions are not only effective but also equitable.

Another example would be to find opportunities to display images in your clinic's waiting room that counter stereotypes. You could also survey your institution to make sure it is embracing multicultural (and not colorblind) messaging.

Take Two

Resisting implicit bias is lifelong work. The strategies introduced here require constant revision and reflection as you work toward cultural humility. Examining your own assumptions is just a starting point. Talking about implicit bias can trigger conflict, doubt, fear, and defensiveness. It can feel threatening to acknowledge that you participate in and benefit from systems that work better for some than others. This kind of work can mean taking a close look at the relationships you have and the institutions of which you are a part.

Education

Education, exposure, and a systematic approach to understanding implicit bias may bring us closer to our aspirational goal to care for all our patients in the best possible way and move us toward a path of achieving health equity throughout the communities we serve. The mnemonic IMPLICIT can help us to remember the eight tactics we all need to practice. While disparities in social determinants of health are often beyond the control of an individual physician, we can still lead the fight for health equity for our own patients, both from within and outside the walls of health care.

With our specialty-defining goal of getting to know each patient as a unique individual in the context of his or her community, family physicians are well suited to lead inclusively by being humble, respecting the dignity of each person, and expressing appreciation for how hard everyone works to overcome bias [55-73].

References

- Classen, D. C., Pestotnik, S. L., Evans, R. S., & Burke, J. P. (1991). Computerized surveillance of adverse drug events in hospital patients. Jama, 266(20), 2847-2851.

- Bates, D. W., Cullen, D. J., Laird, N., Petersen, L. A., & Small, S. D., et al. (1995). Incidence of adverse drug events and potential adverse drug events: implications for prevention. Jama, 274(1), 29-34.

- Andel, C., Davidow, S. L., Hollander, M., & Moreno, D. A. (2012). The economics of health care quality and medical errors. Journal of health care finance, 39(1), 39.

- Gionet, L., & Roshanafshar, S. (2013). Health at a Glance. Select Health Indicators of First Nations People Living Off Reserve, Métis and Inuit.

- Ioannidis, J. P., & Lau, J. (2001). Evidence on interventions to reduce medical errors: an overview and recommendations for future research. Journal of general internal medicine, 16, 325-334.

- Tversky, A., & Kahneman, D. (1974). Judgment under Uncertainty: Heuristics and Biases: Biases in judgments reveal some heuristics of thinking under uncertainty. Science, 185(4157), 1124-1131.

- Mamede, S., Van Gog, T., Van Den Berge, K., Van Saase, J. L., & Schmidt, H. G. (2014). Why do doctors make mistakes? A study of the role of salient distracting clinical features. Academic Medicine, 89(1), 114-120.

- van den Berge, K., & Mamede, S. (2013). Cognitive diagnostic error in internal medicine. European journal of internal medicine, 24(6), 525-529.

- Ely, J. W., Graber, M. L., & Croskerry, P. (2011). Checklists to reduce diagnostic errors. Academic Medicine, 86(3), 307-313.

- Dhillon, B. S. (1989). Human errors: a review. Microelectron Reliab, 29(3), 299-304.

- Stripe, S. C., Best, L. G., Cole-Harding, S., Fifield, B., & Talebdoost, F. (2006). Aviation model cognitive risk factors applied to medical malpractice cases. The Journal of the American Board of Family Medicine, 19(6), 627-632.

- Chassin, M. R. (1998). Is health care ready for Six Sigma quality?. The Milbank Quarterly, 76(4), 565-591.

- Corn, J. B. (2009). Six sigma in health care. Radiologic technology, 81(1), 92-95.

- Zeltser, M. V., & Nash, D. B. (2010). Approaching the evidence basis for aviation-derived teamwork training in medicine. American Journal of Medical Quality, 25(1), 13-23.

- Ballard, S. B. (2014). The US commercial air tour industry: a review of aviation safety concerns. Aviation, space, and environmental medicine, 85(2), 160-166.

- Kern, K. B., Hilwig, R. W., Berg, R. A., Sanders, A. B., & Ewy, G. A. (2002). Importance of continuous chest compressions during cardiopulmonary resuscitation: improved outcome during a simulated single lay-rescuer scenario. Circulation, 105(5), 645-649.

- Collicott, P. E., & Hughes, I. (1980). Training in advanced trauma life support. Jama, 243(11), 1156-1159.

- Michaels, A. D., Spinler, S. A., Leeper, B., Ohman, E. M., & Alexander, K. P., et al. (2010). Medication errors in acute cardiovascular and stroke patients: a scientific statement from the American Heart Association. Circulation, 121(14), 1664-1682.

- Khoo, E. M., Lee, W. K., Sararaks, S., Abdul Samad, A., Liew, S. M., Cheong, A. T., ... & Hamid, M. A. (2012). Medical errors in primary care clinics–a cross sectional study. BMC family practice, 13, 1-6.

- Jenkins, R. H., & Vaida, A. J. (2007). Simple strategies to avoid medication errors. Family Practice Management, 14(2), 41-47.

- Marewski, J. N., & Gigerenzer, G. (2012). Heuristic decision making in medicine. Dialogues in clinical neuroscience, 14(1), 77-89.

- Wegwarth, O., Schwartz, L. M., Woloshin, S., Gaissmaier, W., & Gigerenzer, G. (2012). Do physicians understand cancer screening statistics? A national survey of primary care physicians in the United States. Annals of internal medicine, 156(5), 340-349.

- Elstein, A. S. (1983). Analytic methods and medical education: problems and prospects. Medical Decision Making, 3(3), 279-284.

- Elstein, A. S. (1976). Clinical Judgment: Psychological Research and Medical Practice: Interdisciplinary effort may lead to more relevant research and improved clinical decisions. Science, 194(4266), 696-700.

- Blumenthal-Barby, J. S., & Krieger, H. (2015). Cognitive biases and heuristics in medical decision making: a critical review using a systematic search strategy. Medical Decision Making, 35(4), 539-557.

- Croskerry, P. (2003). The importance of cognitive errors in diagnosis and strategies to minimize them. Academic medicine, 78(8), 775-780.

- Peabody, J. W., Luck, J., Glassman, P., Jain, S., & Hansen, J., et al. (2004). Measuring the quality of physician practice by using clinical vignettes: a prospective validation study. Annals of internal medicine, 141(10), 771-780.

- Eva, K. W. (2005). What every teacher needs to know about clinical reasoning. Medical education, 39(1), 98-106.

- Liberati, A., Altman, D. G., Tetzlaff, J., Mulrow, C., Gøtzsche, P. C., Ioannidis, J. P., ... & Moher, D. (2009). The PRISMA statement for reporting systematic reviews and meta-analyses of studies that evaluate health care interventions: explanation and elaboration. Annals of internal medicine, 151(4), W-65.

- Meyer, A. N., Payne, V. L., Meeks, D. W., Rao, R., & Singh, H. (2013). Physicians’ diagnostic accuracy, confidence, and resource requests: a vignette study. JAMA internal medicine, 173(21), 1952-1958.

- Wells, G. A., Shea, B., O’Connell, D., Peterson, J., Welch, V., Losos, M., & Tugwell, P. (2000). The Newcastle-Ottawa Scale (NOS) for assessing the quality of nonrandomised studies in meta-analyses.

- Cochrane handbook for systematic reviews of interventions version 5.1.0 updated March 2011.

- Shi, Q., MacDermid, J. C., Santaguida, P. L., & Kyu, H. H. (2011). Predictors of surgical outcomes following anterior transposition of ulnar nerve for cubital tunnel syndrome: a systematic review. The Journal of hand surgery, 36(12), 1996-2001.

- Mamede, S., Splinter, T. A., van Gog, T., Rikers, R. M., & Schmidt, H. G. (2012). Exploring the role of salient distracting clinical features in the emergence of diagnostic errors and the mechanisms through which reflection counteracts mistakes. BMJ quality & safety, 21(4), 295-300.

- Mamede, S., van Gog, T., van den Berge, K., Rikers, R. M., van Saase, J. L., van Guldener, C., & Schmidt, H. G. (2010). Effect of availability bias and reflective reasoning on diagnostic accuracy among internal medicine residents. Jama, 304(11), 1198-1203.

- Msaouel, P., Kappos, T., Tasoulis, A., Apostolopoulos, A. P., Lekkas, I., Tripodaki, E. S., & Keramaris, N. C. (2014). Assessment of cognitive biases and biostatistics knowledge of medical residents: a multicenter, cross-sectional questionnaire study. Medical education online, 19(1), 23646.

- Ross, S., Moffat, K., McConnachie, A., Gordon, J., & Wilson, P. (1999). Sex and attitude: a randomized vignette study of the management of depression by general practitioners. British Journal of General Practice, 49(438), 17-21.

- Perneger, T. V., & Agoritsas, T. (2011). Doctors and patients’ susceptibility to framing bias: a randomized trial. Journal of general internal medicine, 26, 1411-1417.

- Ogdie, A. R., Reilly, J. B., Pang, W. G., Keddem, S., Barg, F. K., Von Feldt, J. M., & Myers, J. S. (2012). Seen through their eyes: residents’ reflections on the cognitive and contextual components of diagnostic errors in medicine. Academic Medicine, 87(10), 1361-1367.

- Friedman, C., Gatti, G., Elstein, A., Franz, T., Murphy, G., & Wolf, F. (2001). Are clinicians correct when they believe they are correct? Implications for medical decision support. In MEDINFO 2001 (pp. 454-458). IOS Press.

- Baldwin, R. L., Green, J. W., Shaw, J. L., Simpson, D. D., Bird, T. M., Cleves, M. A., & Robbins, J. M. (2005). Physician risk attitudes and hospitalization of infants with bronchiolitis. Academic Emergency Medicine, 12(2), 142-146.

- Reyna, V. F., & Lloyd, F. J. (2006). Physician decision making and cardiac risk: effects of knowledge, risk perception, risk tolerance, and fuzzy processing. Journal of Experimental Psychology: Applied, 12(3), 179.

- Yee, L. M., Liu, L. Y., & Grobman, W. A. (2014). The relationship between obstetricians’ cognitive and affective traits and their patients’ delivery outcomes. American journal of obstetrics and gynecology, 211(6), 692-e1.

- Graber, M. A., Bergus, G., Dawson, J. D., Wood, G. B., Levy, B. T., & Levin, I. (2000). Effect of a patient’s psychiatric history on physicians’ estimation of probability of disease. Journal of general internal medicine, 15(3), 204-206.

- Bytzer, P. (2007). Information bias in endoscopic assessment. Official journal of the American College of Gastroenterology ACG, 102(8), 1585-1587.

- Sorum, P. C., Shim, J., Chasseigne, G., Bonnin-Scaon, S., Cogneau, J., & Mullet, E. (2003). Why do primary care physicians in the United States and France order prostate-specific antigen tests for asymptomatic patients?. Medical Decision Making, 23(4), 301-313.

- Redelmeier, D. A., & Shafir, E. (1995). Medical decision making in situations that offer multiple alternatives. Jama, 273(4), 302-305.

- DiBonaventura, M. D., & Chapman, G. B. (2008). Do decision biases predict bad decisions? Omission bias, naturalness bias, and influenza vaccination. Medical Decision Making, 28(4), 532-539.

- Saposnik, G., Cote, R., Mamdani, M., Raptis, S., Thorpe, K. E., Fang, J., ... & Goldstein, L. B. (2013). JURaSSiC: accuracy of clinician vs risk score prediction of ischemic stroke outcomes. Neurology, 81(5), 448-455.

- Stiegler, M. P., & Ruskin, K. J. (2012). Decision-making and safety in anesthesiology. Current Opinion in Anesthesiology, 25(6), 724-729.

- Gupta, M., Schriger, D. L., & Tabas, J. A. (2011). The presence of outcome bias in emergency physician retrospective judgments of the quality of care. Annals of emergency medicine, 57(4), 323-328.

- Crowley, R. S., Legowski, E., Medvedeva, O., Reitmeyer, K., Tseytlin, E., Castine, M., ... & Mello-Thoms, C. (2013). Automated detection of heuristics and biases among pathologists in a computer-based system. Advances in Health Sciences Education, 18, 343-363.

- Mamede, S., Schmidt, H. G., Rikers, R. M., Custers, E. J., Splinter, T. A., & van Saase, J. L. (2010). Conscious thought beats deliberation without attention in diagnostic decision-making: at least when you are an expert. Psychological research, 74, 586-592.

- Zwaan, L., Thijs, A., Wagner, C., van der Wal, G., & Timmermans, D. R. (2012). Relating faults in diagnostic reasoning with diagnostic errors and patient harm. Academic Medicine, 87(2), 149-156.

- Graber, M. L., Kissam, S., Payne, V. L., Meyer, A. N., & Sorensen, A., et al. (2012). Cognitive interventions to reduce diagnostic error: a narrative review. BMJ quality & safety, 21(7), 535-557.

- Balla, J. I., Heneghan, C., Glasziou, P., Thompson, M., & Balla, M. E. (2009). A model for reflection for good clinical practice. Journal of evaluation in clinical practice, 15(6), 964-969.

- Raab, M., & Gigerenzer, G. (2015). The power of simplicity: a fast-and-frugal heuristics approach to performance science. Frontiers in psychology, 6, 1672.

- Elwyn, G., Thompson, R., John, R., & Grande, S. W. (2015). Developing IntegRATE: a fast and frugal patient-reported measure of integration in health care delivery. International Journal of Integrated Care, 15.

- Hyman, D. J., Pavlik, V. N., Greisinger, A. J., Chan, W., & Bayona, J., et al. (2012). Effect of a physician uncertainty reduction intervention on blood pressure in uncontrolled hypertensives-a cluster randomized trial. Journal of general internal medicine, 27, 413-419.

- Kachalia, A., & Mello, M. M. (2013). Defensive medicine—legally necessary but ethically wrong?: Inpatient stress testing for chest pain in low-risk patients. JAMA internal medicine, 173(12), 1056-1057.

- Smith, T. R., Habib, A., Rosenow, J. M., Nahed, B. V., Babu, M. A., Cybulski, G., ... & Heary, R. F. (2015). Defensive medicine in neurosurgery: does state-level liability risk matter?. Neurosurgery, 76(2), 105-114.

- Bhatia, R. S., Levinson, W., Shortt, S., Pendrith, C., & Fric-Shamji, E., et al. (2015). Measuring the effect of Choosing Wisely: an integrated framework to assess campaign impact on low-value care. BMJ quality & safety, 24(8), 523-531.

- Levinson, W., & Huynh, T. (2014). Engaging physicians and patients in conversations about unnecessary tests and procedures: Choosing Wisely Canada. Cmaj, 186(5), 325-326.

- Källberg, A. S., Göransson, K. E., Östergren, J., Florin, J., & Ehrenberg, A. (2013). Medical errors and complaints in emergency department care in Sweden as reported by care providers, healthcare staff, and patients–a national review. European Journal of Emergency Medicine, 20(1), 33-38.

- Studdert, D. M., Mello, M. M., Gawande, A. A., Gandhi, T. K., & Kachalia, A., et al. (2006). Claims, errors, and compensation payments in medical malpractice litigation. New England journal of medicine, 354(19), 2024-2033.

- Hartling, L., Milne, A., Hamm, M. P., Vandermeer, B., & Ansari, M., et al. (2013). Testing the Newcastle Ottawa Scale showed low reliability between individual reviewers. Journal of clinical epidemiology, 66(9), 982-993.

- Lo, C. K. L., Mertz, D., & Loeb, M. (2014). Newcastle-Ottawa Scale: comparing reviewers’ to authors’ assessments. BMC medical research methodology, 14, 1-5.

- Stangierski, A., Warmuz-Stangierska, I., Ruchala, M., Zdanowska, J., & Glowacka, M. D., et al. (2012). Medical errors–not only patients’ problem. Archives of Medical Science, 8(3), 569-574.

- Graber, M. L. (2013). The incidence of diagnostic error in medicine. BMJ quality & safety, 22(Suppl 2), ii21-ii27.

- Cunningham, W. A., Johnson, M. K., Raye, C. L., Gatenby, J. C., & Gore, J. C., et al. (2004). Separable neural components in the processing of black and white faces. Psychological science, 15(12), 806-813.

- Molenberghs, P. (2013). The neuroscience of in-group bias. Neuroscience & Biobehavioral Reviews, 37(8), 1530-1536.

- Molenberghs, P., Halász, V., Mattingley, J. B., Vanman, E. J., & Cunnington, R. (2013). Seeing is believing: Neural mechanisms of action–perception are biased by team membership. Human brain mapping, 34(9), 2055-2068.

- Xu, X., Zuo, X., Wang, X., & Han, S. (2009). Do you feel my pain? Racial group membership modulates empathic neural responses. Journal of Neuroscience, 29(26), 8525-8529.