Research Article - (2025) Volume 3, Issue 1

Fabric Inspection Using Computer Vision

Received Date: Dec 22, 2024 / Accepted Date: Jan 24, 2025 / Published Date: Jan 31, 2025

Copyright: ©2025 Randitha Senarathne, et al. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Citation: Senarathne, R., Thennakoon, R. (2025). Fabric Inspection Using Computer Vision. Int J Med Net, 3(1), 01-07.

Abstract

The textile industry, a cornerstone of global manufacturing, is poised for significant transformation driven by advances in artificial intelligence and computer vision. Traditionally, fabric inspection has been labor-intensive: spotting defects takes considerable time and close visual attention, and the process is prone to human error. AI-enabled technology now allows textile producers to deploy automated inspection systems that improve efficiency and raise quality benchmarks. This shift increases productivity and, by reducing defects, improves consistency in production, ushering in a new age of intelligent manufacturing.

In this project, we developed a computer vision-based fabric inspection system using the YOLOv8 model. The model was trained on a dataset of the most frequent fabric defects, including holes, foreign yarn, and slubs (thread errors), as well as surface contamination such as dirty marks, dye patches, and oil patches. Several models, including VGG, ResNet, and YOLOv8, were evaluated on the dataset, and YOLOv8 performed best on all major metrics: accuracy, precision, recall, F1 score, model size, and prediction time.

To make this solution accessible and user-friendly, we implemented a FastAPI-based web application capable of real-time fabric quality evaluation. The application integrates our YOLOv8 model with a Logitech C270 camera that captures RGB images, enabling immediate defect detection and classification of fabric quality by fault rate. This illustrates how AI can make textile inspection faster, more accurate, and more reliable.

Introduction

The textile industry has seen remarkable developments through the use of artificial intelligence, particularly in quality control. Fabric inspection is a critical stage in production and has conventionally relied on manual assessment, which is time-consuming and prone to human error. Advances in computer vision have made automated fabric inspection possible, offering a reliable alternative with faster and more accurate defect detection. This automation reduces costs and improves the consistency of quality inspections, making it an essential tool in modern textile manufacturing.

Fabric defects vary widely. For example, foreign yarns, holes, slubs, and surface contamination are common in many types of fabric, and these defects can seriously affect fabric quality. An automated inspection system must therefore be sensitive to such defects so that only fabrics of the desired quality reach the market.

In this project, we present a computer vision-based fabric inspection system built on YOLOv8. Our goal is to recognize common fabric defects so that high-quality fabric production can be ensured through real-time analysis.

To build our dataset, we collected data during factory visits to MAS Intimates in Mawathagama as part of an industry-collaborative project. Because only a limited number of fabric defect images were available, we combined our data with the TILDA fabric defect dataset. The defects targeted in our study were holes, foreign yarn, slubs (thread errors), and surface contamination (dirty marks, dye patches, and oil spots).

We trained several models on our dataset, including VGG, ResNet, and YOLOv8. Of these, YOLOv8 performed best in terms of accuracy, precision, recall, F1 score, model size, and prediction time.

We initially built a web application with Flask to calculate the fabric fault rate. However, the Flask-based solution was too slow for real-time prediction. To address this, we reimplemented the web application with FastAPI, which significantly improved the speed and responsiveness of the system. The final application is intended to connect to a Logitech C270 camera that captures RGB images for real-time defect detection, and it evaluates whether the fabric meets the defined quality standards based on the calculated fault rate.

Related work

Automatic fabric inspection is an important research area in the textile industry, and many studies have applied computer vision and artificial intelligence techniques to speed up quality inspection and improve its accuracy. This section reviews some of the key contributions. Several studies, such as [1] and [2], applied deep learning methods to recognize specific types of textile defects. In [1], a CNN-based approach was used to recognize hairiness in pile textiles, with RGB images captured by a ZED stereo camera for classification. In contrast, [2] developed a generic computer vision model for defect detection and classification across different fabric types, addressing the challenges posed by patterned and multicolored textiles with more advanced techniques such as weighted double low-rank decomposition (WDLRD) and Deep-JSLR. Both studies highlight the importance of defect detection but are limited either by a narrow focus on specific defect types or by weak performance on complex patterns [1,2].

Other works, such as [3] and [4], applied neural networks and transfer learning to defect recognition. The work of [3] aimed at low-cost solutions for textile industries in developing countries, using a multi-layer neural network to classify four types of defects. Although simple and inexpensive, it relied heavily on manual image pre-processing. In contrast, [4] employed transfer learning with CNN architectures, especially ResNet50, and achieved high accuracy. However, reliance on transfer learning may limit flexibility when adapting to different defect datasets and may require extensive fine-tuning for new data [3,4].

[5] explored unsupervised learning for fabric defect identification using a convolutional denoising autoencoder. The method reconstructs image patches and synthesizes the detection results in a multilevel approach, which keeps it robust even when few defect samples are available. Although effective at finding small defects, this unsupervised approach may be less accurate for specific, visually recognizable defects such as slubs or dye patches.

Beyond these works, [6] conducted a comprehensive review that highlighted the need for automated inspection systems in the textile industry and emphasized that human visual inspection alone cannot meet present-day demands. The review covered methods such as texture analysis, frequency-domain approaches, gray-level co-occurrence matrices (GLCM), feature fusion, and deep learning, and concluded that deep learning is promising but real-time defect detection remains an open challenge. Similarly, [7] grouped fabric defect detection methods into statistical, spectral, and model-based approaches and recommended combining all three to achieve good results. In [8], the authors developed a computer vision system, installed on a circular knitting machine, that used the Gabor wavelet transform together with neural networks to detect and classify defects; they reported positive results in both offline and online settings, comparing the system's performance against human inspection. [9] proposed a semi-automated defect identification methodology and reported substantial reductions in cost and time achieved through automation. Finally, [10] used a deep convolutional neural network to classify fabric defects based on texture and color features, achieving high accuracy on different fabric datasets and demonstrating the effectiveness of advanced learning algorithms for defect identification and classification.

In contrast, this project uses the YOLOv8 framework for real-time fabric defect detection. It addresses the shortcomings identified in previous work by covering several defect categories, including holes, slubs, surface contamination, and foreign yarn, and by embedding efficient data acquisition and prediction in a FastAPI-based web application. By combining an industry-specific dataset from MAS Intimates with the TILDA dataset and adding real-time processing, the project aims to provide a more comprehensive solution for automating fabric inspection.

Methodology

Data Collection

Data for our fabric inspection system were collected by photographing fabric during factory visits to MAS Intimates and were complemented with the TILDA fabric defect dataset. The images cover holes, foreign yarn, slubs, and surface contamination, as well as good (defect-free) images. To address class imbalance, data augmentation was applied, yielding roughly 5000 images per class. During preprocessing, images were resized to 64x64 pixels to reduce computational requirements and ensure a uniform input size. Each image was then converted to grayscale and replicated across three channels to match the model's input format. Finally, pixel values were normalized to the [0, 1] range.

Figure 1: Dataset Class Distribution
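The preprocessing steps described above can be sketched in Python with NumPy. This is a minimal illustration, not the authors' code: nearest-neighbour resizing and the ITU-R BT.601 luma weights (0.299, 0.587, 0.114) are assumptions, since the text does not say which interpolation or grayscale formula was used, and `augment_to_target` is a hypothetical helper showing one way to top up a minority class with flipped and rotated copies until it reaches roughly 5000 images.

```python
import numpy as np

TARGET_SIZE = 64  # input resolution stated in the text (64x64 pixels)

# Assumption: ITU-R BT.601 luma weights; the paper does not name the
# grayscale formula it used.
LUMA = np.array([0.299, 0.587, 0.114], dtype=np.float32)


def resize_nearest(img: np.ndarray, size: int = TARGET_SIZE) -> np.ndarray:
    """Nearest-neighbour resize of an H x W x C image to size x size x C."""
    h, w = img.shape[:2]
    rows = np.arange(size) * h // size   # source row for each output row
    cols = np.arange(size) * w // size   # source column for each output column
    return img[rows[:, None], cols[None, :]]


def preprocess(rgb: np.ndarray) -> np.ndarray:
    """Resize, convert to grayscale, replicate to 3 channels, scale to [0, 1]."""
    small = resize_nearest(rgb).astype(np.float32)
    gray = small[..., :3] @ LUMA                      # shape (64, 64)
    three = np.repeat(gray[..., None], 3, axis=-1)    # shape (64, 64, 3)
    return np.clip(three / 255.0, 0.0, 1.0)


def augment_to_target(images: list, target: int = 5000) -> list:
    """Hypothetical balancing helper: append flipped/rotated copies of the
    originals until the class holds `target` images (truncates if larger)."""
    if not images:
        raise ValueError("need at least one image to augment")
    transforms = [np.fliplr, np.flipud, lambda x: np.rot90(x, 2)]
    out = list(images)
    i = 0
    while len(out) < target:
        src = images[i % len(images)]
        transform = transforms[(i // len(images)) % len(transforms)]
        out.append(transform(src))
        i += 1
    return out[:target]
```

Replicating the single gray channel three times keeps the 64×64×3 input shape expected by the first convolution while discarding color. The augmentation helper cycles through only three transforms, so after three passes it produces exact duplicates; a fuller pipeline could add random crops or brightness jitter.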

Model Architecture

Our model leverages the YOLOv8 architecture. An input layer reads 64x64-pixel images with three color channels, a backbone of convolutional layers extracts features, and a Feature Pyramid Network (FPN) in the neck enhances feature maps at different scales. This design is important for detecting fabric defects of various sizes. The top layer generates class probabilities and bounding box coordinates, supporting the detection of defects such as surface contamination, slubs, foreign yarn, and holes. YOLOv8 performs real-time detection with low latency, which makes it well suited to the demands of this project.

| Layer No. | Layer Name (Type) | Output Shape | No. of Parameters |
|---|---|---|---|
| 1 | Input | 64×64×3 | 0 |
| 2 | Conv2D-1 | 64×64×32 | 896 |
| 3 | MaxPooling2D | 32×32×32 | 0 |
| 4 | Conv2D-2 | 32×32×64 | 18,496 |
| 5 | MaxPooling2D | 16×16×64 | 0 |
| 6 | Conv2D-3 | 16×16×128 | 73,856 |
| 7 | MaxPooling2D | 8×8×128 | 0 |
| 8 | Dropout | 8×8×128 | 0 |
| 9 | Conv2D-4 (FPN layer) | 8×8×256 | 295,168 |
| 10 | MaxPooling2D | 4×4×256 | 0 |
| 11 | Dropout | 4×4×256 | 0 |
| 12 | Conv2D-5 (Neck Layer) | 4×4×512 | 1,180,160 |
| 13 | MaxPooling2D | 2×2×512 | 0 |
| 14 | Flatten | 2048 | 0 |
| 15 | Dense | 256 | 524,544 |
| 16 | Dense (Output Layer) | 5 | 1,285 |
| | Total Parameters | | 2,094,405 |

Table 1: Summary of Model Architecture
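The parameter counts in Table 1 can be checked with a short calculation. The sketch below assumes 3×3 convolution kernels with 'same' padding and 2×2 max pooling; these settings are inferred from the listed shapes and counts rather than stated in the text. Each Conv2D layer contributes (k·k·c_in + 1)·c_out parameters and each Dense layer (n_in + 1)·n_out, while pooling, dropout, and flattening contribute none.

```python
from typing import List, Tuple


def conv_params(k: int, c_in: int, c_out: int) -> int:
    """k x k x c_in weights per filter plus one bias per output channel."""
    return (k * k * c_in + 1) * c_out


def dense_params(n_in: int, n_out: int) -> int:
    """n_in weights per unit plus one bias per unit."""
    return (n_in + 1) * n_out


def summarize(size: int = 64, channels: int = 3,
              filters: Tuple[int, ...] = (32, 64, 128, 256, 512),
              k: int = 3, hidden: int = 256,
              classes: int = 5) -> Tuple[List[Tuple[str, int]], int]:
    rows: List[Tuple[str, int]] = []
    for f in filters:
        # Assumed 'same' padding: the convolution keeps size x size.
        rows.append((f"Conv2D {size}x{size}x{f}", conv_params(k, channels, f)))
        size, channels = size // 2, f        # 2x2 max pooling halves each side
    flat = size * size * channels            # 2 * 2 * 512 = 2048
    rows.append((f"Dense {flat}->{hidden}", dense_params(flat, hidden)))
    rows.append((f"Dense {hidden}->{classes}", dense_params(hidden, classes)))
    return rows, sum(p for _, p in rows)


layers, total = summarize()
```

Under these assumptions the per-layer counts and the 2,094,405 total match Table 1 exactly; for example, the first convolution has (3·3·3 + 1)·32 = 896 parameters.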

Training Process

For training, the dataset was split into training (70%), validation (20%), and test (10%) subsets. The YOLOv8 model was trained and then evaluated for fabric defect detection in terms of accuracy, precision, recall, F1 score, prediction time, and model size.

Figure 2: Dataset Split for Train, Validation, and Test Sets
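A 70/20/10 split like the one in Figure 2 can be produced by shuffling once with a fixed seed and slicing. This is a plain random split: the paper does not say whether the split was stratified by class, and the seed value and the 25,000-item example (five classes of about 5000 images) are illustrative assumptions.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_dataset(items: Sequence[T], train: float = 0.7, val: float = 0.2,
                  seed: int = 42) -> Tuple[List[T], List[T], List[T]]:
    """Shuffle once, then slice into train / validation / test subsets.

    The test subset receives the remainder (about 10% with the defaults).
    """
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)       # reproducible shuffle
    n_train = round(len(shuffled) * train)
    n_val = round(len(shuffled) * val)
    return (shuffled[:n_train],
            shuffled[n_train:n_train + n_val],
            shuffled[n_train + n_val:])


# Example: a hypothetical 25,000-image dataset (5 classes x ~5000 images)
train_set, val_set, test_set = split_dataset(range(25_000))
```

Because the test set takes whatever remains after the training and validation slices, every item lands in exactly one subset even when the percentages do not divide the dataset size evenly.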

Fault Rate Calculation in Report Generation

For fault rate calculation, the detection system loads the YOLO model onto a CUDA GPU or an Apple Silicon (MPS) device when one is available. Live video frames are streamed over a WebSocket to the FastAPI application, where each frame is processed in real time to detect and classify defects.

Fabric defects are assigned fault points based on their type, size, and frequency. A foreign yarn, slub, or surface contamination defect is normally assigned 1 fault point. However, if such a defect is large in either the lengthwise or breadthwise direction (where length exceeds twice the breadth), it is assigned 4 fault points. Likewise, if more than 3 defects of these types are detected within one meter of fabric (tracked over 30 frames), they are assigned 4 fault points. A hole is assigned 4 fault points regardless of size. This grading system helps standardize fabric quality assessment according to defect severity and distribution.

When a report is generated, the FastAPI application calculates the fault rate using the fabric dimensions supplied by the user. It then produces a JSON report detailing the fabric length, width, detected defects, and calculated fault rate, giving a quantitative measure of defect density in the inspected fabric section.
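The grading rules above can be expressed as a small stateful scorer. This is a sketch under several assumptions: the label strings are hypothetical, "large" is interpreted as a bounding box whose longer side exceeds twice its shorter side, and the one-meter cluster rule is approximated by counting minor defects over a sliding window of the last 30 frames. The sketch also does not de-duplicate a physical defect that appears in several consecutive frames, which a production system would need to handle.

```python
from collections import deque
from dataclasses import dataclass
from typing import List

# Hypothetical label names for the three "minor" defect classes.
MINOR_DEFECTS = {"foreign_yarn", "slub", "surface_contamination"}


@dataclass
class Defect:
    label: str
    length: float   # bounding-box extent along the fabric (any consistent unit)
    breadth: float  # bounding-box extent across the fabric


class FaultScorer:
    """Accumulates fault points frame by frame using the rules in the text."""

    def __init__(self, window_frames: int = 30, cluster_limit: int = 3):
        # Number of minor defects seen in each of the last `window_frames` frames.
        self.recent = deque(maxlen=window_frames)
        self.cluster_limit = cluster_limit
        self.total_points = 0

    def score_frame(self, defects: List[Defect]) -> int:
        self.recent.append(sum(d.label in MINOR_DEFECTS for d in defects))
        clustered = sum(self.recent) > self.cluster_limit  # "more than 3"
        points = 0
        for d in defects:
            if d.label == "hole":
                points += 4                              # holes: 4 points, any size
            elif d.label in MINOR_DEFECTS:
                long_side = max(d.length, d.breadth)
                short_side = min(d.length, d.breadth)
                elongated = long_side > 2 * short_side
                points += 4 if (elongated or clustered) else 1
            # Any other label (e.g. defect-free) adds no points.
        self.total_points += points
        return points
```

With this reading, a hole always adds 4 points, and every minor defect scored while the 30-frame window holds more than three minor detections counts 4 points instead of 1.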

Fault Rate Equation

The fault rate is calculated as follows:

$$\text{Fault Rate} = \frac{\left(\sum \text{Fault Points}\right) \times 0.84 \times 100}{\text{Fabric Length (m)} \times \text{Fabric Width (m)}}$$

where:

- $\sum \text{Fault Points}$ is the total of the fault points assigned to the detected defects.
- Fabric Length (m) and Fabric Width (m) are the dimensions of the fabric section provided by the user.

In this study, fabric quality is classified by the calculated fault rate as follows:

• Good Quality: Fault rate < 15

• Moderate Quality: Fault rate between 15 and 28

• Rejected Quality: Fault rate > 28

These thresholds allow for standardized quality assessment and are used to determine the suitability of fabric for various applications.
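The fault rate equation and the quality thresholds translate directly into two small functions. The constant 0.84 is taken from the equation; it appears to be the number of square metres in a square yard (0.9144² ≈ 0.836), in which case the fault rate is expressed as fault points per 100 square yards, the normalization used by the common four-point inspection system. Treating a rate of exactly 15 or 28 as Moderate is an assumption, since the text does not say which class the boundary values belong to.

```python
AREA_FACTOR = 0.84  # constant from the equation; read here as ~m^2 per square yard


def fault_rate(total_points: float, length_m: float, width_m: float) -> float:
    """Fault rate = (sum of fault points x 0.84 x 100) / (length x width)."""
    if length_m <= 0 or width_m <= 0:
        raise ValueError("fabric dimensions must be positive")
    return total_points * AREA_FACTOR * 100 / (length_m * width_m)


def quality_grade(rate: float) -> str:
    """Apply the thresholds above; 15 and 28 themselves are treated as Moderate."""
    if rate < 15:
        return "Good"
    if rate <= 28:
        return "Moderate"
    return "Rejected"
```

For instance, 10 fault points on a 50 m × 1.5 m section give 10 × 0.84 × 100 / 75 = 11.2, which is graded Good.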

Figure 3: Proposed Flowchart for Fabric Inspection

Results and Discussion

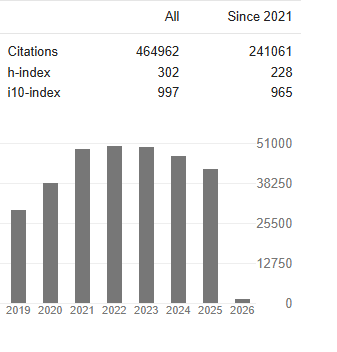

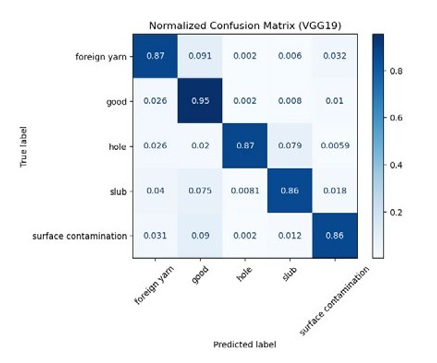

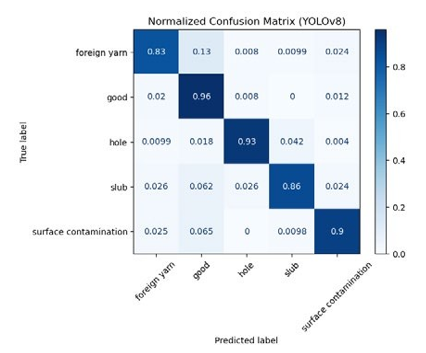

Three models, ResNet50, VGG19, and YOLOv8, were trained on the dataset. Their performance was evaluated with normalized confusion matrices, which show how accurately each model classified the fabric defect classes. The confusion matrices for ResNet50, VGG19, and YOLOv8 are shown below.

Figure 4: Normalized Confusion Matrix for ResNet50

Figure 5: Normalized Confusion Matrix for VGG19

Figure 6: Normalized Confusion Matrix for YOLOv8

Figure 7: Performance Metrics Comparison for ResNet50, VGG19, and YOLOv8

| Model | Accuracy | Precision | Recall | F1 Score | Prediction Time (ms) | Model Size (MB) |
|---|---|---|---|---|---|---|
| YOLOv8 | 0.8954 | 0.9024 | 0.8953 | 0.8989 | 4.2 | 10.3 |
| VGG19 | 0.8831 | 0.8908 | 0.8832 | 0.8841 | 25 | 246.8 |
| ResNet50 | 0.8600 | 0.8697 | 0.8597 | 0.8602 | 17 | 308.6 |

Table 2: Model Performance Metrics

The evaluation showed that YOLOv8 outperformed the other models in accuracy, precision, recall, F1 score, prediction time, and model size, achieving the highest F1 score (0.8989) with the shortest prediction time (4.2 ms) and the smallest model (10.3 MB). It was therefore selected as the best model for fabric inspection in this study.
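Table 2 does not state how precision, recall, and F1 were averaged across the five classes. One common choice is macro averaging, sketched below from a confusion matrix whose rows are true classes and columns are predicted classes; row normalization in the same orientation puts per-class recall on the diagonal, and is assumed here to be what "normalized" means in Figures 4 to 6. Two properties of macro averaging may help when reading the table: on a class-balanced test set, macro recall equals accuracy, which is consistent with the nearly identical Accuracy and Recall columns; and macro F1, the mean of per-class F1 scores, generally differs from the harmonic mean of macro precision and macro recall.

```python
from typing import Dict, List, Sequence


def row_normalize(cm: Sequence[Sequence[int]]) -> List[List[float]]:
    """Divide each row by its total, so each row sums to 1."""
    out = []
    for row in cm:
        s = sum(row)
        out.append([v / s if s else 0.0 for v in row])
    return out


def macro_metrics(cm: Sequence[Sequence[int]]) -> Dict[str, float]:
    """Accuracy and macro-averaged precision, recall, F1.

    cm[i][j] counts samples of true class i predicted as class j.
    """
    k = len(cm)
    total = sum(sum(row) for row in cm)
    precisions, recalls, f1s = [], [], []
    for c in range(k):
        tp = cm[c][c]
        predicted = sum(cm[t][c] for t in range(k))   # column total
        actual = sum(cm[c])                            # row total
        p = tp / predicted if predicted else 0.0
        r = tp / actual if actual else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(2 * p * r / (p + r) if p + r else 0.0)
    return {
        "accuracy": sum(cm[i][i] for i in range(k)) / total,
        "precision": sum(precisions) / k,
        "recall": sum(recalls) / k,
        "f1": sum(f1s) / k,                            # mean of per-class F1
    }


m = macro_metrics([[8, 2], [1, 9]])
```

With the toy matrix [[8, 2], [1, 9]], macro recall and accuracy are both 0.85, while macro F1 (about 0.850) and the harmonic mean of macro precision and recall (about 0.852) differ slightly.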

After training, the YOLOv8 model was integrated into a FastAPI application. When an image is sent to the application, it detects defects in real time and generates a report containing the fabric length and width, the class predictions, and the calculated fault rate. An example of the application's input and output is shown below.

Figure 8: Experimental Results
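The report described above can be assembled with Python's standard `json` module. The field names below are hypothetical, since the paper does not publish the report schema, and the sketch recomputes the fault rate and quality grade inline from the equation and thresholds in the Methodology so that it stands alone.

```python
import json
from collections import Counter
from typing import List


def build_report(length_m: float, width_m: float,
                 detections: List[str], total_points: int) -> str:
    """Return a JSON report for one inspected fabric section.

    `detections` holds one class label per detected defect; the field
    names used here are illustrative, not the application's actual schema.
    """
    rate = total_points * 0.84 * 100 / (length_m * width_m)
    if rate < 15:
        grade = "Good"
    elif rate <= 28:
        grade = "Moderate"
    else:
        grade = "Rejected"
    return json.dumps({
        "fabric_length_m": length_m,
        "fabric_width_m": width_m,
        "defect_counts": dict(Counter(detections)),
        "total_fault_points": total_points,
        "fault_rate": round(rate, 2),
        "quality": grade,
    }, indent=2)


# One hole (4 points) and two ordinary slubs (1 point each) on 50 m x 1.5 m.
report = build_report(50, 1.5, ["hole", "slub", "slub"], total_points=6)
```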

Conclusion

This project developed an efficient automated fabric inspection system using computer vision and deep learning. Using the YOLOv8 model, the system achieved high performance in identifying common fabric defects, including holes, foreign yarn, slubs, and surface contamination. The model was trained on a dataset combining factory-acquired images with the TILDA dataset and supports real-time defect detection with encouraging accuracy, precision, recall, and F1 score.

By integrating the model into a FastAPI-driven web application with an intuitive interface for assessing fabric quality from a live video feed, the system runs in real time. It is designed to be faster, more consistent, and more accurate than traditional manual inspection and to reduce human error, improving efficiency in textile production.

This solution illustrates the capabilities of AI-driven inspection systems in the textile sector and provides a foundation for further development of automated quality control. Future work could focus on refining the system to handle more complex patterns, reducing prediction time, and extending defect classification to a broader range of textile imperfections.

Acknowledgements

Sincere gratitude is extended to our supervisor, Dr. Mahima Weerasinghe, and co-supervisor, Mr. Dinith Primal, for their invaluable guidance and support throughout the project. Special thanks are also given to our MAS clients, Mr. Dias Senarathne and Mr. Madura Dissanayake, for their contributions and insights, which greatly aided the successful completion of this work.

References

- Khanal, S. R., Silva, J., Gonzalez, D. G., Castella, C., Perez, J. R. P., & Ferreira, M. J. (2022). Fabric hairiness analysis for quality inspection of pile fabric products using computer vision technology. Procedia Computer Science, 204, 591-598.

- Islam, A., Akhter, S., & Mursalin, T. E. (2008). Automated textile defect recognition system using computer vision and artificial neural networks. International Journal of Materials and Textile Engineering, 2(1), 110-115.

- Cheung, W. H., & Yang, Q. (2024). Fabric defect detection using AI and machine learning for lean and automated manufacturing of acoustic panels. Proceedings of the Institution of Mechanical Engineers, Part B: Journal of Engineering Manufacture, 238(12), 1827-1836.

- Mei, S., Wang, Y., & Wen, G. (2018). Automatic fabric defect detection with a multi-scale convolutional denoising autoencoder network model. Sensors, 18(4), 1064.

- Mo, D. (2022). Development of a computer vision model for quality inspection in textile industry.

- Rasheed, A., Zafar, B., Rasheed, A., Ali, N., Sajid, M., Dar, S. H., ... & Mahmood, M. T. (2020). Fabric defect detection using computer vision techniques: a comprehensive review. Mathematical Problems in Engineering, 2020(1), 8189403.

- Kumar, A. (2008). Computer-vision-based fabric defect detection: A survey. IEEE Transactions on Industrial Electronics, 55(1), 348-363.

- Saeidi, R. G., Latifi, M., Najar, S. S., & Saeidi, A. G. (2005). Computer vision-aided fabric inspection system for on-circular knitting machine. Textile Research Journal, 75(6), 492-497.

- Divyadevi, R., & Kumar, B. V. (2019, January). Survey of automated fabric inspection in textile industries. In 2019 International Conference on Computer Communication and Informatics (ICCCI) (pp. 1-4). IEEE.

- Jeyaraj, P. R., & Samuel Nadar, E. R. (2019). Computer vision for automatic detection and classification of fabric defect employing deep learning algorithm. International Journal of Clothing Science and Technology, 31(4), 510-521.