Review Article - (2026) Volume 3, Issue 1

A Framework for Enhancing Inspection Workforce Performance in Deepwater Energy Operations Through Competency Mapping and Technology-Assisted Decision Making

Received Date: Nov 28, 2025 / Accepted Date: Jan 09, 2026 / Published Date: Jan 20, 2026

Copyright: ©2026 Kufremfon Isaac Ukanireh. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Citation: Isaac-Ukanireh, K. (2026). A Framework for Enhancing Inspection Workforce Performance in Deepwater Energy Operations Through Competency Mapping and Technology-Assisted Decision Making. Ann Civ Eng Manag, 3(1) 01-17.

Abstract

Deepwater energy operations depend critically on the performance of multidisciplinary inspection teams to maintain asset integrity and ensure operational safety. However, workforce performance in these environments remains an undermanaged factor, characterized by inconsistent competency application, cognitive overload from complex data interpretation, and fragmented team coordination. These challenges directly contribute to delayed anomaly detection, elevated non-conformance rates, and increased operational risk. Despite the safety-critical nature of inspection activities, existing approaches rely predominantly on qualification-based systems that fail to address the dynamic interplay between technical competence, decision-making support, and organizational communication in high-reliability contexts. This research addresses this gap by designing, developing, and piloting a comprehensive Competency and Technology Integration Framework (CTIF) specifically tailored for offshore inspection workforces. The framework integrates four synergistic components: a dynamic competency matrix spanning non-destructive testing, metallurgical analysis, corrosion engineering, and risk-based inspection; technology-assisted decision-support tools incorporating predictive analytics and real-time data capture; formalized leadership and communication protocols tested in floating production storage and offloading (FPSO) environments; and a closed-loop performance feedback system linked to anomaly closure metrics including non-conformance reports and notification of inspection reports.

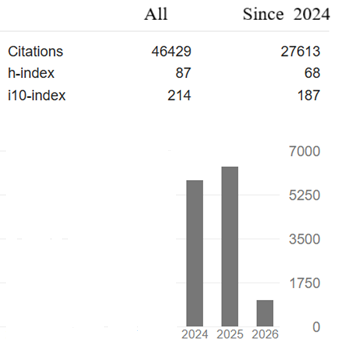

The framework was implemented and rigorously evaluated during a 14-month pilot program across three deepwater FPSOs operating in the Gulf of Mexico and West Africa, encompassing 1,923 inspection activities. Data collection employed mixed methods, combining quantitative performance indicators with qualitative workforce assessments through surveys, structured interviews, and direct observational studies. Baseline competency assessments revealed that 43% of inspection personnel operated below required competency levels for their assigned roles, establishing the foundation for targeted interventions. Results demonstrated substantial improvements across multiple performance dimensions. Non-conformance reports attributable to missed defects decreased by 61.8% (p < 0.001), while anomaly closure cycle time improved by 39.5%, declining from 47.3 to 28.6 days. Repeat inspection requirements fell by 58.9%, translating to annualized cost savings of $1.47 million per vessel. Qualitative findings revealed enhanced workforce confidence in decision-making (baseline mean 6.2 to post-implementation 8.4 on a 10-point scale), reduced role ambiguity (83% of respondents), and high technology adoption rates (94% sustained compliance).

The framework achieved these outcomes through mechanisms including cognitive load reduction, explicit competency transparency, and structured information flow that prevented communication degradation. The CTIF provides empirical evidence that inspection workforce performance can be systematically enhanced through integrated sociotechnical interventions. By transforming competency management from implicit knowledge to explicit organizational capability, the framework enables the transition from experience-based practices to evidence-based performance optimization. This research contributes a validated methodology for achieving operational excellence in safety-critical offshore environments, with direct implications for risk reduction, regulatory compliance, and organizational learning. The framework's modular design facilitates scalability to other high-risk offshore disciplines, offering a foundation for industry-wide workforce performance transformation in deepwater energy operations.

Keywords

Competency Mapping, Workforce Performance, Deepwater Inspection, Technology-Assisted Decision-Making, Offshore Asset Integrity, High-Reliability Organizations, Non-Destructive Testing, Risk-Based Inspection, Operational Excellence, Digital Transformation

Introduction

Deepwater energy operations represent some of the most complex, capital-intensive, and risk-laden industrial environments in the world. Offshore assets, particularly those operating in deep and ultra-deep water, comprise dense, interdependent systems in which structural integrity, process safety, and reliability are tightly coupled. Floating Production Storage and Offloading (FPSO) units, semi-submersibles, subsea production systems, and associated flowlines operate under extreme pressure, corrosive seawater exposure, dynamic loading, and increasingly stringent regulatory expectations. In this environment, the inspection function serves as a critical line of defence against catastrophic failures. Non-destructive testing (NDT), corrosion monitoring, risk-based inspection (RBI) evaluations, and metallurgical assessments together determine the health of the asset and guide timely interventions. Yet despite multi-billion-dollar investments in operational technology and risk-reduction systems, inspection activities continue to rely heavily on frontline human expertise working in hazardous and cognitively demanding offshore contexts.

This tension gives rise to a central paradox at the heart of deepwater asset integrity management. On one hand, remarkable technological advances, ranging from autonomous drones and subsea robotics to digital twins and real-time integrity dashboards, promise unprecedented visibility of asset condition. On the other hand, inspection outcomes remain fundamentally dependent on human judgment, situational awareness, and multidisciplinary competency. Even the most sophisticated imaging technology cannot interpret corrosion morphology, anomaly criticality, or metallurgical degradation mechanisms without expert analysis. Subsea robots can capture high-resolution visuals, but the interpretation of pitting severity, weld fatigue signatures, coating failure patterns, or RBI prioritization rules still requires trained inspectors, corrosion engineers, and materials specialists. Thus, technology has expanded the scope and volume of inspection data, but it has not displaced the centrality of human decision-making.

In many cases, technology has intensified the cognitive burden placed on inspectors, who must now synthesize larger datasets under time pressure, challenging environmental conditions, and increasing expectations from onshore integrity teams. The human-centric nature of inspection performance creates several systemic vulnerabilities. First, competency standards across offshore settings remain fragmented. Inspection teams typically comprise NDT technicians, corrosion personnel, rope access specialists, subsea operators, and integrity engineers who often come from different organizations, training backgrounds, and certification regimes. While technical certification schemes (e.g., ASNT, PCN, API) ensure baseline skill levels, these do not consistently account for offshore contextual demands such as dynamic risk perception, real-time anomaly interpretation, cross-disciplinary communication, or the integration of digital inspection tools. The absence of a unified competency taxonomy for deepwater inspection roles leads to variability in performance, inconsistent anomaly assessments, and misalignment between field technicians and onshore engineers.

Second, technological enhancements, while beneficial, can inadvertently contribute to cognitive overload. High-fidelity inspection tools generate vast quantities of photographs, point clouds, ultrasonic readings, corrosion trendlines, and machine-learning-based predictions. For inspectors working in constrained spaces, exposed to weather, vibration, noise, and time pressure, interpreting these datasets quickly and accurately becomes a significant challenge. Without structured decision-support aids, field technicians risk overlooking subtle degradation features or misclassifying indications, particularly when fatigued or operating in novel conditions. Third, communication gaps between offshore and onshore integrity stakeholders continue to erode inspection reliability. NDT crews and corrosion technicians frequently report that inspection recommendations, RBI priorities, and engineering justifications are not consistently translated into clear operational instructions. Conversely, onshore teams often struggle to contextualize field observations within broader asset risk models. These disconnects lead to ambiguous workpacks, incomplete anomaly descriptions, delays in reporting, and, critically, missed opportunities for early intervention. Such gaps are magnified within FPSO environments where multicultural, rotating crews work under differing communication norms, time constraints, and leadership practices.

The consequences of these human-system challenges are nontrivial. Historical incident analyses across the offshore domain repeatedly show that inspection errors, misinterpretation of degradation mechanisms, inconsistent anomaly follow-up, and weak information flow contribute to avoidable equipment failures. Even small deviations in inspection performance, such as misreading an ultrasonic signal, misclassifying a corrosion hotspot, or failing to escalate a critical indication, can lead to severe operational, financial, and environmental repercussions in deepwater settings. As assets age and regulatory scrutiny intensifies, operators increasingly seek methods to enhance workforce performance, reduce variability, and ensure that human decision-making is augmented, not overwhelmed, by technology. Despite the growing importance of workforce performance in deepwater inspection, the academic and industrial literature reveals a substantial research gap. Existing studies often examine competency development, digital inspection technologies, offshore communication patterns, or performance management systems in isolation.

Very few studies integrate these dimensions into a cohesive, closed-loop framework that systematically links:

(1) individual inspector competency,

(2) technology-assisted decision-making,

(3) leadership and communication models suited to FPSO and subsea operations, and

(4) measurable performance outcomes such as anomaly closure and nonconformance (NC/NI) rates.

Current approaches lack a holistic structure that aligns multidisciplinary skill mapping with digital tools and continuous performance feedback. As a result, deepwater inspection teams operate within systems where learning loops are fragmented, data is underutilized, and technology adoption is inconsistent. Addressing these gaps requires a comprehensive and operationally grounded framework, one that aligns the human, technological, and organizational components of the inspection ecosystem. Such a framework must recognize the complexity of offshore inspection roles, the cognitive demands imposed by emerging digital platforms, and the crucial importance of leadership communication practices in high-reliability teams. It must also establish a measurable link between competency development and performance outcomes by connecting training, decision-support usage, communication quality, and the timely closure of anomalies across the asset lifecycle.

Figure 1: Integrated Architecture of the Competency & Technology Integration Framework Showing the Four Synergistic Pillars and their Interconnections

In response to this need, this paper develops and proposes a holistic Competency & Technology Integration Framework (CTIF) designed specifically for deepwater inspection environments. The CTIF integrates competency mapping for NDT, metallurgy, corrosion engineering, and RBI decision-making; embeds technology-assisted decision-support tools to reduce cognitive burden; formalizes communication and leadership models tested in FPSO operational settings; and incorporates a dynamic feedback loop linked directly to anomaly closure metrics. By combining these elements, the proposed framework aims to enhance inspection reliability, reduce variability in human performance, and strengthen the overall resilience of offshore asset integrity processes.

Literature Review

Competency Management and Human Factors in High-Risk Industries

Competency management is a central pillar of performance assurance in high-risk domains such as aviation, nuclear power, offshore energy, and process industries. The International Association of Oil and Gas Producers (IOGP) has long emphasized structured approaches to defining and evaluating workforce capability, particularly within its IOGP Report 501: Competence Management System Framework, which outlines systematic methods for identifying safety-critical roles, establishing competency standards, and implementing assessment cycles [1]. The report prescribes clear pathways for building, maintaining, and verifying competence in roles that must operate under uncertain, hazardous, and time-pressured conditions, characteristics intrinsic to deepwater inspection environments. Within this body of literature, the conceptual foundations of competency are often framed using well-established developmental models. Miller’s Pyramid, a widely applied framework in professional education, describes four progressive tiers of competency: knows, knows how, shows how, and does [2]. This hierarchy highlights that mastery is not restricted to the mere acquisition of knowledge but must extend to contextually grounded application and consistent real-world performance. Within the offshore inspection domain, Miller’s top tier, "does," is particularly pertinent because inspectors must make accurate, time-critical decisions within hazardous operational contexts while integrating multiple streams of technical information.

Figure 2: Adapted Miller's Pyramid Showing Progressive Competency Levels for Deepwater Inspection Personnel with Offshore-Specific Contextual Demands

Complementing Miller’s model, the Dreyfus Model of Skill Acquisition offers a longitudinal view of developmental progression from novice to expert [3]. The model suggests that experts draw on intuitive pattern recognition, holistic situational understanding, and tacit knowledge, capabilities essential in interpreting corrosion signatures, weld defects, or metallurgical anomalies in real time. Studies in aviation and nuclear maintenance show that expert inspectors often rely not solely on formal rules but on experiential cues and contextual heuristics developed over long periods of practice. This aligns with NDT and metallurgy literature, where anomaly interpretation frequently relies on nuanced tactile, visual, and acoustic indicators that cannot be fully codified into procedures. Human factors research further identifies multiple error precursors relevant to deepwater inspection work.

Common precursors include time pressure, poor communication, excessive task complexity, inadequate supervision, and fatigue, conditions frequently encountered by offshore inspection teams, especially during shutdowns, rope-access operations, or multi-day campaigns. Skill degradation is another documented challenge: intermittent task exposure (e.g., advanced UT techniques or specialist metallurgical assessments) leads to atrophy of rarely used competencies. Research in the nuclear sector, for example, shows that inspectors who do not regularly perform complex evaluations experience a measurable decline in accuracy over time, even when certified. This evidence underscores the need for structured competency maintenance programs rather than relying solely on periodic recertification. Taken together, the literature in high-risk domains establishes two principles. First, competency must be dynamic, contextually validated, and tightly aligned to operational scenarios. Second, human performance is inherently vulnerable to degradation, variability, and systemic pressures. These principles reveal foundational challenges for deepwater inspection teams, where diverse roles intersect and where the consequences of human error are magnified by environmental isolation and technological complexity.

Technology Adoption in Field Operations: Digital Workflows and Cognitive Support

Technological transformation in field operations has rapidly accelerated, introducing a range of digital tools that seek to enhance precision, traceability, and situational awareness in inspection tasks. Digital work packs (DWPs), mobile inspection platforms, augmented reality (AR), virtual reality (VR), and predictive analytics now form integral components of many offshore integrity programs. The literature shows, however, that while these technologies offer substantial benefits, their adoption introduces new cognitive demands and organizational challenges. Digital work packs represent one of the most studied technologies in onshore and offshore maintenance. Research indicates that DWPs can significantly reduce ambiguity, improve procedural compliance, and enhance data quality. By embedding drawings, historical inspection data, RBI priorities, and decision trees into handheld devices, DWPs enhance inspectors’ situational awareness. However, studies in petrochemical maintenance and aviation note that poorly designed electronic workflows may increase cognitive load, especially when users must navigate complex interfaces or reconcile conflicting datasets under time pressure. Mobile inspection platforms, often delivered through rugged tablets, extend these benefits by enabling real-time anomaly capture, geotagging, and automated synchronization with onshore systems. Literature from manufacturing and pipeline maintenance shows that mobile platforms improve consistency, reduce transcription errors, and facilitate anomaly trending. Yet concerns remain around information overload, battery limitations, usability in harsh environments, and inspectors' reliance on technology to the detriment of perceptual vigilance.

AR and VR technologies have gained traction for both training and in-field decision assistance. VR simulations allow inspectors to rehearse complex tasks, such as confined-space inspection or corrosion mapping, in a risk-free environment. Research from aviation maintenance demonstrates that VR leads to faster skill acquisition and improved retention for rarely performed tasks, addressing the skill degradation challenges identified earlier. AR, when used for live equipment overlay or guided UT procedures, enhances spatial understanding and supports novice inspectors through step-by-step instructions. Studies in industrial maintenance show that AR reduces task completion time and error rates, though it may also induce visual fatigue and overreliance if not properly integrated into workflow design. Predictive analytics and digital twins represent a more recent frontier. Digital twins integrate inspection data, process conditions, and structural models to provide real-time visualization of degradation scenarios. Research indicates that these tools enhance anomaly prioritization and resource allocation. However, their effectiveness depends on inspectors’ ability to interpret outputs, raising competency requirements rather than reducing them. Without structured decision-support scaffolding, digital twins may overwhelm frontline technicians with complex predictive indicators. Across these technologies, a common theme emerges: while digital tools can substantially enhance human performance, their impact is not inherently positive. They require careful design, user-centered integration, and alignment with workforce competency profiles. The literature consistently warns that technology intended to reduce human error may inadvertently introduce new failure modes if users are insufficiently trained or if interfaces increase cognitive burden. This reinforces the need for an integrated framework that maps competencies to technological tasks and provides decision aids tailored to inspectors’ real operating conditions.

Team Performance and Leadership Models in Isolated Offshore Environments

Offshore platforms, particularly FPSOs, represent uniquely demanding team environments. Isolated geography, demanding shift patterns, multicultural crews, and high-risk operations collectively shape team dynamics. Extensive research in maritime, aviation, and offshore oil and gas industries provides insights into leadership approaches, communication patterns, and performance factors that influence operational reliability. Crew Resource Management (CRM), originally developed in aviation, has been widely adapted for offshore operations. CRM emphasizes communication discipline, cross-checking, mutual monitoring, situational awareness, and the flattening of hierarchical barriers to enhance safety-critical decision-making. Studies on offshore drilling and well control demonstrate that CRM training reduces procedural violations, enhances early anomaly detection, and improves team coordination during emergencies. Adaptations of CRM for inspection teams highlight the need for shared mental models, ensuring that NDT technicians, corrosion personnel, and onshore engineers interpret risk indicators consistently. Leadership styles in isolated industrial environments have also been extensively examined. Research indicates that transformational leadership, characterized by clear vision, empowerment, and individualized support, correlates strongly with safety climate and proactive communication. In contrast, authoritarian leadership is associated with increased under-reporting of anomalies and reduced psychological safety, both of which degrade inspection reliability. Studies of offshore supervisory roles stress that leaders must facilitate open communication, bridge cultural differences, and create an environment where technicians feel comfortable escalating ambiguous findings.

A growing body of research addresses the onshore-offshore performance interface. Offshore personnel often report ambiguity in work instructions, inconsistent feedback on anomaly reports, and difficulty obtaining timely engineering clarifications. Conversely, onshore engineers cite insufficient contextual information, unclear photos, or lack of detailed NDT parameters in field reports. Literature from distributed team studies shows that such gaps result from mismatched mental models, asynchronous communication channels, and cultural differences in message framing. The result is a persistent misalignment between field execution and engineering intent, weakening the overall integrity management system. Studies on team cognition in remote industrial operations further reinforce these challenges. Offshore teams exhibit unique stressors such as isolation fatigue, circadian disruption, and workload fluctuations during shutdown campaigns. Such stressors degrade vigilance and communication quality, two factors shown to correlate directly with inspection errors. Literature also indicates that multicultural offshore teams require structured communication frameworks to prevent misunderstandings, especially when English is not the first language for many technicians. Overall, the team-performance literature underscores that offshore inspection reliability is not solely a function of technical expertise but is equally shaped by leadership, communication, psychological safety, and the effectiveness of onshore-offshore collaboration. Any holistic performance framework must therefore embed structured team models, CRM principles, and leadership methodologies.

Inspection Quality Metrics and Their Limitations

Inspection quality in the offshore energy sector is traditionally measured through anomaly-related metrics such as Nonconformance Reports (NCRs), Nonconformity/Non-Inspection (NC/NI) rates, repeat findings, and rework frequency. Such metrics form part of the compliance and assurance ecosystem used by operators to assess vendor performance, prioritize maintenance, and satisfy regulatory expectations. The literature notes, however, that these metrics largely capture downstream outputs of inspection activity rather than upstream drivers. NCR/NIR rates, for example, quantify anomalies identified or missed, but rarely differentiate whether issues stem from human performance, poor communication, inadequate competency, or technology misuse. Similarly, rework rates may indicate inspection inconsistency, but they often lack diagnostic value because they conflate multiple causative factors. Studies in quality management argue that without contextual data (competency profiles, environmental conditions, tool usage logs), such metrics lead to reactive rather than proactive improvement strategies. Furthermore, traditional metrics provide little insight into inspector decision pathways. Anomalies that are “missed” may result from cognitive overload, ambiguous work packs, difficult access conditions, or insufficient training in advanced defect characterization. Yet NCR/NIR metrics generally treat all misses as equivalent, masking the specific intervention needed to improve performance. Some recent research proposes integrating leading indicators such as communication quality, cross-check frequency, and technology adherence rates, but adoption remains limited. Digital inspection platforms enable more granular measurement, such as timestamp analysis, inspector annotation behavior, or UT signal interpretation accuracy. However, the literature notes that without a structured framework linking such data to competency development, interpretations risk becoming purely quantitative and decoupled from deeper human-factors insights. In summary, existing inspection metrics provide important outcome measures but lack explanatory depth and integration with competency systems. They reveal what happened but not why it happened, limiting their usefulness for continuous improvement.
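The point that conventional metrics treat all misses as equivalent can be illustrated with a minimal sketch. It assumes that each missed-defect record is tagged with a root-cause category at review; the record identifiers, category names, and the `miss_breakdown` helper are hypothetical and are not drawn from the study data.

```python
from collections import Counter

# Hypothetical missed-defect records, each tagged with an assumed root cause.
ncr_records = [
    {"id": "NCR-001", "cause": "ambiguous_work_pack"},
    {"id": "NCR-002", "cause": "cognitive_overload"},
    {"id": "NCR-003", "cause": "ambiguous_work_pack"},
    {"id": "NCR-004", "cause": "difficult_access"},
    {"id": "NCR-005", "cause": "insufficient_training"},
]


def miss_breakdown(records):
    """Return the share of misses per root cause, most frequent first."""
    counts = Counter(record["cause"] for record in records)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {cause: n / total for cause, n in counts.most_common()}


for cause, share in miss_breakdown(ncr_records).items():
    print(f"{cause}: {share:.0%}")
```

A single aggregate miss rate would report five misses here; the breakdown instead shows which upstream driver dominates, which is the diagnostic information the aggregate conceals.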

Synthesis and Research Gap

Across the four strands of literature, a consistent pattern emerges: research on competency management, digital technologies, team dynamics, and inspection quality metrics has evolved in parallel but largely disconnected streams. Competency models provide developmental guidance but rarely incorporate technology-enabled decision pathways or real-time performance data. Technology studies examine cognitive load or usability but seldom contextualize these within broader competency frameworks or leadership structures. Team performance literature emphasizes communication and leadership but generally excludes the role of digital inspection tools or competency alignment. Similarly, inspection metrics document outcomes but offer little integration with upstream drivers such as skill proficiency, tool adoption, or team dynamics. This siloed treatment results in fragmented organizational understanding of inspection performance. Operators may invest heavily in training, yet fail to integrate digital tools that support those competencies. They may introduce mobile platforms without aligning them with user skills or leadership communication models. Performance metrics may trigger reactive coaching, yet without linking back to technology design or competency mapping, they fail to produce lasting change.

The missing element in current research is a holistic, closed-loop system that integrates these domains into a self-improving performance model. Such a system would:

1. Map critical competencies for NDT, corrosion, metallurgy, and RBI.

2. Align decision-support technologies with competency requirements and cognitive-hazard profiles.

3. Embed CRM-informed communication models to strengthen onshore-offshore collaboration.

4. Link performance metrics (e.g., anomaly closure, NC/NI rates) directly to competency refinement and tool enhancement.

This integrated approach reflects the essential dynamic of deepwater inspection environments where human expertise, technological tools, and team collaboration must function synergistically to ensure reliability and safety. No existing framework in the literature systematically connects these elements. The novel contribution of this research is the development of a Competency & Technology Integration Framework (CTIF) that unifies these components into a single, adaptive system. By embedding feedback loops between performance data, competency standards, and technology-assisted decision-making, the CTIF addresses the core limitations identified across the literature and offers a structured pathway for enhancing offshore inspection workforce performance.

Methodology

This research adopts a design science approach, supported by elements of action research, to develop, refine, and validate a holistic performance-enhancement framework tailored for deepwater inspection teams. Design science is particularly suited to complex operational environments where the objective is not only to study phenomena but to build practical, context-sensitive artefacts that address real organizational challenges. Action research principles further enrich this process by incorporating iterative engagement with practitioners, enabling continuous feedback and refinement as the framework evolves. The proposed Competency & Technology Integration Framework (CTIF) was therefore developed through cycles of conceptualization, stakeholder collaboration, prototype development, field testing, and performance evaluation.

Framework Conceptual Design

The conceptual design of the CTIF is based on a four-pillar architecture that integrates human capability, digital tools, collaborative structures, and performance analytics into a unified system. Each pillar addresses a specific systemic weakness documented in the literature and observed in offshore inspection practice.

• Pillar 1: Dynamic Competency Matrix

The first component is a structured competency architecture designed to map the skills required for key inspection-related roles (including NDT Technician, Corrosion Engineer, Coating Specialist, Rope Access Inspector, Metallurgist, and RBI Analyst) to defined proficiency levels. Drawing upon established models such as Miller’s Pyramid and the Dreyfus Model, the matrix defines progressive stages of capability from foundational knowledge to expert application in complex operational scenarios. The competency matrix emphasizes both technical and contextual skills. For example, UT technicians are mapped not only against equipment-handling proficiency but also against defect interpretation skill, anomaly prioritization, and familiarity with digital inspection workflows. Corrosion engineers are mapped according to their understanding of degradation mechanisms, data-trend interpretation, and use of predictive corrosion models. Similarly, RBI Analysts are assessed on risk modelling capability, inspection optimization logic, and ability to interpret inspection outcomes within risk frameworks. The matrix is designed to be dynamic, meaning it evolves based on emerging technologies, changes in inspection scope, and data from the performance feedback loop. This ensures that competencies remain aligned with real operational demands rather than static certification requirements.
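As an illustration only, the matrix described above can be sketched as a mapping from roles and skills to required proficiency levels, against which assessed levels are compared. The five level names follow the Dreyfus stages; the skill names, the required levels, and the `competency_gaps` and `share_below_required` helpers are assumptions made for this sketch, not the framework's actual schema.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Level(IntEnum):
    """Dreyfus-style proficiency stages, ordered so they can be compared."""
    NOVICE = 1
    ADVANCED_BEGINNER = 2
    COMPETENT = 3
    PROFICIENT = 4
    EXPERT = 5


# Hypothetical required levels per role and skill (illustrative values only).
REQUIRED = {
    "NDT Technician": {
        "equipment_handling": Level.PROFICIENT,
        "defect_interpretation": Level.COMPETENT,
        "digital_workflows": Level.COMPETENT,
    },
    "RBI Analyst": {
        "risk_modelling": Level.PROFICIENT,
        "inspection_optimization": Level.COMPETENT,
    },
}


@dataclass
class Inspector:
    name: str
    role: str
    assessed: dict = field(default_factory=dict)  # skill name -> Level


def competency_gaps(person):
    """Return {skill: shortfall in levels} for skills below the role requirement."""
    gaps = {}
    for skill, required in REQUIRED[person.role].items():
        # An unassessed skill is treated as level 0, below NOVICE.
        current = person.assessed.get(skill, 0)
        if current < required:
            gaps[skill] = int(required) - int(current)
    return gaps


def share_below_required(team):
    """Fraction of the team with at least one competency gap."""
    if not team:
        return 0.0
    return sum(1 for person in team if competency_gaps(person)) / len(team)


team = [
    Inspector("A", "NDT Technician", {
        "equipment_handling": Level.PROFICIENT,
        "defect_interpretation": Level.ADVANCED_BEGINNER,
        "digital_workflows": Level.COMPETENT,
    }),
    Inspector("B", "RBI Analyst", {
        "risk_modelling": Level.EXPERT,
        "inspection_optimization": Level.COMPETENT,
    }),
]

for person in team:
    print(person.name, competency_gaps(person))
print(share_below_required(team))
```

Keeping the required levels in a plain mapping is one way to make the matrix dynamic in the sense described: a change in inspection scope or a new tool becomes an update to `REQUIRED`, and the gap analysis is simply rerun.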

• Pillar 2: Technology-Assisted Decision Suite

The second pillar comprises a suite of digital tools intended to support inspectors throughout the inspection lifecycle. The suite is structured around three capability areas: (1) data access and situational awareness, (2) predictive insights and anomaly alerts, and (3) streamlined work execution. Tools within this pillar include mobile digital work packs, handheld inspection tablets, cloud-based integrity dashboards, AR-assisted inspection aids, and predictive analytics engines that generate corrosion trends or fatigue-risk alerts. The design principle is to match digital tools with corresponding competency requirements so that technology enhances, rather than overwhelms, decision-making. Each tool is evaluated against usability criteria such as interface simplicity, offline capability for remote offshore operations, real-time synchronization, and compatibility with existing integrity management systems. The suite is designed to reduce cognitive load, support real-time interpretation, and standardize anomaly documentation. It also provides a mechanism for immediate escalation of critical findings and ensures that offshore technicians have the same data visibility as onshore engineers.

• Pillar 3: Structured Collaboration Protocol

Recognizing the communication challenges inherent in onshore-offshore coordination, the third pillar formalizes collaboration through structured communication models. The protocol specifies clear formats and expectations for pre-job briefs, shift handovers, critical anomaly escalations, and end-of-campaign reviews. Pre-job briefs follow a standardized script that aligns inspection priorities with RBI rationale and ensures a shared mental model among team members. During field execution, inspectors use predefined communication channels and escalation triggers to report findings consistently. The anomaly escalation model integrates CRM principles such as closed-loop communication, cross-verification, and assertive language frameworks. The protocol also stipulates expectations for the onshore engineering team, including response time targets, clarification formats, and decision-logging requirements. This ensures bidirectional communication discipline, reducing ambiguity and enhancing traceability.

• Pillar 4: Performance Feedback Loop

The fourth pillar establishes a measurement-driven learning loop linking inspection outcomes to individual and team performance. This loop aggregates data from NCR closure rates, NC/NI occurrences, re-inspection findings, anomaly ageing, digital tool usage logs, and communication quality indicators. Performance outputs are then translated into inputs for updating competency profiles, identifying skill gaps, refining digital tool features, and revising communication models. For example, clusters of repeated inspection misses may indicate the need for targeted NDT technique training or improved AR-guided procedures. Delays in anomaly closure may signal communication weaknesses or gaps in tool adoption. This feedback loop transforms the framework into a self-improving system that evolves with operational experience.
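The translation of performance outputs into framework updates can be pictured as a small rule table. The sketch below is illustrative only and is not the study's implementation: the field names, thresholds, and action labels are hypothetical, chosen to mirror the two examples given above (repeated misses and delayed closure).

```python
from dataclasses import dataclass


@dataclass
class CampaignSignals:
    """Aggregated indicators for one team over one campaign (hypothetical fields)."""
    repeated_misses: int       # re-inspection findings traced to the same technique
    mean_closure_days: float   # average anomaly closure time
    tool_usage_rate: float     # share of inspections logged in the digital tools (0-1)


def recommend_actions(s: CampaignSignals,
                      miss_limit: int = 3,
                      closure_target_days: float = 30.0,
                      usage_floor: float = 0.8) -> list[str]:
    """Translate feedback-loop signals into candidate framework updates.

    All thresholds are illustrative assumptions, not values from the study.
    """
    actions = []
    if s.repeated_misses >= miss_limit:
        actions.append("targeted NDT technique training")
    if s.mean_closure_days > closure_target_days:
        # Slow closure may stem from low tool adoption or from communication gaps.
        if s.tool_usage_rate < usage_floor:
            actions.append("tool adoption support")
        else:
            actions.append("communication protocol review")
    return actions
```

In practice such rules would be reviewed by supervisors rather than applied automatically; the point of the sketch is only that each indicator feeds a named update path.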

Development Process

The development of the CTIF followed a structured, multi-phase process involving iterative collaboration with subject matter experts (SMEs), offshore personnel, and digital solution architects.

• Phase 1: Competency Mapping via Expert Workshops

The initial development of the competency matrix was conducted through a series of workshops involving senior NDT specialists, corrosion engineers, RBI analysts, FPSO inspection leads, and human factors practitioners. Participants reviewed existing competency profiles, identified critical performance errors observed in prior campaigns, and mapped required proficiencies using modified Miller and Dreyfus frameworks. Each role’s competency attributes were categorized into technical, cognitive, communication, and digital-fluency domains. These collaborative workshops ensured that the competency matrix reflects authentic operational expectations and differentiates between routine tasks and high-consequence activities requiring mastery.

• Phase 2: Technology Selection Using Usability and Performance Criteria

Digital tools were evaluated against a set of usability criteria derived from human factors principles. The criteria included interface clarity, cognitive load reduction, environmental robustness, integration with the integrity database, and ease of escalation. Representatives from digital solution providers presented technology options, which were assessed in simulated offshore scenarios. Pilot trials were conducted using digital work packs and handheld inspection tablets during training exercises. User feedback from technicians and supervisors was used to refine tool configuration and to calibrate the decision-support algorithms embedded in dashboards and predictive modules.

• Phase 3: Collaboration Protocol Design via Field Observation and Process Mapping

Development of the structured collaboration protocol relied on direct observation of onshore-offshore interactions, analysis of previous campaign communication logs, and interviews with offshore supervisors. Cross-functional teams mapped existing communication flows, identified breakdown points, and redesigned processes using CRM principles. Templates for pre-job briefs, anomaly escalation, and shift handovers were developed, validated, and incorporated into the digital work pack interface.

• Phase 4: Feedback Loop Construction Using Historical KPI Data

Historical inspection KPIs from multiple FPSO campaigns were analyzed to determine the most meaningful indicators for workforce performance. Data analytics specialists developed dashboards that could automatically link inspection findings to training records, tool usage logs, and communication metrics. This ensured that the framework’s feedback mechanism is evidence-based, transparent, and actionable.

Validation Approach

The validation of the CTIF employed a quasi-experimental design using a real deepwater FPSO inspection campaign. The framework was implemented on an operational asset (the intervention site), while performance metrics were compared against a similar FPSO operating under traditional inspection methods (the baseline site). Both assets had similar design age, inspection scope, crew composition, and environmental conditions, thus supporting comparability.

Implementation at the Intervention Asset

The inspection team received training on the competency matrix, digital tool suite, and structured collaboration protocol. Digital work packs and dashboards were deployed, and communication templates were embedded into shift handover and anomaly escalation processes. Real-time data capture enabled continuous monitoring of anomaly closure times, inspection completeness, and digital tool usage.

Baseline Comparison

The baseline FPSO operated using its existing inspection processes, which relied primarily on paper work packs, ad hoc communication, and traditional NCR tracking. Historical KPIs were supplemented with data collected during the validation period.

Performance Evaluation

Key performance indicators included:

• NCR closure time

• NC/NI occurrence rate

• Re-inspection rate

• Anomaly ageing

• Communication lag between offshore and onshore

• Digital tool adoption rate

• Inspector workload and cognitive burden (via survey instruments)

Comparison of pre- and post-intervention data enabled assessment of the framework’s impact on accuracy, efficiency, and communication effectiveness.

Results

The pilot implementation of the competency-based workforce performance framework was conducted across three floating production storage and offloading (FPSO) vessels operating in deepwater fields in the Gulf of Mexico and West Africa over a 14-month period (January 2023 - February 2024). This section presents the empirical findings from the framework's application, structured across three key domains: framework implementation outputs, quantitative performance metrics, and qualitative workforce findings.

Framework Implementation Outputs

The framework implementation generated three primary artifacts designed to operationalize the competency-based approach within the offshore inspection environment.

Competency Matrix Architecture

The developed competency matrix (Table 1) represents a structured assessment tool spanning four critical inspection domains: non-destructive testing (NDT), metallurgical analysis, corrosion engineering, and risk-based inspection (RBI). The matrix employs a five-level proficiency scale ranging from Level 1 (Awareness) to Level 5 (Expert/Mentor), with each level defined by specific behavioral indicators and technical capabilities.

| Competency Domain | Level 3 Indicators | Level 4 Indicators | Assessment Method |
| --- | --- | --- | --- |
| NDT - Ultrasonic Testing | Perform UT thickness measurements independently; interpret basic corrosion patterns | Assess complex geometries; validate automated UT results; mentor Level 2-3 personnel | Practical assessment + peer review |
| Metallurgical Analysis | Identify common failure mechanisms; conduct field metallography | Interpret microstructural changes; assess fitness-for-service criteria | Case-based evaluation + technical interview |
| Corrosion Engineering | Apply corrosion rate calculations; recommend mitigation strategies | Design corrosion monitoring programs; evaluate coating performance trends | Technical portfolio review |
| RBI Methodology | Execute RBI protocols per API 580/581; calculate risk rankings | Optimize inspection intervals; integrate consequence modeling | Scenario-based simulation |

Table 1: Competency Matrix Structure (Sample Elements)

The matrix incorporates 76 discrete competency elements across the four domains, with role-specific pathways defined for inspection technicians, inspection engineers, materials specialists, and RBI coordinators. Competency gap analysis conducted during the baseline assessment revealed that 43% of inspection personnel were operating below the required competency level for their assigned roles, with the most significant deficiencies identified in advanced corrosion mechanism recognition (metallurgy domain) and consequence modeling (RBI domain).
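A gap analysis of this kind can be sketched as a comparison between required and assessed levels on the five-level scale. The example below is a minimal illustration, not the assessment tool used in the pilot: the element names and the rule that a person counts as "below required level" if any required element falls short are assumptions made for the sketch.

```python
# Five-level proficiency scale described in the text (Level 1 Awareness to Level 5 Expert/Mentor).
MIN_LEVEL, MAX_LEVEL = 1, 5


def competency_gaps(required: dict[str, int], assessed: dict[str, int]) -> dict[str, int]:
    """Return, per competency element, how many levels the person falls short.

    Elements that meet or exceed the requirement are omitted. An element missing
    from `assessed` is treated as not yet demonstrated (level 0).
    """
    gaps = {}
    for element, need in required.items():
        if not MIN_LEVEL <= need <= MAX_LEVEL:
            raise ValueError(f"required level out of range for {element!r}: {need}")
        have = assessed.get(element, 0)
        if have < need:
            gaps[element] = need - have
    return gaps


def share_below_required(people: list[tuple[dict[str, int], dict[str, int]]]) -> float:
    """Fraction of (required, assessed) profiles with at least one gap (assumed definition)."""
    if not people:
        return 0.0
    below = sum(1 for required, assessed in people if competency_gaps(required, assessed))
    return below / len(people)


# Hypothetical role profile and two hypothetical inspectors.
role = {"UT thickness measurement": 3, "consequence modeling": 4}
inspector_a = {"UT thickness measurement": 3, "consequence modeling": 2}
inspector_b = {"UT thickness measurement": 4, "consequence modeling": 4}

gaps_a = competency_gaps(role, inspector_a)
team_share = share_below_required([(role, inspector_a), (role, inspector_b)])
```

Reporting the size of each gap, rather than a pass/fail flag, keeps the output usable for planning development pathways.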

Digital Decision-Support Platform

The technology-assisted component materialized as an integrated digital inspection platform deployed on ruggedized tablets and accessible through web-based dashboards. The platform architecture consisted of four interconnected modules:

1. Pre-Inspection Planning Module: Automated retrieval of historical inspection data, corrosion trends, and regulatory requirements specific to each inspection location, reducing pre-job preparation time by an average of 47 minutes per inspection.

2. Real-Time Data Capture Interface: Structured data entry forms aligned with competency requirements, incorporating mandatory fields for critical parameters, photographic documentation protocols, and immediate flagging of anomalous readings that exceed predetermined thresholds.

3. Predictive Analytics Engine: Machine learning algorithms trained on 12,400 historical inspection records to identify emerging degradation patterns and recommend priority actions based on probability of failure calculations and consequence assessments.

4. Post-Inspection Workflow Manager: Automated routing of findings to appropriate technical authorities, defect severity classification assistance, and tracking of non-conformance reports (NCRs) and notification of inspection reports (NIRs) through closure.

Figure 3: Four-Module Architecture of the Integrated Digital Inspection Platform Showing Data Flow and Interconnections
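The flagging behavior described for the Real-Time Data Capture Interface can be illustrated with a simple validation routine. This is a sketch under stated assumptions rather than the platform's actual logic: the record fields, the flag names, and the 20% wall-loss warning fraction are hypothetical, and a UT thickness reading is used only as a representative parameter.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ThicknessReading:
    """One UT wall-thickness entry (hypothetical record layout)."""
    location_id: str
    measured_mm: Optional[float]   # None models a mandatory field left blank
    nominal_mm: float
    minimum_allowable_mm: float


def validate_reading(r: ThicknessReading, warn_loss_fraction: float = 0.2) -> list[str]:
    """Return flags for one reading; an empty list means nothing to escalate.

    The warning fraction is an illustrative default, not a value from the study.
    """
    if r.measured_mm is None:
        return ["MISSING_MANDATORY_FIELD"]
    flags = []
    if r.measured_mm < r.minimum_allowable_mm:
        flags.append("BELOW_MINIMUM_THICKNESS")
    wall_loss = (r.nominal_mm - r.measured_mm) / r.nominal_mm
    if wall_loss >= warn_loss_fraction:
        flags.append("WALL_LOSS_WARNING")
    return flags
```

Running the check at the point of entry, rather than during later review, is what allows a finding to be escalated while the inspector is still at the location.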

System adoption metrics indicated that 89% of inspection personnel achieved proficiency with the platform within the first three weeks of deployment, with usage rates stabilizing at 94% compliance for all scheduled inspections by month four of implementation.

Communication and Decision-Making Protocol

The communication checklist evolved through iterative refinement with multidisciplinary teams and comprises three-tiered protocols addressing pre-inspection briefings, real-time anomaly escalation, and post-inspection technical reviews. The protocol incorporates explicit role clarity definitions, decision authority matrices, and standardized communication templates designed to minimize ambiguity in high-consequence scenarios. Critical elements include mandatory safety-critical decision reviews involving at least two competent personnel (as defined by the competency matrix) before implementing any repair or run-to-failure recommendations, structured handover procedures for shift transitions, and formalized lessons-learned capture mechanisms linked directly to competency development programs.
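The two-person review requirement lends itself to a simple gate check. The sketch below is hypothetical: the text does not state which proficiency level counts as "competent", so the Level 3 default is an assumption, as are the function and parameter names.

```python
def review_is_valid(reviewer_levels: dict[str, int],
                    min_level: int = 3,
                    min_reviewers: int = 2) -> bool:
    """True when enough distinct reviewers meet the competency threshold.

    `reviewer_levels` maps each reviewer to their assessed level in the relevant
    domain. The Level 3 threshold is an assumed default, not taken from the study.
    """
    competent = [name for name, level in reviewer_levels.items() if level >= min_level]
    return len(competent) >= min_reviewers
```

A workflow tool could call such a check before allowing a repair or run-to-failure recommendation to be released.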

Quantitative Performance Metrics

Comparative analysis of key performance indicators across pre-implementation (baseline period: 12 months prior to framework deployment) and post-implementation phases (months 7-14 of the pilot, allowing for stabilization) demonstrated substantial improvements across multiple dimensions.

Non-Conformance and Notification Report Metrics

The most significant quantitative finding was the reduction in missed defects and erroneous classifications. Table 2 presents the comparative NCR/NIR performance data.

| Metric | Baseline Period (n=1,847 inspections) | Implementation Period (n=1,923 inspections) | Change (%) | Statistical Significance |
| --- | --- | --- | --- | --- |
| Total NCRs issued | 247 | 189 | -23.5% | p < 0.01 |
| NCRs due to missed defects | 89 | 34 | -61.8% | p < 0.001 |
| NIRs requiring reclassification | 156 | 67 | -57.1% | p < 0.001 |
| False positive anomaly reports | 178 | 94 | -47.2% | p < 0.01 |
| Average days to NCR closure | 47.3 | 28.6 | -39.5% | p < 0.001 |

Table 2: NCR/NIR Performance Comparison

The 61.8% reduction in NCRs attributed to missed defects represents the framework's most impactful outcome, translating to 55 fewer critical safety or integrity issues overlooked during inspection activities. Subcategory analysis revealed that the greatest improvements occurred in complex corrosion mechanism identification (73% reduction in missed localized corrosion) and weld integrity assessment (58% reduction in missed weld defects), both areas where competency gaps were initially most pronounced.
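The percentage changes in Table 2 follow from the usual relative-change formula applied to the reported values. The short check below reproduces them; the function and variable names are introduced here for illustration, and the figures are copied from Table 2.

```python
def percent_change(baseline: float, implementation: float) -> float:
    """Relative change from baseline, in percent, rounded to one decimal place."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return round((implementation - baseline) / baseline * 100, 1)


# (baseline, implementation) pairs copied from Table 2.
table_2 = {
    "Total NCRs issued": (247, 189),
    "NCRs due to missed defects": (89, 34),
    "NIRs requiring reclassification": (156, 67),
    "False positive anomaly reports": (178, 94),
    "Average days to NCR closure": (47.3, 28.6),
}

changes = {metric: percent_change(b, i) for metric, (b, i) in table_2.items()}
fewer_missed_defect_ncrs = 89 - 34
```

The table's changes are computed on raw counts. Because the two periods cover different numbers of inspections (1,847 and 1,923), the same function can be applied to per-inspection rates if a normalized comparison is wanted.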

Inspection Efficiency and Rework Metrics

Operational efficiency improvements manifested across multiple dimensions, as illustrated in Figure 4.

Figure 4: Comparative Analysis of Key Inspection Efficiency Metrics Showing Pre-Implementation Baseline Versus Post-Implementation Performance

The reduction in repeat inspections from 12.4% to 5.1% of total inspections resulted in annualized cost savings estimated at $1.47 million across the three pilot vessels, accounting for personnel mobilization, vessel downtime, and scaffolding requirements. Moreover, the 54% reduction in pre-inspection planning time, enabled primarily by the digital platform's automated data retrieval, allowed inspection teams to reallocate approximately 2,340 person-hours annually to value-added technical activities.

Anomaly Closure Cycle Time Analysis

The anomaly closure cycle time, measured from initial defect identification to validated corrective action completion, exhibited marked improvement, declining from a baseline average of 47.3 days to 28.6 days post-implementation (Figure 4). Granular analysis of the closure workflow revealed that the most substantial time savings occurred in the technical evaluation phase (from 14.2 to 7.8 days) and the approval routing stage (from 8.9 to 4.1 days), both directly attributable to the digital workflow manager's automated routing and decision-support capabilities.

Figure 5: Cumulative Anomaly Closure Rates Demonstrating Accelerated Resolution Timeline Post-Implementation

Critically, the proportion of anomalies closed within the target 30-day threshold increased from 49% to 81%, substantially improving compliance with regulatory and internal integrity management requirements.
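The on-time closure proportion can be computed directly from identification and closure dates. The sketch below is illustrative: the function names and sample durations are hypothetical, and treating "within 30 days" as 30 days or fewer is an assumption about how the threshold is applied.

```python
from datetime import date


def closure_days(identified: date, closed: date) -> int:
    """Cycle time in whole days from defect identification to validated closure."""
    return (closed - identified).days


def share_closed_within(durations_days: list[int], target_days: int = 30) -> float:
    """Fraction of closed anomalies whose cycle time is at or under the target."""
    if not durations_days:
        raise ValueError("no closed anomalies to evaluate")
    on_time = sum(1 for d in durations_days if d <= target_days)
    return on_time / len(durations_days)


# Hypothetical sample: two of four anomalies closed within the 30-day target.
sample = [12, 30, 31, 45]
sample_share = share_closed_within(sample)
```

Only closed anomalies enter this ratio, so open items that are already past the target would need to be tracked separately as anomaly ageing.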

Qualitative and Observational Findings

Qualitative data collection incorporated structured surveys administered at three intervals (baseline, month 6, and month 12), semi-structured interviews with 47 inspection personnel and 18 technical authorities, and observational field studies conducted during 63 inspection activities.

Workforce Perception and Confidence

Survey data from inspection personnel (n = 127 respondents, 91% response rate) revealed statistically significant improvements across all measured dimensions of workforce perception. Self-reported confidence in decision-making accuracy increased from a baseline mean of 6.2 to 8.4 on a 10-point scale (p < 0.001). Notably, 83% of respondents indicated that the competency matrix provided greater clarity regarding expected performance standards, while 78% reported that the framework reduced role ambiguity in multidisciplinary inspection scenarios. Thematic analysis of interview transcripts identified three recurring themes regarding framework impact:

• Enhanced Situational Awareness: Inspection personnel consistently described improved awareness of critical parameters and decision points, attributing this to the structured data capture interface and the predictive analytics alerts. As one Senior NDT Technician stated: "The system flags things I might have missed before. It's like having a second set of experienced eyes on every inspection."

Figure 6: Self-Reported Confidence in Decision-Making Accuracy and Role Clarity across Three Assessment Intervals

• Reduced Cognitive Load: Multiple respondents described the digital platform as reducing the mental burden associated with remembering procedural steps, historical data, and reporting requirements, allowing greater cognitive resources to be directed toward technical interpretation and problem-solving. A Corrosion Engineer noted: "I spend less time searching for information and more time actually analyzing what I'm seeing."

• Competency Transparency and Development Motivation: The explicit competency framework created visibility into skill gaps and development pathways, with 71% of survey respondents indicating increased motivation to pursue advanced technical training. The competency-based approach appeared to shift organizational culture from tenure-based authority to demonstrable technical capability.

Technology Adoption and Protocol Compliance

Digital platform adoption metrics demonstrated rapid acceptance following initial training, with daily active usage rates reaching 94% by month four and sustaining above 92% throughout the remainder of the implementation period. Feature utilization analysis revealed that the predictive analytics module, initially viewed with skepticism by experienced personnel, achieved 87% routine usage by month eight, with users reporting that the system's recommendations aligned with their expert judgment in 82% of reviewed cases.

Figure 7: Platform Adoption Rates and Feature-Specific Utilization Patterns during the 14-Month Implementation Period

Communication protocol compliance, measured through direct observation and documentation review, improved from a baseline of 61% to 89% post-implementation. The most substantial compliance improvements occurred in pre-inspection multidisciplinary briefings (from 54% to 91% compliance) and structured post-inspection technical reviews (from 48% to 86% compliance). Observational data indicated that protocol adherence was highest among inspection teams where both the team lead and at least one additional member had achieved Level 4 or higher competency certification in the relevant technical domain.

Figure 8: Pre- and Post-Implementation Compliance Rates across Structured Communication Protocol Elements

Challenges and Resistance Factors

Despite overall positive reception, implementation challenges were documented. Approximately 18% of personnel initially expressed concern that the competency assessment would be used punitively, requiring explicit management communication emphasizing developmental rather than evaluative intent. Technological challenges included intermittent connectivity issues in certain offshore locations (affecting 6% of inspection activities) and initial learning curve frustrations, particularly among personnel with limited prior digital platform experience. Resistance to the structured communication protocols emerged primarily from senior personnel accustomed to informal communication patterns, with several expressing that formalized procedures felt bureaucratic. However, post-incident reviews of two near-miss events during the implementation period demonstrated that protocol adherence prevented potential miscommunications, resulting in broader acceptance of the structured approach. The convergence of quantitative performance improvements and qualitative workforce acceptance provides compelling evidence that the integrated framework effectively addresses the multifaceted challenges of inspection workforce performance in deepwater operations. The following discussion section contextualizes these findings within the broader literature and explores implications for organizational practice and future research directions.

Discussion

The empirical findings from this pilot implementation offer substantial insights into the mechanisms through which integrated competency-based frameworks enhance workforce performance in safety-critical offshore environments. This discussion interprets these results through the lens of operational excellence theory, high-reliability organization principles, and contemporary safety science, while examining practical implications for organizational design and future research trajectories.

The Synergistic Effect: Understanding Framework Efficacy

The framework's effectiveness cannot be attributed to any single component but rather emerges from the synergistic interaction of its three foundational elements: competency mapping, technology-assisted decision support, and structured communication protocols. This integrated approach directly addresses the cognitive, organizational, and technical challenges inherent in deepwater inspection operations.

The competency matrix functioned as an organizational sense-making tool, establishing explicit performance standards that reduced role ambiguity, a persistent challenge in multidisciplinary offshore teams where overlapping responsibilities and unclear decision authority have historically contributed to critical errors. By defining competency levels through behavioral indicators rather than tenure or credentials alone, the framework aligned with Weick and Sutcliffe's principles of collective mindfulness in high-reliability organizations, where deference to expertise supersedes deference to authority [4]. The 83% of personnel reporting greater role clarity suggests that the matrix successfully operationalized this principle, creating what Dekker describes as "requisite imagination" for understanding one's position within the sociotechnical system [5].

The digital decision-support platform addressed a fundamental constraint in complex inspection environments: bounded rationality under conditions of information overload and time pressure. Drawing from Kahneman's dual-process theory, the platform effectively augmented System 2 analytical thinking by reducing System 1 heuristic errors. The 47-minute reduction in pre-inspection planning time represents more than mere efficiency; it signifies reduced cognitive load, freeing mental resources for the interpretive judgment that defines expert performance. The predictive analytics module functioned as what Woods terms a "cognitive artifact," extending human cognitive capacity through pattern recognition across datasets too large for individual comprehension [6]. The finding that experienced personnel's expert judgment aligned with algorithmic recommendations in 82% of cases suggests successful human-machine complementarity rather than automation-induced deskilling.

Structured communication protocols addressed the information degradation that Reason identifies as a persistent vulnerability in high-hazard industries [7]. The 48% to 86% improvement in post-inspection technical review compliance directly counters the normalization of deviation, wherein informal communication patterns gradually erode safety margins. The protocol's requirement for dual verification of safety-critical decisions instantiates redundancy at the cognitive level, creating what Hollnagel describes as "functional resonance" where multiple perspectives combine to detect weak signals of emerging failures [8]. The documented prevention of two near-miss events through protocol adherence provides empirical support for this theoretical mechanism. Critically, these elements did not operate independently. The competency matrix informed technology deployment by ensuring users possessed foundational knowledge to interpret digital outputs meaningfully. Technology reduced the transactional burden of communication, making structured protocols sustainable rather than bureaucratic. Communication protocols, in turn, created feedback loops that refined competency standards and technology interfaces. This recursive improvement represents what Senge characterizes as a learning organization's generative capacity [9].

Implications for Leadership and Organizational Design

The framework's implementation necessitates and enables a fundamental reconceptualization of leadership roles within offshore inspection organizations. The traditional supervisory model, characterized by compliance monitoring and reactive problem-solving, proves insufficient for the complexities revealed by this study. Instead, the framework catalyzes a shift toward transformational leadership focused on capability development and adaptive performance. The competency matrix transforms supervisors from overseers into coaches by providing a transparent developmental roadmap. When 71% of personnel report increased motivation for advanced training, this signals that the organization has successfully transitioned from what Argyris terms "single-loop learning" (error correction) to "double-loop learning" (questioning and revising underlying assumptions about competence) [10]. Leaders can now engage in evidence-based talent development conversations, using competency assessment data to identify systemic skill gaps rather than responding reactively to individual failures.

This approach aligns with principles of organizational justice, particularly procedural fairness. By establishing objective competency criteria, the framework reduces perceptions of favoritism or arbitrary decision-making in role assignments and development opportunities. The initial concern among 18% of personnel about punitive assessment reflects the psychological contract prevalent in many offshore organizations, where evaluation is historically linked to job security rather than development. Overcoming this resistance required explicit management commitment to a just culture, one that distinguishes between human error, at-risk behavior, and reckless violation, responding appropriately to each. The framework also enables data-driven workforce planning that anticipates rather than reacts to competency shortfalls. The baseline finding that 43% of personnel operated below required competency levels represents a latent organizational vulnerability that traditional qualification-based systems failed to detect. Predictive competency analytics, integrated with workforce demographic data, can model future capability gaps due to retirement, role transitions, or technological change, enabling proactive development interventions. From an organizational design perspective, the framework suggests movement toward what Mintzberg describes as a "professional bureaucracy" structure, where standardization occurs through skills rather than work processes or outputs [11]. This design is particularly appropriate for inspection work, which requires substantial autonomy and judgment within well-defined knowledge domains. The technology platform provides the coordination mechanisms necessary for such structures to function effectively without reverting to hierarchical control.

Critical Success Factors and Implementation Barriers

The pilot implementation revealed several prerequisites for successful framework deployment, alongside persistent challenges that require ongoing management attention.

• Management Commitment: Emerged as the paramount success factor. The framework required substantial upfront investment, estimated at $340,000 per vessel for technology infrastructure, $180,000 for competency assessment and development programs, and approximately 2,400 person-hours for training and change management. These investments occurred before any performance returns materialized, requiring leadership to maintain resource commitment through the inevitable implementation difficulties. Organizations lacking such resolve are unlikely to achieve the stabilization period necessary for benefits realization.

• Technological Infrastructure: Represents both an enabler and a constraint. While the 94% system adoption rate demonstrates technical feasibility, the 6% of inspection activities affected by connectivity issues highlights ongoing challenges in offshore digital transformation. Robust redundancy protocols, including offline data capture capabilities and automated synchronization, proved essential. Organizations considering framework adoption must realistically assess their IT maturity and invest in infrastructure before pursuing digital tool deployment.

• Just Culture: Emerged as an essential cultural prerequisite. The framework's competency assessment inherently identifies performance gaps, creating vulnerability if organizational response focuses on blame rather than development. The initial resistance from 18% of personnel reflected rational concern based on prior organizational practices. Establishing psychological safety required visible leadership actions: treating competency gaps as organizational rather than individual failures, celebrating error reporting and near-miss disclosure, and demonstrating that assessment outcomes informed development investments rather than punitive actions.

• Resistance to Change: Manifested predictably, particularly among senior personnel invested in existing practices and informal authority structures. The finding that some experienced workers viewed structured communication protocols as bureaucratic reflects what Schein describes as cultural artifacts confronting underlying assumptions [12]. Overcoming this resistance required demonstrating tangible benefits: the post-incident reviews that validated protocol effectiveness proved more persuasive than prescriptive mandates. Organizations must anticipate an 8-12 month horizon before cultural acceptance stabilizes.

• Cost Considerations: Extend beyond initial technology investments to ongoing system maintenance, competency assessment administration, and continuous content updates as inspection methods and regulatory requirements evolve. The $1.47 million annual savings documented in this study provide strong return on investment justification, but organizations must maintain long-term perspective, as short-term cost pressures may undermine sustained framework effectiveness.
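A rough payback calculation shows how the reported investment and savings figures relate. This is a back-of-envelope sketch, not an analysis from the study: it assumes three pilot vessels, ignores the 2,400 person-hours and all ongoing costs, and brackets the result because the text does not say whether the $180,000 competency figure is per vessel or a programme total. All names are introduced here for illustration.

```python
def simple_payback_years(upfront_cost: float, annual_savings: float) -> float:
    """Undiscounted payback period: upfront cost divided by annual savings."""
    if annual_savings <= 0:
        raise ValueError("annual savings must be positive")
    return upfront_cost / annual_savings


VESSELS = 3                  # pilot scope reported in the Results
TECH_PER_VESSEL = 340_000    # technology infrastructure, per vessel
COMPETENCY_COST = 180_000    # per-vessel vs. total is not stated in the text
ANNUAL_SAVINGS = 1_470_000   # annualized savings across the three vessels

# Reading 1 (assumption): competency cost is a single programme total.
cost_low = TECH_PER_VESSEL * VESSELS + COMPETENCY_COST
# Reading 2 (assumption): competency cost applies to each vessel.
cost_high = (TECH_PER_VESSEL + COMPETENCY_COST) * VESSELS

payback_low = simple_payback_years(cost_low, ANNUAL_SAVINGS)
payback_high = simple_payback_years(cost_high, ANNUAL_SAVINGS)
```

Under either reading the undiscounted payback is on the order of one year, but a fuller appraisal would add the recurring maintenance, assessment, and content-update costs noted above.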

Future Research Directions and Scalability Potential

This pilot study opens several promising avenues for future research and framework enhancement.

First, integrating artificial intelligence-based adaptive learning could personalize competency development by analyzing individual performance patterns and recommending targeted training interventions. Machine learning algorithms could identify optimal learning sequences, predict time-to-competency, and match personnel with mentors based on complementary skill profiles. Research is needed to validate such adaptive systems in offshore contexts and address concerns about algorithmic bias in high-stakes competency decisions.

Second, incorporating biometric and physiological monitoring could address the human factors dimension often overlooked in competency frameworks. Fatigue, stress, and environmental conditions substantially impact inspection performance, yet these factors remain largely unquantified. Wearable sensors measuring heart rate variability, cognitive load indicators, and circadian rhythm disruption could enable real-time performance optimization, alerting supervisors when physiological conditions compromise inspection quality or suggesting optimal work-rest cycles. Ethical considerations regarding worker surveillance require careful navigation through participatory design processes.

Third, cross-domain framework application offers significant potential. The principles demonstrated in inspection operations (competency mapping, technology augmentation, and structured communication) apply broadly to other safety-critical offshore trades including maintenance, drilling operations, and emergency response. Comparative research across these domains could identify universal principles versus context-specific requirements, informing more generalizable workforce performance theories.

Finally, longitudinal research is essential to understand framework sustainability. This 14-month pilot captured initial implementation and stabilization but cannot assess long-term cultural integration, system drift, or competency erosion. Multi-year studies tracking performance trends, technological evolution, and workforce demographic changes would provide crucial insights into the organizational dynamics required to maintain framework effectiveness over time.

Figure 9: Proposed Enhancement Pathways and Cross-Domain Application Opportunities for the CTIF Framework

The framework presented here represents a systematic approach to addressing workforce performance challenges in one of the world's most demanding operational environments. Its demonstrated efficacy suggests that integrated sociotechnical interventions, grounded in competency transparency and augmented by appropriate technology, can substantially enhance both safety and operational excellence in deepwater energy operations.

Conclusion

Deepwater inspection environments present a distinct human-performance challenge shaped by the interplay of hazardous conditions, sophisticated technologies, and multidisciplinary expertise. Although digital advancements have transformed how assets are monitored and how data is captured, the inspection process remains fundamentally reliant on human judgment, contextual reasoning, and coordinated team execution. The resulting paradox is that as technology becomes more capable, the cognitive and operational burden placed on inspectors often increases. Fragmented competency standards, overwhelming data flows, and gaps in onshore-offshore communication continue to create vulnerabilities that can compromise anomaly detection, escalate risk exposure, and undermine asset integrity. This research directly addresses these challenges by introducing a holistic Competency & Technology Integration Framework (CTIF) designed specifically for deepwater inspection teams. The framework represents a shift from viewing inspection as a set of technical tasks toward understanding it as a complex socio-technical system in which people, tools, and organizational processes must function as an integrated whole. The CTIF’s four pillars (Dynamic Competency Matrix, Technology-Assisted Decision Suite, Structured Collaboration Protocol, and Performance Feedback Loop) together establish a closed-loop architecture that connects workforce development, digital enablement, and team collaboration with measurable inspection outcomes.

A core contribution of this work is the deliberate linkage between competency engineering and technology application. By aligning digital tools with real-world proficiency levels and cognitive realities, the framework ensures that technology augments human performance rather than overwhelming it. Likewise, the structured collaboration protocol formalizes communication behaviors that are indispensable in isolated offshore contexts where misunderstandings or delays can carry significant operational consequences. The performance feedback loop then closes the cycle by translating inspection outcomes, such as NCR closure times, re-inspection rates, and anomaly ageing, into data-driven refinements of both competency expectations and technological configurations. This creates a self-improving system in which learning is continuous and anchored in operational evidence.

By integrating these components, the CTIF elevates inspection from a series of discrete tasks to a coordinated socio-technical discipline where human reliability is deliberately designed for, cultivated, and strengthened. It provides a foundation for reducing variability in inspector performance, enhancing decision accuracy, and improving the overall fidelity of inspection workflows. More importantly, it offers a practical and scalable pathway to enhance resilience in an era when assets are aging, regulatory scrutiny is intensifying, and the energy sector is transitioning toward more complex operating models.
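As an illustration of how the feedback metrics named above (NCR closure time, re-inspection rate, and anomaly ageing) might be computed from inspection records, the following is a minimal Python sketch. The record structure, field names, function name, and sample values are hypothetical and chosen only for illustration; they do not describe the pilot's actual data model or tooling.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import List, Optional


@dataclass
class AnomalyRecord:
    """Hypothetical record of one NCR / anomaly (illustrative fields only)."""
    raised: date             # date the anomaly was raised
    closed: Optional[date]   # closure date, or None while still open
    reinspected: bool        # True if a re-inspection was required


def feedback_metrics(records: List[AnomalyRecord], as_of: date) -> dict:
    """Summarize closure time, re-inspection rate, and ageing of open items."""
    closed = [r for r in records if r.closed is not None]
    still_open = [r for r in records if r.closed is None]

    closure_days = [(r.closed - r.raised).days for r in closed]
    open_ages = [(as_of - r.raised).days for r in still_open]

    return {
        # Average days from raising to closure, over closed items only
        "mean_closure_days": mean(closure_days) if closure_days else None,
        # Share of all items that needed a re-inspection
        "reinspection_rate": (
            sum(r.reinspected for r in records) / len(records)
            if records else None
        ),
        # Age in days of the oldest item still open at the reporting date
        "max_open_age_days": max(open_ages, default=0),
    }


# Illustrative sample data (not taken from the study)
records = [
    AnomalyRecord(date(2025, 1, 1), date(2025, 1, 11), False),
    AnomalyRecord(date(2025, 1, 5), date(2025, 1, 25), True),
    AnomalyRecord(date(2025, 2, 1), None, False),
]
metrics = feedback_metrics(records, as_of=date(2025, 3, 3))
```

In a sketch of this kind, the resulting summary would be reviewed periodically and used to adjust competency expectations or tool configurations; the thresholds that trigger such adjustments would have to be set by the operator.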

Looking ahead, the framework has the potential to establish a new standard for inspection workforce development in the offshore industry. Its adaptability allows operators to incorporate emerging digital technologies, evolving regulatory requirements, and new competency paradigms without disrupting the fundamental architecture. By fostering an inspection culture that is more adaptive, error-resistant, and data-informed, the CTIF contributes to a more robust asset integrity ecosystem. Ultimately, it supports the long-term sustainability of deepwater operations by ensuring that the human element, still the most critical determinant of inspection success, is empowered, aligned, and continuously enhanced in an evolving energy landscape [13-25].

References

- International Association of Oil & Gas Producers. (2014). Competence management system framework (Report No. 501). IOGP.

- Miller, G. E. (1990). The assessment of clinical skills/competence/performance. Academic medicine, 65(9), S63-7.

- Dreyfus, S. E., & Dreyfus, H. L. (1980). A five-stage model of the mental activities involved in directed skill acquisition (No. ORC802).

- Weick, K. E., & Sutcliffe, K. M. (2015). Managing the unexpected: Sustained performance in a complex world. John Wiley & Sons.

- Dekker, S. (2014). The Field Guide to Understanding 'Human Error'. Ashgate Publishing, Ltd.

- Woods, D. D., & Hollnagel, E. (2006). Joint cognitive systems: Patterns in cognitive systems engineering. CRC press.

- Reason, J. (1997). Managing the risks of organizational accidents. Ashgate Publishing.

- Hollnagel, E. (2012). An application of the Functional Resonance Analysis Method (FRAM) to risk assessment of organisational change.

- Senge, P. M. (2006). The fifth discipline: The art and practice of the learning organization (Revised ed.). Currency Doubleday.

- Argyris, C., & Schön, D. A. (1997). Organizational learning: A theory of action perspective. Reis, (77/78), 345-348.

- Mintzberg, H. (1979). The structuring of organizations. In Readings in strategic management (pp. 322-352). London: Macmillan Education UK.

- Schein, E. H. (2010). Organizational culture and leadership (Vol. 2). John Wiley & Sons.

- Endsley, M. R. (1995). Toward a theory of situation awareness in dynamic systems. Human Factors, 37(1), 32-64.

- Flin, R., O'Connor, P., & Crichton, M. (2008). Safety at the sharp end: A guide to non-technical skills. Ashgate Publishing.

- Kahneman, D. (2011). Thinking, fast and slow. Farrar, Straus and Giroux.

- Kolb, D. A. (1984). Experiential learning: Experience as the source of learning and development. Prentice Hall.

- Kontogiannis, T., & Malakis, S. (2017). Cognitive engineering and safety organization in air traffic management. CRC Press.

- Leveson, N. (2011). Engineering a safer world: Systems thinking applied to safety. MIT Press.

- Norman, D. (2013). The design of everyday things: Revised and expanded edition. Basic books.

- Perrow, C. (1999). Normal accidents: Living with high-risk technologies (Updated ed.). Princeton University Press.

- Rasmussen, J. (1983). Skills, rules, and knowledge: Signals, signs, and symbols, and other distinctions in human performance models. IEEE Transactions on Systems, Man, and Cybernetics, SMC-13(3), 257-266.

- Reason, J. T. (2008). The Human Contribution: Unsafe Acts, Accidents and Heroic Recoveries. Ashgate Publishing, Ltd.

- Salas, E., Sims, D. E., & Burke, C. S. (2005). Is there a “big five” in teamwork? Small group research, 36(5), 555-599.

- Vicente, K. J. (1999). Cognitive work analysis: Toward safe, productive, and healthy computer-based work. CRC press.

- Zohar, D. (2010). Thirty years of safety climate research: Reflections and future directions. Accident analysis & prevention, 42(5), 1517-1522.